Compare commits

80 Commits

| Author | SHA1 | Date | |
|---|---|---|---|
| | 2cb54595c9 | | |
| | 284079d18b | | |
| | 1c9b09fb78 | | |
| | 9fb14f23d2 | | |
| | 4795dc4f68 | | |
| | acf0f804c5 | | |
| | 4e2951854b | | |
| | 80dfb429d7 | | |
| | 9c0ba77e22 | | |
| | 46b4651073 | | |
| | 86dd5246c6 | | |
| | a1227c88ee | | |
| | 535d7ab568 | | |
| | af10494b31 | | |
| | 39c1042827 | | |
| | 16e7dc11f4 | | |
| | 7a27babefd | | |
| | d53ae9d51d | | |
| | 910cf7727d | | |
| | 1698605f15 | | |
| | eda124a123 | | |
| | 15e9ce8d2f | | |
| | c01dd603d7 | | |
| | 9d5157d69f | | |
| | d78795bdf5 | | |
| | ff2b7f473e | | |
| | 73c9a91811 | | |
| | 27b765d902 | | |
| | fddba419be | | |
| | f42d6308e8 | | |
| | c167002754 | | |
| | ea26ee7d0c | | |
| | 5280e908b2 | | |
| | 1c5dd8c664 | | |
| | 3aca153be5 | | |
| | 65c8e1653c | | |
| | 58e4fa918c | | |
| | 3af13d3f90 | | |
| | d2eb86e534 | | |
| | 03842353e4 | | |
| | 48747e20af | | |
| | 58af593af6 | | |
| | 450575a927 | | |
| | eac2bb19b2 | | |
| | 756a815bf0 | | |
| | 23a7b080eb | | |
| | bf39bcdec9 | | |
| | 0276632491 | | |
| | d14d71f760 | | |
| | ef6efc2f55 | | |
| | 07e4b593dd | | |
| | 497591bf3b | | |
| | a2a3e334d6 | | |
| | 1ccbfaf800 | | |
| | a9afa0555c | | |
| | 83b2183cf0 | | |
| | f49e7a760e | | |
| | 6e0255ebec | | |
| | 379d3df46b | | |
| | 491a3f24da | | |
| | c7d70e0fb1 | | |
| | d59f8e99cb | | |
| | 0a91b49417 | | |
| | ced64541b9 | | |
| | 3c30cfe02b | | |
| | 0d6267bcf1 | | |
| | b47175d1df | | |
| | 6f23a30eed | | |
| | ff7b5c7e27 | | |
| | 19f7ae862e | | |
| | 5e9f74744a | | |
| | 7787179a5a | | |
| | 22bb07f00e | | |
| | 660f883197 | | |
| | 988de80b66 | | |
| | dc6aa226ee | | |
| | a7b6b080ab | | |
| | 9202cbd4d4 | | |
| | 1db8484402 | | |
| | cdaec8a837 | | |

@@ -68,7 +68,6 @@ temp/

exports/*

.claude/settings.local.json
.claude/skills/ship-it/

.venv


@@ -1,4 +1,11 @@

.PHONY: lint format check test install-hooks help frontend-install frontend-dev frontend-build
.PHONY: lint format check test test-tools test-live test-all install-hooks help frontend-install frontend-dev frontend-build

# ── Ensure uv is findable in Git Bash on Windows ──────────────────────────────
# uv installs to ~/.local/bin on Windows/Linux/macOS. Git Bash may not include
# this in PATH by default, so we prepend it here.
export PATH := $(HOME)/.local/bin:$(PATH)

# ── Targets ───────────────────────────────────────────────────────────────────

help: ## Show this help
	@grep -E '^[a-zA-Z_-]+:.*?## .*$$' $(MAKEFILE_LIST) | \

@@ -46,4 +53,4 @@ frontend-dev: ## Start frontend dev server

	cd core/frontend && npm run dev

frontend-build: ## Build frontend for production
	cd core/frontend && npm run build
	cd core/frontend && npm run build

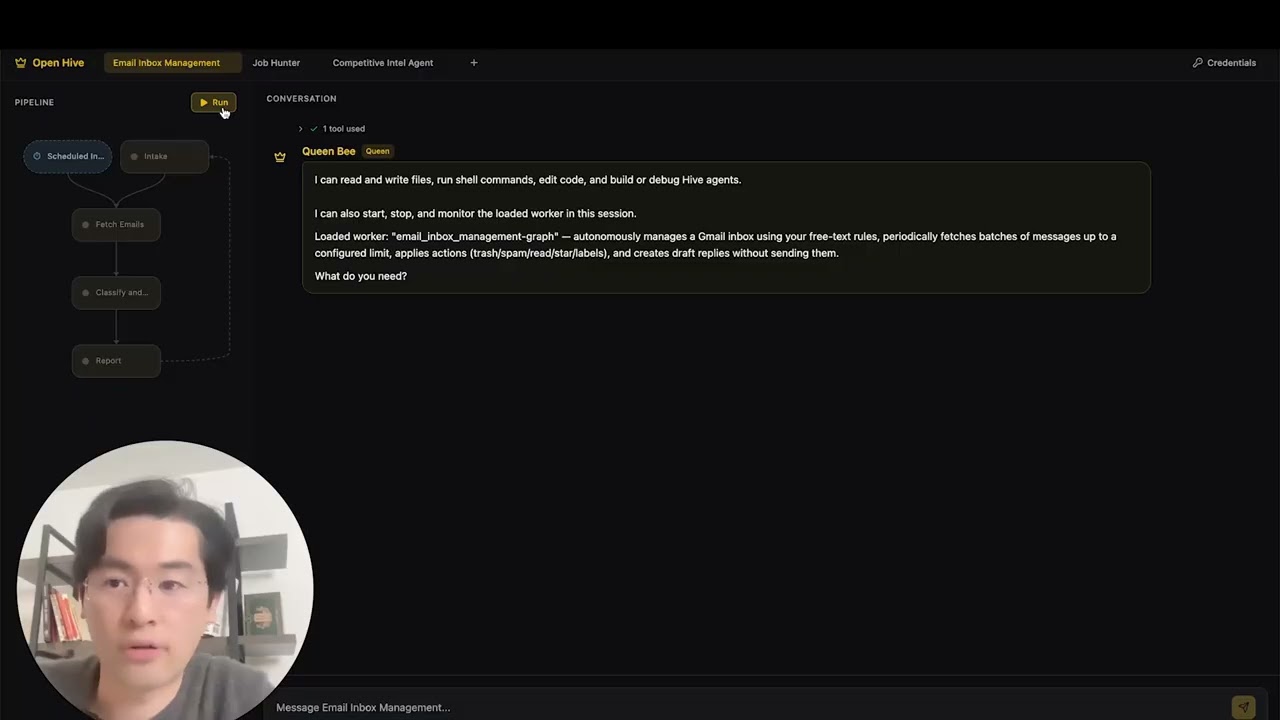

@@ -41,7 +41,8 @@ Generate a swarm of worker agents with a coding agent(queen) that control them.


Visit [adenhq.com](https://adenhq.com) for complete documentation, examples, and guides.

[](https://www.youtube.com/watch?v=XDOG9fOaLjU)
https://github.com/user-attachments/assets/aad3a035-e7b3-4cac-b13d-4a83c7002c30


## Who Is Hive For?


@@ -1,31 +0,0 @@

perf: reduce subprocess spawning in quickstart scripts (#4427)

## Problem
Windows process creation (CreateProcess) is 10-100x slower than Linux fork/exec.
The quickstart scripts were spawning 4+ separate `uv run python -c "import X"`
processes to verify imports, adding ~600ms overhead on Windows.

## Solution
Consolidated all import checks into a single batch script that checks multiple
modules in one subprocess call, reducing spawn overhead by ~75%.

## Changes
- **New**: `scripts/check_requirements.py` - Batched import checker
- **New**: `scripts/test_check_requirements.py` - Test suite
- **New**: `scripts/benchmark_quickstart.ps1` - Performance benchmark tool
- **Modified**: `quickstart.ps1` - Updated import verification (2 sections)
- **Modified**: `quickstart.sh` - Updated import verification

## Performance Impact
**Benchmark results on Windows:**
- Before: ~19.8 seconds for import checks
- After: ~4.9 seconds for import checks
- **Improvement: 14.9 seconds saved (75.2% faster)**

## Testing
- ✅ All functional tests pass (`scripts/test_check_requirements.py`)
- ✅ Quickstart scripts work correctly on Windows
- ✅ Error handling verified (invalid imports reported correctly)
- ✅ Performance benchmark confirms 75%+ improvement

Fixes #4427

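The Solution section of the removed description folds several per-module import checks into a single subprocess. The following is a minimal sketch of that idea only; it is not the contents of `scripts/check_requirements.py`, which this page does not show, and the names `check_imports` and `main` are hypothetical.

```python
import importlib
import json


def check_imports(module_names):
    """Import each module in this one process; map name -> "ok" or error text."""
    results = {}
    for name in module_names:
        try:
            importlib.import_module(name)
            results[name] = "ok"
        except Exception as exc:  # any import-time failure, not only ImportError
            results[name] = f"{type(exc).__name__}: {exc}"
    return results


def main(argv):
    """Print a JSON report and return an exit code (0 means every import worked)."""
    report = check_imports(argv)
    print(json.dumps(report, indent=2))
    return 0 if all(status == "ok" for status in report.values()) else 1
```

Under these assumptions, a quickstart script would start one interpreter with the whole module list as arguments and read a single report, rather than paying the process-creation cost once per module.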

@@ -1,740 +0,0 @@

#!/usr/bin/env python3
"""
EventLoopNode WebSocket Demo

Real LLM, real FileConversationStore, real EventBus.
Streams EventLoopNode execution to a browser via WebSocket.

Usage:
    cd /home/timothy/oss/hive/core
    python demos/event_loop_wss_demo.py

Then open http://localhost:8765 in your browser.
"""

import asyncio
import json
import logging
import sys
import tempfile
from http import HTTPStatus
from pathlib import Path

import httpx
import websockets
from bs4 import BeautifulSoup
from websockets.http11 import Request, Response

# Add core, tools, and hive root to path
_CORE_DIR = Path(__file__).resolve().parent.parent
_HIVE_DIR = _CORE_DIR.parent
sys.path.insert(0, str(_CORE_DIR))  # framework.*
sys.path.insert(0, str(_HIVE_DIR / "tools" / "src"))  # aden_tools.*
sys.path.insert(0, str(_HIVE_DIR))  # core.framework.* (for aden_tools imports)

import os  # noqa: E402

from aden_tools.credentials import CREDENTIAL_SPECS, CredentialStoreAdapter  # noqa: E402
from core.framework.credentials import CredentialStore  # noqa: E402

from framework.credentials.storage import (  # noqa: E402
    CompositeStorage,
    EncryptedFileStorage,
    EnvVarStorage,
)
from framework.graph.event_loop_node import EventLoopNode, LoopConfig  # noqa: E402
from framework.graph.node import NodeContext, NodeSpec, SharedMemory  # noqa: E402
from framework.llm.litellm import LiteLLMProvider  # noqa: E402
from framework.llm.provider import Tool  # noqa: E402
from framework.runner.tool_registry import ToolRegistry  # noqa: E402
from framework.runtime.core import Runtime  # noqa: E402
from framework.runtime.event_bus import EventBus, EventType  # noqa: E402
from framework.storage.conversation_store import FileConversationStore  # noqa: E402

logging.basicConfig(level=logging.INFO, format="%(asctime)s %(name)s %(message)s")
logger = logging.getLogger("demo")

|

||||

|

||||

# -------------------------------------------------------------------------

|

||||

# Persistent state (shared across WebSocket connections)

|

||||

# -------------------------------------------------------------------------

|

||||

|

||||

STORE_DIR = Path(tempfile.mkdtemp(prefix="hive_demo_"))

|

||||

STORE = FileConversationStore(STORE_DIR / "conversation")

|

||||

RUNTIME = Runtime(STORE_DIR / "runtime")

|

||||

LLM = LiteLLMProvider(model="claude-sonnet-4-5-20250929")

|

||||

|

||||

# -------------------------------------------------------------------------

|

||||

# Tool Registry — real tools via ToolRegistry (same pattern as GraphExecutor)

|

||||

# -------------------------------------------------------------------------

|

||||

|

||||

TOOL_REGISTRY = ToolRegistry()

|

||||

|

||||

# Credential store: Aden sync (OAuth2 tokens) + encrypted files + env var fallback

|

||||

_env_mapping = {name: spec.env_var for name, spec in CREDENTIAL_SPECS.items()}

|

||||

_local_storage = CompositeStorage(

|

||||

primary=EncryptedFileStorage(),

|

||||

fallbacks=[EnvVarStorage(env_mapping=_env_mapping)],

|

||||

)

|

||||

|

||||

if os.environ.get("ADEN_API_KEY"):

|

||||

try:

|

||||

from framework.credentials.aden import ( # noqa: E402

|

||||

AdenCachedStorage,

|

||||

AdenClientConfig,

|

||||

AdenCredentialClient,

|

||||

AdenSyncProvider,

|

||||

)

|

||||

|

||||

_client = AdenCredentialClient(AdenClientConfig(base_url="https://api.adenhq.com"))

|

||||

_provider = AdenSyncProvider(client=_client)

|

||||

_storage = AdenCachedStorage(

|

||||

local_storage=_local_storage,

|

||||

aden_provider=_provider,

|

||||

)

|

||||

_cred_store = CredentialStore(storage=_storage, providers=[_provider], auto_refresh=True)

|

||||

_synced = _provider.sync_all(_cred_store)

|

||||

logger.info("Synced %d credentials from Aden", _synced)

|

||||

except Exception as e:

|

||||

logger.warning("Aden sync unavailable: %s", e)

|

||||

_cred_store = CredentialStore(storage=_local_storage)

|

||||

else:

|

||||

logger.info("ADEN_API_KEY not set, using local credential storage")

|

||||

_cred_store = CredentialStore(storage=_local_storage)

|

||||

|

||||

CREDENTIALS = CredentialStoreAdapter(_cred_store)

|

||||

|

||||

# Debug: log which credentials resolved

|

||||

for _name in ["brave_search", "hubspot", "anthropic"]:

|

||||

_val = CREDENTIALS.get(_name)

|

||||

if _val:

|

||||

logger.debug("credential %s: OK (len=%d)", _name, len(_val))

|

||||

else:

|

||||

logger.debug("credential %s: not found", _name)

|

||||

|

||||

# --- web_search (Brave Search API) ---

|

||||

|

||||

TOOL_REGISTRY.register(

|

||||

name="web_search",

|

||||

tool=Tool(

|

||||

name="web_search",

|

||||

description=(

|

||||

"Search the web for current information. "

|

||||

"Returns titles, URLs, and snippets from search results."

|

||||

),

|

||||

parameters={

|

||||

"type": "object",

|

||||

"properties": {

|

||||

"query": {

|

||||

"type": "string",

|

||||

"description": "The search query (1-500 characters)",

|

||||

},

|

||||

"num_results": {

|

||||

"type": "integer",

|

||||

"description": "Number of results to return (1-20, default 10)",

|

||||

},

|

||||

},

|

||||

"required": ["query"],

|

||||

},

|

||||

),

|

||||

executor=lambda inputs: _exec_web_search(inputs),

|

||||

)

|

||||

|

||||

def _exec_web_search(inputs: dict) -> dict:
    api_key = CREDENTIALS.get("brave_search")
    if not api_key:
        return {"error": "brave_search credential not configured"}
    query = inputs.get("query", "")
    num_results = min(inputs.get("num_results", 10), 20)
    resp = httpx.get(
        "https://api.search.brave.com/res/v1/web/search",
        params={"q": query, "count": num_results},
        headers={"X-Subscription-Token": api_key, "Accept": "application/json"},
        timeout=30.0,
    )
    if resp.status_code != 200:
        return {"error": f"Brave API HTTP {resp.status_code}"}
    data = resp.json()
    results = [
        {
            "title": item.get("title", ""),
            "url": item.get("url", ""),
            "snippet": item.get("description", ""),
        }
        for item in data.get("web", {}).get("results", [])[:num_results]
    ]
    return {"query": query, "results": results, "total": len(results)}

|

||||

|

||||

|

||||

# --- web_scrape (httpx + BeautifulSoup, no playwright for sync compat) ---

|

||||

|

||||

TOOL_REGISTRY.register(

|

||||

name="web_scrape",

|

||||

tool=Tool(

|

||||

name="web_scrape",

|

||||

description=(

|

||||

"Scrape and extract text content from a webpage URL. "

|

||||

"Returns the page title and main text content."

|

||||

),

|

||||

parameters={

|

||||

"type": "object",

|

||||

"properties": {

|

||||

"url": {

|

||||

"type": "string",

|

||||

"description": "URL of the webpage to scrape",

|

||||

},

|

||||

"max_length": {

|

||||

"type": "integer",

|

||||

"description": "Maximum text length (default 50000)",

|

||||

},

|

||||

},

|

||||

"required": ["url"],

|

||||

},

|

||||

),

|

||||

executor=lambda inputs: _exec_web_scrape(inputs),

|

||||

)

|

||||

|

||||

_SCRAPE_HEADERS = {

|

||||

"User-Agent": (

|

||||

"Mozilla/5.0 (Windows NT 10.0; Win64; x64) "

|

||||

"AppleWebKit/537.36 (KHTML, like Gecko) "

|

||||

"Chrome/131.0.0.0 Safari/537.36"

|

||||

),

|

||||

"Accept": "text/html,application/xhtml+xml",

|

||||

}

|

||||

|

||||

def _exec_web_scrape(inputs: dict) -> dict:
    url = inputs.get("url", "")
    max_length = max(1000, min(inputs.get("max_length", 50000), 500000))
    if not url.startswith(("http://", "https://")):
        url = "https://" + url
    try:
        resp = httpx.get(url, timeout=30.0, follow_redirects=True, headers=_SCRAPE_HEADERS)
        if resp.status_code != 200:
            return {"error": f"HTTP {resp.status_code}"}
        soup = BeautifulSoup(resp.text, "html.parser")
        for tag in soup(["script", "style", "nav", "footer", "header", "aside", "noscript"]):
            tag.decompose()
        title = soup.title.get_text(strip=True) if soup.title else ""
        main = (
            soup.find("article")
            or soup.find("main")
            or soup.find(attrs={"role": "main"})
            or soup.find("body")
        )
        text = main.get_text(separator=" ", strip=True) if main else ""
        text = " ".join(text.split())
        if len(text) > max_length:
            text = text[:max_length] + "..."
        return {"url": url, "title": title, "content": text, "length": len(text)}
    except httpx.TimeoutException:
        return {"error": "Request timed out"}
    except Exception as e:
        return {"error": f"Scrape failed: {e}"}

|

||||

|

||||

|

||||

# --- HubSpot CRM tools (optional, requires HUBSPOT_ACCESS_TOKEN) ---

|

||||

|

||||

_HUBSPOT_API = "https://api.hubapi.com"

|

||||

|

||||

def _hubspot_headers() -> dict | None:
    token = CREDENTIALS.get("hubspot")
    if token:
        logger.debug("HubSpot token: %s...%s (len=%d)", token[:8], token[-4:], len(token))
    else:
        logger.debug("HubSpot token: not found")
    if not token:
        return None
    return {
        "Authorization": f"Bearer {token}",
        "Content-Type": "application/json",
        "Accept": "application/json",
    }

|

||||

|

||||

def _exec_hubspot_search(inputs: dict) -> dict:
    headers = _hubspot_headers()
    if not headers:
        return {"error": "HUBSPOT_ACCESS_TOKEN not set"}
    object_type = inputs.get("object_type", "contacts")
    query = inputs.get("query", "")
    limit = min(inputs.get("limit", 10), 100)
    body: dict = {"limit": limit}
    if query:
        body["query"] = query
    try:
        resp = httpx.post(
            f"{_HUBSPOT_API}/crm/v3/objects/{object_type}/search",
            headers=headers,
            json=body,
            timeout=30.0,
        )
        if resp.status_code != 200:
            return {"error": f"HubSpot API HTTP {resp.status_code}: {resp.text[:200]}"}
        return resp.json()
    except httpx.TimeoutException:
        return {"error": "Request timed out"}
    except Exception as e:
        return {"error": f"HubSpot error: {e}"}

|

||||

|

||||

|

||||

TOOL_REGISTRY.register(

|

||||

name="hubspot_search",

|

||||

tool=Tool(

|

||||

name="hubspot_search",

|

||||

description=(

|

||||

"Search HubSpot CRM objects (contacts, companies, or deals). "

|

||||

"Returns matching records with their properties."

|

||||

),

|

||||

parameters={

|

||||

"type": "object",

|

||||

"properties": {

|

||||

"object_type": {

|

||||

"type": "string",

|

||||

"description": "CRM object type: 'contacts', 'companies', or 'deals'",

|

||||

},

|

||||

"query": {

|

||||

"type": "string",

|

||||

"description": "Search query (name, email, domain, etc.)",

|

||||

},

|

||||

"limit": {

|

||||

"type": "integer",

|

||||

"description": "Max results (1-100, default 10)",

|

||||

},

|

||||

},

|

||||

"required": ["object_type"],

|

||||

},

|

||||

),

|

||||

executor=lambda inputs: _exec_hubspot_search(inputs),

|

||||

)

|

||||

|

||||

logger.info(

|

||||

"ToolRegistry loaded: %s",

|

||||

", ".join(TOOL_REGISTRY.get_registered_names()),

|

||||

)

|

||||

|

||||

|

||||

# -------------------------------------------------------------------------

|

||||

# HTML page (embedded)

|

||||

# -------------------------------------------------------------------------

|

||||

|

||||

HTML_PAGE = ( # noqa: E501

|

||||

"""<!DOCTYPE html>

|

||||

<html lang="en">

|

||||

<head>

|

||||

<meta charset="utf-8">

|

||||

<meta name="viewport" content="width=device-width, initial-scale=1">

|

||||

<title>EventLoopNode Live Demo</title>

|

||||

<style>

|

||||

* { box-sizing: border-box; margin: 0; padding: 0; }

|

||||

body {

|

||||

font-family: 'SF Mono', 'Fira Code', monospace;

|

||||

background: #0d1117; color: #c9d1d9;

|

||||

height: 100vh; display: flex; flex-direction: column;

|

||||

}

|

||||

header {

|

||||

background: #161b22; padding: 12px 20px;

|

||||

border-bottom: 1px solid #30363d;

|

||||

display: flex; align-items: center; gap: 16px;

|

||||

}

|

||||

header h1 { font-size: 16px; color: #58a6ff; font-weight: 600; }

|

||||

.status {

|

||||

font-size: 12px; padding: 3px 10px; border-radius: 12px;

|

||||

background: #21262d; color: #8b949e;

|

||||

}

|

||||

.status.running { background: #1a4b2e; color: #3fb950; }

|

||||

.status.done { background: #1a3a5c; color: #58a6ff; }

|

||||

.status.error { background: #4b1a1a; color: #f85149; }

|

||||

.chat { flex: 1; overflow-y: auto; padding: 16px; }

|

||||

.msg {

|

||||

margin: 8px 0; padding: 10px 14px; border-radius: 8px;

|

||||

line-height: 1.6; white-space: pre-wrap; word-wrap: break-word;

|

||||

}

|

||||

.msg.user { background: #1a3a5c; color: #58a6ff; }

|

||||

.msg.assistant { background: #161b22; color: #c9d1d9; }

|

||||

.msg.event {

|

||||

background: transparent; color: #8b949e; font-size: 11px;

|

||||

padding: 4px 14px; border-left: 3px solid #30363d;

|

||||

}

|

||||

.msg.event.loop { border-left-color: #58a6ff; }

|

||||

.msg.event.tool { border-left-color: #d29922; }

|

||||

.msg.event.stall { border-left-color: #f85149; }

|

||||

.input-bar {

|

||||

padding: 12px 16px; background: #161b22;

|

||||

border-top: 1px solid #30363d; display: flex; gap: 8px;

|

||||

}

|

||||

.input-bar input {

|

||||

flex: 1; background: #0d1117; border: 1px solid #30363d;

|

||||

color: #c9d1d9; padding: 8px 12px; border-radius: 6px;

|

||||

font-family: inherit; font-size: 14px; outline: none;

|

||||

}

|

||||

.input-bar input:focus { border-color: #58a6ff; }

|

||||

.input-bar button {

|

||||

background: #238636; color: #fff; border: none;

|

||||

padding: 8px 20px; border-radius: 6px; cursor: pointer;

|

||||

font-family: inherit; font-weight: 600;

|

||||

}

|

||||

.input-bar button:hover { background: #2ea043; }

|

||||

.input-bar button:disabled {

|

||||

background: #21262d; color: #484f58; cursor: not-allowed;

|

||||

}

|

||||

.input-bar button.clear { background: #da3633; }

|

||||

.input-bar button.clear:hover { background: #f85149; }

|

||||

</style>

|

||||

</head>

|

||||

<body>

|

||||

<header>

|

||||

<h1>EventLoopNode Live</h1>

|

||||

<span id="status" class="status">Idle</span>

|

||||

<span id="iter" class="status" style="display:none">Step 0</span>

|

||||

</header>

|

||||

<div id="chat" class="chat"></div>

|

||||

<div class="input-bar">

|

||||

<input id="input" type="text"

|

||||

placeholder="Ask anything..." autofocus />

|

||||

<button id="go" onclick="run()">Send</button>

|

||||

<button class="clear"

|

||||

onclick="clearConversation()">Clear</button>

|

||||

</div>

|

||||

|

||||

<script>

|

||||

let ws = null;

|

||||

let currentAssistantEl = null;

|

||||

let iterCount = 0;

|

||||

const chat = document.getElementById('chat');

|

||||

const status = document.getElementById('status');

|

||||

const iterEl = document.getElementById('iter');

|

||||

const goBtn = document.getElementById('go');

|

||||

const inputEl = document.getElementById('input');

|

||||

|

||||

inputEl.addEventListener('keydown', e => {

|

||||

if (e.key === 'Enter') run();

|

||||

});

|

||||

|

||||

function setStatus(text, cls) {

|

||||

status.textContent = text;

|

||||

status.className = 'status ' + cls;

|

||||

}

|

||||

|

||||

function addMsg(text, cls) {

|

||||

const el = document.createElement('div');

|

||||

el.className = 'msg ' + cls;

|

||||

el.textContent = text;

|

||||

chat.appendChild(el);

|

||||

chat.scrollTop = chat.scrollHeight;

|

||||

return el;

|

||||

}

|

||||

|

||||

function connect() {

|

||||

ws = new WebSocket('ws://' + location.host + '/ws');

|

||||

ws.onopen = () => {

|

||||

setStatus('Ready', 'done');

|

||||

goBtn.disabled = false;

|

||||

};

|

||||

ws.onmessage = handleEvent;

|

||||

ws.onerror = () => { setStatus('Error', 'error'); };

|

||||

ws.onclose = () => {

|

||||

setStatus('Reconnecting...', '');

|

||||

goBtn.disabled = true;

|

||||

setTimeout(connect, 2000);

|

||||

};

|

||||

}

|

||||

|

||||

function handleEvent(msg) {

|

||||

const evt = JSON.parse(msg.data);

|

||||

|

||||

if (evt.type === 'llm_text_delta') {

|

||||

if (currentAssistantEl) {

|

||||

currentAssistantEl.textContent += evt.content;

|

||||

chat.scrollTop = chat.scrollHeight;

|

||||

}

|

||||

}

|

||||

else if (evt.type === 'ready') {

|

||||

setStatus('Ready', 'done');

|

||||

if (currentAssistantEl && !currentAssistantEl.textContent)

|

||||

currentAssistantEl.remove();

|

||||

goBtn.disabled = false;

|

||||

}

|

||||

else if (evt.type === 'node_loop_iteration') {

|

||||

iterCount = evt.iteration || (iterCount + 1);

|

||||

iterEl.textContent = 'Step ' + iterCount;

|

||||

iterEl.style.display = '';

|

||||

}

|

||||

else if (evt.type === 'tool_call_started') {

|

||||

var info = evt.tool_name + '('

|

||||

+ JSON.stringify(evt.tool_input).slice(0, 120) + ')';

|

||||

addMsg('TOOL ' + info, 'event tool');

|

||||

}

|

||||

else if (evt.type === 'tool_call_completed') {

|

||||

var preview = (evt.result || '').slice(0, 200);

|

||||

var cls = evt.is_error ? 'stall' : 'tool';

|

||||

addMsg('RESULT ' + evt.tool_name + ': ' + preview,

|

||||

'event ' + cls);

|

||||

currentAssistantEl = addMsg('', 'assistant');

|

||||

}

|

||||

else if (evt.type === 'result') {

|

||||

setStatus('Session ended', evt.success ? 'done' : 'error');

|

||||

if (evt.error) addMsg('ERROR ' + evt.error, 'event stall');

|

||||

if (currentAssistantEl && !currentAssistantEl.textContent)

|

||||

currentAssistantEl.remove();

|

||||

goBtn.disabled = false;

|

||||

}

|

||||

else if (evt.type === 'node_stalled') {

|

||||

addMsg('STALLED ' + evt.reason, 'event stall');

|

||||

}

|

||||

else if (evt.type === 'cleared') {

|

||||

chat.innerHTML = '';

|

||||

iterCount = 0;

|

||||

iterEl.textContent = 'Step 0';

|

||||

iterEl.style.display = 'none';

|

||||

setStatus('Ready', 'done');

|

||||

goBtn.disabled = false;

|

||||

}

|

||||

}

|

||||

|

||||

function run() {

|

||||

const text = inputEl.value.trim();

|

||||

if (!text || !ws || ws.readyState !== 1) return;

|

||||

addMsg(text, 'user');

|

||||

currentAssistantEl = addMsg('', 'assistant');

|

||||

inputEl.value = '';

|

||||

setStatus('Running', 'running');

|

||||

goBtn.disabled = true;

|

||||

ws.send(JSON.stringify({ topic: text }));

|

||||

}

|

||||

|

||||

function clearConversation() {

|

||||

if (ws && ws.readyState === 1) {

|

||||

ws.send(JSON.stringify({ command: 'clear' }));

|

||||

}

|

||||

}

|

||||

|

||||

connect();

|

||||

</script>

|

||||

</body>

|

||||

</html>"""

|

||||

)

|

||||

|

||||

|

||||

# -------------------------------------------------------------------------

|

||||

# WebSocket handler

|

||||

# -------------------------------------------------------------------------

|

||||

|

||||

|

||||

async def handle_ws(websocket):

|

||||

"""Persistent WebSocket: long-lived EventLoopNode with client_facing blocking."""

|

||||

global STORE

|

||||

|

||||

# -- Event forwarding (WebSocket ← EventBus) ----------------------------

|

||||

bus = EventBus()

|

||||

|

||||

async def forward_event(event):

|

||||

try:

|

||||

payload = {"type": event.type.value, **event.data}

|

||||

if event.node_id:

|

||||

payload["node_id"] = event.node_id

|

||||

await websocket.send(json.dumps(payload))

|

||||

except Exception:

|

||||

pass

|

||||

|

||||

bus.subscribe(

|

||||

event_types=[

|

||||

EventType.NODE_LOOP_STARTED,

|

||||

EventType.NODE_LOOP_ITERATION,

|

||||

EventType.NODE_LOOP_COMPLETED,

|

||||

EventType.LLM_TEXT_DELTA,

|

||||

EventType.TOOL_CALL_STARTED,

|

||||

EventType.TOOL_CALL_COMPLETED,

|

||||

EventType.NODE_STALLED,

|

||||

],

|

||||

handler=forward_event,

|

||||

)

|

||||

|

||||

# -- Per-connection state -----------------------------------------------

|

||||

node = None

|

||||

loop_task = None

|

||||

|

||||

tools = list(TOOL_REGISTRY.get_tools().values())

|

||||

tool_executor = TOOL_REGISTRY.get_executor()

|

||||

|

||||

node_spec = NodeSpec(

|

||||

id="assistant",

|

||||

name="Chat Assistant",

|

||||

description="A conversational assistant that remembers context across messages",

|

||||

node_type="event_loop",

|

||||

client_facing=True,

|

||||

system_prompt=(

|

||||

"You are a helpful assistant with access to tools. "

|

||||

"You can search the web, scrape webpages, and query HubSpot CRM. "

|

||||

"Use tools when the user asks for current information or external data. "

|

||||

"You have full conversation history, so you can reference previous messages."

|

||||

),

|

||||

)

|

||||

|

||||

# -- Ready callback: subscribe to CLIENT_INPUT_REQUESTED on the bus ---

|

||||

async def on_input_requested(event):

|

||||

try:

|

||||

await websocket.send(json.dumps({"type": "ready"}))

|

||||

except Exception:

|

||||

pass

|

||||

|

||||

bus.subscribe(

|

||||

event_types=[EventType.CLIENT_INPUT_REQUESTED],

|

||||

handler=on_input_requested,

|

||||

)

|

||||

|

||||

async def start_loop(first_message: str):

|

||||

"""Create an EventLoopNode and run it as a background task."""

|

||||

nonlocal node, loop_task

|

||||

|

||||

memory = SharedMemory()

|

||||

ctx = NodeContext(

|

||||

runtime=RUNTIME,

|

||||

node_id="assistant",

|

||||

node_spec=node_spec,

|

||||

memory=memory,

|

||||

input_data={},

|

||||

llm=LLM,

|

||||

available_tools=tools,

|

||||

)

|

||||

node = EventLoopNode(

|

||||

event_bus=bus,

|

||||

config=LoopConfig(max_iterations=10_000, max_context_tokens=32_000),

|

||||

conversation_store=STORE,

|

||||

tool_executor=tool_executor,

|

||||

)

|

||||

await node.inject_event(first_message)

|

||||

|

||||

async def _run():

|

||||

try:

|

||||

result = await node.execute(ctx)

|

||||

try:

|

||||

await websocket.send(

|

||||

json.dumps(

|

||||

{

|

||||

"type": "result",

|

||||

"success": result.success,

|

||||

"output": result.output,

|

||||

"error": result.error,

|

||||

"tokens": result.tokens_used,

|

||||

}

|

||||

)

|

||||

)

|

||||

except Exception:

|

||||

pass

|

||||

logger.info(f"Loop ended: success={result.success}, tokens={result.tokens_used}")

|

||||

except websockets.exceptions.ConnectionClosed:

|

||||

logger.info("Loop stopped: WebSocket closed")

|

||||

except Exception as e:

|

||||

logger.exception("Loop error")

|

||||

try:

|

||||

await websocket.send(

|

||||

json.dumps(

|

||||

{

|

||||

"type": "result",

|

||||

"success": False,

|

||||

"error": str(e),

|

||||

"output": {},

|

||||

}

|

||||

)

|

||||

)

|

||||

except Exception:

|

||||

pass

|

||||

|

||||

loop_task = asyncio.create_task(_run())

|

||||

    async def stop_loop():
        """Signal the node and wait for the loop task to finish."""
        nonlocal node, loop_task
        if loop_task and not loop_task.done():
            if node:
                node.signal_shutdown()
            try:
                await asyncio.wait_for(loop_task, timeout=5.0)
            except (TimeoutError, asyncio.CancelledError):
                loop_task.cancel()
        node = None
        loop_task = None

|

||||

|

||||

# -- Message loop (runs for the lifetime of this WebSocket) -------------

|

||||

try:

|

||||

async for raw in websocket:

|

||||

try:

|

||||

msg = json.loads(raw)

|

||||

except Exception:

|

||||

continue

|

||||

|

||||

# Clear command

|

||||

if msg.get("command") == "clear":

|

||||

import shutil

|

||||

|

||||

await stop_loop()

|

||||

await STORE.close()

|

||||

conv_dir = STORE_DIR / "conversation"

|

||||

if conv_dir.exists():

|

||||

shutil.rmtree(conv_dir)

|

||||

STORE = FileConversationStore(conv_dir)

|

||||

await websocket.send(json.dumps({"type": "cleared"}))

|

||||

logger.info("Conversation cleared")

|

||||

continue

|

||||

|

||||

topic = msg.get("topic", "")

|

||||

if not topic:

|

||||

continue

|

||||

|

||||

if node is None:

|

||||

# First message — spin up the loop

|

||||

logger.info(f"Starting persistent loop: {topic}")

|

||||

await start_loop(topic)

|

||||

else:

|

||||

# Subsequent message — inject into the running loop

|

||||

logger.info(f"Injecting message: {topic}")

|

||||

await node.inject_event(topic)

|

||||

|

||||

except websockets.exceptions.ConnectionClosed:

|

||||

pass

|

||||

finally:

|

||||

await stop_loop()

|

||||

logger.info("WebSocket closed, loop stopped")

|

||||

|

||||

|

||||

# -------------------------------------------------------------------------

|

||||

# HTTP handler for serving the HTML page

|

||||

# -------------------------------------------------------------------------

|

||||

|

||||

async def process_request(connection, request: Request):
    """Serve HTML on GET /, upgrade to WebSocket on /ws."""
    if request.path == "/ws":
        return None  # let websockets handle the upgrade
    # Serve the HTML page for any other path
    return Response(
        HTTPStatus.OK,
        "OK",
        websockets.Headers({"Content-Type": "text/html; charset=utf-8"}),
        HTML_PAGE.encode(),
    )

|

||||

|

||||

|

||||

# -------------------------------------------------------------------------

|

||||

# Main

|

||||

# -------------------------------------------------------------------------

|

||||

|

||||

async def main():
    port = 8765
    async with websockets.serve(
        handle_ws,
        "0.0.0.0",
        port,
        process_request=process_request,
    ):
        logger.info(f"Demo running at http://localhost:{port}")
        logger.info("Open in your browser and enter a topic to research.")
        await asyncio.Future()  # run forever


if __name__ == "__main__":
    asyncio.run(main())

|

||||

File diff suppressed because it is too large


@@ -1,930 +0,0 @@

|

||||

#!/usr/bin/env python3
"""
Two-Node ContextHandoff Demo

Demonstrates ContextHandoff between two EventLoopNode instances:
    Node A (Researcher) → ContextHandoff → Node B (Analyst)

Real LLM, real FileConversationStore, real EventBus.
Streams both nodes to a browser via WebSocket.

Usage:
    cd /home/timothy/oss/hive/core
    python demos/handoff_demo.py

    Then open http://localhost:8766 in your browser.
"""

|

||||

|

||||

import asyncio
import json
import logging
import sys
import tempfile
from http import HTTPStatus
from pathlib import Path

import httpx
import websockets
from bs4 import BeautifulSoup
from websockets.http11 import Request, Response

# Add core, tools, and hive root to path
_CORE_DIR = Path(__file__).resolve().parent.parent
_HIVE_DIR = _CORE_DIR.parent
sys.path.insert(0, str(_CORE_DIR))  # framework.*
sys.path.insert(0, str(_HIVE_DIR / "tools" / "src"))  # aden_tools.*
sys.path.insert(0, str(_HIVE_DIR))  # core.framework.* (for aden_tools imports)

from aden_tools.credentials import CREDENTIAL_SPECS, CredentialStoreAdapter  # noqa: E402
from core.framework.credentials import CredentialStore  # noqa: E402

from framework.credentials.storage import (  # noqa: E402
    CompositeStorage,
    EncryptedFileStorage,
    EnvVarStorage,
)
from framework.graph.context_handoff import ContextHandoff  # noqa: E402
from framework.graph.conversation import NodeConversation  # noqa: E402
from framework.graph.event_loop_node import EventLoopNode, LoopConfig  # noqa: E402
from framework.graph.node import NodeContext, NodeSpec, SharedMemory  # noqa: E402
from framework.llm.litellm import LiteLLMProvider  # noqa: E402
from framework.llm.provider import Tool  # noqa: E402
from framework.runner.tool_registry import ToolRegistry  # noqa: E402
from framework.runtime.core import Runtime  # noqa: E402
from framework.runtime.event_bus import EventBus, EventType  # noqa: E402
from framework.storage.conversation_store import FileConversationStore  # noqa: E402

logging.basicConfig(level=logging.INFO, format="%(asctime)s %(name)s %(message)s")
logger = logging.getLogger("handoff_demo")

|

||||

|

||||

# -------------------------------------------------------------------------

|

||||

# Persistent state

|

||||

# -------------------------------------------------------------------------

|

||||

|

||||

STORE_DIR = Path(tempfile.mkdtemp(prefix="hive_handoff_"))
RUNTIME = Runtime(STORE_DIR / "runtime")
LLM = LiteLLMProvider(model="claude-sonnet-4-5-20250929")

|

||||

|

||||

# -------------------------------------------------------------------------

|

||||

# Credentials

|

||||

# -------------------------------------------------------------------------

|

||||

|

||||

# Composite credential store: encrypted files (primary) + env vars (fallback)
_env_mapping = {name: spec.env_var for name, spec in CREDENTIAL_SPECS.items()}
_composite = CompositeStorage(
    primary=EncryptedFileStorage(),
    fallbacks=[EnvVarStorage(env_mapping=_env_mapping)],
)
CREDENTIALS = CredentialStoreAdapter(CredentialStore(storage=_composite))

for _name in ["brave_search", "hubspot"]:
    _val = CREDENTIALS.get(_name)
    if _val:
        logger.debug("credential %s: OK (len=%d)", _name, len(_val))
    else:
        logger.debug("credential %s: not found", _name)

|

||||

|

||||

# -------------------------------------------------------------------------

|

||||

# Tool Registry — web_search + web_scrape for Node A (Researcher)

|

||||

# -------------------------------------------------------------------------

|

||||

|

||||

TOOL_REGISTRY = ToolRegistry()

|

||||

|

||||

|

||||

def _exec_web_search(inputs: dict) -> dict:
    api_key = CREDENTIALS.get("brave_search")
    if not api_key:
        return {"error": "brave_search credential not configured"}
    query = inputs.get("query", "")
    num_results = min(inputs.get("num_results", 10), 20)
    resp = httpx.get(
        "https://api.search.brave.com/res/v1/web/search",
        params={"q": query, "count": num_results},
        headers={
            "X-Subscription-Token": api_key,
            "Accept": "application/json",
        },
        timeout=30.0,
    )
    if resp.status_code != 200:
        return {"error": f"Brave API HTTP {resp.status_code}"}
    data = resp.json()
    results = [
        {
            "title": item.get("title", ""),
            "url": item.get("url", ""),
            "snippet": item.get("description", ""),
        }
        for item in data.get("web", {}).get("results", [])[:num_results]
    ]
    return {"query": query, "results": results, "total": len(results)}

|

||||

|

||||

|

||||

TOOL_REGISTRY.register(
    name="web_search",
    tool=Tool(
        name="web_search",
        description=(
            "Search the web for current information. "
            "Returns titles, URLs, and snippets from search results."
        ),
        parameters={
            "type": "object",
            "properties": {
                "query": {
                    "type": "string",
                    "description": "The search query (1-500 characters)",
                },
                "num_results": {
                    "type": "integer",
                    "description": "Number of results (1-20, default 10)",
                },
            },
            "required": ["query"],
        },
    ),
    executor=lambda inputs: _exec_web_search(inputs),
)
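The result shaping inside `_exec_web_search` can be exercised offline against a hand-built payload. The sketch below assumes a response dict with the same `web.results` layout the function reads. `shape_results` is a hypothetical helper, and its lower bound of 1 on the count is an addition: the demo code only caps the value at 20.

```python
def shape_results(data: dict, num_results: int = 10) -> dict:
    """Hypothetical helper: map a search payload to title/url/snippet rows."""
    # Clamp to 1..20. The lower bound is an assumption added here;
    # the demo function only applies the upper cap.
    count = max(1, min(num_results, 20))
    rows = [
        {
            "title": item.get("title", ""),
            "url": item.get("url", ""),
            "snippet": item.get("description", ""),
        }
        for item in data.get("web", {}).get("results", [])[:count]
    ]
    return {"results": rows, "total": len(rows)}


# Made-up payload for illustration only
sample = {
    "web": {
        "results": [
            {"title": "A", "url": "https://a.example", "description": "first"},
            {"title": "B"},
        ]
    }
}
shaped = shape_results(sample, num_results=1)
```

Missing keys fall back to empty strings, so a partial entry such as `{"title": "B"}` still yields a complete row.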

|

||||

|

||||

_SCRAPE_HEADERS = {
    "User-Agent": (
        "Mozilla/5.0 (Windows NT 10.0; Win64; x64) "
        "AppleWebKit/537.36 (KHTML, like Gecko) "
        "Chrome/131.0.0.0 Safari/537.36"
    ),
    "Accept": "text/html,application/xhtml+xml",
}

|

||||

|

||||

|

||||

def _exec_web_scrape(inputs: dict) -> dict:
    url = inputs.get("url", "")
    max_length = max(1000, min(inputs.get("max_length", 50000), 500000))
    if not url.startswith(("http://", "https://")):
        url = "https://" + url
    try:
        resp = httpx.get(
            url,
            timeout=30.0,
            follow_redirects=True,
            headers=_SCRAPE_HEADERS,
        )
        if resp.status_code != 200:
            return {"error": f"HTTP {resp.status_code}"}
        soup = BeautifulSoup(resp.text, "html.parser")
        for tag in soup(["script", "style", "nav", "footer", "header", "aside", "noscript"]):
            tag.decompose()
        title = soup.title.get_text(strip=True) if soup.title else ""
        main = (
            soup.find("article")
            or soup.find("main")
            or soup.find(attrs={"role": "main"})
            or soup.find("body")
        )
        text = main.get_text(separator=" ", strip=True) if main else ""
        text = " ".join(text.split())
        if len(text) > max_length:
            text = text[:max_length] + "..."
        return {
            "url": url,
            "title": title,
            "content": text,
            "length": len(text),
        }
    except httpx.TimeoutException:
        return {"error": "Request timed out"}
    except Exception as e:
        return {"error": f"Scrape failed: {e}"}

|

||||

|

||||

|

||||

TOOL_REGISTRY.register(
    name="web_scrape",
    tool=Tool(
        name="web_scrape",
        description=(
            "Scrape and extract text content from a webpage URL. "
            "Returns the page title and main text content."
        ),
        parameters={
            "type": "object",
            "properties": {
                "url": {
                    "type": "string",
                    "description": "URL of the webpage to scrape",
                },
                "max_length": {
                    "type": "integer",
                    "description": "Maximum text length (default 50000)",
                },
            },
            "required": ["url"],
        },
    ),
    executor=lambda inputs: _exec_web_scrape(inputs),
)
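The length handling in `_exec_web_scrape` combines three steps: clamp `max_length` to the range 1000..500000, collapse whitespace runs, then truncate with a trailing `...`. A minimal sketch of just that part follows. `clamp_text` is a hypothetical helper name, and no HTTP or HTML parsing is involved.

```python
def clamp_text(text: str, max_length: int = 50000) -> str:
    """Hypothetical helper mirroring the scrape function's text clean-up."""
    # Same bounds as the scrape function: at least 1000, at most 500000
    limit = max(1000, min(max_length, 500000))
    # Collapse newlines, tabs, and repeated spaces into single spaces
    collapsed = " ".join(text.split())
    if len(collapsed) > limit:
        collapsed = collapsed[:limit] + "..."
    return collapsed


short = clamp_text("a \n\n b\t c")
long_text = clamp_text("x" * 2000, max_length=10)
```

Because the marker is appended after slicing, truncated text is three characters longer than the limit, and the `length` field in the scrape result counts those characters too.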

|

||||

|

||||

logger.info(
    "ToolRegistry loaded: %s",
    ", ".join(TOOL_REGISTRY.get_registered_names()),
)

|

||||

|

||||

# -------------------------------------------------------------------------

|

||||

# Node Specs

|

||||

# -------------------------------------------------------------------------

|

||||

|

||||

RESEARCHER_SPEC = NodeSpec(
    id="researcher",
    name="Researcher",
    description="Researches a topic using web search and scraping tools",
    node_type="event_loop",
    input_keys=["topic"],
    output_keys=["research_summary"],
    system_prompt=(
        "You are a thorough research assistant. Your job is to research "
        "the given topic using the web_search and web_scrape tools.\n\n"
        "1. Search for relevant information on the topic\n"
        "2. Scrape 1-2 of the most promising URLs for details\n"
        "3. Synthesize your findings into a comprehensive summary\n"
        "4. Use set_output with key='research_summary' to save your "
        "findings\n\n"
        "Be thorough but efficient. Aim for 2-4 search/scrape calls, "
        "then summarize and set_output."
    ),
)

|

||||

|

||||

ANALYST_SPEC = NodeSpec(
    id="analyst",
    name="Analyst",
    description="Analyzes research findings and provides insights",
    node_type="event_loop",
    input_keys=["context"],
    output_keys=["analysis"],
    system_prompt=(
        "You are a strategic analyst. You receive research findings from "
        "a previous researcher and must:\n\n"
        "1. Identify key themes and patterns\n"
        "2. Assess the reliability and significance of the findings\n"
        "3. Provide actionable insights and recommendations\n"
        "4. Use set_output with key='analysis' to save your analysis\n\n"
        "Be concise but insightful. Focus on what matters most."
    ),
)

|

||||

|

||||

|

||||

# -------------------------------------------------------------------------

|

||||

# HTML page

|

||||

# -------------------------------------------------------------------------

|

||||

|

||||

HTML_PAGE = ( # noqa: E501

|

||||

"""<!DOCTYPE html>

|

||||

<html lang="en">

|

||||

<head>

|

||||

<meta charset="utf-8">

|

||||

<meta name="viewport" content="width=device-width, initial-scale=1">

|

||||

<title>ContextHandoff Demo</title>

|

||||

<style>

|

||||

* {

|

||||

box-sizing: border-box;

|

||||

margin: 0;

|

||||

padding: 0;

|

||||

}

|

||||

body {

|

||||

font-family: 'SF Mono', 'Fira Code', monospace;

|

||||

background: #0d1117;

|

||||

color: #c9d1d9;

|

||||

height: 100vh;

|

||||

display: flex;

|

||||

flex-direction: column;

|

||||

}

|

||||

header {

|

||||

background: #161b22;

|

||||

padding: 12px 20px;

|

||||

border-bottom: 1px solid #30363d;

|

||||

display: flex;

|

||||

align-items: center;

|

||||

gap: 16px;

|

||||

}

|

||||

header h1 {

|

||||

font-size: 16px;

|

||||

color: #58a6ff;

|

||||

font-weight: 600;

|

||||

}

|

||||

.badge {

|

||||

font-size: 12px;

|

||||

padding: 3px 10px;

|

||||

border-radius: 12px;

|

||||

background: #21262d;

|

||||

color: #8b949e;

|

||||

}

|

||||

.badge.researcher {

|

||||

background: #1a3a5c;

|

||||

color: #58a6ff;

|

||||

}

|

||||

.badge.analyst {

|

||||

background: #1a4b2e;

|

||||

color: #3fb950;

|

||||

}

|

||||

.badge.handoff {

|

||||

background: #3d1f00;

|

||||

color: #d29922;

|

||||

}

|

||||

.badge.done {

|

||||

background: #21262d;

|

||||

color: #8b949e;

|

||||

}

|

||||

.badge.error {

|

||||

background: #4b1a1a;

|

||||

color: #f85149;

|

||||

}

|

||||

.chat {

|

||||

flex: 1;

|

||||

overflow-y: auto;

|

||||

padding: 16px;

|

||||

}

|

||||

.msg {

|

||||

margin: 8px 0;

|

||||

padding: 10px 14px;

|

||||

border-radius: 8px;

|

||||

line-height: 1.6;

|

||||

white-space: pre-wrap;

|

||||

word-wrap: break-word;

|

||||

}

|

||||

.msg.user {

|

||||

background: #1a3a5c;

|

||||

color: #58a6ff;

|

||||

}

|

||||

.msg.assistant {

|

||||

background: #161b22;

|

||||

color: #c9d1d9;

|

||||

}

|

||||

.msg.assistant.analyst-msg {

|

||||

border-left: 3px solid #3fb950;

|

||||

}

|

||||

.msg.event {

|

||||

background: transparent;

|

||||

color: #8b949e;

|

||||

font-size: 11px;

|

||||

padding: 4px 14px;

|

||||

border-left: 3px solid #30363d;

|

||||

}

|

||||

.msg.event.loop {

|

||||

border-left-color: #58a6ff;

|

||||

}

|

||||

.msg.event.tool {

|

||||

border-left-color: #d29922;

|

||||

}

|

||||

.msg.event.stall {

|

||||

border-left-color: #f85149;

|

||||

}

|

||||

.handoff-banner {

|

||||

margin: 16px 0;

|

||||

padding: 16px;

|

||||

background: #1c1200;

|

||||

border: 1px solid #d29922;

|

||||

border-radius: 8px;

|

||||

text-align: center;

|

||||

}

|

||||

.handoff-banner h3 {

|

||||

color: #d29922;

|

||||

font-size: 14px;

|

||||

margin-bottom: 8px;

|

||||

}

|

||||

.handoff-banner p, .result-banner p {

|

||||

color: #8b949e;

|

||||

font-size: 12px;

|

||||

line-height: 1.5;

|

||||

max-height: 200px;

|

||||

overflow-y: auto;

|

||||

white-space: pre-wrap;

|

||||

text-align: left;

|

||||

}

|

||||

.result-banner {

|

||||

margin: 16px 0;

|

||||

padding: 16px;

|

||||

background: #0a2614;

|

||||

border: 1px solid #3fb950;

|

||||

border-radius: 8px;

|

||||

}

|

||||

.result-banner h3 {

|

||||

color: #3fb950;

|

||||

font-size: 14px;

|

||||

margin-bottom: 8px;

|

||||

text-align: center;

|

||||

}

|

||||

.result-banner .label {

|

||||

color: #58a6ff;

|

||||

font-size: 11px;

|

||||

font-weight: 600;

|

||||

margin-top: 10px;

|

||||

margin-bottom: 2px;

|

||||

}

|

||||

.result-banner .tokens {

|

||||

color: #484f58;

|

||||

font-size: 11px;

|

||||

text-align: center;

|

||||

margin-top: 10px;

|

||||

}

|

||||

.input-bar {

|

||||

padding: 12px 16px;

|

||||

background: #161b22;

|

||||

border-top: 1px solid #30363d;

|

||||

display: flex;

|

||||

gap: 8px;

|

||||

}

|

||||

.input-bar input {

|

||||

flex: 1;

|

||||

background: #0d1117;

|

||||

border: 1px solid #30363d;

|

||||

color: #c9d1d9;

|

||||

padding: 8px 12px;

|

||||

border-radius: 6px;

|

||||

font-family: inherit;

|

||||

font-size: 14px;

|

||||

outline: none;

|

||||

}

|

||||

.input-bar input:focus {

|

||||

border-color: #58a6ff;

|

||||

}

|

||||

.input-bar button {

|

||||

background: #238636;

|

||||

color: #fff;

|

||||

border: none;

|

||||

padding: 8px 20px;

|

||||

border-radius: 6px;

|

||||

cursor: pointer;

|

||||

font-family: inherit;

|

||||

font-weight: 600;

|

||||

}

|

||||

.input-bar button:hover {

|

||||

background: #2ea043;

|

||||

}

|

||||

.input-bar button:disabled {

|

||||

background: #21262d;

|

||||

color: #484f58;

|

||||

cursor: not-allowed;

|

||||

}

|

||||

</style>

|

||||

</head>

|

||||

<body>

|

||||

<header>

|

||||

<h1>ContextHandoff Demo</h1>

|

||||

<span id="phase" class="badge">Idle</span>

|

||||

<span id="iter" class="badge" style="display:none">Step 0</span>

|

||||

</header>

|

||||

<div id="chat" class="chat"></div>

|

||||

<div class="input-bar">

|

||||

<input id="input" type="text"

|

||||

placeholder="Enter a research topic..." autofocus />

|

||||

<button id="go" onclick="run()">Research</button>

|

||||

</div>

|

||||

|

||||

<script>

|

||||

let ws = null;

|

||||

let currentAssistantEl = null;

|

||||

let iterCount = 0;

|

||||

let currentPhase = 'idle';

|

||||

const chat = document.getElementById('chat');

|

||||

const phase = document.getElementById('phase');

|

||||

const iterEl = document.getElementById('iter');

|

||||

const goBtn = document.getElementById('go');

|

||||

const inputEl = document.getElementById('input');

|

||||

|

||||

inputEl.addEventListener('keydown', e => {

|

||||

if (e.key === 'Enter') run();

|

||||

});

|

||||

|

||||

function setPhase(text, cls) {

|

||||

phase.textContent = text;

|

||||

phase.className = 'badge ' + cls;

|

||||

currentPhase = cls;

|

||||

}

|

||||

|

||||

function addMsg(text, cls) {

|

||||

const el = document.createElement('div');

|

||||

el.className = 'msg ' + cls;

|

||||

el.textContent = text;

|

||||

chat.appendChild(el);

|

||||

chat.scrollTop = chat.scrollHeight;

|

||||

return el;

|

||||

}

|

||||

|

||||

function addHandoffBanner(summary) {

|

||||

const banner = document.createElement('div');

|

||||

banner.className = 'handoff-banner';

|

||||

const h3 = document.createElement('h3');

|

||||

h3.textContent = 'Context Handoff: Researcher -> Analyst';

|

||||

const p = document.createElement('p');

|

||||

p.textContent = summary || 'Passing research context...';

|

||||

banner.appendChild(h3);

|

||||

banner.appendChild(p);

|

||||

chat.appendChild(banner);

|

||||

chat.scrollTop = chat.scrollHeight;

|

||||

}

|

||||

|

||||

function addResultBanner(researcher, analyst, tokens) {

|

||||

const banner = document.createElement('div');

|

||||

banner.className = 'result-banner';

|

||||

const h3 = document.createElement('h3');

|

||||

h3.textContent = 'Pipeline Complete';

|

||||

banner.appendChild(h3);

|

||||

|

||||

if (researcher && researcher.research_summary) {

|

||||

const lbl = document.createElement('div');

|

||||

lbl.className = 'label';

|

||||

lbl.textContent = 'RESEARCH SUMMARY';

|

||||

banner.appendChild(lbl);

|

||||

const p = document.createElement('p');

|

||||

p.textContent = researcher.research_summary;

|

||||

banner.appendChild(p);

|

||||

}

|

||||

|

||||

if (analyst && analyst.analysis) {

|

||||

const lbl = document.createElement('div');

|

||||

lbl.className = 'label';

|

||||

lbl.textContent = 'ANALYSIS';

|

||||

lbl.style.color = '#3fb950';

|

||||

banner.appendChild(lbl);

|

||||

const p = document.createElement('p');

|

||||

p.textContent = analyst.analysis;

|

||||

banner.appendChild(p);

|

||||

}

|

||||

|

||||

if (tokens) {

|

||||

const t = document.createElement('div');

|

||||

t.className = 'tokens';

|

||||

t.textContent = 'Total tokens: ' + tokens.toLocaleString();

|

||||

banner.appendChild(t);

|

||||

}

|

||||

|

||||

chat.appendChild(banner);

|

||||

chat.scrollTop = chat.scrollHeight;

|

||||

}

|

||||

|

||||

function connect() {

|

||||

ws = new WebSocket('ws://' + location.host + '/ws');

|

||||

ws.onopen = () => {

|

||||

setPhase('Ready', 'done');

|

||||

goBtn.disabled = false;

|

||||

};

|

||||

ws.onmessage = handleEvent;

|

||||

ws.onerror = () => { setPhase('Error', 'error'); };

|

||||

ws.onclose = () => {

|

||||

setPhase('Reconnecting...', '');

|

||||

goBtn.disabled = true;

|

||||

setTimeout(connect, 2000);

|

||||

};

|

||||

}

|

||||

|

||||

function handleEvent(msg) {

|

||||

const evt = JSON.parse(msg.data);

|

||||

|

||||

if (evt.type === 'phase') {

|

||||

if (evt.phase === 'researcher') {

|

||||

setPhase('Researcher', 'researcher');

|

||||

} else if (evt.phase === 'handoff') {

|

||||

setPhase('Handoff', 'handoff');

|

||||

} else if (evt.phase === 'analyst') {

|

||||

setPhase('Analyst', 'analyst');

|

||||

}

|

||||

iterCount = 0;

|

||||

iterEl.style.display = 'none';

|

||||

}

|

||||

else if (evt.type === 'llm_text_delta') {

|

||||

if (currentAssistantEl) {

|

||||

currentAssistantEl.textContent += evt.content;

|

||||

chat.scrollTop = chat.scrollHeight;

|

||||

}

|

||||

}

|

||||

else if (evt.type === 'node_loop_iteration') {

|

||||

iterCount = evt.iteration || (iterCount + 1);

|

||||

iterEl.textContent = 'Step ' + iterCount;

|

||||

iterEl.style.display = '';

|

||||

}

|

||||

else if (evt.type === 'tool_call_started') {

|

||||

var info = evt.tool_name + '('

|

||||

+ JSON.stringify(evt.tool_input).slice(0, 120) + ')';

|

||||

addMsg('TOOL ' + info, 'event tool');

|

||||

}

|

||||

else if (evt.type === 'tool_call_completed') {

|

||||

var preview = (evt.result || '').slice(0, 200);

|

||||

var cls = evt.is_error ? 'stall' : 'tool';

|

||||

addMsg(

|

||||

'RESULT ' + evt.tool_name + ': ' + preview,

|

||||

'event ' + cls

|

||||

);

|

||||

var assistCls = currentPhase === 'analyst'

|

||||

? 'assistant analyst-msg' : 'assistant';

|

||||

currentAssistantEl = addMsg('', assistCls);

|

||||

}

|

||||

else if (evt.type === 'handoff_context') {

|

||||

addHandoffBanner(evt.summary);

|

||||

var assistCls = 'assistant analyst-msg';

|

||||

currentAssistantEl = addMsg('', assistCls);

|

||||

}

|

||||

else if (evt.type === 'node_result') {

|

||||

if (evt.node_id === 'researcher') {

|

||||

if (currentAssistantEl

|

||||

&& !currentAssistantEl.textContent) {

|

||||

currentAssistantEl.remove();

|

||||

}

|

||||

}

|

||||

}

|

||||

else if (evt.type === 'done') {

|

||||

setPhase('Done', 'done');

|

||||

iterEl.style.display = 'none';

|

||||

if (currentAssistantEl

|

||||

&& !currentAssistantEl.textContent) {

|

||||

currentAssistantEl.remove();

|

||||

}

|

||||

currentAssistantEl = null;

|

||||

addResultBanner(

|

||||

evt.researcher, evt.analyst, evt.total_tokens

|

||||

);

|

||||

goBtn.disabled = false;

|

||||

inputEl.placeholder = 'Enter another topic...';

|

||||

}

|

||||

else if (evt.type === 'error') {

|

||||

setPhase('Error', 'error');

|

||||

addMsg('ERROR ' + evt.message, 'event stall');

|

||||

goBtn.disabled = false;

|

||||

}

|

||||

else if (evt.type === 'node_stalled') {

|

||||

addMsg('STALLED ' + evt.reason, 'event stall');

|

||||

}

|

||||

}

|

||||

|

||||

function run() {

|