Compare commits

391 Commits

| Author | SHA1 | Date | |

|---|---|---|---|

| d78473ff20 | |||

| 8ecb728148 | |||

| 4a2141bce9 | |||

| 3b4d6e4602 | |||

| 89ccc664bd | |||

| 4872c01886 | |||

| 4620380341 | |||

| fca2deb980 | |||

| d7ce923ca6 | |||

| 403b47db61 | |||

| 0d0e78579f | |||

| 447bfdfab8 | |||

| c77d21e393 | |||

| 6ded508b4d | |||

| 75f8bf5696 | |||

| 62fc02220b | |||

| 5d4f279646 | |||

| 920a840756 | |||

| 8680a35c39 | |||

| c9134cfd91 | |||

| 55ce751385 | |||

| aca2dfb536 | |||

| d11f539209 | |||

| 64a223353a | |||

| 2d154c2db6 | |||

| a00c934d9d | |||

| 18bee9cb90 | |||

| c1664e47e5 | |||

| 2cb972fc5a | |||

| 0bd841ce01 | |||

| 88ec4b7e64 | |||

| 27d5061d97 | |||

| a2cd96a1a7 | |||

| 07b82a51f6 | |||

| 3e1282b31e | |||

| 736756b257 | |||

| 90efe7009d | |||

| 4adb369bde | |||

| d4a30eb2f3 | |||

| 94bb4a2984 | |||

| 648bad26ed | |||

| f0c7470f3d | |||

| fe533b72a6 | |||

| e581767cab | |||

| 0663ee5950 | |||

| 4b97baa34b | |||

| a89296d397 | |||

| d568912ba2 | |||

| c4d7980058 | |||

| 8549fe8238 | |||

| 2b8d85bb95 | |||

| 07f7801166 | |||

| 1f12a45151 | |||

| 936e02e8e6 | |||

| d59fe1e109 | |||

| 274318d3e5 | |||

| 0f0884c2e0 | |||

| 764012c598 | |||

| fd4dc1a69a | |||

| 377cd39c2a | |||

| e92caeef24 | |||

| b7e6226478 | |||

| a995818db2 | |||

| 0772b4d300 | |||

| 684e0d8dc6 | |||

| d284c5d790 | |||

| 7a9b9666c4 | |||

| a852cb91bf | |||

| 2f21e9eb4b | |||

| 8390ef8731 | |||

| 8d21479c24 | |||

| 965dec3ba1 | |||

| d4b54446be | |||

| 7992b862c2 | |||

| 44b3e0eaa2 | |||

| f480fc2b94 | |||

| 2844dbf19f | |||

| 22b7e4b0c3 | |||

| 5413833a69 | |||

| 02e1a4584a | |||

| 520840b1dd | |||

| ee96147336 | |||

| 705cef4dc1 | |||

| ab26e64122 | |||

| f365e219cb | |||

| 01621881c2 | |||

| f7639f8572 | |||

| fc643060ce | |||

| 9aebeb181e | |||

| acbbfaaa79 | |||

| bf170bce10 | |||

| 0a090d058b | |||

| 47bfadaad9 | |||

| d968dcd44c | |||

| 6fdaa9ea50 | |||

| 4d251fbdc2 | |||

| 6acceed288 | |||

| 8dd1d6e3aa | |||

| 1da28644a6 | |||

| 6452fe7fef | |||

| acff008bd2 | |||

| 651d6850a1 | |||

| c7fdc92594 | |||

| 43602a8801 | |||

| 3da04265a6 | |||

| 4c98f0d2d0 | |||

| d84c3364d0 | |||

| ae921f6cee | |||

| 6b506a1c08 | |||

| 0c9f4fa97e | |||

| 95e30bc607 | |||

| 0f1f0090b0 | |||

| c0da3bec02 | |||

| 9dadb5264d | |||

| e39e6a75cc | |||

| 23c66d1059 | |||

| b9d529d94e | |||

| 1c9b09fb78 | |||

| 9fb14f23d2 | |||

| 4795dc4f68 | |||

| acf0f804c5 | |||

| 4e2951854b | |||

| 80dfb429d7 | |||

| 9c0ba77e22 | |||

| 46b4651073 | |||

| 86dd5246c6 | |||

| a1227c88ee | |||

| 535d7ab568 | |||

| af10494b31 | |||

| 39c1042827 | |||

| 16e7dc11f4 | |||

| 7a27babefd | |||

| d53ae9d51d | |||

| 910cf7727d | |||

| 1698605f15 | |||

| eda124a123 | |||

| 15e9ce8d2f | |||

| c01dd603d7 | |||

| 9d5157d69f | |||

| d78795bdf5 | |||

| ff2b7f473e | |||

| 73c9a91811 | |||

| 27b765d902 | |||

| fddba419be | |||

| f42d6308e8 | |||

| c167002754 | |||

| ea26ee7d0c | |||

| 5280e908b2 | |||

| 1c5dd8c664 | |||

| 3aca153be5 | |||

| 65c8e1653c | |||

| 58e4fa918c | |||

| 3af13d3f90 | |||

| b799789dbe | |||

| 2cd73dfccc | |||

| 57d77d5479 | |||

| 5814021773 | |||

| 4f4cc9c8ce | |||

| d9c840eee5 | |||

| d2eb86e534 | |||

| 03842353e4 | |||

| 48747e20af | |||

| 58af593af6 | |||

| 450575a927 | |||

| eac2bb19b2 | |||

| 756a815bf0 | |||

| 23a7b080eb | |||

| bf39bcdec9 | |||

| 0276632491 | |||

| ae2993d0d1 | |||

| d14d71f760 | |||

| ef6efc2f55 | |||

| 738641d35f | |||

| 22f5534f08 | |||

| b79e7eca73 | |||

| 28250dc45e | |||

| fe5df6a87a | |||

| 07e4b593dd | |||

| 497591bf3b | |||

| a2a3e334d6 | |||

| 1ccbfaf800 | |||

| a9afa0555c | |||

| 83b2183cf0 | |||

| c2dea88398 | |||

| f49e7a760e | |||

| dc95c88da0 | |||

| 6e0255ebec | |||

| b51e688d1a | |||

| 379d3df46b | |||

| b77a3031fe | |||

| c10eea04ec | |||

| 491a3f24da | |||

| c7d70e0fb1 | |||

| d59f8e99cb | |||

| 0a91b49417 | |||

| ced64541b9 | |||

| 88253883a3 | |||

| 3c30cfe02b | |||

| 0d6267bcf1 | |||

| b47175d1df | |||

| 6f23a30eed | |||

| ff7b5c7e27 | |||

| 69f0ff7ac9 | |||

| c3f13c50eb | |||

| 5477408d40 | |||

| 9fad385ddf | |||

| cf44ee1d9b | |||

| 4ab33a39d6 | |||

| ae19121802 | |||

| b518525418 | |||

| ac3fe38b33 | |||

| 3c6a30fcae | |||

| 2ced873fb5 | |||

| 6ed6e5b286 | |||

| 30bb0ad5d8 | |||

| cb0845f5ba | |||

| ce2525b59c | |||

| 1f77ec3831 | |||

| ab995d8b96 | |||

| 6ab5aa8004 | |||

| 4449cd8ee8 | |||

| 8b60c03a0a | |||

| c2e560fc07 | |||

| 19f7ae862e | |||

| 5e9f74744a | |||

| 0e98023e40 | |||

| 7787179a5a | |||

| b63205b91a | |||

| 347bccb9ee | |||

| 22bb07f00e | |||

| 660f883197 | |||

| 9d83f0298f | |||

| 988de80b66 | |||

| dc6aa226ee | |||

| 48a54b4ee2 | |||

| 7f7e8b4dff | |||

| f48a7380f5 | |||

| 3c7f129d86 | |||

| 4533b27aa1 | |||

| 3adf268c29 | |||

| ac8579900f | |||

| abbaaa68f3 | |||

| 11089093ef | |||

| 99b7cb07d5 | |||

| 70d61ae67a | |||

| dd054815a3 | |||

| 8e5eaae9dd | |||

| 2d0128eb5c | |||

| 06f1d4dcef | |||

| 0e7b11b5b2 | |||

| 291b78f934 | |||

| e196a03972 | |||

| a0abe2685d | |||

| e8f642c8b6 | |||

| 6260f628eb | |||

| 4a4f17ed40 | |||

| 36dcf2025b | |||

| 85c70c94e6 | |||

| 336e82ba22 | |||

| a7b6b080ab | |||

| 9202cbd4d4 | |||

| f2ddd1051d | |||

| 2dd60c8d52 | |||

| ff01c1fd99 | |||

| 421b25fdb7 | |||

| 795c3c33e2 | |||

| 97821f4d80 | |||

| 505e1e30fd | |||

| 3fb2b285fb | |||

| a76109840c | |||

| 1db8484402 | |||

| 39212350ba | |||

| f3399fe95b | |||

| d02e1155ed | |||

| 7ede3ba171 | |||

| cdaec8a837 | |||

| 2272491cf5 | |||

| bb38cb974f | |||

| 635d2976f4 | |||

| 4e1525880d | |||

| b80559df68 | |||

| 08d93ef90a | |||

| 22bf035522 | |||

| 15944a42ab | |||

| 8440ec70ba | |||

| eacf2520cf | |||

| def4f62a51 | |||

| b0c5bcd210 | |||

| 2fe1343343 | |||

| de0dcff50f | |||

| 20427e213a | |||

| 1fb5c6337a | |||

| 1e74f194a1 | |||

| 08157d2bd6 | |||

| ef036257a9 | |||

| 16ce984c74 | |||

| 1e8b5b96eb | |||

| 094ba89f19 | |||

| 7008c9f310 | |||

| 94d7cbacc2 | |||

| bddc2b413a | |||

| 48c8fb7fff | |||

| 52b1a3f472 | |||

| 079e00c8f7 | |||

| 60bba38941 | |||

| ea8e7b11c6 | |||

| 3dc2b25b01 | |||

| 543b90b34f | |||

| 2ad78ec8a2 | |||

| 412658e9f2 | |||

| 9bfddec322 | |||

| bbd9c10169 | |||

| 51fdc4ddde | |||

| 04685d33ca | |||

| 729a0e0cec | |||

| 2bcb0cacee | |||

| 44bf191f53 | |||

| 993b31f19b | |||

| 41b3b9619f | |||

| 2a4fe4020c | |||

| 9d1f268078 | |||

| 2185e127b1 | |||

| 99ed885fd0 | |||

| d8a390a685 | |||

| f50cf1735b | |||

| 04eb57f54e | |||

| 7378408eb8 | |||

| cf05420417 | |||

| f5ed4c7d43 | |||

| 5547432b6e | |||

| 336557d7c7 | |||

| 87c172227c | |||

| c2c4929de8 | |||

| a978338738 | |||

| 8eb59b1f66 | |||

| f9d5f95936 | |||

| 651e99ffe3 | |||

| c01cd528d2 | |||

| 2434c86cdf | |||

| c4a5e621aa | |||

| 0f5b83d86a | |||

| b5aadcd51e | |||

| 290d2f6823 | |||

| 944567dc31 | |||

| 674cf05601 | |||

| 6fa71fa27d | |||

| 8c7065ad37 | |||

| a18ed5bbe6 | |||

| 9f3339650d | |||

| d5e5d3e83d | |||

| 5ea27dda09 | |||

| 6f9066ef20 | |||

| c37185732a | |||

| 0c900fb50e | |||

| 4d3ac28878 | |||

| 270c1f8c50 | |||

| 3d0859d06a | |||

| ed3d4bfe33 | |||

| 596ce9878d | |||

| ffe47c0f71 | |||

| bf4652db4b | |||

| 2acd526b71 | |||

| df71834e4b | |||

| bc3c5a5899 | |||

| 726016d24a | |||

| 4895cea08a | |||

| c9723a3ff2 | |||

| 6cb73a6fea | |||

| 0c7f43f595 | |||

| ea5cfcc5d6 | |||

| 34e85019c3 | |||

| c979dba958 | |||

| b4caa045e1 | |||

| e82133741c | |||

| 5076278dcb | |||

| 2398e04e11 | |||

| d00f321627 | |||

| e76b6cb575 | |||

| cba0ec110f | |||

| 0256e0c944 | |||

| 4d9d0362a0 | |||

| f474d0bc8e | |||

| 6a0681b9aa | |||

| c7e634851b | |||

| cdb7155960 | |||

| 3f7790c26a | |||

| 5676b115f4 | |||

| 61c59d57e8 | |||

| 151fbd7b00 | |||

| f88483f964 | |||

| b61ec8c94d |

@@ -0,0 +1,78 @@
+name: Standard Bounty
+description: A bounty task for general framework contributions (not integration-specific)
+title: "[Bounty]: "
+labels: []
+body:
+  - type: markdown
+    attributes:
+      value: |
+        ## Standard Bounty
+
+        This issue is part of the [Bounty Program](../../docs/bounty-program/README.md).
+        **Claim this bounty** by commenting below — a maintainer will assign you within 24 hours.
+
+  - type: dropdown
+    id: bounty-size
+    attributes:
+      label: Bounty Size
+      options:
+        - "Small (10 pts)"
+        - "Medium (30 pts)"
+        - "Large (75 pts)"
+        - "Extreme (150 pts)"
+    validations:
+      required: true
+
+  - type: dropdown
+    id: difficulty
+    attributes:
+      label: Difficulty
+      options:
+        - Easy
+        - Medium
+        - Hard
+    validations:
+      required: true
+
+  - type: textarea
+    id: description
+    attributes:
+      label: Description
+      description: What needs to be done to complete this bounty.
+      placeholder: |
+        Describe the specific task, including:
+        - What the contributor needs to do
+        - Links to relevant files in the repo
+        - Any context or motivation for the change
+    validations:
+      required: true
+
+  - type: textarea
+    id: acceptance-criteria
+    attributes:
+      label: Acceptance Criteria
+      description: What "done" looks like. The PR must meet all criteria.
+      placeholder: |
+        - [ ] Criterion 1
+        - [ ] Criterion 2
+        - [ ] CI passes
+    validations:
+      required: true
+
+  - type: textarea
+    id: relevant-files
+    attributes:
+      label: Relevant Files
+      description: Links to files or directories related to this bounty.
+      placeholder: |
+        - `path/to/file.py`
+        - `path/to/directory/`
+
+  - type: textarea
+    id: resources
+    attributes:
+      label: Resources
+      description: Links to docs, issues, or external references that will help.
+      placeholder: |
+        - Related issue: #XXXX
+        - Docs: https://...

@@ -2,14 +2,22 @@ name: Bounty completed
 description: Awards points and notifies Discord when a bounty PR is merged
 
 on:
-  pull_request:
+  pull_request_target:
     types: [closed]
+
+  workflow_dispatch:
+    inputs:
+      pr_number:
+        description: "PR number to process (for missed bounties)"
+        required: true
+        type: number
 
 jobs:
   bounty-notify:
     if: >
-      github.event.pull_request.merged == true &&
-      contains(join(github.event.pull_request.labels.*.name, ','), 'bounty:')
+      github.event_name == 'workflow_dispatch' ||
+      (github.event.pull_request.merged == true &&
+      contains(join(github.event.pull_request.labels.*.name, ','), 'bounty:'))
     runs-on: ubuntu-latest
     timeout-minutes: 5
     permissions:
@@ -32,6 +40,8 @@ jobs:
           GITHUB_REPOSITORY_OWNER: ${{ github.repository_owner }}
           GITHUB_REPOSITORY_NAME: ${{ github.event.repository.name }}
           DISCORD_WEBHOOK_URL: ${{ secrets.DISCORD_BOUNTY_WEBHOOK_URL }}
           BOT_API_URL: ${{ secrets.BOT_API_URL }}
           BOT_API_KEY: ${{ secrets.BOT_API_KEY }}
+          LURKR_API_KEY: ${{ secrets.LURKR_API_KEY }}
+          LURKR_GUILD_ID: ${{ secrets.LURKR_GUILD_ID }}
-          PR_NUMBER: ${{ github.event.pull_request.number }}
+          PR_NUMBER: ${{ inputs.pr_number || github.event.pull_request.number }}
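Read together, the two hunks above change when the job runs and which PR it processes. The following is a minimal Python sketch of that logic, for illustration only: `should_run`, `resolve_pr_number`, and the plain-dictionary shape of `event` and `inputs` are hypothetical stand-ins for the GitHub Actions expression context, not code from this repository.

```python
from typing import Any, Optional


def should_run(event_name: str, event: dict[str, Any]) -> bool:
    """Mirror the job-level `if:` expression.

    A manual dispatch always runs; otherwise the PR must be merged and
    carry at least one label whose name contains 'bounty:'.
    """
    if event_name == "workflow_dispatch":
        return True
    pr = event.get("pull_request") or {}
    # join(labels.*.name, ',') followed by contains(..., 'bounty:')
    joined = ",".join(label["name"] for label in pr.get("labels", []))
    return pr.get("merged") is True and "bounty:" in joined


def resolve_pr_number(inputs: dict[str, Any], event: dict[str, Any]) -> Optional[int]:
    """Mirror `inputs.pr_number || github.event.pull_request.number`."""
    pr = event.get("pull_request") or {}
    return inputs.get("pr_number") or pr.get("number")
```

On a manual `workflow_dispatch` run there is no `pull_request` payload, so the merged/label check is bypassed and the PR number comes from the `pr_number` input instead.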

@@ -1,126 +0,0 @@
-name: Link Discord account
-description: Auto-creates a PR to add contributor to contributors.yml when a link-discord issue is opened
-
-on:
-  issues:
-    types: [opened]
-
-jobs:
-  link-discord:
-    if: contains(github.event.issue.labels.*.name, 'link-discord')
-    runs-on: ubuntu-latest
-    timeout-minutes: 2
-    permissions:
-      contents: write
-      issues: write
-      pull-requests: write
-
-    steps:
-      - name: Checkout repository
-        uses: actions/checkout@v4
-
-      - name: Parse issue and update contributors.yml
-        uses: actions/github-script@v7
-        with:
-          script: |
-            const fs = require('fs');
-
-            const issue = context.payload.issue;
-            const githubUsername = issue.user.login;
-
-            // Parse the issue body for form fields
-            const body = issue.body || '';
-
-            // Extract Discord ID — look for the numeric value after the "Discord User ID" heading
-            const discordMatch = body.match(/### Discord User ID\s*\n\s*(\d{17,20})/);
-            if (!discordMatch) {
-              await github.rest.issues.createComment({
-                ...context.repo,
-                issue_number: issue.number,
-                body: `Could not find a valid Discord ID in the issue body. Please make sure you entered a numeric ID (17-20 digits), not a username.\n\nExample: \`123456789012345678\``
-              });
-              await github.rest.issues.update({
-                ...context.repo,
-                issue_number: issue.number,
-                state: 'closed',
-                state_reason: 'not_planned'
-              });
-              return;
-            }
-            const discordId = discordMatch[1];
-
-            // Extract display name (optional)
-            const nameMatch = body.match(/### Display Name \(optional\)\s*\n\s*(.+)/);
-            const displayName = nameMatch ? nameMatch[1].trim() : '';
-
-            // Check if user already exists
-            const yml = fs.readFileSync('contributors.yml', 'utf-8');
-            if (yml.includes(`github: ${githubUsername}`)) {
-              await github.rest.issues.createComment({
-                ...context.repo,
-                issue_number: issue.number,
-                body: `@${githubUsername} is already in \`contributors.yml\`. If you need to update your Discord ID, please edit the file directly via PR.`
-              });
-              await github.rest.issues.update({
-                ...context.repo,
-                issue_number: issue.number,
-                state: 'closed',
-                state_reason: 'completed'
-              });
-              return;
-            }
-
-            // Append entry to contributors.yml
-            let entry = ` - github: ${githubUsername}\n discord: "${discordId}"`;
-            if (displayName && displayName !== '_No response_') {
-              entry += `\n name: ${displayName}`;
-            }
-            entry += '\n';
-
-            const updated = yml.trimEnd() + '\n' + entry;
-            fs.writeFileSync('contributors.yml', updated);
-
-            // Set outputs for commit step
-            core.exportVariable('GITHUB_USERNAME', githubUsername);
-            core.exportVariable('DISCORD_ID', discordId);
-            core.exportVariable('ISSUE_NUMBER', issue.number.toString());
-
-      - name: Create PR
-        run: |
-          # Check if there are changes
-          if git diff --quiet contributors.yml; then
-            echo "No changes to contributors.yml"
-            exit 0
-          fi
-
-          BRANCH="docs/link-discord-${GITHUB_USERNAME}"
-          git config user.name "github-actions[bot]"
-          git config user.email "41898282+github-actions[bot]@users.noreply.github.com"
-          git checkout -b "$BRANCH"
-          git add contributors.yml
-          git commit -m "docs: link @${GITHUB_USERNAME} to Discord"
-          git push origin "$BRANCH"
-
-          gh pr create \
-            --title "docs: link @${GITHUB_USERNAME} to Discord" \
-            --body "Adds @${GITHUB_USERNAME} (Discord \`${DISCORD_ID}\`) to \`contributors.yml\` for bounty XP tracking.
-
-          Closes #${ISSUE_NUMBER}" \
-            --base main \
-            --head "$BRANCH" \
-            --label "link-discord"
-        env:
-          GH_TOKEN: ${{ secrets.GITHUB_TOKEN }}
-
-      - name: Notify on issue
-        uses: actions/github-script@v7
-        with:
-          script: |
-            const username = process.env.GITHUB_USERNAME;
-            const issueNumber = parseInt(process.env.ISSUE_NUMBER);
-
-            await github.rest.issues.createComment({
-              ...context.repo,
-              issue_number: issueNumber,
-              body: `A PR has been created to link your account. A maintainer will merge it shortly — once merged, you'll receive XP and Discord pings when your bounty PRs are merged.`
-            });
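The ID extraction in the removed github-script step comes down to a single regular expression. Below is a rough Python equivalent, assuming the issue form renders the field under a `### Discord User ID` heading as the JavaScript above expects; `extract_discord_id` is a hypothetical helper name and is not part of the repository.

```python
import re
from typing import Optional

# Same pattern as the removed step: a run of 17-20 digits on the first
# non-blank line after the "### Discord User ID" form heading.
# re.ASCII keeps \d limited to 0-9, matching JavaScript's \d.
DISCORD_ID_RE = re.compile(r"### Discord User ID\s*\n\s*(\d{17,20})", re.ASCII)


def extract_discord_id(issue_body: Optional[str]) -> Optional[str]:
    """Return the numeric Discord ID from an issue body, or None if absent."""
    match = DISCORD_ID_RE.search(issue_body or "")
    return match.group(1) if match else None
```

A username such as `someuser#1234` in that field yields `None`, which is the case where the workflow commented on the issue and closed it as not planned.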

@@ -35,6 +35,8 @@ jobs:
           GITHUB_REPOSITORY_OWNER: ${{ github.repository_owner }}
           GITHUB_REPOSITORY_NAME: ${{ github.event.repository.name }}
           DISCORD_WEBHOOK_URL: ${{ secrets.DISCORD_BOUNTY_WEBHOOK_URL }}
           BOT_API_URL: ${{ secrets.BOT_API_URL }}
           BOT_API_KEY: ${{ secrets.BOT_API_KEY }}
+          LURKR_API_KEY: ${{ secrets.LURKR_API_KEY }}
+          LURKR_GUILD_ID: ${{ secrets.LURKR_GUILD_ID }}
           SINCE_DATE: ${{ github.event.inputs.since_date || '' }}

@@ -68,7 +68,6 @@ temp/

|

||||

exports/*

|

||||

|

||||

.claude/settings.local.json

|

||||

.claude/skills/ship-it/

|

||||

|

||||

.venv

|

||||

|

||||

|

||||

+150

-27

@@ -1,17 +1,149 @@
 # Release Notes
 
+## v0.7.1
+
+**Release Date:** March 13, 2026
+**Tag:** v0.7.1
+
+### Chrome-Native Browser Control
+
+v0.7.1 replaces Playwright with direct Chrome DevTools Protocol (CDP) integration. The GCU now launches the user's system Chrome via `open -n` on macOS, connects over CDP, and manages browser lifecycle end-to-end -- no extra browser binary required.
+
+---
+
+### Highlights
+
+#### System Chrome via CDP
+
+The entire GCU browser stack has been rewritten:
+
+- **Chrome finder & launcher** -- New `chrome_finder.py` discovers installed Chrome and `chrome_launcher.py` manages process lifecycle with `--remote-debugging-port`
+- **Coexist with user's browser** -- `open -n` on macOS launches a separate Chrome instance so the user's tabs stay untouched
+- **Dynamic viewport sizing** -- Viewport auto-sizes to the available display area, suppressing Chrome warning bars
+- **Orphan cleanup** -- Chrome processes are killed on GCU server shutdown to prevent leaks
+- **`--no-startup-window`** -- Chrome launches headlessly by default until a page is needed
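The launch flags named in the bullets above can be combined roughly as follows. This is a hedged sketch and not the contents of `chrome_launcher.py`: the function name `build_chrome_command`, its parameters, the default `google-chrome` executable name, and the macOS application name `Google Chrome` are all assumptions made for illustration. Only the command line is built here; nothing is launched.

```python
import sys
from typing import Optional


def build_chrome_command(
    port: int,
    user_data_dir: str,
    chrome_path: str = "google-chrome",  # assumed executable name on Linux
    platform: Optional[str] = None,
) -> list[str]:
    """Build an argv list that starts Chrome with a CDP debugging port."""
    platform = platform or sys.platform
    chrome_args = [
        f"--remote-debugging-port={port}",   # exposes the CDP endpoint
        f"--user-data-dir={user_data_dir}",  # separate profile directory
        "--no-startup-window",               # no empty window on launch
    ]
    if platform == "darwin":
        # `open -n` starts a new instance even if Chrome is already running;
        # everything after `--args` is handed to Chrome itself.
        return ["open", "-n", "-a", "Google Chrome", "--args", *chrome_args]
    return [chrome_path, *chrome_args]
```

The resulting list could be passed to `subprocess.Popen`; keeping command construction separate from process spawning makes the platform branch easy to test.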

+
+#### Per-Subagent Browser Isolation
+
+Each GCU subagent gets its own Chrome user-data directory, preventing cookie/session cross-contamination:
+
+- Unique browser profiles injected per subagent
+- Profiles cleaned up after top-level GCU node execution
+- Tab origin and age metadata tracked per subagent
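One way to picture the isolation described above is a small registry that hands each subagent its own temporary profile directory and removes them all when the top-level node finishes. This is an illustrative sketch under that assumption; `ProfileRegistry` and its methods are hypothetical names and do not reflect the framework's actual implementation.

```python
import shutil
import tempfile
from pathlib import Path


class ProfileRegistry:
    """Hand out one throwaway Chrome user-data directory per subagent."""

    def __init__(self) -> None:
        self._profiles: dict[str, Path] = {}

    def profile_for(self, subagent_id: str) -> Path:
        # Reuse the directory if this subagent already has one, so its
        # cookies and sessions persist for the lifetime of the run.
        if subagent_id not in self._profiles:
            path = tempfile.mkdtemp(prefix="gcu-profile-")
            self._profiles[subagent_id] = Path(path)
        return self._profiles[subagent_id]

    def cleanup(self) -> None:
        # Intended to run once the top-level node finishes.
        for path in self._profiles.values():
            shutil.rmtree(path, ignore_errors=True)
        self._profiles.clear()
```

Each returned path would be passed to Chrome as `--user-data-dir`, so two subagents never share cookies or session storage.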

+
+#### Dummy Agent Testing Framework
+
+A comprehensive test suite for validating agent graph patterns without LLM calls:
+
+- 8 test modules covering echo, pipeline, branch, parallel merge, retry, feedback loop, worker, and GCU subagent patterns
+- Shared fixtures and a `run_all.py` runner for CI integration
+- Subagent lifecycle tests
+
+---
+
+### What's New
+
+#### GCU Browser
+
+- **Switch from Playwright to system Chrome via CDP** -- Direct CDP connection replaces Playwright dependency. (@bryanadenhq)
+- **Chrome finder and launcher modules** -- `chrome_finder.py` and `chrome_launcher.py` for cross-platform Chrome discovery and process management. (@bryanadenhq)
+- **Dynamic viewport sizing** -- Auto-size viewport and suppress Chrome warning bar. (@bryanadenhq)
+- **Per-subagent browser profile isolation** -- Unique user-data directories per subagent with cleanup. (@bryanadenhq)
+- **Tab origin/age metadata** -- Track which subagent opened each tab and when. (@bryanadenhq)
+- **`browser_close_all` tool** -- Bulk tab cleanup for agents managing many pages. (@bryanadenhq)
+- **Auto-track popup pages** -- Popups are automatically captured and tracked. (@bryanadenhq)
+- **Auto-snapshot from browser interactions** -- Browser interaction tools return screenshots automatically. (@bryanadenhq)
+- **Kill orphaned Chrome processes** -- GCU server shutdown cleans up lingering Chrome instances. (@bryanadenhq)
+- **`--no-startup-window` Chrome flag** -- Prevent empty window on launch. (@bryanadenhq)
+- **Launch Chrome via `open -n` on macOS** -- Coexist with the user's running browser. (@bryanadenhq)
+
+#### Framework & Runtime
+
+- **Session resume fix for new agents** -- Correctly resume sessions when a new agent is loaded. (@bryanadenhq)
+- **Queen upsert fix** -- Prevent duplicate queen entries on session restore. (@bryanadenhq)
+- **Anchor worker monitoring to queen's session ID on cold-restore** -- Worker monitors reconnect to the correct queen after restart. (@bryanadenhq)
+- **Update meta.json when loading workers** -- Worker metadata stays in sync with runtime state. (@RichardTang-Aden)
+- **Generate worker MCP file correctly** -- Fix MCP config generation for spawned workers. (@RichardTang-Aden)
+- **Share event bus so tool events are visible to parent** -- Tool execution events propagate up to parent graphs. (@bryanadenhq)
+- **Subagent activity tracking in queen status** -- Queen instructions include live subagent status. (@bryanadenhq)
+- **GCU system prompt updates** -- Auto-snapshots, batching, popup tracking, and close_all guidance. (@bryanadenhq)
+
+#### Frontend
+
+- **Loading spinner in draft panel** -- Shows spinner during planning phase instead of blank panel. (@bryanadenhq)
+- **Fix credential modal errors** -- Modal no longer eats errors; banner stays visible. (@bryanadenhq)
+- **Fix credentials_required loop** -- Stop clearing the flag on modal close to prevent infinite re-prompting. (@bryanadenhq)
+- **Fix "Add tab" dropdown overflow** -- Dropdown no longer hidden when many agents are open. (@prasoonmhwr)
+
+#### Testing
+
+- **Dummy agent test framework** -- 8 test modules (echo, pipeline, branch, parallel merge, retry, feedback loop, worker, GCU subagent) with shared fixtures and CI runner. (@bryanadenhq)
+- **Subagent lifecycle tests** -- Validate subagent spawn and completion flows. (@bryanadenhq)
+
+#### Documentation & Infrastructure
+
+- **MCP integration PRD** -- Product requirements for MCP server registry. (@TimothyZhang7)
+- **Skills registry PRD** -- Product requirements for skill registry system. (@bryanadenhq)
+- **Bounty program updates** -- Standard bounty issue template and updated contributor guide. (@bryanadenhq)
+- **Windows quickstart** -- Add default context limit for PowerShell setup. (@bryanadenhq)
+- **Remove deprecated files** -- Clean up `setup_mcp.py`, `verify_mcp.py`, `antigravity-setup.md`, and `setup-antigravity-mcp.sh`. (@bryanadenhq)
+
+---
+
+### Bug Fixes
+
+- Fix credential modal eating errors and banner staying open
+- Stop clearing `credentials_required` on modal close to prevent infinite loop
+- Share event bus so tool events are visible to parent graph
+- Use lazy %-formatting in subagent completion log to avoid f-string in logger
+- Anchor worker monitoring to queen's session ID on cold-restore
+- Update meta.json when loading workers
+- Generate worker MCP file correctly
+- Fix "Add tab" dropdown partially hidden when creating multiple agents
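The lazy %-formatting fix in the list above refers to a standard `logging` idiom: pass the template and its arguments separately so the string is only built if a handler actually emits the record. A minimal sketch follows; the logger name, the `log_completion` helper, and the message text are made up for illustration and are not taken from the framework.

```python
import logging

logger = logging.getLogger("subagent")


def log_completion(subagent_id: str, elapsed_s: float) -> None:
    # Lazy form: the template and arguments are stored on the LogRecord and
    # interpolated only when the record is formatted for output.
    logger.info("Subagent %s completed in %.2fs", subagent_id, elapsed_s)
    # The eager alternative, logger.info(f"Subagent {subagent_id} ..."),
    # builds the string even when INFO is disabled.
```

Besides skipping needless formatting work, keeping the template constant lets log aggregators group identical messages.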

+
+---
+
+### Community Contributors
+
+- **Prasoon Mahawar** (@prasoonmhwr) -- Fix UI overflow on agent tab dropdown
+- **Richard Tang** (@RichardTang-Aden) -- Worker MCP generation and meta.json fixes
+
+---
+
+### Upgrading
+
+```bash
+git pull origin main
+uv sync
+```
+
+The Playwright dependency is no longer required for GCU browser operations. Chrome must be installed on the host system.
+
+---
+
+## v0.7.0
+
+**Release Date:** March 5, 2026
+**Tag:** v0.7.0
+
+Session management refactor release.
+
+---
+
+## v0.5.1
+
 **Release Date:** February 18, 2026
 **Tag:** v0.5.1
 
-## The Hive Gets a Brain
+### The Hive Gets a Brain
 
 v0.5.1 is our most ambitious release yet. Hive agents can now **build other agents** -- the new Hive Coder meta-agent writes, tests, and fixes agent packages from natural language. The runtime grows multi-graph support so one session can orchestrate multiple agents simultaneously. The TUI gets a complete overhaul with an in-app agent picker, live streaming, and seamless escalation to the Coder. And we're now provider-agnostic: Claude Code subscriptions, OpenAI-compatible endpoints, and any LiteLLM-supported model work out of the box.
 
 ---
 
-## Highlights
+### Highlights
 
-### Hive Coder -- The Agent That Builds Agents
+#### Hive Coder -- The Agent That Builds Agents
 
 A native meta-agent that lives inside the framework at `core/framework/agents/hive_coder/`. Give it a natural-language specification and it produces a complete agent package -- goal definition, node prompts, edge routing, MCP tool wiring, tests, and all boilerplate files.
 

@@ -30,7 +162,7 @@ The Coder ships with:
 - **Coder Tools MCP server** -- file I/O, fuzzy-match editing, git snapshots, and sandboxed shell execution (`tools/coder_tools_server.py`)
 - **Test generation** -- structural tests for forever-alive agents that don't hang on `runner.run()`
 
-### Multi-Graph Agent Runtime
+#### Multi-Graph Agent Runtime
 
 `AgentRuntime` now supports loading, managing, and switching between multiple agent graphs within a single session. Six new lifecycle tools give agents (and the TUI) full control:
 
@@ -44,7 +176,7 @@ await runtime.add_graph("exports/deep_research_agent")
 
 The Hive Coder uses multi-graph internally -- when you escalate from a worker agent, the Coder loads as a separate graph while the worker stays alive in the background.
 
-### TUI Revamp
+#### TUI Revamp
 
 The Terminal UI gets a ground-up rebuild with five major additions:
 
@@ -54,7 +186,7 @@ The Terminal UI gets a ground-up rebuild with five major additions:
 - **PDF attachments** -- `/attach` and `/detach` commands with native OS file dialog (macOS, Linux, Windows)
 - **Multi-graph commands** -- `/graphs`, `/graph <id>`, `/load <path>`, `/unload <id>` for managing agent graphs in-session
 
-### Provider-Agnostic LLM Support
+#### Provider-Agnostic LLM Support
 
 Hive is no longer Anthropic-only. v0.5.1 adds first-class support for:
 
@@ -66,9 +198,9 @@ The quickstart script auto-detects Claude Code subscriptions and ZAI Code instal
 
 ---
 
-## What's New
+### What's New
 
-### Architecture & Runtime
+#### Architecture & Runtime
 
 - **Hive Coder meta-agent** -- Natural-language agent builder with reference docs, guardian watchdog, and `hive code` CLI command. (@TimothyZhang7)
 - **Multi-graph agent sessions** -- `add_graph`/`remove_graph` on AgentRuntime with 6 lifecycle tools (`load_agent`, `unload_agent`, `start_agent`, `restart_agent`, `list_agents`, `get_user_presence`). (@TimothyZhang7)
@@ -79,7 +211,7 @@ The quickstart script auto-detects Claude Code subscriptions and ZAI Code instal
 - **Pre-start confirmation prompt** -- Interactive prompt before agent execution allowing credential updates or abort. (@RichardTang-Aden)
 - **Event bus multi-graph support** -- `graph_id` on events, `filter_graph` on subscriptions, `ESCALATION_REQUESTED` event type, `exclude_own_graph` filter. (@TimothyZhang7)
 
-### TUI Improvements
+#### TUI Improvements
 
 - **In-app agent picker** (Ctrl+A) -- Tabbed modal for browsing agents with metadata badges (nodes, tools, sessions, tags). (@TimothyZhang7)
 - **Runtime-optional TUI startup** -- Launches without a pre-loaded agent, shows agent picker on startup. (@TimothyZhang7)
@@ -89,7 +221,7 @@ The quickstart script auto-detects Claude Code subscriptions and ZAI Code instal
 - **Multi-graph TUI commands** -- `/graphs`, `/graph <id>`, `/load <path>`, `/unload <id>`. (@TimothyZhang7)
 - **Agent Guardian watchdog** -- Event-driven monitor that catches secondary agent failures and triggers automatic remediation, with `--no-guardian` CLI flag. (@TimothyZhang7)
 
-### New Tool Integrations
+#### New Tool Integrations
 
 | Tool | Description | Contributor |
 | ---------------------- | ---------------------------------------------------------------------------------------------------------------------------------------------------------------------- | ------------------ |
@@ -99,7 +231,7 @@ The quickstart script auto-detects Claude Code subscriptions and ZAI Code instal
 | **Google Docs** | Document creation, reading, and editing with OAuth credential support | @haliaeetusvocifer |
 | **Gmail enhancements** | Expanded mail operations for inbox management | @bryanadenhq |
 
-### Infrastructure
+#### Infrastructure
 
 - **Default node type → `event_loop`** -- `NodeSpec.node_type` defaults to `"event_loop"` instead of `"llm_tool_use"`. (@TimothyZhang7)
 - **Default `max_node_visits` → 0 (unlimited)** -- Nodes default to unlimited visits, reducing friction for feedback loops and forever-alive agents. (@TimothyZhang7)
@@ -112,7 +244,7 @@ The quickstart script auto-detects Claude Code subscriptions and ZAI Code instal
 
 ---
 
-## Bug Fixes
+### Bug Fixes
 
 - Flush WIP accumulator outputs on cancel/failure so edge conditions see correct values on resume
 - Stall detection state preserved across resume (no more resets on checkpoint restore)
@@ -125,13 +257,13 @@ The quickstart script auto-detects Claude Code subscriptions and ZAI Code instal
 - Fix email agent version conflicts (@RichardTang-Aden)
 - Fix coder tool timeouts (120s for tests, 300s cap for commands)
 
-## Documentation
+### Documentation
 
 - Clarify installation and prevent root pip install misuse (@paarths-collab)
 
 ---
 
-## Agent Updates
+### Agent Updates
 
 - **Email Inbox Management** -- Consolidate `gmail_inbox_guardian` and `inbox_management` into a single unified agent with updated prompts and config. (@RichardTang-Aden, @bryanadenhq)
 - **Job Hunter** -- Updated node prompts, config, and agent metadata; added PDF resume selection. (@bryanadenhq)
@@ -141,7 +273,7 @@ The quickstart script auto-detects Claude Code subscriptions and ZAI Code instal
 
 ---
 
-## Breaking Changes
+### Breaking Changes
 
 - **Deprecated node types raise `RuntimeError`** -- `llm_tool_use`, `llm_generate`, `function`, `router`, `human_input` now fail instead of warning. Migrate to `event_loop`.
 - **`NodeSpec.node_type` defaults to `"event_loop"`** (was `"llm_tool_use"`)
@@ -150,7 +282,7 @@ The quickstart script auto-detects Claude Code subscriptions and ZAI Code instal
 
 ---
 
-## Community Contributors
+### Community Contributors
 
 A huge thank you to everyone who contributed to this release:
 
@@ -165,14 +297,14 @@ A huge thank you to everyone who contributed to this release:
 
 ---
 
-## Upgrading
+### Upgrading
 
 ```bash
 git pull origin main
 uv sync
 ```
 
-### Migration Guide
+#### Migration Guide
 
 If your agents use deprecated node types, update them:
 
@@ -196,12 +328,3 @@ hive code
 # Or from TUI -- press Ctrl+E to escalate
 hive tui
 ```
-
----
-
-## What's Next
-
-- **Agent-to-agent communication** -- one agent's output triggers another agent's entry point
-- **Cost visibility** -- detailed runtime log of LLM costs per node and per session
-- **Persistent webhook subscriptions** -- survive agent restarts without re-registering
-- **Remote agent deployment** -- run agents as long-lived services with HTTP APIs

+16

-5

@@ -4,7 +4,7 @@

|

||||

|

||||

Welcome to Aden Hive, an open-source AI agent framework built for developers who demand production-grade reliability, cross-platform support, and real-world performance. This guide will help you contribute effectively, whether you're fixing bugs, adding features, improving documentation, or building new tools.

|

||||

|

||||

Thank you for your interest in contributing! We're especially looking for help building tools, integrations ([check #2805](https://github.com/adenhq/hive/issues/2805)), and example agents for the framework.

|

||||

Thank you for your interest in contributing! We're especially looking for help building tools, integrations ([check #2805](https://github.com/aden-hive/hive/issues/2805)), and example agents for the framework.

|

||||

|

||||

---

|

||||

|

||||

@@ -121,9 +121,15 @@ uv sync

|

||||

6. Make your changes

|

||||

7. Run checks and tests:

|

||||

```bash

|

||||

make check # Lint and format checks (ruff check + ruff format --check)

|

||||

make check # Lint and format checks

|

||||

make test # Core tests

|

||||

```

|

||||

On Windows (no make), run directly:

|

||||

```powershell

|

||||

uv run ruff check core/ tools/

|

||||

uv run ruff format --check core/ tools/

|

||||

uv run pytest core/tests/

|

||||

```

|

||||

8. Commit your changes following our commit conventions

|

||||

9. Push to your fork and submit a Pull Request

|

||||

|

||||

@@ -222,8 +228,7 @@ else: # linux

|

||||

- **Node.js 18+** (optional, for frontend development)

|

||||

|

||||

> **Windows Users:**

|

||||

> If you are on native Windows, it is recommended to use **WSL (Windows Subsystem for Linux)**.

|

||||

> Alternatively, make sure to run PowerShell or Git Bash with Python 3.11+ installed, and disable "App Execution Aliases" in Windows settings.

|

||||

> Native Windows is supported. Use `.\quickstart.ps1` for setup and `.\hive.ps1` to run (PowerShell 5.1+). Disable "App Execution Aliases" in Windows settings to avoid Python path conflicts. WSL is also an option but not required.

|

||||

|

||||

> **Tip:** Installing Claude Code skills is optional for running existing agents, but required if you plan to **build new agents**.

|

||||

|

||||

@@ -385,6 +390,8 @@ Aden Hive supports **100+ LLM providers** via LiteLLM, giving users maximum flex

|

||||

|----------|--------|-------|
| **Anthropic** | Claude 3.5 Sonnet, Haiku, Opus | Default provider, best for reasoning |
| **OpenAI** | GPT-4, GPT-4 Turbo, GPT-4o | Function calling, vision |
| **OpenRouter** | Any OpenRouter catalog model | Uses `OPENROUTER_API_KEY` and `https://openrouter.ai/api/v1` |
| **Hive LLM** | `queen`, `kimi-2.5`, `GLM-5` | Uses `HIVE_API_KEY` and the Hive-managed endpoint |
| **Google** | Gemini 1.5 Pro, Flash | Long context windows |
| **DeepSeek** | DeepSeek V3 | Cost-effective, strong reasoning |
| **Mistral** | Mistral Large, Medium, Small | Open weights, EU hosting |

|

||||

@@ -410,6 +417,10 @@ DEFAULT_MODEL = "claude-haiku-4-5-20251001"

|

||||

- **Cost**: DeepSeek or Gemini Flash (budget-conscious)

|

||||

- **Privacy**: Ollama with local models (no data leaves server)

|

||||

|

||||

**Provider-Specific Notes**

|

||||

- **OpenRouter**: store `provider` as `openrouter`, use the raw OpenRouter model ID in `model` (for example `x-ai/grok-4.20-beta`), and use `OPENROUTER_API_KEY`

|

||||

- **Hive LLM**: store `provider` as `hive`, use Hive model names such as `queen`, `kimi-2.5`, or `GLM-5`, and use `HIVE_API_KEY`
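
The provider notes above can be sketched as a small helper that builds the `llm` section of a configuration file. This is a minimal illustration, not the framework's actual schema: the `provider` and `model` fields and the environment variable names come from the notes, while the `build_llm_config` helper and the `api_key_env_var` field are hypothetical.

```python
import json

# Environment variable names come from the provider notes above; the mapping
# itself and the "api_key_env_var" field are hypothetical, for illustration.
_API_KEY_ENV_VARS = {
    "openrouter": "OPENROUTER_API_KEY",
    "hive": "HIVE_API_KEY",
}


def build_llm_config(provider: str, model: str) -> dict:
    """Return an illustrative `llm` config section for the given provider."""
    if provider not in _API_KEY_ENV_VARS:
        raise ValueError(f"unsupported provider in this sketch: {provider!r}")
    return {
        "llm": {
            "provider": provider,
            # The model ID is passed through unchanged (raw OpenRouter ID or Hive name).
            "model": model,
            "api_key_env_var": _API_KEY_ENV_VARS[provider],
        }
    }


if __name__ == "__main__":
    print(json.dumps(build_llm_config("openrouter", "x-ai/grok-4.20-beta"), indent=2))
```

Keeping the raw model ID untouched mirrors the note that OpenRouter expects its own catalog identifier rather than a rewritten name.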

|

||||

|

||||

**For Development**

|

||||

- Use cheaper/faster models (Haiku, GPT-4o-mini)

|

||||

- Test with multiple providers to catch provider-specific issues

|

||||

@@ -421,7 +432,7 @@ DEFAULT_MODEL = "claude-haiku-4-5-20251001"

|

||||

2. **Add credential handling** in `core/framework/credentials/`

|

||||

3. **Add provider-specific configuration** in `core/framework/llm/`

|

||||

4. **Write tests** in `core/tests/test_llm_provider.py`

|

||||

5. **Update documentation** in `docs/llm_providers.md`

|

||||

5. **Update documentation** in `README.md`, `docs/configuration.md`, and any setup guides that mention provider configuration

|

||||

|

||||

**Example: Testing LLM Integration**

|

||||

|

||||

|

||||

@@ -1,24 +1,31 @@

|

||||

.PHONY: lint format check test install-hooks help frontend-install frontend-dev frontend-build

|

||||

.PHONY: lint format check test test-tools test-live test-all install-hooks help frontend-install frontend-dev frontend-build

|

||||

|

||||

# ── Ensure uv is findable in Git Bash on Windows ──────────────────────────────

|

||||

# uv installs to ~/.local/bin on Windows/Linux/macOS. Git Bash may not include

|

||||

# this in PATH by default, so we prepend it here.

|

||||

export PATH := $(HOME)/.local/bin:$(PATH)

|

||||

|

||||

# ── Targets ───────────────────────────────────────────────────────────────────

|

||||

|

||||

help: ## Show this help

|

||||

@grep -E '^[a-zA-Z_-]+:.*?## .*$$' $(MAKEFILE_LIST) | \

|

||||

awk 'BEGIN {FS = ":.*?## "}; {printf " \033[36m%-15s\033[0m %s\n", $$1, $$2}'

|

||||

|

||||

lint: ## Run ruff linter and formatter (with auto-fix)

|

||||

cd core && ruff check --fix .

|

||||

cd tools && ruff check --fix .

|

||||

cd core && ruff format .

|

||||

cd tools && ruff format .

|

||||

cd core && uv run ruff check --fix .

|

||||

cd tools && uv run ruff check --fix .

|

||||

cd core && uv run ruff format .

|

||||

cd tools && uv run ruff format .

|

||||

|

||||

format: ## Run ruff formatter

|

||||

cd core && ruff format .

|

||||

cd tools && ruff format .

|

||||

cd core && uv run ruff format .

|

||||

cd tools && uv run ruff format .

|

||||

|

||||

check: ## Run all checks without modifying files (CI-safe)

|

||||

cd core && ruff check .

|

||||

cd tools && ruff check .

|

||||

cd core && ruff format --check .

|

||||

cd tools && ruff format --check .

|

||||

cd core && uv run ruff check .

|

||||

cd tools && uv run ruff check .

|

||||

cd core && uv run ruff format --check .

|

||||

cd tools && uv run ruff format --check .

|

||||

|

||||

test: ## Run all tests (core + tools, excludes live)

|

||||

cd core && uv run python -m pytest tests/ -v

|

||||

@@ -46,4 +53,4 @@ frontend-dev: ## Start frontend dev server

|

||||

cd core/frontend && npm run dev

|

||||

|

||||

frontend-build: ## Build frontend for production

|

||||

cd core/frontend && npm run build

|

||||

cd core/frontend && npm run build

|

||||

@@ -27,7 +27,7 @@

|

||||

<img src="https://img.shields.io/badge/Multi--Agent-Systems-blue?style=flat-square" alt="Multi-Agent" />

|

||||

<img src="https://img.shields.io/badge/Headless-Development-purple?style=flat-square" alt="Headless" />

|

||||

<img src="https://img.shields.io/badge/Human--in--the--Loop-orange?style=flat-square" alt="HITL" />

|

||||

<img src="https://img.shields.io/badge/Production--Ready-red?style=flat-square" alt="Production" />

|

||||

<img src="https://img.shields.io/badge/Browser-Use-red?style=flat-square" alt="Browser Use" />

|

||||

</p>

|

||||

<p align="center">

|

||||

<img src="https://img.shields.io/badge/OpenAI-supported-412991?style=flat-square&logo=openai" alt="OpenAI" />

|

||||

@@ -37,15 +37,17 @@

|

||||

|

||||

## Overview

|

||||

|

||||

Build autonomous, reliable, self-improving AI agents without hardcoding workflows. Define your goal through conversation with hive coding agent(queen), and the framework generates a node graph with dynamically created connection code. When things break, the framework captures failure data, evolves the agent through the coding agent, and redeploys. Built-in human-in-the-loop nodes, credential management, and real-time monitoring give you control without sacrificing adaptability.

|

||||

Generate a swarm of worker agents with a coding agent (the queen) that controls them. Define your goal through conversation with the Hive queen, and the framework generates a node graph with dynamically created connection code. When things break, the framework captures failure data, evolves the agent through the coding agent, and redeploys. Built-in human-in-the-loop nodes, browser use, credential management, and real-time monitoring give you control without sacrificing adaptability.

|

||||

|

||||

Visit [adenhq.com](https://adenhq.com) for complete documentation, examples, and guides.

|

||||

|

||||

[](https://www.youtube.com/watch?v=XDOG9fOaLjU)

|

||||

|

||||

https://github.com/user-attachments/assets/bf10edc3-06ba-48b6-98ba-d069b15fb69d

|

||||

|

||||

|

||||

## Who Is Hive For?

|

||||

|

||||

Hive is designed for developers and teams who want to build **production-grade AI agents** without manually wiring complex workflows.

|

||||

Hive is designed for developers and teams who want to build many **autonomous AI agents** fast without manually wiring complex workflows.

|

||||

|

||||

Hive is a good fit if you:

|

||||

|

||||

@@ -73,7 +75,7 @@ Use Hive when you need:

|

||||

- **[Self-Hosting Guide](https://docs.adenhq.com/getting-started/quickstart)** - Deploy Hive on your infrastructure

|

||||

- **[Changelog](https://github.com/aden-hive/hive/releases)** - Latest updates and releases

|

||||

- **[Roadmap](docs/roadmap.md)** - Upcoming features and plans

|

||||

- **[Report Issues](https://github.com/adenhq/hive/issues)** - Bug reports and feature requests

|

||||

- **[Report Issues](https://github.com/aden-hive/hive/issues)** - Bug reports and feature requests

|

||||

- **[Contributing](CONTRIBUTING.md)** - How to contribute and submit PRs

|

||||

|

||||

## Quick Start

|

||||

@@ -84,7 +86,7 @@ Use Hive when you need:

|

||||

- An LLM provider that powers the agents

|

||||

- **ripgrep (optional, recommended on Windows):** The `search_files` tool uses ripgrep for faster file search. If not installed, a Python fallback is used. On Windows: `winget install BurntSushi.ripgrep` or `scoop install ripgrep`

|

||||

|

||||

> **Note for Windows Users:** It is strongly recommended to use **WSL (Windows Subsystem for Linux)** or **Git Bash** to run this framework. Some core automation scripts may not execute correctly in standard Command Prompt or PowerShell.

|

||||

> **Windows Users:** Native Windows is supported via `quickstart.ps1` and `hive.ps1`. Run these in PowerShell 5.1+. WSL is also an option but not required.

|

||||

|

||||

### Installation

|

||||

|

||||

@@ -108,18 +110,16 @@ This sets up:

|

||||

- **framework** - Core agent runtime and graph executor (in `core/.venv`)

|

||||

- **aden_tools** - MCP tools for agent capabilities (in `tools/.venv`)

|

||||

- **credential store** - Encrypted API key storage (`~/.hive/credentials`)

|

||||

- **LLM provider** - Interactive default model configuration

|

||||

- **LLM provider** - Interactive default model configuration, including Hive LLM and OpenRouter

|

||||

- All required Python dependencies with `uv`

|

||||

|

||||

- Finally, it will open the Hive interface in your browser

|

||||

|

||||

> **Tip:** To reopen the dashboard later, run `hive open` from the project directory.

|

||||

|

||||

<img width="2500" height="1214" alt="home-screen" src="https://github.com/user-attachments/assets/134d897f-5e75-4874-b00b-e0505f6b45c4" />

|

||||

|

||||

### Build Your First Agent

|

||||

|

||||

Type the agent you want to build in the home input box

|

||||

Type the agent you want to build in the home input box. The queen is going to ask you questions and work out a solution with you.

|

||||

|

||||

<img width="2500" height="1214" alt="Image" src="https://github.com/user-attachments/assets/1ce19141-a78b-46f5-8d64-dbf987e048f4" />

|

||||

|

||||

@@ -131,7 +131,7 @@ Click "Try a sample agent" and check the templates. You can run a template direc

|

||||

|

||||

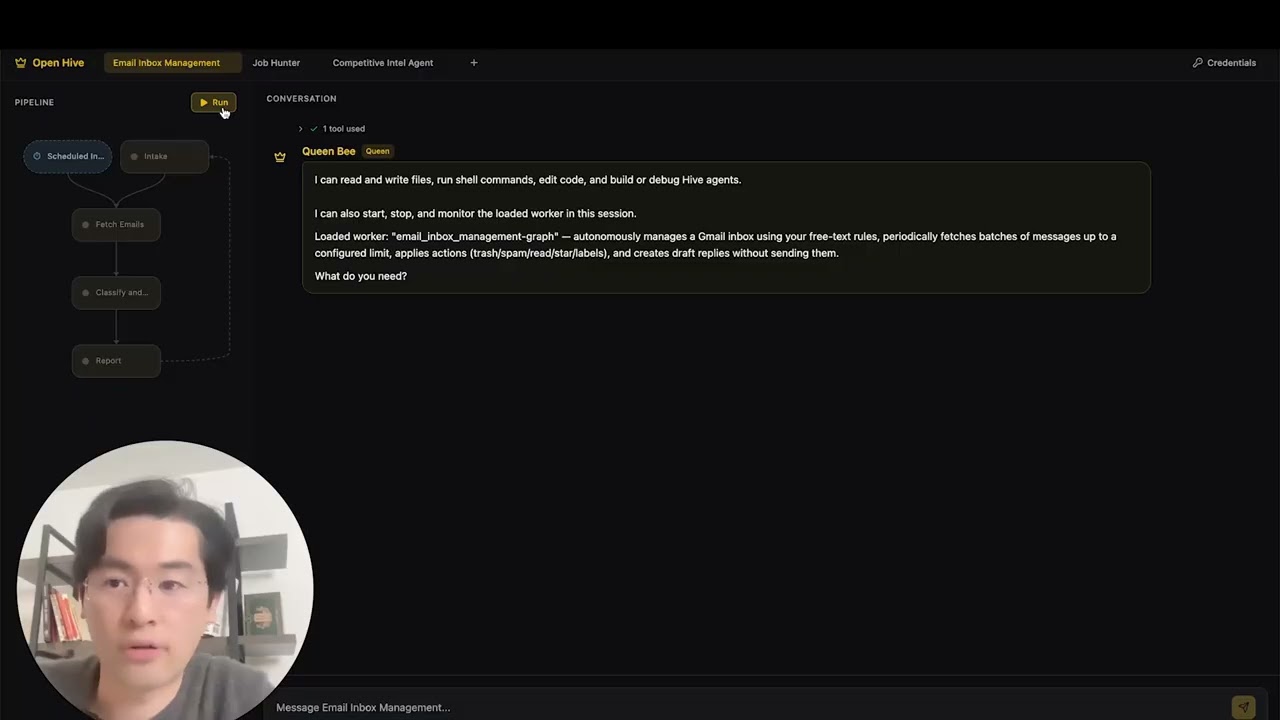

Now you can run an agent by selecting the agent (either an existing agent or example agent). You can click the Run button on the top left, or talk to the queen agent and it can run the agent for you.

|

||||

|

||||

<img width="2500" height="1214" alt="Image" src="https://github.com/user-attachments/assets/71c38206-2ad5-49aa-bde8-6698d0bc55f5" />

|

||||

<img width="2549" height="1174" alt="Screenshot 2026-03-12 at 9 27 36 PM" src="https://github.com/user-attachments/assets/7c7d30fa-9ceb-4c23-95af-b1caa405547d" />

|

||||

|

||||

## Features

|

||||

|

||||

@@ -143,14 +143,13 @@ Now you can run an agent by selecting the agent (either an existing agent or exa

|

||||

- **SDK-Wrapped Nodes** - Every node gets shared memory, local RLM memory, monitoring, tools, and LLM access out of the box

|

||||

- **[Human-in-the-Loop](docs/key_concepts/graph.md#human-in-the-loop)** - Intervention nodes that pause execution for human input with configurable timeouts and escalation

|

||||

- **Real-time Observability** - WebSocket streaming for live monitoring of agent execution, decisions, and node-to-node communication

|

||||

- **Production-Ready** - Self-hostable, built for scale and reliability

|

||||

|

||||

## Integration

|

||||

|

||||

<a href="https://github.com/aden-hive/hive/tree/main/tools/src/aden_tools/tools"><img width="100%" alt="Integration" src="https://github.com/user-attachments/assets/a1573f93-cf02-4bb8-b3d5-b305b05b1e51" /></a>

|

||||

Hive is built to be model-agnostic and system-agnostic.

|

||||

|

||||

- **LLM flexibility** - Hive Framework is designed to support various types of LLMs, including hosted and local models through LiteLLM-compatible providers.

|

||||

- **LLM flexibility** - Hive Framework supports Anthropic, OpenAI, OpenRouter, Hive LLM, and other hosted or local models through LiteLLM-compatible providers.

|

||||

- **Business system connectivity** - Hive Framework is designed to connect to all kinds of business systems as tools, such as CRM, support, messaging, data, file, and internal APIs via MCP.

|

||||

|

||||

## Why Aden

|

||||

@@ -378,7 +377,7 @@ This project is licensed under the Apache License 2.0 - see the [LICENSE](LICENS

|

||||

|

||||

**Q: What LLM providers does Hive support?**

|

||||

|

||||

Hive supports 100+ LLM providers through LiteLLM integration, including OpenAI (GPT-4, GPT-4o), Anthropic (Claude models), Google Gemini, DeepSeek, Mistral, Groq, and many more. Simply set the appropriate API key environment variable and specify the model name. We recommend using Claude, GLM and Gemini as they have the best performance.

|

||||

Hive supports 100+ LLM providers through LiteLLM integration, including OpenAI (GPT-4, GPT-4o), Anthropic (Claude models), Google Gemini, DeepSeek, Mistral, Groq, OpenRouter, and Hive LLM. Simply set the appropriate API key environment variable and specify the model name. See [docs/configuration.md](docs/configuration.md) for provider-specific configuration examples.

|

||||

|

||||

**Q: Can I use Hive with local AI models like Ollama?**

|

||||

|

||||

@@ -392,10 +391,6 @@ Hive generates your entire agent system from natural language goals using a codi

|

||||

|

||||

Yes, Hive is fully open-source under the Apache License 2.0. We actively encourage community contributions and collaboration.

|

||||

|

||||

**Q: Can Hive handle complex, production-scale use cases?**

|

||||

|

||||

Yes. Hive is explicitly designed for production environments with features like automatic failure recovery, real-time observability, cost controls, and horizontal scaling support. The framework handles both simple automations and complex multi-agent workflows.

|

||||

|

||||

**Q: Does Hive support human-in-the-loop workflows?**

|

||||

|

||||

Yes, Hive fully supports [human-in-the-loop](docs/key_concepts/graph.md#human-in-the-loop) workflows through intervention nodes that pause execution for human input. These include configurable timeouts and escalation policies, allowing seamless collaboration between human experts and AI agents.

|

||||

@@ -420,6 +415,16 @@ Visit [docs.adenhq.com](https://docs.adenhq.com/) for complete guides, API refer

|

||||

|

||||

Contributions are welcome! Fork the repository, create your feature branch, implement your changes, and submit a pull request. See [CONTRIBUTING.md](CONTRIBUTING.md) for detailed guidelines.

|

||||

|

||||

## Star History

|

||||

|

||||

<a href="https://star-history.com/#aden-hive/hive&Date">

|

||||

<picture>

|

||||

<source media="(prefers-color-scheme: dark)" srcset="https://api.star-history.com/svg?repos=aden-hive/hive&type=Date&theme=dark" />

|

||||

<source media="(prefers-color-scheme: light)" srcset="https://api.star-history.com/svg?repos=aden-hive/hive&type=Date" />

|

||||

<img alt="Star History Chart" src="https://api.star-history.com/svg?repos=aden-hive/hive&type=Date" />

|

||||

</picture>

|

||||

</a>

|

||||

|

||||

---

|

||||

|

||||

<p align="center">

|

||||

|

||||

@@ -1,31 +0,0 @@

|

||||

perf: reduce subprocess spawning in quickstart scripts (#4427)

## Problem
Windows process creation (CreateProcess) is 10-100x slower than Linux fork/exec.
The quickstart scripts were spawning 4+ separate `uv run python -c "import X"`
processes to verify imports, adding ~600ms overhead on Windows.

## Solution
Consolidated all import checks into a single batch script that checks multiple
modules in one subprocess call, reducing spawn overhead by ~75%.
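
The batching idea can be sketched as follows. This is a minimal illustration and not the contents of `scripts/check_requirements.py`; the function name `check_imports_in_subprocess` and the JSON output format are assumptions. It spawns one child interpreter that tries every import and reports the results together, instead of one process per module.

```python
import json
import subprocess
import sys

# Code run inside the single child interpreter: import every module named on
# the command line and print one JSON object mapping name -> error (or null).
_CHILD_CODE = (
    "import importlib, json, sys\n"
    "out = {}\n"
    "for name in sys.argv[1:]:\n"
    "    try:\n"
    "        importlib.import_module(name)\n"
    "        out[name] = None\n"
    "    except Exception as exc:\n"
    "        out[name] = f'{type(exc).__name__}: {exc}'\n"
    "print(json.dumps(out))\n"
)


def check_imports_in_subprocess(modules, python=sys.executable):
    """Check all modules with a single subprocess call (hypothetical helper)."""
    proc = subprocess.run(
        [python, "-c", _CHILD_CODE, *modules],
        capture_output=True,
        text=True,
        check=True,
    )
    return json.loads(proc.stdout)


if __name__ == "__main__":
    for name, error in check_imports_in_subprocess(["json", "no_such_module_xyz"]).items():
        print(f"{name}: {'ok' if error is None else error}")
```

Because failures are collected per module rather than aborting on the first one, a single run can still report every missing import.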

|

||||

|

||||

## Changes
- **New**: `scripts/check_requirements.py` - Batched import checker
- **New**: `scripts/test_check_requirements.py` - Test suite
- **New**: `scripts/benchmark_quickstart.ps1` - Performance benchmark tool
- **Modified**: `quickstart.ps1` - Updated import verification (2 sections)
- **Modified**: `quickstart.sh` - Updated import verification

## Performance Impact
**Benchmark results on Windows:**
- Before: ~19.8 seconds for import checks
- After: ~4.9 seconds for import checks
- **Improvement: 14.9 seconds saved (75.2% faster)**

## Testing
- ✅ All functional tests pass (`scripts/test_check_requirements.py`)
- ✅ Quickstart scripts work correctly on Windows
- ✅ Error handling verified (invalid imports reported correctly)
- ✅ Performance benchmark confirms 75%+ improvement

Fixes #4427

|

||||

@@ -1,27 +0,0 @@

|

||||

# Identity mapping: GitHub username -> Discord ID
#
# This file links GitHub accounts to Discord accounts for the
# Integration Bounty Program. When a bounty PR is merged, the
# GitHub Action uses this file to ping the contributor on Discord.
#
# HOW TO ADD YOURSELF:
# Open a "Link Discord Account" issue:
# https://github.com/aden-hive/hive/issues/new?template=link-discord.yml
# A GitHub Action will automatically add your entry here.
#
# To find your Discord ID:
# 1. Open Discord Settings > Advanced > Enable Developer Mode
# 2. Right-click your name > Copy User ID
#
# Format:
# - github: your-github-username
#   discord: "your-discord-id" # quotes required (it's a number)
#   name: Your Display Name # optional

contributors:
  # - github: example-user
  #   discord: "123456789012345678"
  #   name: Example User
  - github: TimothyZhang7
    discord: "408460790061072384"
    name: Timothy@Aden

|

||||

@@ -0,0 +1,583 @@

|

||||

#!/usr/bin/env python3
"""Antigravity authentication CLI.

Implements OAuth2 flow for Google's Antigravity Code Assist gateway.
Credentials are stored in ~/.hive/antigravity-accounts.json.

Usage:
    python -m antigravity_auth auth account add
    python -m antigravity_auth auth account list
    python -m antigravity_auth auth account remove <email>
"""

from __future__ import annotations

import argparse
import json
import logging
import os
import secrets
import socket
import sys
import time
import urllib.parse
import urllib.request
import webbrowser
from http.server import BaseHTTPRequestHandler, HTTPServer
from pathlib import Path
from typing import Any

logging.basicConfig(level=logging.INFO, format="%(message)s")
logger = logging.getLogger(__name__)

# OAuth endpoints
_OAUTH_AUTH_URL = "https://accounts.google.com/o/oauth2/v2/auth"
_OAUTH_TOKEN_URL = "https://oauth2.googleapis.com/token"

# Scopes for Antigravity/Cloud Code Assist
_OAUTH_SCOPES = [
    "https://www.googleapis.com/auth/cloud-platform",
    "https://www.googleapis.com/auth/userinfo.email",
    "https://www.googleapis.com/auth/userinfo.profile",
]

# Credentials file path in ~/.hive/
_ACCOUNTS_FILE = Path.home() / ".hive" / "antigravity-accounts.json"

# Default project ID
_DEFAULT_PROJECT_ID = "rising-fact-p41fc"
_DEFAULT_REDIRECT_PORT = 51121

# OAuth credentials fetched from the opencode-antigravity-auth project.
# This project reverse-engineered and published the public OAuth credentials
# for Google's Antigravity/Cloud Code Assist API.
# Source: https://github.com/NoeFabris/opencode-antigravity-auth
_CREDENTIALS_URL = (
    "https://raw.githubusercontent.com/NoeFabris/opencode-antigravity-auth/dev/src/constants.ts"
)

# Cached credentials fetched from public source
_cached_client_id: str | None = None
_cached_client_secret: str | None = None

|

||||

|

||||

|

||||

def _fetch_credentials_from_public_source() -> tuple[str | None, str | None]:
    """Fetch OAuth client ID and secret from the public npm package source on GitHub."""
    global _cached_client_id, _cached_client_secret
    if _cached_client_id and _cached_client_secret:
        return _cached_client_id, _cached_client_secret

    try:
        req = urllib.request.Request(
            _CREDENTIALS_URL, headers={"User-Agent": "Hive-Antigravity-Auth/1.0"}
        )
        with urllib.request.urlopen(req, timeout=10) as resp:
            content = resp.read().decode("utf-8")
            import re

            id_match = re.search(r'ANTIGRAVITY_CLIENT_ID\s*=\s*"([^"]+)"', content)
            secret_match = re.search(r'ANTIGRAVITY_CLIENT_SECRET\s*=\s*"([^"]+)"', content)
            if id_match:
                _cached_client_id = id_match.group(1)
            if secret_match:
                _cached_client_secret = secret_match.group(1)
            return _cached_client_id, _cached_client_secret
    except Exception as e:
        logger.debug(f"Failed to fetch credentials from public source: {e}")
        return None, None

|

||||

|

||||

|

||||

def get_client_id() -> str:
    """Get OAuth client ID from env, config, or public source."""
    env_id = os.environ.get("ANTIGRAVITY_CLIENT_ID")
    if env_id:
        return env_id

    # Try hive config
    hive_cfg = Path.home() / ".hive" / "configuration.json"
    if hive_cfg.exists():
        try:
            with open(hive_cfg) as f:
                cfg = json.load(f)
            cfg_id = cfg.get("llm", {}).get("antigravity_client_id")
            if cfg_id:
                return cfg_id
        except Exception:
            pass

    # Fetch from public source
    client_id, _ = _fetch_credentials_from_public_source()
    if client_id:
        return client_id

    raise RuntimeError("Could not obtain Antigravity OAuth client ID")

|

||||

|

||||

|

||||

def get_client_secret() -> str | None:
    """Get OAuth client secret from env, config, or public source."""
    secret = os.environ.get("ANTIGRAVITY_CLIENT_SECRET")
    if secret:
        return secret

    # Try to read from hive config
    hive_cfg = Path.home() / ".hive" / "configuration.json"
    if hive_cfg.exists():
        try:
            with open(hive_cfg) as f:
                cfg = json.load(f)
            secret = cfg.get("llm", {}).get("antigravity_client_secret")
            if secret:
                return secret
        except Exception:
            pass

    # Fetch from public source (npm package on GitHub)
    _, secret = _fetch_credentials_from_public_source()
    return secret

|

||||

|

||||

|

||||

def find_free_port() -> int:
    """Find an available local port."""
    with socket.socket(socket.AF_INET, socket.SOCK_STREAM) as s:
        s.bind(("", 0))
        s.listen(1)
        return s.getsockname()[1]

|

||||

|

||||

|

||||

class OAuthCallbackHandler(BaseHTTPRequestHandler):
    """Handle OAuth callback from browser."""

    auth_code: str | None = None
    state: str | None = None
    error: str | None = None

    def log_message(self, format: str, *args: Any) -> None:
        pass  # Suppress default logging

    def do_GET(self) -> None:
        parsed = urllib.parse.urlparse(self.path)

        if parsed.path == "/oauth-callback":
            query = urllib.parse.parse_qs(parsed.query)

            if "error" in query:
                # Store on the class, like auth_code and state: a new handler
                # instance is created per request, so an instance attribute
                # would be lost once the request finishes.
                OAuthCallbackHandler.error = query["error"][0]
                self._send_response("Authentication failed. You can close this window.")
                return

            if "code" in query and "state" in query:
                OAuthCallbackHandler.auth_code = query["code"][0]
                OAuthCallbackHandler.state = query["state"][0]
                self._send_response(
                    "Authentication successful! You can close this window "
                    "and return to the terminal."
                )
                return

        self._send_response("Waiting for authentication...")

    def _send_response(self, message: str) -> None:
        self.send_response(200)
        self.send_header("Content-Type", "text/html")
        self.end_headers()
        html = f"""<!DOCTYPE html>
<html>
<head><title>Antigravity Auth</title></head>
<body style="font-family: system-ui; display: flex; align-items: center;
justify-content: center; height: 100vh; margin: 0; background: #1a1a2e;
color: #eee;">
<div style="text-align: center;">
<h2>{message}</h2>
</div>
</body>
</html>"""

|

||||