Compare commits

17 Commits

| Author | SHA1 | Date |
|---|---|---|
| | 6b506a1c08 | |
| | 0c9f4fa97e | |
| | 1c9b09fb78 | |
| | 9fb14f23d2 | |
| | 4795dc4f68 | |
| | acf0f804c5 | |
| | 4e2951854b | |
| | 80dfb429d7 | |
| | 9c0ba77e22 | |
| | 46b4651073 | |
| | 03842353e4 | |
| | 22bb07f00e | |
| | 660f883197 | |
| | 988de80b66 | |
| | dc6aa226ee | |
| | a7b6b080ab | |
| | 9202cbd4d4 | |

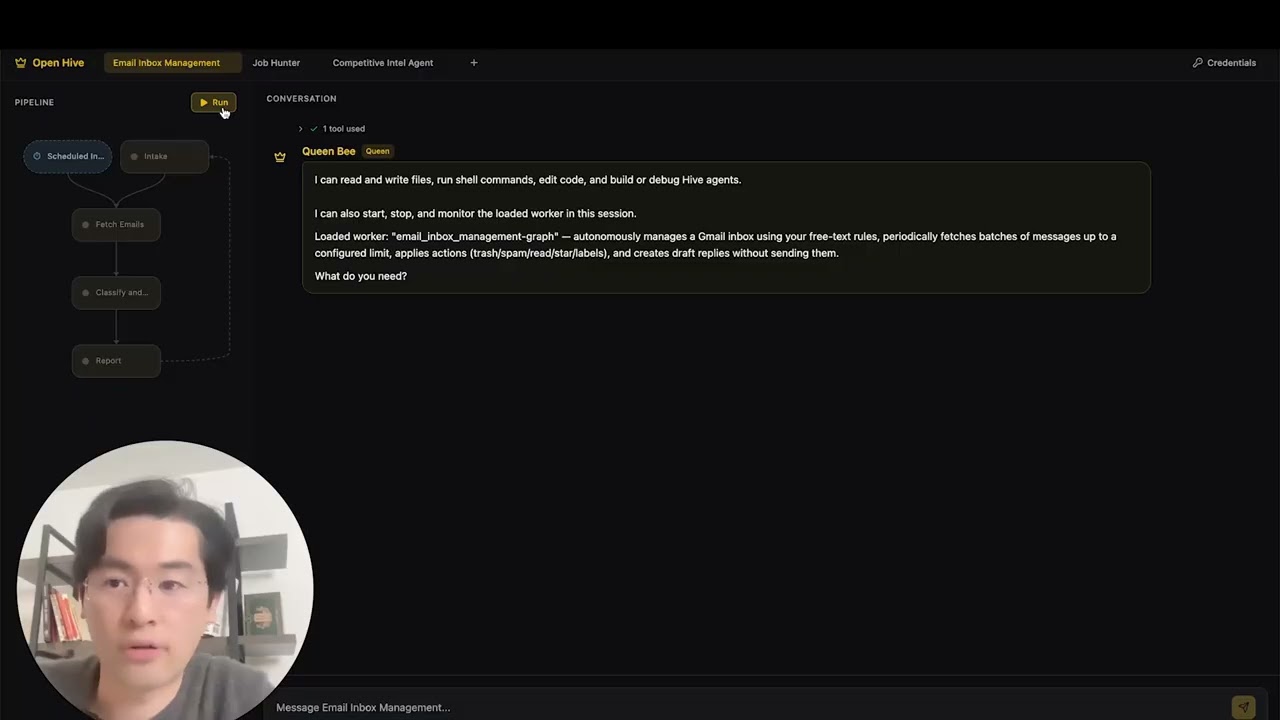

@@ -41,7 +41,8 @@ Generate a swarm of worker agents with a coding agent(queen) that control them.

Visit [adenhq.com](https://adenhq.com) for complete documentation, examples, and guides.

[](https://www.youtube.com/watch?v=XDOG9fOaLjU)
https://github.com/user-attachments/assets/aad3a035-e7b3-4cac-b13d-4a83c7002c30


## Who Is Hive For?

@@ -1,31 +0,0 @@

perf: reduce subprocess spawning in quickstart scripts (#4427)

## Problem
Windows process creation (CreateProcess) is 10-100x slower than Linux fork/exec.
The quickstart scripts were spawning 4+ separate `uv run python -c "import X"`
processes to verify imports, adding ~600ms overhead on Windows.

## Solution
Consolidated all import checks into a single batch script that checks multiple
modules in one subprocess call, reducing spawn overhead by ~75%.

## Changes
- **New**: `scripts/check_requirements.py` - Batched import checker
- **New**: `scripts/test_check_requirements.py` - Test suite
- **New**: `scripts/benchmark_quickstart.ps1` - Performance benchmark tool
- **Modified**: `quickstart.ps1` - Updated import verification (2 sections)
- **Modified**: `quickstart.sh` - Updated import verification

## Performance Impact
**Benchmark results on Windows:**
- Before: ~19.8 seconds for import checks
- After: ~4.9 seconds for import checks
- **Improvement: 14.9 seconds saved (75.2% faster)**

## Testing
- ✅ All functional tests pass (`scripts/test_check_requirements.py`)
- ✅ Quickstart scripts work correctly on Windows
- ✅ Error handling verified (invalid imports reported correctly)
- ✅ Performance benchmark confirms 75%+ improvement

Fixes #4427
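
The batching approach this commit message describes (one interpreter start-up that checks several modules, instead of one `uv run python -c "import X"` process per module) could look roughly like the sketch below. It is an illustration only, not the contents of `scripts/check_requirements.py`; the names `check_imports` and `main` and the JSON report shape are assumptions.

```python
import importlib
import json


def check_imports(module_names: list[str]) -> dict[str, str]:
    """Import each module in this process and return {name: "ok" or error text}."""
    results: dict[str, str] = {}
    for name in module_names:
        try:
            importlib.import_module(name)
            results[name] = "ok"
        except Exception as exc:  # ImportError, or any error raised while importing
            results[name] = f"{type(exc).__name__}: {exc}"
    return results


def main(argv: list[str]) -> int:
    """Check every module named in argv, print one JSON report, return an exit code."""
    report = check_imports(argv)
    print(json.dumps(report))
    return 0 if all(status == "ok" for status in report.values()) else 1
```

Under these assumptions a quickstart script would start the checker once, pass every module name as an argument, and read a single report, so the process-creation cost is paid one time rather than once per module.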

@@ -51,7 +51,13 @@ def get_preferred_model() -> str:

     """Return the user's preferred LLM model string (e.g. 'anthropic/claude-sonnet-4-20250514')."""
     llm = get_hive_config().get("llm", {})
     if llm.get("provider") and llm.get("model"):
-        return f"{llm['provider']}/{llm['model']}"
+        provider = str(llm["provider"])
+        model = str(llm["model"]).strip()
+        # OpenRouter quickstart stores raw model IDs; tolerate pasted "openrouter/<id>" too.
+        if provider.lower() == "openrouter" and model.lower().startswith("openrouter/"):
+            model = model[len("openrouter/") :]
+        if model:
+            return f"{provider}/{model}"
     return "anthropic/claude-sonnet-4-20250514"
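
The effect of the prefix handling in this hunk can be tried in isolation with a small stand-alone function. This is a sketch under assumptions: `preferred_model` is a hypothetical helper that takes the `llm` mapping directly instead of calling `get_hive_config()`, and the model IDs used when calling it are placeholders.

```python
DEFAULT_MODEL = "anthropic/claude-sonnet-4-20250514"


def preferred_model(llm: dict) -> str:
    """Build a 'provider/model' string from an llm config mapping (hypothetical helper)."""
    provider = str(llm.get("provider") or "")
    model = str(llm.get("model") or "").strip()
    if provider and model:
        prefix = "openrouter/"
        # Tolerate a pasted "openrouter/<id>" when the provider is already OpenRouter.
        if provider.lower() == "openrouter" and model.lower().startswith(prefix):
            model = model[len(prefix):]
        if model:
            return f"{provider}/{model}"
    # Missing or empty values fall back to the default model string.
    return DEFAULT_MODEL
```

With this shape, a stored ID of `openrouter/vendor/some-model` and a raw ID of `vendor/some-model` both resolve to the same `openrouter/vendor/some-model` string, which is the duplication the added lines appear intended to avoid.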

@@ -61,6 +67,7 @@ def get_max_tokens() -> int:

 DEFAULT_MAX_CONTEXT_TOKENS = 32_000
+OPENROUTER_API_BASE = "https://openrouter.ai/api/v1"


 def get_max_context_tokens() -> int:

@@ -142,7 +149,11 @@ def get_api_base() -> str | None:

     if llm.get("use_kimi_code_subscription"):
         # Kimi Code uses an Anthropic-compatible endpoint (no /v1 suffix).
         return "https://api.kimi.com/coding"
-    return llm.get("api_base")
+    if llm.get("api_base"):
+        return llm["api_base"]
+    if str(llm.get("provider", "")).lower() == "openrouter":
+        return OPENROUTER_API_BASE
+    return None


 def get_llm_extra_kwargs() -> dict[str, Any]:
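
The precedence visible in this hunk (subscription endpoint first, then an explicit `api_base`, then the OpenRouter default) can be sketched as a pure function. `resolve_api_base` is a hypothetical name, and the sketch covers only the branches shown in the hunk; the real `get_api_base()` may check other settings earlier in the function.

```python
from typing import Optional

OPENROUTER_API_BASE = "https://openrouter.ai/api/v1"
KIMI_CODING_API_BASE = "https://api.kimi.com/coding"


def resolve_api_base(llm: dict) -> Optional[str]:
    """Pick an API base URL from an llm config mapping (branches from the hunk only)."""
    if llm.get("use_kimi_code_subscription"):
        return KIMI_CODING_API_BASE
    if llm.get("api_base"):
        # An explicitly configured base always wins over provider defaults.
        return llm["api_base"]
    if str(llm.get("provider", "")).lower() == "openrouter":
        return OPENROUTER_API_BASE
    return None
```

Checking the explicit `api_base` before the provider default keeps self-hosted or proxied OpenRouter-compatible endpoints working without extra configuration.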

@@ -51,6 +51,16 @@ def ensure_credential_key_env() -> None:

             if found and value:
                 os.environ[var_name] = value
                 logger.debug("Loaded %s from shell config", var_name)
+        # Also load the currently configured LLM env var even if it's not in CREDENTIAL_SPECS.
+        # This keeps quickstart-written keys available to fresh processes on Unix shells.
+        from framework.config import get_hive_config
+
+        llm_env_var = str(get_hive_config().get("llm", {}).get("api_key_env_var", "")).strip()
+        if llm_env_var and not os.environ.get(llm_env_var):
+            found, value = check_env_var_in_shell_config(llm_env_var)
+            if found and value:
+                os.environ[llm_env_var] = value
+                logger.debug("Loaded configured LLM env var %s from shell config", llm_env_var)
     except ImportError:
         pass

@@ -822,6 +822,7 @@ class EventLoopNode(NodeProtocol):

 )
 _stream_retry_count = 0
 _turn_cancelled = False
+_llm_turn_failed_waiting_input = False
 while True:
     try:
         (

@@ -941,6 +942,16 @@ class EventLoopNode(NodeProtocol):

 # can retry or adjust the request.
 if ctx.node_spec.client_facing:
     error_msg = f"LLM call failed: {e}"
+    _guardrail_phrase = (
+        "no endpoints available matching your guardrail restrictions "
+        "and data policy"
+    )
+    if _guardrail_phrase in str(e).lower():
+        error_msg += (
+            " OpenRouter blocked this model under current privacy settings. "
+            "Update https://openrouter.ai/settings/privacy or choose another "
+            "OpenRouter model."
+        )
     logger.error(
         "[%s] iter=%d: %s — waiting for user input",
         node_id,

@@ -962,6 +973,7 @@ class EventLoopNode(NodeProtocol):

         f"[Error: {error_msg}. Please try again.]"
     )
     await self._await_user_input(ctx, prompt="")
+    _llm_turn_failed_waiting_input = True
     break  # exit retry loop, continue outer iteration

 # Non-client-facing: crash as before

@@ -1012,6 +1024,11 @@ class EventLoopNode(NodeProtocol):

     await self._await_user_input(ctx, prompt="")
     continue  # back to top of for-iteration loop

+# Client-facing non-transient LLM failures wait for user input and then
+# continue the outer loop without touching per-turn token vars.
+if _llm_turn_failed_waiting_input:
+    continue
+
 # 6e'. Feed actual API token count back for accurate estimation
 turn_input = turn_tokens.get("input", 0)
 if turn_input > 0:

@@ -2298,7 +2315,6 @@ class EventLoopNode(NodeProtocol):

 elif tc.tool_name == "ask_user":
     # --- Framework-level ask_user handling ---
-    user_input_requested = True
     ask_user_prompt = tc.tool_input.get("question", "")
     raw_options = tc.tool_input.get("options", None)
     # Defensive: ensure options is a list of strings.

@@ -2335,6 +2351,8 @@ class EventLoopNode(NodeProtocol):

         user_input_requested = False
         continue

+    user_input_requested = True
+
     # Free-form ask_user (no options): stream the question
     # text as a chat message so the user can see it. When
     # options are present the QuestionWidget shows the

@@ -2360,7 +2378,6 @@ class EventLoopNode(NodeProtocol):

 elif tc.tool_name == "ask_user_multiple":
     # --- Framework-level ask_user_multiple ---
-    user_input_requested = True
     raw_questions = tc.tool_input.get("questions", [])
     if not isinstance(raw_questions, list) or len(raw_questions) < 2:
         result = ToolResult(

@@ -2398,6 +2415,8 @@ class EventLoopNode(NodeProtocol):

         }
     )

+    user_input_requested = True
+
     # Store as multi-question prompt/options for
     # the event emission path
     ask_user_prompt = ""

@@ -2708,7 +2727,11 @@ class EventLoopNode(NodeProtocol):

     content=result.content,
     is_error=result.is_error,
 )
-if tc.tool_name in ("ask_user", "ask_user_multiple"):
+if (
+    tc.tool_name in ("ask_user", "ask_user_multiple")
+    and user_input_requested
+    and not result.is_error
+):
     # Defer tool_call_completed until after user responds
     self._deferred_tool_complete = {
         "stream_id": stream_id,

+647

-12

@@ -7,9 +7,13 @@ Groq, and local models.

 See: https://docs.litellm.ai/docs/providers
 """

+import ast
 import asyncio
+import hashlib
 import json
 import logging
+import os
+import re
 import time
 from collections.abc import AsyncIterator
 from datetime import datetime

@@ -44,7 +48,10 @@ def _patch_litellm_anthropic_oauth() -> None:

     """
     try:
         from litellm.llms.anthropic.common_utils import AnthropicModelInfo
-        from litellm.types.llms.anthropic import ANTHROPIC_OAUTH_TOKEN_PREFIX
+        from litellm.types.llms.anthropic import (
+            ANTHROPIC_OAUTH_BETA_HEADER,
+            ANTHROPIC_OAUTH_TOKEN_PREFIX,
+        )
     except ImportError:
         logger.warning(
             "Could not apply litellm Anthropic OAuth patch — litellm internals may have "

@@ -69,9 +76,27 @@ def _patch_litellm_anthropic_oauth() -> None:

             api_key=api_key,
             api_base=api_base,
         )
+        # Check both authorization header and x-api-key for OAuth tokens.
+        # litellm's optionally_handle_anthropic_oauth only checks headers["authorization"],
+        # but hive passes OAuth tokens via api_key — so litellm puts them into x-api-key.
+        # Anthropic rejects OAuth tokens in x-api-key; they must go in Authorization: Bearer.
         auth = result.get("authorization", "")
-        if auth.startswith(f"Bearer {ANTHROPIC_OAUTH_TOKEN_PREFIX}"):
+        x_api_key = result.get("x-api-key", "")
+        oauth_prefix = f"Bearer {ANTHROPIC_OAUTH_TOKEN_PREFIX}"
+        auth_is_oauth = auth.startswith(oauth_prefix)
+        key_is_oauth = x_api_key.startswith(ANTHROPIC_OAUTH_TOKEN_PREFIX)
+        if auth_is_oauth or key_is_oauth:
+            token = x_api_key if key_is_oauth else auth.removeprefix("Bearer ").strip()
             result.pop("x-api-key", None)
+            result["authorization"] = f"Bearer {token}"
+            # Merge the OAuth beta header with any existing beta headers.
+            existing_beta = result.get("anthropic-beta", "")
+            beta_parts = (
+                [b.strip() for b in existing_beta.split(",") if b.strip()] if existing_beta else []
+            )
+            if ANTHROPIC_OAUTH_BETA_HEADER not in beta_parts:
+                beta_parts.append(ANTHROPIC_OAUTH_BETA_HEADER)
+            result["anthropic-beta"] = ",".join(beta_parts)
         return result

     AnthropicModelInfo.validate_environment = _patched_validate_environment
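
The header-merging step near the end of this hunk is a general pattern: append a value to a comma-separated header only when it is not already present. A minimal stand-alone sketch follows; `merge_csv_header` is a hypothetical helper name, and the header values used when calling it are placeholders rather than real beta flags.

```python
def merge_csv_header(existing: str, value: str) -> str:
    """Append value to a comma-separated header unless it is already listed."""
    # Split on commas and drop empty or whitespace-only entries.
    parts = [part.strip() for part in existing.split(",") if part.strip()]
    if value not in parts:
        parts.append(value)
    return ",".join(parts)
```

Normalizing the existing entries before the membership test means differences in spacing such as `"a, b"` versus `"a,b"` do not produce duplicate values.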

@@ -130,11 +155,15 @@ def _patch_litellm_metadata_nonetype() -> None:

 if litellm is not None:
     _patch_litellm_anthropic_oauth()
     _patch_litellm_metadata_nonetype()
+    # Let litellm silently drop params unsupported by the target provider
+    # (e.g. stream_options for Anthropic) instead of forwarding them verbatim.
+    litellm.drop_params = True

 RATE_LIMIT_MAX_RETRIES = 10
 RATE_LIMIT_BACKOFF_BASE = 2  # seconds
 RATE_LIMIT_MAX_DELAY = 120  # seconds - cap to prevent absurd waits
 MINIMAX_API_BASE = "https://api.minimax.io/v1"
+OPENROUTER_API_BASE = "https://openrouter.ai/api/v1"

 # Providers that accept cache_control on message content blocks.
 # Anthropic: native ephemeral caching. MiniMax & Z-AI/GLM: pass-through to their APIs.

@@ -159,10 +188,69 @@ def _model_supports_cache_control(model: str) -> bool:

 # enforces a coding-agent whitelist that blocks unknown User-Agents.
 KIMI_API_BASE = "https://api.kimi.com/coding"

+# Claude Code OAuth subscription: the Anthropic API requires a specific
+# User-Agent and a billing integrity header for OAuth-authenticated requests.
+CLAUDE_CODE_VERSION = "2.1.76"
+CLAUDE_CODE_USER_AGENT = f"claude-code/{CLAUDE_CODE_VERSION}"
+_CLAUDE_CODE_BILLING_SALT = "59cf53e54c78"
+
+
+def _sample_js_code_unit(text: str, idx: int) -> str:
+    """Return the character at UTF-16 code unit index *idx*, matching JS semantics."""
+    encoded = text.encode("utf-16-le")
+    unit_offset = idx * 2
+    if unit_offset + 2 > len(encoded):
+        return "0"
+    code_unit = int.from_bytes(encoded[unit_offset : unit_offset + 2], "little")
+    return chr(code_unit)
+
+
+def _claude_code_billing_header(messages: list[dict[str, Any]]) -> str:
+    """Build the billing integrity system block required by Anthropic's OAuth path."""
+    # Find the first user message text
+    first_text = ""
+    for msg in messages:
+        if msg.get("role") != "user":
+            continue
+        content = msg.get("content")
+        if isinstance(content, str):
+            first_text = content
+            break
+        if isinstance(content, list):
+            for block in content:
+                if isinstance(block, dict) and block.get("type") == "text" and block.get("text"):
+                    first_text = block["text"]
+                    break
+        if first_text:
+            break
+
+    sampled = "".join(_sample_js_code_unit(first_text, i) for i in (4, 7, 20))
+    version_hash = hashlib.sha256(
+        f"{_CLAUDE_CODE_BILLING_SALT}{sampled}{CLAUDE_CODE_VERSION}".encode()
+    ).hexdigest()
+    entrypoint = os.environ.get("CLAUDE_CODE_ENTRYPOINT", "").strip() or "cli"
+    return (
+        f"x-anthropic-billing-header: cc_version={CLAUDE_CODE_VERSION}.{version_hash[:3]}; "
+        f"cc_entrypoint={entrypoint}; cch=00000;"
+    )
+
+
 # Empty-stream retries use a short fixed delay, not the rate-limit backoff.
 # Conversation-structure issues are deterministic — long waits don't help.
 EMPTY_STREAM_MAX_RETRIES = 3
 EMPTY_STREAM_RETRY_DELAY = 1.0  # seconds
+OPENROUTER_TOOL_COMPAT_ERROR_SNIPPETS = (
+    "no endpoints found that support tool use",
+    "no endpoints available that support tool use",
+    "provider routing",
+)
+OPENROUTER_TOOL_CALL_RE = re.compile(
+    r"<\|tool_call_start\|>\s*(.*?)\s*<\|tool_call_end\|>",
+    re.DOTALL,
+)
+OPENROUTER_TOOL_COMPAT_CACHE_TTL_SECONDS = 3600
+# OpenRouter routing can change over time, so tool-compat caching must expire.
+OPENROUTER_TOOL_COMPAT_MODEL_CACHE: dict[str, float] = {}

 # Directory for dumping failed requests
 FAILED_REQUESTS_DIR = Path.home() / ".hive" / "failed_requests"

@@ -205,6 +293,24 @@ def _prune_failed_request_dumps(max_files: int = MAX_FAILED_REQUEST_DUMPS) -> No

         pass  # Best-effort — never block the caller


+def _remember_openrouter_tool_compat_model(model: str) -> None:
+    """Cache OpenRouter tool-compat fallback for a bounded time window."""
+    OPENROUTER_TOOL_COMPAT_MODEL_CACHE[model] = (
+        time.monotonic() + OPENROUTER_TOOL_COMPAT_CACHE_TTL_SECONDS
+    )
+
+
+def _is_openrouter_tool_compat_cached(model: str) -> bool:
+    """Return True when the cached OpenRouter compat entry is still fresh."""
+    expires_at = OPENROUTER_TOOL_COMPAT_MODEL_CACHE.get(model)
+    if expires_at is None:
+        return False
+    if expires_at <= time.monotonic():
+        OPENROUTER_TOOL_COMPAT_MODEL_CACHE.pop(model, None)
+        return False
+    return True
+
+
 def _dump_failed_request(
     model: str,
     kwargs: dict[str, Any],
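
The two cache helpers in this hunk follow a common expiring-entry pattern: store a deadline on the monotonic clock and evict lazily on lookup. The sketch below restates that pattern as a small class so the expiry behaviour can be exercised with a fake clock. `ExpiringSet` and its `clock` parameter are illustrative assumptions, not part of the diff.

```python
import time
from typing import Callable


class ExpiringSet:
    """Remember keys for ttl_seconds, measured on a monotonic clock."""

    def __init__(self, ttl_seconds: float, clock: Callable[[], float] = time.monotonic) -> None:
        self._ttl = ttl_seconds
        self._clock = clock  # injectable so tests do not need to sleep
        self._expires_at: dict[str, float] = {}

    def remember(self, key: str) -> None:
        """Record key with a deadline ttl_seconds from now."""
        self._expires_at[key] = self._clock() + self._ttl

    def is_fresh(self, key: str) -> bool:
        """Return True while the entry has not reached its deadline."""
        expires_at = self._expires_at.get(key)
        if expires_at is None:
            return False
        if expires_at <= self._clock():
            # Lazy eviction: drop the stale entry on the first lookup after expiry.
            self._expires_at.pop(key, None)
            return False
        return True
```

Using `time.monotonic()` rather than wall-clock time means the deadline is unaffected by system clock adjustments, which matters for a TTL measured in hours.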

@@ -408,6 +514,12 @@ class LiteLLMProvider(LLMProvider):

         self.api_key = api_key
         self.api_base = api_base or self._default_api_base_for_model(_original_model)
         self.extra_kwargs = kwargs
+        # Detect Claude Code OAuth subscription by checking the api_key prefix.
+        self._claude_code_oauth = bool(api_key and api_key.startswith("sk-ant-oat"))
+        if self._claude_code_oauth:
+            # Anthropic requires a specific User-Agent for OAuth requests.
+            eh = self.extra_kwargs.setdefault("extra_headers", {})
+            eh.setdefault("user-agent", CLAUDE_CODE_USER_AGENT)
         # The Codex ChatGPT backend (chatgpt.com/backend-api/codex) rejects
         # several standard OpenAI params: max_output_tokens, stream_options.
         self._codex_backend = bool(

@@ -431,6 +543,8 @@ class LiteLLMProvider(LLMProvider):

         model_lower = model.lower()
         if model_lower.startswith("minimax/") or model_lower.startswith("minimax-"):
             return MINIMAX_API_BASE
+        if model_lower.startswith("openrouter/"):
+            return OPENROUTER_API_BASE
         if model_lower.startswith("kimi/"):
             return KIMI_API_BASE
         if model_lower.startswith("hive/"):

@@ -773,6 +887,9 @@ class LiteLLMProvider(LLMProvider):

             return await self._collect_stream_to_response(stream_iter)

         full_messages: list[dict[str, Any]] = []
+        if self._claude_code_oauth:
+            billing = _claude_code_billing_header(messages)
+            full_messages.append({"role": "system", "content": billing})
         if system:
             sys_msg: dict[str, Any] = {"role": "system", "content": system}
             if _model_supports_cache_control(self.model):

@@ -834,11 +951,504 @@ class LiteLLMProvider(LLMProvider):

            },
        }

    def _is_anthropic_model(self) -> bool:
        """Return True when the configured model targets Anthropic."""
        model = (self.model or "").lower()
        return model.startswith("anthropic/") or model.startswith("claude-")

    def _is_minimax_model(self) -> bool:
        """Return True when the configured model targets MiniMax."""
        model = (self.model or "").lower()
        return model.startswith("minimax/") or model.startswith("minimax-")

    def _is_openrouter_model(self) -> bool:
        """Return True when the configured model targets OpenRouter."""
        model = (self.model or "").lower()
        if model.startswith("openrouter/"):
            return True
        api_base = (self.api_base or "").lower()
        return "openrouter.ai/api/v1" in api_base

    def _should_use_openrouter_tool_compat(
        self,
        error: BaseException,
        tools: list[Tool] | None,
    ) -> bool:
        """Return True when OpenRouter rejects native tool use for the model."""
        if not tools or not self._is_openrouter_model():
            return False
        error_text = str(error).lower()
        return "openrouter" in error_text and any(
            snippet in error_text for snippet in OPENROUTER_TOOL_COMPAT_ERROR_SNIPPETS
        )

    @staticmethod
    def _extract_json_object(text: str) -> dict[str, Any] | None:
        """Extract the first JSON object from a model response."""
        candidates = [text.strip()]

        stripped = text.strip()
        if stripped.startswith("```"):
            fence_lines = stripped.splitlines()
            if len(fence_lines) >= 3:
                candidates.append("\n".join(fence_lines[1:-1]).strip())

        decoder = json.JSONDecoder()
        for candidate in candidates:
            if not candidate:
                continue
            try:
                parsed = json.loads(candidate)
            except json.JSONDecodeError:
                parsed = None
            if isinstance(parsed, dict):
                return parsed

            for start_idx, char in enumerate(candidate):
                if char != "{":
                    continue
                try:
                    parsed, _ = decoder.raw_decode(candidate[start_idx:])
                except json.JSONDecodeError:
                    continue
                if isinstance(parsed, dict):
                    return parsed
        return None
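
The scanning loop in `_extract_json_object` relies on `json.JSONDecoder.raw_decode`, which parses one JSON value starting at a given position and ignores whatever follows it. A reduced sketch of just that step is shown here; `first_json_object` is a hypothetical stand-alone name, and the fence-stripping candidate from the method above is left out.

```python
import json
from typing import Any, Optional


def first_json_object(text: str) -> Optional[dict[str, Any]]:
    """Return the first JSON object embedded anywhere in text, or None."""
    decoder = json.JSONDecoder()
    for start, char in enumerate(text):
        if char != "{":
            continue
        try:
            # raw_decode parses a single value at `start` and tolerates trailing text.
            parsed, _end = decoder.raw_decode(text, start)
        except json.JSONDecodeError:
            continue  # not valid JSON from this brace; try the next one
        if isinstance(parsed, dict):
            return parsed
    return None
```

Trying every opening brace in turn is quadratic in the worst case, but model replies are short enough that the simplicity is a reasonable trade.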

    def _parse_openrouter_tool_compat_response(
        self,
        content: str,
        tools: list[Tool],
    ) -> tuple[str, list[dict[str, Any]]]:
        """Parse JSON tool-compat output into assistant text and tool calls."""
        payload = self._extract_json_object(content)
        if payload is None:
            text_tool_content, text_tool_calls = self._parse_openrouter_text_tool_calls(
                content,
                tools,
            )
            if text_tool_calls:
                logger.info(
                    "[openrouter-tool-compat] Parsed textual tool-call markers for %s",
                    self.model,
                )
                return text_tool_content, text_tool_calls
            logger.info(
                "[openrouter-tool-compat] %s returned non-JSON fallback content; "
                "treating it as plain text.",
                self.model,
            )
            return content.strip(), []

        assistant_text = payload.get("assistant_response")
        if not isinstance(assistant_text, str):
            assistant_text = payload.get("content")
        if not isinstance(assistant_text, str):
            assistant_text = payload.get("response")
        if not isinstance(assistant_text, str):
            assistant_text = ""

        tool_calls_raw = payload.get("tool_calls")
        if not tool_calls_raw and {"name", "arguments"} <= payload.keys():
            tool_calls_raw = [payload]
        elif isinstance(payload.get("tool_call"), dict):
            tool_calls_raw = [payload["tool_call"]]

        if not isinstance(tool_calls_raw, list):
            tool_calls_raw = []

        allowed_tool_names = {tool.name for tool in tools}
        tool_calls: list[dict[str, Any]] = []
        compat_prefix = f"openrouter_compat_{time.time_ns()}"

        for idx, raw_call in enumerate(tool_calls_raw):
            if not isinstance(raw_call, dict):
                continue

            function_block = raw_call.get("function")
            function_name = (
                raw_call.get("name")
                or raw_call.get("tool_name")
                or (function_block.get("name") if isinstance(function_block, dict) else None)
            )
            if not isinstance(function_name, str) or function_name not in allowed_tool_names:
                if function_name:
                    logger.warning(
                        "[openrouter-tool-compat] Ignoring unknown tool '%s' for model %s",
                        function_name,
                        self.model,
                    )
                continue

            arguments = raw_call.get("arguments")
            if arguments is None:
                arguments = raw_call.get("tool_input")
            if arguments is None:
                arguments = raw_call.get("input")
            if arguments is None and isinstance(function_block, dict):
                arguments = function_block.get("arguments")
            if arguments is None:
                arguments = {}

            if isinstance(arguments, str):
                try:
                    arguments = json.loads(arguments)
                except json.JSONDecodeError:
                    arguments = {"_raw": arguments}
            elif not isinstance(arguments, dict):
                arguments = {"value": arguments}

            tool_calls.append(
                {
                    "id": f"{compat_prefix}_{idx}",
                    "name": function_name,
                    "input": arguments,
                }
            )

        return assistant_text.strip(), tool_calls

    @staticmethod
    def _close_truncated_json_fragment(fragment: str) -> str:
        """Close a truncated JSON fragment by balancing quotes/brackets."""
        stack: list[str] = []
        in_string = False
        escaped = False
        normalized = fragment.rstrip()

        while normalized and normalized[-1] in ",:{[":
            normalized = normalized[:-1].rstrip()

        for char in normalized:
            if in_string:
                if escaped:
                    escaped = False
                elif char == "\\":
                    escaped = True
                elif char == '"':
                    in_string = False
                continue

            if char == '"':
                in_string = True
            elif char in "{[":
                stack.append(char)
            elif char == "}" and stack and stack[-1] == "{":
                stack.pop()
            elif char == "]" and stack and stack[-1] == "[":
                stack.pop()

        if in_string:
            if escaped:
                normalized = normalized[:-1]
            normalized += '"'

        for opener in reversed(stack):
            normalized += "}" if opener == "{" else "]"

        return normalized
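
To see what the bracket-balancing pass does to real inputs, the same logic can be lifted into a free function and run against a few truncated fragments. `close_truncated_json` below is a stand-alone restatement for illustration, and the sample fragments are made up. The result is not guaranteed to be valid JSON in every case (a fragment cut just after a key, for instance), which is presumably why the diff pairs this step with a retry loop that trims trailing characters.

```python
import json


def close_truncated_json(fragment: str) -> str:
    """Balance an unterminated string and any open brackets at the end of fragment."""
    text = fragment.rstrip()
    # Drop dangling separators or openers that cannot end a complete value.
    while text and text[-1] in ",:{[":
        text = text[:-1].rstrip()

    stack: list[str] = []
    in_string = False
    escaped = False
    for char in text:
        if in_string:
            if escaped:
                escaped = False
            elif char == "\\":
                escaped = True
            elif char == '"':
                in_string = False
            continue
        if char == '"':
            in_string = True
        elif char in "{[":
            stack.append(char)
        elif char == "}" and stack and stack[-1] == "{":
            stack.pop()
        elif char == "]" and stack and stack[-1] == "[":
            stack.pop()

    if in_string:
        if escaped:
            text = text[:-1]  # a lone trailing backslash cannot be completed
        text += '"'
    for opener in reversed(stack):
        text += "}" if opener == "{" else "]"
    return text


# Example: a tool-call argument string cut off inside a list.
repaired = json.loads(close_truncated_json('{"path": "a.txt", "lines": [1, 2'))
```

Tracking string and escape state separately from the bracket stack is what keeps braces inside quoted values from being counted as structure.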

    def _repair_truncated_tool_arguments(self, raw_arguments: str) -> dict[str, Any] | None:
        """Try to recover a truncated JSON object from tool-call arguments."""
        stripped = raw_arguments.strip()
        if not stripped or stripped[0] != "{":
            return None

        max_trim = min(len(stripped), 256)
        for trim in range(max_trim + 1):
            candidate = stripped[: len(stripped) - trim].rstrip()
            if not candidate:
                break
            candidate = self._close_truncated_json_fragment(candidate)
            try:
                parsed = json.loads(candidate)
            except json.JSONDecodeError:
                continue
            if isinstance(parsed, dict):
                return parsed
        return None

    def _parse_tool_call_arguments(self, raw_arguments: str, tool_name: str) -> dict[str, Any]:
        """Parse streamed tool arguments, repairing truncation when possible."""
        try:
            parsed = json.loads(raw_arguments) if raw_arguments else {}
        except json.JSONDecodeError:
            parsed = None

        if isinstance(parsed, dict):
            return parsed

        repaired = self._repair_truncated_tool_arguments(raw_arguments)
        if repaired is not None:
            logger.warning(
                "[tool-args] Recovered truncated arguments for %s on %s",
                tool_name,
                self.model,
            )
            return repaired

        raise ValueError(
            f"Failed to parse tool call arguments for '{tool_name}' (likely truncated JSON)."
        )

    def _parse_openrouter_text_tool_calls(
        self,
        content: str,
        tools: list[Tool],
    ) -> tuple[str, list[dict[str, Any]]]:
        """Parse textual OpenRouter tool calls into synthetic tool calls.

        Supports both:
        - Marker wrapped payloads: <|tool_call_start|>...<|tool_call_end|>
        - Plain one-line tool calls: ask_user("...", ["..."])
        """
        tools_by_name = {tool.name: tool for tool in tools}
        compat_prefix = f"openrouter_compat_{time.time_ns()}"
        tool_calls: list[dict[str, Any]] = []
        segment_index = 0

        for match in OPENROUTER_TOOL_CALL_RE.finditer(content):
            parsed_calls = self._parse_openrouter_text_tool_call_block(
                block=match.group(1),
                tools_by_name=tools_by_name,
                compat_prefix=f"{compat_prefix}_{segment_index}",
            )
            if parsed_calls:
                segment_index += 1
                tool_calls.extend(parsed_calls)

        stripped_content = OPENROUTER_TOOL_CALL_RE.sub("", content)
        retained_lines: list[str] = []
        for line in stripped_content.splitlines():
            stripped_line = line.strip()
            if not stripped_line:
                retained_lines.append(line)
                continue

            candidate = stripped_line
            if candidate.startswith("`") and candidate.endswith("`") and len(candidate) > 1:
                candidate = candidate[1:-1].strip()

            parsed_calls = self._parse_openrouter_text_tool_call_block(
                block=candidate,
                tools_by_name=tools_by_name,
                compat_prefix=f"{compat_prefix}_{segment_index}",
            )
            if parsed_calls:
                segment_index += 1
                tool_calls.extend(parsed_calls)
                continue

            retained_lines.append(line)

        stripped_text = "\n".join(retained_lines).strip()
        return stripped_text, tool_calls

    def _parse_openrouter_text_tool_call_block(
        self,
        block: str,
        tools_by_name: dict[str, Tool],
        compat_prefix: str,
    ) -> list[dict[str, Any]]:
        """Parse a single textual tool-call block like [tool(arg='x')]."""
        try:
            parsed = ast.parse(block.strip(), mode="eval").body
        except SyntaxError:
            return []

        call_nodes = parsed.elts if isinstance(parsed, ast.List) else [parsed]
        tool_calls: list[dict[str, Any]] = []

        for call_index, call_node in enumerate(call_nodes):
            if not isinstance(call_node, ast.Call) or not isinstance(call_node.func, ast.Name):
                continue

            tool_name = call_node.func.id
            tool = tools_by_name.get(tool_name)
            if tool is None:
                continue

            try:
                tool_input = self._parse_openrouter_text_tool_call_arguments(
                    call_node=call_node,
                    tool=tool,
                )
            except (ValueError, SyntaxError):
                continue

            tool_calls.append(
                {
                    "id": f"{compat_prefix}_{call_index}",
                    "name": tool_name,
                    "input": tool_input,
                }
            )

        return tool_calls

    @staticmethod
    def _parse_openrouter_text_tool_call_arguments(
        call_node: ast.Call,
        tool: Tool,
    ) -> dict[str, Any]:
        """Parse positional/keyword args from a textual tool call."""
        properties = tool.parameters.get("properties", {})
        positional_keys = list(properties.keys())
        tool_input: dict[str, Any] = {}

        if len(call_node.args) > len(positional_keys):
            raise ValueError("Too many positional args for textual tool call")

        for idx, arg_node in enumerate(call_node.args):
            tool_input[positional_keys[idx]] = ast.literal_eval(arg_node)

        for kwarg in call_node.keywords:
            if kwarg.arg is None:
                raise ValueError("Star args are not supported in textual tool calls")
            tool_input[kwarg.arg] = ast.literal_eval(kwarg.value)

        return tool_input
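
The two methods above parse model-written call syntax with `ast.parse` and `ast.literal_eval`, so the text is inspected as a syntax tree and never executed. The sketch below condenses that idea into one function for a single call expression. `parse_text_call` and the `params_by_tool` mapping (tool name to ordered parameter names) are simplifications introduced here; the diff instead reads parameter order from each tool's `parameters["properties"]`.

```python
import ast
from typing import Any, Optional


def parse_text_call(
    text: str, params_by_tool: dict[str, list[str]]
) -> Optional[tuple[str, dict[str, Any]]]:
    """Parse 'tool(arg, key=value)' into (tool_name, arguments) without executing it."""
    try:
        node = ast.parse(text.strip(), mode="eval").body
    except SyntaxError:
        return None
    if not isinstance(node, ast.Call) or not isinstance(node.func, ast.Name):
        return None
    names = params_by_tool.get(node.func.id)
    if names is None or len(node.args) > len(names):
        return None  # unknown tool, or more positional args than parameters
    try:
        # literal_eval accepts only literals, so names and nested calls are rejected.
        arguments: dict[str, Any] = {
            names[i]: ast.literal_eval(arg) for i, arg in enumerate(node.args)
        }
        for keyword in node.keywords:
            if keyword.arg is None:  # **kwargs unpacking is not supported
                return None
            arguments[keyword.arg] = ast.literal_eval(keyword.value)
    except (ValueError, SyntaxError):
        return None
    return node.func.id, arguments
```

Restricting arguments to literals is the key safety property: an argument such as `os.getcwd()` is an `ast.Call` node, which `ast.literal_eval` refuses, so the whole call is dropped rather than evaluated.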

    def _build_openrouter_tool_compat_messages(
        self,
        messages: list[dict[str, Any]],
        system: str,
        tools: list[Tool],
    ) -> list[dict[str, Any]]:
        """Build a JSON-only prompt for models without native tool support."""
        tool_specs = [
            {
                "name": tool.name,
                "description": tool.description,
                "parameters": tool.parameters,
            }
            for tool in tools
        ]
        compat_instruction = (
            "Tool compatibility mode is active because this OpenRouter model does not support "
            "native function calling on the routed provider.\n"
            "Return exactly one JSON object and nothing else.\n"
            'Schema: {"assistant_response": string, '
            '"tool_calls": [{"name": string, "arguments": object}]}\n'
            "Rules:\n"
            "- If a tool is required, put one or more entries in tool_calls "
            "and do not invent tool results.\n"
            "- If no tool is required, set tool_calls to [] and put the full "
            "answer in assistant_response.\n"
            "- Only use tool names from the allowed tool list.\n"
            "- arguments must always be valid JSON objects.\n"
            f"Allowed tools:\n{json.dumps(tool_specs, ensure_ascii=True)}"
        )
        compat_system = compat_instruction if not system else f"{system}\n\n{compat_instruction}"

        full_messages: list[dict[str, Any]] = [{"role": "system", "content": compat_system}]
        full_messages.extend(messages)
        return [
            message
            for message in full_messages
            if not (
                message.get("role") == "assistant"
                and not message.get("content")
                and not message.get("tool_calls")
            )
        ]

|

||||
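The message builder above does two things: it appends the compatibility instruction to any existing system prompt, and it drops assistant turns that have neither content nor tool calls. A reduced sketch of that behaviour, using a hypothetical free function `build_compat_messages` and a shortened instruction string, might look like this:

```python
import json
from typing import Any


def build_compat_messages(
    messages: list[dict[str, Any]],
    system: str,
    tool_specs: list[dict[str, Any]],
) -> list[dict[str, Any]]:
    """Prepend a JSON-only system prompt and drop empty assistant turns."""
    instruction = (
        "Return exactly one JSON object and nothing else.\n"
        f"Allowed tools:\n{json.dumps(tool_specs, ensure_ascii=True)}"
    )
    # Keep the caller's system prompt first, then the compatibility rules.
    compat_system = instruction if not system else f"{system}\n\n{instruction}"
    full = [{"role": "system", "content": compat_system}, *messages]
    return [
        m
        for m in full
        if not (m.get("role") == "assistant" and not m.get("content") and not m.get("tool_calls"))
    ]


history = [
    {"role": "user", "content": "hi"},
    {"role": "assistant", "content": ""},  # dropped: no text and no tool calls
    {"role": "assistant", "content": "", "tool_calls": [{"id": "1"}]},  # kept
]
out = build_compat_messages(history, "Be brief.", [{"name": "search"}])
print(len(out))
```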

    async def _acomplete_via_openrouter_tool_compat(
        self,
        messages: list[dict[str, Any]],
        system: str,
        tools: list[Tool],
        max_tokens: int,
    ) -> LLMResponse:
        """Emulate tool calling via JSON when OpenRouter rejects native tools."""
        full_messages = self._build_openrouter_tool_compat_messages(messages, system, tools)
        kwargs: dict[str, Any] = {
            "model": self.model,
            "messages": full_messages,
            "max_tokens": max_tokens,
            **self.extra_kwargs,
        }
        if self.api_key:
            kwargs["api_key"] = self.api_key
        if self.api_base:
            kwargs["api_base"] = self.api_base

        response = await self._acompletion_with_rate_limit_retry(**kwargs)
        raw_content = response.choices[0].message.content or ""
        assistant_text, tool_calls = self._parse_openrouter_tool_compat_response(
            raw_content,
            tools,
        )
        usage = response.usage
        input_tokens = usage.prompt_tokens if usage else 0
        output_tokens = usage.completion_tokens if usage else 0
        stop_reason = "tool_calls" if tool_calls else (response.choices[0].finish_reason or "stop")

        return LLMResponse(
            content=assistant_text,
            model=response.model or self.model,
            input_tokens=input_tokens,
            output_tokens=output_tokens,
            stop_reason=stop_reason,
            raw_response={
                "compat_mode": "openrouter_tool_emulation",
                "tool_calls": tool_calls,
                "response": response,
            },
        )

|

||||

|

||||
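The completion path above hands the raw model text to `_parse_openrouter_tool_compat_response`, whose body is not shown in this hunk. The sketch below is only an assumption about what such a parser could look like for the `{"assistant_response", "tool_calls"}` schema from the prompt; `parse_compat_response` and the `compat_` id prefix are hypothetical.

```python
import json
from typing import Any


def parse_compat_response(raw: str, allowed: set[str]) -> tuple[str, list[dict[str, Any]]]:
    """Split a JSON-only model reply into assistant text and validated tool calls."""
    try:
        payload = json.loads(raw)
    except json.JSONDecodeError:
        return raw, []  # not JSON: treat the whole reply as plain text
    if not isinstance(payload, dict):
        return raw, []

    text = str(payload.get("assistant_response") or "")
    calls = payload.get("tool_calls")
    if not isinstance(calls, list):
        calls = []

    tool_calls: list[dict[str, Any]] = []
    for index, call in enumerate(calls):
        if not isinstance(call, dict):
            continue
        name = call.get("name")
        arguments = call.get("arguments")
        # Skip tools outside the allowed list and non-object arguments.
        if name not in allowed or not isinstance(arguments, dict):
            continue
        tool_calls.append({"id": f"compat_{index}", "name": name, "input": arguments})
    return text, tool_calls


raw = (
    '{"assistant_response": "", "tool_calls": ['
    '{"name": "search", "arguments": {"q": "x"}}, '
    '{"name": "rm", "arguments": {}}]}'
)
text, calls = parse_compat_response(raw, {"search"})
stop_reason = "tool_calls" if calls else "stop"
print(text, calls, stop_reason)
```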

    async def _stream_via_openrouter_tool_compat(
        self,
        messages: list[dict[str, Any]],
        system: str,
        tools: list[Tool],
        max_tokens: int,
    ) -> AsyncIterator[StreamEvent]:
        """Fallback stream for OpenRouter models without native tool support."""
        from framework.llm.stream_events import (
            FinishEvent,
            StreamErrorEvent,
            TextDeltaEvent,
            TextEndEvent,
            ToolCallEvent,
        )

        logger.info(
            "[openrouter-tool-compat] Using compatibility mode for %s",
            self.model,
        )
        try:
            response = await self._acomplete_via_openrouter_tool_compat(
                messages=messages,
                system=system,
                tools=tools,
                max_tokens=max_tokens,
            )
        except Exception as e:
            yield StreamErrorEvent(error=str(e), recoverable=False)
            return

        raw_response = response.raw_response if isinstance(response.raw_response, dict) else {}
        tool_calls = raw_response.get("tool_calls", [])

        if response.content:
            yield TextDeltaEvent(content=response.content, snapshot=response.content)
            yield TextEndEvent(full_text=response.content)

        for tool_call in tool_calls:
            yield ToolCallEvent(
                tool_use_id=tool_call["id"],
                tool_name=tool_call["name"],
                tool_input=tool_call["input"],
            )

        yield FinishEvent(
            stop_reason=response.stop_reason,
            input_tokens=response.input_tokens,
            output_tokens=response.output_tokens,
            model=response.model,
        )

|

||||

|

||||
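The fallback stream above does not stream tokens. It awaits one complete response and then replays it as a fixed event sequence: text delta, text end, one event per tool call, then finish. A self-contained sketch of that replay pattern, with a hypothetical `Event` dataclass standing in for the framework's event classes:

```python
import asyncio
from dataclasses import dataclass
from typing import Any, AsyncIterator


@dataclass
class Event:
    kind: str
    payload: dict[str, Any]


async def replay_as_stream(
    content: str,
    tool_calls: list[dict[str, Any]],
    stop_reason: str,
) -> AsyncIterator[Event]:
    """Replay an already-complete response as an ordered event stream."""
    if content:
        # A single delta carries the full text; the snapshot equals the delta.
        yield Event("text_delta", {"content": content, "snapshot": content})
        yield Event("text_end", {"full_text": content})
    for call in tool_calls:
        yield Event("tool_call", dict(call))
    yield Event("finish", {"stop_reason": stop_reason})


async def collect() -> list[str]:
    calls = [{"id": "compat_0", "name": "search", "input": {"q": "x"}}]
    return [event.kind async for event in replay_as_stream("Looking it up.", calls, "tool_calls")]


kinds = asyncio.run(collect())
print(kinds)
```

Consumers written against the streaming interface keep working, at the cost of receiving the text in one piece instead of incrementally.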

async def _stream_via_nonstream_completion(

|

||||

self,

|

||||

messages: list[dict[str, Any]],

|

||||

@@ -882,12 +1492,11 @@ class LiteLLMProvider(LLMProvider):

|

||||

tool_calls = msg.tool_calls or []

|

||||

|

||||

for tc in tool_calls:

|

||||

parsed_args: Any

|

||||

args = tc.function.arguments if tc.function else ""

|

||||

try:

|

||||

parsed_args = json.loads(args) if args else {}

|

||||

except json.JSONDecodeError:

|

||||

parsed_args = {"_raw": args}

|

||||

parsed_args = self._parse_tool_call_arguments(

|

||||

args,

|

||||

tc.function.name if tc.function else "",

|

||||

)

|

||||

yield ToolCallEvent(

|

||||

tool_use_id=getattr(tc, "id", ""),

|

||||

tool_name=tc.function.name if tc.function else "",

|

||||

@@ -946,7 +1555,20 @@ class LiteLLMProvider(LLMProvider):

|

||||

yield event

|

||||

return

|

||||

|

||||

if tools and self._is_openrouter_model() and _is_openrouter_tool_compat_cached(self.model):

|

||||

async for event in self._stream_via_openrouter_tool_compat(

|

||||

messages=messages,

|

||||

system=system,

|

||||

tools=tools,

|

||||

max_tokens=max_tokens,

|

||||

):

|

||||

yield event

|

||||

return

|

||||

|

||||

full_messages: list[dict[str, Any]] = []

|

||||

if self._claude_code_oauth:

|

||||

billing = _claude_code_billing_header(messages)

|

||||

full_messages.append({"role": "system", "content": billing})

|

||||

if system:

|

||||

sys_msg: dict[str, Any] = {"role": "system", "content": system}

|

||||

if _model_supports_cache_control(self.model):

|

||||

@@ -984,9 +1606,12 @@ class LiteLLMProvider(LLMProvider):

|

||||

"messages": full_messages,

|

||||

"max_tokens": max_tokens,

|

||||

"stream": True,

|

||||

"stream_options": {"include_usage": True},

|

||||

**self.extra_kwargs,

|

||||

}

|

||||

# stream_options is OpenAI-specific; Anthropic rejects it with 400.

|

||||

# Only include it for providers that support it.

|

||||

if not self._is_anthropic_model():

|

||||

kwargs["stream_options"] = {"include_usage": True}

|

||||

if self.api_key:

|

||||

kwargs["api_key"] = self.api_key

|

||||

if self.api_base:

|

||||
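The hunk above moves `stream_options` out of the base kwargs and adds it only for non-Anthropic models, since the diff's own comment notes that Anthropic rejects it with a 400. A minimal sketch of that conditional construction follows; `build_stream_kwargs` and the prefix-based `is_anthropic_model` check are hypothetical stand-ins, not the provider's actual helpers.

```python
from typing import Any


def is_anthropic_model(model: str) -> bool:
    """Assumed heuristic for illustration only: match on common model-name prefixes."""
    lowered = model.lower()
    return lowered.startswith("anthropic/") or lowered.startswith("claude")


def build_stream_kwargs(model: str, messages: list[dict[str, Any]], max_tokens: int) -> dict[str, Any]:
    kwargs: dict[str, Any] = {
        "model": model,
        "messages": messages,
        "max_tokens": max_tokens,
        "stream": True,
    }
    # Only request usage-in-stream from providers that accept the option.
    if not is_anthropic_model(model):
        kwargs["stream_options"] = {"include_usage": True}
    return kwargs


print(build_stream_kwargs("openrouter/x-ai/grok-4.20-beta", [], 256))
print(build_stream_kwargs("anthropic/claude-example", [], 256))
```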

@@ -1092,10 +1717,10 @@ class LiteLLMProvider(LLMProvider):

|

||||

if choice.finish_reason:

|

||||

stream_finish_reason = choice.finish_reason

|

||||

for _idx, tc_data in sorted(tool_calls_acc.items()):

|

||||

try:

|

||||

parsed_args = json.loads(tc_data["arguments"])

|

||||

except (json.JSONDecodeError, KeyError):

|

||||

parsed_args = {"_raw": tc_data.get("arguments", "")}

|

||||

parsed_args = self._parse_tool_call_arguments(

|

||||

tc_data.get("arguments", ""),

|

||||

tc_data.get("name", ""),

|

||||

)

|

||||

tail_events.append(

|

||||

ToolCallEvent(

|

||||

tool_use_id=tc_data["id"],

|

||||

@@ -1276,6 +1901,16 @@ class LiteLLMProvider(LLMProvider):

|

||||

return

|

||||

|

||||

except Exception as e:

|

||||

if self._should_use_openrouter_tool_compat(e, tools):

|

||||

_remember_openrouter_tool_compat_model(self.model)

|

||||

async for event in self._stream_via_openrouter_tool_compat(

|

||||

messages=messages,

|

||||

system=system,

|

||||

tools=tools or [],

|

||||

max_tokens=max_tokens,

|

||||

):

|

||||

yield event

|

||||

return

|

||||

if _is_stream_transient_error(e) and attempt < RATE_LIMIT_MAX_RETRIES:

|

||||

wait = _compute_retry_delay(attempt, exception=e)

|

||||

logger.warning(

|

||||

|

||||

@@ -208,7 +208,12 @@ def configure_logging(

|

||||

|

||||

# Suppress noisy LiteLLM INFO logs (model/provider line + Provider List URL

|

||||

# printed on every single completion call). Warnings and errors still show.

|

||||

logging.getLogger("LiteLLM").setLevel(logging.WARNING)

|

||||

# Honour LITELLM_LOG env var so users can opt-in to debug output.

|

||||

_litellm_level = os.getenv("LITELLM_LOG", "").upper()

|

||||

if _litellm_level and hasattr(logging, _litellm_level):

|

||||

logging.getLogger("LiteLLM").setLevel(getattr(logging, _litellm_level))

|

||||

else:

|

||||

logging.getLogger("LiteLLM").setLevel(logging.WARNING)

|

||||

|

||||

# When in JSON mode, configure known third-party loggers to use JSON formatter

|

||||

# This ensures libraries like LiteLLM, httpcore also output clean JSON

|

||||

|

||||
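The logging change above lets `LITELLM_LOG` override the default `WARNING` level for the `LiteLLM` logger. The sketch below shows the same opt-in pattern as a standalone function; `apply_litellm_log_level` is a hypothetical name, and the extra `isinstance(level, int)` check is an assumption added here because `hasattr(logging, name)` alone also matches attributes that are not log levels.

```python
import logging
import os
from typing import Mapping, Optional


def apply_litellm_log_level(env: Optional[Mapping[str, str]] = None) -> int:
    """Set the LiteLLM logger level from LITELLM_LOG, defaulting to WARNING."""
    env = os.environ if env is None else env
    requested = env.get("LITELLM_LOG", "").upper()
    level = getattr(logging, requested, None) if requested else None
    # Accept only real numeric levels such as logging.DEBUG or logging.INFO.
    if not isinstance(level, int):
        level = logging.WARNING
    logging.getLogger("LiteLLM").setLevel(level)
    return level


print(apply_litellm_log_level({"LITELLM_LOG": "debug"}))
```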

@@ -1381,6 +1381,8 @@ class AgentRunner:

|

||||

return "MISTRAL_API_KEY"

|

||||

elif model_lower.startswith("groq/"):

|

||||

return "GROQ_API_KEY"

|

||||

elif model_lower.startswith("openrouter/"):

|

||||

return "OPENROUTER_API_KEY"

|

||||

elif self._is_local_model(model_lower):

|

||||

return None # Local models don't need an API key

|

||||

elif model_lower.startswith("azure/"):

|

||||

|

||||

Generated

+8

@@ -60,6 +60,7 @@

|

||||

"integrity": "sha512-CGOfOJqWjg2qW/Mb6zNsDm+u5vFQ8DxXfbM09z69p5Z6+mE1ikP2jUXw+j42Pf1XTYED2Rni5f95npYeuwMDQA==",

|

||||

"dev": true,

|

||||

"license": "MIT",

|

||||

"peer": true,

|

||||

"dependencies": {

|

||||

"@babel/code-frame": "^7.29.0",

|

||||

"@babel/generator": "^7.29.0",

|

||||

@@ -1556,6 +1557,7 @@

|

||||

"integrity": "sha512-4K3bqJpXpqfg2XKGK9bpDTc6xO/xoUP/RBWS7AtRMug6zZFaRekiLzjVtAoZMquxoAbzBvy5nxQ7veS5eYzf8A==",

|

||||

"dev": true,

|

||||

"license": "MIT",

|

||||

"peer": true,

|

||||

"dependencies": {

|

||||

"undici-types": "~7.18.0"

|

||||

}

|

||||

@@ -1571,6 +1573,7 @@

|

||||

"resolved": "https://registry.npmjs.org/@types/react/-/react-18.3.28.tgz",

|

||||

"integrity": "sha512-z9VXpC7MWrhfWipitjNdgCauoMLRdIILQsAEV+ZesIzBq/oUlxk0m3ApZuMFCXdnS4U7KrI+l3WRUEGQ8K1QKw==",

|

||||

"license": "MIT",

|

||||

"peer": true,

|

||||

"dependencies": {

|

||||

"@types/prop-types": "*",

|

||||

"csstype": "^3.2.2"

|

||||

@@ -1783,6 +1786,7 @@

|

||||

}

|

||||

],

|

||||

"license": "MIT",

|

||||

"peer": true,

|

||||

"dependencies": {

|

||||

"baseline-browser-mapping": "^2.9.0",

|

||||

"caniuse-lite": "^1.0.30001759",

|

||||

@@ -3560,6 +3564,7 @@

|

||||

"integrity": "sha512-5gTmgEY/sqK6gFXLIsQNH19lWb4ebPDLA4SdLP7dsWkIXHWlG66oPuVvXSGFPppYZz8ZDZq0dYYrbHfBCVUb1Q==",

|

||||

"dev": true,

|

||||

"license": "MIT",

|

||||

"peer": true,

|

||||

"engines": {

|

||||

"node": ">=12"

|

||||

},

|

||||

@@ -3611,6 +3616,7 @@

|

||||

"resolved": "https://registry.npmjs.org/react/-/react-18.3.1.tgz",

|

||||

"integrity": "sha512-wS+hAgJShR0KhEvPJArfuPVN1+Hz1t0Y6n5jLrGQbkb4urgPE/0Rve+1kMB1v/oWgHgm4WIcV+i7F2pTVj+2iQ==",

|

||||

"license": "MIT",

|

||||

"peer": true,

|

||||

"dependencies": {

|

||||

"loose-envify": "^1.1.0"

|

||||

},

|

||||

@@ -3623,6 +3629,7 @@

|

||||

"resolved": "https://registry.npmjs.org/react-dom/-/react-dom-18.3.1.tgz",

|

||||

"integrity": "sha512-5m4nQKp+rZRb09LNH59GM4BxTh9251/ylbKIbpe7TpGxfJ+9kv6BLkLBXIjjspbgbnIBNqlI23tRnTWT0snUIw==",

|

||||

"license": "MIT",

|

||||

"peer": true,

|

||||

"dependencies": {

|

||||

"loose-envify": "^1.1.0",

|

||||

"scheduler": "^0.23.2"

|

||||

@@ -4183,6 +4190,7 @@

|

||||

"integrity": "sha512-+Oxm7q9hDoLMyJOYfUYBuHQo+dkAloi33apOPP56pzj+vsdJDzr+j1NISE5pyaAuKL4A3UD34qd0lx5+kfKp2g==",

|

||||

"dev": true,

|

||||

"license": "MIT",

|

||||

"peer": true,

|

||||

"dependencies": {

|

||||

"esbuild": "^0.25.0",

|

||||

"fdir": "^6.4.4",

|

||||

|

||||

@@ -0,0 +1,209 @@

|

||||

import importlib.util

|

||||

from pathlib import Path

|

||||

|

||||

|

||||

def _load_check_llm_key_module():

|

||||

module_path = Path(__file__).resolve().parents[2] / "scripts" / "check_llm_key.py"

|

||||

spec = importlib.util.spec_from_file_location("check_llm_key_script", module_path)

|

||||

module = importlib.util.module_from_spec(spec)

|

||||

assert spec.loader is not None

|

||||

spec.loader.exec_module(module)

|

||||

return module

|

||||

|

||||

|

||||

def _run_openrouter_check(monkeypatch, status_code: int):

|

||||

module = _load_check_llm_key_module()

|

||||

calls = {}

|

||||

|

||||

class FakeResponse:

|

||||

def __init__(self, code):

|

||||

self.status_code = code

|

||||

|

||||

class FakeClient:

|

||||

def __init__(self, timeout):

|

||||

calls["timeout"] = timeout

|

||||

|

||||

def __enter__(self):

|

||||

return self

|

||||

|

||||

def __exit__(self, exc_type, exc, tb):

|

||||

return False

|

||||

|

||||

def get(self, endpoint, headers):

|

||||

calls["endpoint"] = endpoint

|

||||

calls["headers"] = headers

|

||||

return FakeResponse(status_code)

|

||||

|

||||

monkeypatch.setattr(module.httpx, "Client", FakeClient)

|

||||

result = module.check_openrouter("test-key")

|

||||

return result, calls

|

||||

|

||||

|

||||

def _run_openrouter_model_check(

|

||||

monkeypatch,

|

||||

status_code: int,

|

||||

payload: dict | None = None,

|

||||

model: str = "openai/gpt-4o-mini",

|

||||

):

|

||||

module = _load_check_llm_key_module()

|

||||

calls = {}

|

||||

|

||||

class FakeResponse:

|

||||

def __init__(self, code):

|

||||

self.status_code = code

|

||||

self._payload = payload

|

||||

self.text = ""

|

||||

|

||||

def json(self):

|

||||

if self._payload is None:

|

||||

raise ValueError("no json")

|

||||

return self._payload

|

||||

|

||||

class FakeClient:

|

||||

def __init__(self, timeout):

|

||||

calls["timeout"] = timeout

|

||||

|

||||

def __enter__(self):

|

||||

return self

|

||||

|

||||

def __exit__(self, exc_type, exc, tb):

|

||||

return False

|

||||

|

||||

def get(self, endpoint, headers):

|

||||

calls["endpoint"] = endpoint

|

||||

calls["headers"] = headers

|

||||

return FakeResponse(status_code)

|

||||

|

||||

monkeypatch.setattr(module.httpx, "Client", FakeClient)

|

||||

result = module.check_openrouter_model("test-key", model)

|

||||

return result, calls

|

||||

|

||||

|

||||

def test_check_openrouter_200(monkeypatch):

|

||||

result, calls = _run_openrouter_check(monkeypatch, 200)

|

||||

assert result == {"valid": True, "message": "OpenRouter API key valid"}

|

||||

assert calls["endpoint"] == "https://openrouter.ai/api/v1/models"

|

||||

assert calls["headers"] == {"Authorization": "Bearer test-key"}

|

||||

|

||||

|

||||

def test_check_openrouter_401(monkeypatch):

|

||||

result, _ = _run_openrouter_check(monkeypatch, 401)

|

||||

assert result == {"valid": False, "message": "Invalid OpenRouter API key"}

|

||||

|

||||

|

||||

def test_check_openrouter_403(monkeypatch):

|

||||

result, _ = _run_openrouter_check(monkeypatch, 403)

|

||||

assert result == {"valid": False, "message": "OpenRouter API key lacks permissions"}

|

||||

|

||||

|

||||

def test_check_openrouter_429(monkeypatch):

|

||||

result, _ = _run_openrouter_check(monkeypatch, 429)

|

||||

assert result == {"valid": True, "message": "OpenRouter API key valid"}

|

||||

|

||||

|

||||

def test_check_openrouter_model_200(monkeypatch):

|

||||

result, calls = _run_openrouter_model_check(

|

||||

monkeypatch,

|

||||

200,

|

||||

{

|

||||

"data": [

|

||||

{

|

||||

"id": "openai/gpt-4o-mini",

|

||||

"canonical_slug": "openai/gpt-4o-mini",

|

||||

}

|

||||

]

|

||||

},

|

||||

)

|

||||

assert result == {

|

||||

"valid": True,

|

||||

"message": "OpenRouter model is available: openai/gpt-4o-mini",

|

||||

"model": "openai/gpt-4o-mini",

|

||||

}

|

||||

assert calls["endpoint"] == "https://openrouter.ai/api/v1/models/user"

|

||||

assert calls["headers"] == {"Authorization": "Bearer test-key"}

|

||||

|

||||

|

||||

def test_check_openrouter_model_200_matches_canonical_slug(monkeypatch):

|

||||

result, _ = _run_openrouter_model_check(

|

||||

monkeypatch,

|

||||

200,

|

||||

{

|

||||

"data": [

|

||||

{

|

||||

"id": "mistralai/mistral-small-4",

|

||||

"canonical_slug": "mistralai/mistral-small-2603",

|

||||

}

|

||||

]

|

||||

},

|

||||

model="mistralai/mistral-small-2603",

|

||||

)

|

||||

assert result == {

|

||||

"valid": True,

|

||||

"message": "OpenRouter model is available: mistralai/mistral-small-2603",

|

||||

"model": "mistralai/mistral-small-2603",

|

||||

}

|

||||

|

||||

|

||||

def test_check_openrouter_model_200_sanitizes_pasted_unicode(monkeypatch):

|

||||

result, _ = _run_openrouter_model_check(

|

||||

monkeypatch,

|

||||

200,

|

||||

{

|

||||

"data": [

|

||||

{

|

||||

"id": "z-ai/glm-5-turbo",

|

||||

"canonical_slug": "z-ai/glm-5-turbo",

|

||||

}

|

||||

]

|

||||

},

|

||||

model="openrouter/z-ai\u200b/glm\u20115\u2011turbo",

|

||||

)

|

||||

assert result == {

|

||||

"valid": True,

|

||||

"message": "OpenRouter model is available: z-ai/glm-5-turbo",

|

||||

"model": "z-ai/glm-5-turbo",

|

||||

}

|

||||

|

||||

|

||||

def test_check_openrouter_model_200_not_found_with_suggestions(monkeypatch):

|

||||

result, _ = _run_openrouter_model_check(

|

||||

monkeypatch,

|

||||

200,

|

||||

{

|

||||

"data": [

|

||||

{"id": "z-ai/glm-5-turbo"},

|

||||

{"id": "z-ai/glm-4.6v"},

|

||||

]

|

||||

},

|

||||

model="z-ai/glm-5-turb",

|

||||

)

|

||||

assert result == {

|

||||

"valid": False,

|

||||

"message": (

|

||||

"OpenRouter model is not available for this key/settings: z-ai/glm-5-turb. "

|

||||

"Closest matches: z-ai/glm-5-turbo"

|

||||

),

|

||||

}

|

||||

|

||||

|

||||

def test_check_openrouter_model_404_with_error_message(monkeypatch):

|

||||

result, _ = _run_openrouter_model_check(

|

||||

monkeypatch,

|

||||

404,

|

||||

{"error": {"message": "No endpoints available for this model"}},

|

||||

)

|

||||

assert result == {

|

||||

"valid": False,

|

||||

"message": (

|

||||

"OpenRouter model is not available for this key/settings: openai/gpt-4o-mini. "

|

||||

"No endpoints available for this model"

|

||||

),

|

||||

}

|

||||

|

||||

|

||||

def test_check_openrouter_model_429(monkeypatch):

|

||||

result, _ = _run_openrouter_model_check(monkeypatch, 429)

|

||||

assert result == {

|

||||

"valid": True,

|

||||

"message": "OpenRouter model check rate-limited; assuming model is reachable",

|

||||

}

|

||||
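One of the tests above expects a pasted model id such as `openrouter/z-ai\u200b/glm\u20115\u2011turbo` to resolve to `z-ai/glm-5-turbo`. The implementation in `check_llm_key.py` is not shown in this hunk, so the following is only a sketch of one way to get that result; `sanitize_model_id` and the set of hyphen look-alikes are assumptions.

```python
import unicodedata

# Hyphen look-alikes that often sneak in when ids are copied from rendered pages.
_HYPHEN_LIKE = {"\u2010", "\u2011", "\u2012", "\u2013", "\u2212"}


def sanitize_model_id(raw: str) -> str:
    """Normalize a pasted OpenRouter model id to plain ASCII-style form."""
    cleaned: list[str] = []
    for ch in raw.strip():
        if ch in _HYPHEN_LIKE:
            cleaned.append("-")
        elif unicodedata.category(ch) == "Cf" or ch.isspace():
            continue  # drop zero-width/format characters and stray whitespace
        else:
            cleaned.append(ch)
    model = "".join(cleaned)
    prefix = "openrouter/"
    if model.lower().startswith(prefix):
        model = model[len(prefix):]
    return model


print(sanitize_model_id("openrouter/z-ai\u200b/glm\u20115\u2011turbo"))
```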

@@ -2,7 +2,7 @@

|

||||

|

||||

import logging

|

||||

|

||||

from framework.config import get_hive_config

|

||||

from framework.config import get_api_base, get_hive_config, get_preferred_model

|

||||

|

||||

|

||||

class TestGetHiveConfig:

|

||||

@@ -21,3 +21,47 @@ class TestGetHiveConfig:

|

||||

assert result == {}

|

||||

assert "Failed to load Hive config" in caplog.text

|

||||

assert str(config_file) in caplog.text

|

||||

|

||||

|

||||

class TestOpenRouterConfig:
    """OpenRouter config composition and fallback behavior."""

    def test_get_preferred_model_for_openrouter(self, tmp_path, monkeypatch):
        config_file = tmp_path / "configuration.json"
        config_file.write_text(
            '{"llm":{"provider":"openrouter","model":"x-ai/grok-4.20-beta"}}',
            encoding="utf-8",
        )
        monkeypatch.setattr("framework.config.HIVE_CONFIG_FILE", config_file)

        assert get_preferred_model() == "openrouter/x-ai/grok-4.20-beta"

    def test_get_preferred_model_normalizes_openrouter_prefixed_model(self, tmp_path, monkeypatch):
        config_file = tmp_path / "configuration.json"
        config_file.write_text(
            '{"llm":{"provider":"openrouter","model":"openrouter/x-ai/grok-4.20-beta"}}',
            encoding="utf-8",
        )
        monkeypatch.setattr("framework.config.HIVE_CONFIG_FILE", config_file)

        assert get_preferred_model() == "openrouter/x-ai/grok-4.20-beta"

    def test_get_api_base_falls_back_to_openrouter_default(self, tmp_path, monkeypatch):
        config_file = tmp_path / "configuration.json"
        config_file.write_text(
            '{"llm":{"provider":"openrouter","model":"x-ai/grok-4.20-beta"}}',
            encoding="utf-8",
        )
        monkeypatch.setattr("framework.config.HIVE_CONFIG_FILE", config_file)

        assert get_api_base() == "https://openrouter.ai/api/v1"

    def test_get_api_base_keeps_explicit_openrouter_api_base(self, tmp_path, monkeypatch):
        config_file = tmp_path / "configuration.json"
        config_file.write_text(
            '{"llm":{"provider":"openrouter","model":"x-ai/grok-4.20-beta","api_base":"https://proxy.example/v1"}}',
            encoding="utf-8",
        )
        monkeypatch.setattr("framework.config.HIVE_CONFIG_FILE", config_file)

        assert get_api_base() == "https://proxy.example/v1"

|

||||

|

||||
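The four tests above pin down two behaviours: the preferred model is always `provider/model` without a doubled `openrouter/` prefix, and the API base falls back to the OpenRouter default unless one is configured. A minimal sketch that satisfies those expectations is given below; `preferred_model` and `api_base` are hypothetical helpers operating on the `llm` config dict, not the functions in `framework.config`.

```python
from typing import Any, Optional

OPENROUTER_API_BASE = "https://openrouter.ai/api/v1"


def preferred_model(llm: dict[str, Any]) -> str:
    """Compose 'provider/model', stripping an already-present provider prefix."""
    provider = llm.get("provider", "")
    model = llm.get("model", "")
    prefix = f"{provider}/"
    if provider == "openrouter" and model.startswith(prefix):
        model = model[len(prefix):]
    return f"{provider}/{model}"


def api_base(llm: dict[str, Any]) -> Optional[str]:
    """Prefer an explicit api_base; otherwise use the OpenRouter default for that provider."""
    explicit = llm.get("api_base")
    if explicit:
        return explicit
    if llm.get("provider") == "openrouter":
        return OPENROUTER_API_BASE
    return None


print(preferred_model({"provider": "openrouter", "model": "openrouter/x-ai/grok-4.20-beta"}))
```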

@@ -0,0 +1,70 @@

|

||||

import os
import sys
from types import ModuleType, SimpleNamespace

from framework.credentials import key_storage
from framework.credentials.validation import ensure_credential_key_env


def _install_fake_aden_modules(monkeypatch, check_fn, credential_specs):
    shell_config_module = ModuleType("aden_tools.credentials.shell_config")
    shell_config_module.check_env_var_in_shell_config = check_fn

    credentials_module = ModuleType("aden_tools.credentials")
    credentials_module.CREDENTIAL_SPECS = credential_specs

    monkeypatch.setitem(sys.modules, "aden_tools.credentials.shell_config", shell_config_module)
    monkeypatch.setitem(sys.modules, "aden_tools.credentials", credentials_module)


def test_bootstrap_loads_configured_llm_env_var_from_shell_config(monkeypatch):
    monkeypatch.setattr(key_storage, "load_credential_key", lambda: None)
    monkeypatch.setattr(key_storage, "load_aden_api_key", lambda: None)
    monkeypatch.setattr(
        "framework.config.get_hive_config",
        lambda: {"llm": {"api_key_env_var": "OPENROUTER_API_KEY"}},
    )
    monkeypatch.delenv("OPENROUTER_API_KEY", raising=False)
    monkeypatch.delenv("ANTHROPIC_API_KEY", raising=False)

    calls = []

    def check_env(var_name):
        calls.append(var_name)
        if var_name == "OPENROUTER_API_KEY":
            return True, "or-key-123"
        return False, None

    _install_fake_aden_modules(
        monkeypatch,
        check_env,
        {"anthropic": SimpleNamespace(env_var="ANTHROPIC_API_KEY")},
    )

    ensure_credential_key_env()

    assert os.environ.get("OPENROUTER_API_KEY") == "or-key-123"
    assert "OPENROUTER_API_KEY" in calls


def test_bootstrap_does_not_override_existing_configured_llm_env_var(monkeypatch):
    monkeypatch.setattr(key_storage, "load_credential_key", lambda: None)
    monkeypatch.setattr(key_storage, "load_aden_api_key", lambda: None)
    monkeypatch.setattr(
        "framework.config.get_hive_config",
        lambda: {"llm": {"api_key_env_var": "OPENROUTER_API_KEY"}},
    )
    monkeypatch.setenv("OPENROUTER_API_KEY", "already-set")

    calls = []

    def check_env(var_name):
        calls.append(var_name)
        return True, "new-value-should-not-apply"

    _install_fake_aden_modules(monkeypatch, check_env, {})

    ensure_credential_key_env()

    assert os.environ.get("OPENROUTER_API_KEY") == "already-set"
    assert "OPENROUTER_API_KEY" not in calls

|

||||
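The two bootstrap tests above describe a "fill only if missing" rule: a configured env var is loaded from the shell config when absent, and the lookup is skipped entirely when the variable is already set. A small sketch of that rule follows; `bootstrap_env_var` and its `lookup` callback (returning a `(found, value)` pair, as the fake `check_env` does) are hypothetical and do not reproduce `ensure_credential_key_env`.

```python
import os
from typing import Callable, MutableMapping, Optional, Tuple

Lookup = Callable[[str], Tuple[bool, Optional[str]]]


def bootstrap_env_var(
    name: str,
    lookup: Lookup,
    env: Optional[MutableMapping[str, str]] = None,
) -> bool:
    """Populate env[name] from lookup() unless it is already set. Returns True if set here."""
    env = os.environ if env is None else env
    if env.get(name):
        return False  # existing value wins; do not even consult the lookup
    found, value = lookup(name)
    if found and value:
        env[name] = value
        return True
    return False


seen: list[str] = []


def fake_lookup(var_name: str) -> Tuple[bool, Optional[str]]:
    seen.append(var_name)
    return (True, "or-key-123") if var_name == "OPENROUTER_API_KEY" else (False, None)


fresh_env: dict[str, str] = {}
print(bootstrap_env_var("OPENROUTER_API_KEY", fake_lookup, fresh_env), fresh_env)
```

Passing a plain dict as `env` keeps the example free of side effects on the real process environment.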

@@ -1530,6 +1530,34 @@ class TestTransientErrorRetry:

|

||||

await node.execute(ctx)

|

||||

assert llm._call_index == 1 # only tried once

|

||||

|

||||

@pytest.mark.asyncio

|

||||

async def test_client_facing_non_transient_error_does_not_crash(

|

||||