Compare commits

159 Commits

| Author | SHA1 | Date | |

|---|---|---|---|

| 736756b257 | |||

| 90efe7009d | |||

| 4adb369bde | |||

| d4a30eb2f3 | |||

| 94bb4a2984 | |||

| 648bad26ed | |||

| f0c7470f3d | |||

| fe533b72a6 | |||

| e581767cab | |||

| 0663ee5950 | |||

| 4b97baa34b | |||

| a89296d397 | |||

| d568912ba2 | |||

| c4d7980058 | |||

| 8549fe8238 | |||

| 2b8d85bb95 | |||

| 07f7801166 | |||

| 1f12a45151 | |||

| 936e02e8e6 | |||

| d59fe1e109 | |||

| 274318d3e5 | |||

| 0f0884c2e0 | |||

| 764012c598 | |||

| fd4dc1a69a | |||

| 377cd39c2a | |||

| e92caeef24 | |||

| b7e6226478 | |||

| a995818db2 | |||

| 0772b4d300 | |||

| 684e0d8dc6 | |||

| d284c5d790 | |||

| 7a9b9666c4 | |||

| a852cb91bf | |||

| 2f21e9eb4b | |||

| 8390ef8731 | |||

| 8d21479c24 | |||

| 965dec3ba1 | |||

| d4b54446be | |||

| 7992b862c2 | |||

| 44b3e0eaa2 | |||

| f480fc2b94 | |||

| 2844dbf19f | |||

| 22b7e4b0c3 | |||

| 5413833a69 | |||

| 02e1a4584a | |||

| 520840b1dd | |||

| ee96147336 | |||

| 705cef4dc1 | |||

| ab26e64122 | |||

| f365e219cb | |||

| 01621881c2 | |||

| f7639f8572 | |||

| fc643060ce | |||

| 9aebeb181e | |||

| acbbfaaa79 | |||

| bf170bce10 | |||

| 0a090d058b | |||

| 47bfadaad9 | |||

| d968dcd44c | |||

| 6fdaa9ea50 | |||

| 4d251fbdc2 | |||

| 6acceed288 | |||

| 8dd1d6e3aa | |||

| 1da28644a6 | |||

| 6452fe7fef | |||

| acff008bd2 | |||

| 651d6850a1 | |||

| c7fdc92594 | |||

| 43602a8801 | |||

| 3da04265a6 | |||

| 4c98f0d2d0 | |||

| d84c3364d0 | |||

| ae921f6cee | |||

| 6b506a1c08 | |||

| 0c9f4fa97e | |||

| 95e30bc607 | |||

| 0f1f0090b0 | |||

| c0da3bec02 | |||

| 9dadb5264d | |||

| e39e6a75cc | |||

| 23c66d1059 | |||

| b9d529d94e | |||

| 1c9b09fb78 | |||

| 9fb14f23d2 | |||

| 4795dc4f68 | |||

| acf0f804c5 | |||

| 4e2951854b | |||

| 80dfb429d7 | |||

| 9c0ba77e22 | |||

| 46b4651073 | |||

| 86dd5246c6 | |||

| a1227c88ee | |||

| 535d7ab568 | |||

| af10494b31 | |||

| 39c1042827 | |||

| 16e7dc11f4 | |||

| 7a27babefd | |||

| d53ae9d51d | |||

| 910cf7727d | |||

| 1698605f15 | |||

| eda124a123 | |||

| 15e9ce8d2f | |||

| c01dd603d7 | |||

| 9d5157d69f | |||

| d78795bdf5 | |||

| ff2b7f473e | |||

| 73c9a91811 | |||

| 27b765d902 | |||

| fddba419be | |||

| f42d6308e8 | |||

| c167002754 | |||

| ea26ee7d0c | |||

| 5280e908b2 | |||

| 1c5dd8c664 | |||

| 3aca153be5 | |||

| 65c8e1653c | |||

| 58e4fa918c | |||

| 3af13d3f90 | |||

| b799789dbe | |||

| 2cd73dfccc | |||

| 57d77d5479 | |||

| 5814021773 | |||

| 4f4cc9c8ce | |||

| d9c840eee5 | |||

| d2eb86e534 | |||

| 03842353e4 | |||

| 48747e20af | |||

| 58af593af6 | |||

| 450575a927 | |||

| eac2bb19b2 | |||

| 756a815bf0 | |||

| 23a7b080eb | |||

| bf39bcdec9 | |||

| 0276632491 | |||

| ae2993d0d1 | |||

| d14d71f760 | |||

| 738641d35f | |||

| 22f5534f08 | |||

| b79e7eca73 | |||

| 28250dc45e | |||

| fe5df6a87a | |||

| 88253883a3 | |||

| ff7b5c7e27 | |||

| 6ed6e5b286 | |||

| 30bb0ad5d8 | |||

| cb0845f5ba | |||

| ce2525b59c | |||

| 1f77ec3831 | |||

| 6ab5aa8004 | |||

| 4449cd8ee8 | |||

| 8b60c03a0a | |||

| 0e98023e40 | |||

| 22bb07f00e | |||

| 660f883197 | |||

| 988de80b66 | |||

| dc6aa226ee | |||

| 48a54b4ee2 | |||

| a7b6b080ab | |||

| 9202cbd4d4 |

@@ -2,14 +2,22 @@ name: Bounty completed

|

||||

description: Awards points and notifies Discord when a bounty PR is merged

|

||||

|

||||

on:

|

||||

pull_request:

|

||||

pull_request_target:

|

||||

types: [closed]

|

||||

|

||||

workflow_dispatch:

|

||||

inputs:

|

||||

pr_number:

|

||||

description: "PR number to process (for missed bounties)"

|

||||

required: true

|

||||

type: number

|

||||

|

||||

jobs:

|

||||

bounty-notify:

|

||||

if: >

|

||||

github.event.pull_request.merged == true &&

|

||||

contains(join(github.event.pull_request.labels.*.name, ','), 'bounty:')

|

||||

github.event_name == 'workflow_dispatch' ||

|

||||

(github.event.pull_request.merged == true &&

|

||||

contains(join(github.event.pull_request.labels.*.name, ','), 'bounty:'))

|

||||

runs-on: ubuntu-latest

|

||||

timeout-minutes: 5

|

||||

permissions:

|

||||

@@ -32,6 +40,8 @@ jobs:

|

||||

GITHUB_REPOSITORY_OWNER: ${{ github.repository_owner }}

|

||||

GITHUB_REPOSITORY_NAME: ${{ github.event.repository.name }}

|

||||

DISCORD_WEBHOOK_URL: ${{ secrets.DISCORD_BOUNTY_WEBHOOK_URL }}

|

||||

BOT_API_URL: ${{ secrets.BOT_API_URL }}

|

||||

BOT_API_KEY: ${{ secrets.BOT_API_KEY }}

|

||||

LURKR_API_KEY: ${{ secrets.LURKR_API_KEY }}

|

||||

LURKR_GUILD_ID: ${{ secrets.LURKR_GUILD_ID }}

|

||||

PR_NUMBER: ${{ github.event.pull_request.number }}

|

||||

PR_NUMBER: ${{ inputs.pr_number || github.event.pull_request.number }}

|

||||

|

||||

@@ -1,126 +0,0 @@

|

||||

name: Link Discord account

|

||||

description: Auto-creates a PR to add contributor to contributors.yml when a link-discord issue is opened

|

||||

|

||||

on:

|

||||

issues:

|

||||

types: [opened]

|

||||

|

||||

jobs:

|

||||

link-discord:

|

||||

if: contains(github.event.issue.labels.*.name, 'link-discord')

|

||||

runs-on: ubuntu-latest

|

||||

timeout-minutes: 2

|

||||

permissions:

|

||||

contents: write

|

||||

issues: write

|

||||

pull-requests: write

|

||||

|

||||

steps:

|

||||

- name: Checkout repository

|

||||

uses: actions/checkout@v4

|

||||

|

||||

- name: Parse issue and update contributors.yml

|

||||

uses: actions/github-script@v7

|

||||

with:

|

||||

script: |

|

||||

const fs = require('fs');

|

||||

|

||||

const issue = context.payload.issue;

|

||||

const githubUsername = issue.user.login;

|

||||

|

||||

// Parse the issue body for form fields

|

||||

const body = issue.body || '';

|

||||

|

||||

// Extract Discord ID — look for the numeric value after the "Discord User ID" heading

|

||||

const discordMatch = body.match(/### Discord User ID\s*\n\s*(\d{17,20})/);

|

||||

if (!discordMatch) {

|

||||

await github.rest.issues.createComment({

|

||||

...context.repo,

|

||||

issue_number: issue.number,

|

||||

body: `Could not find a valid Discord ID in the issue body. Please make sure you entered a numeric ID (17-20 digits), not a username.\n\nExample: \`123456789012345678\``

|

||||

});

|

||||

await github.rest.issues.update({

|

||||

...context.repo,

|

||||

issue_number: issue.number,

|

||||

state: 'closed',

|

||||

state_reason: 'not_planned'

|

||||

});

|

||||

return;

|

||||

}

|

||||

const discordId = discordMatch[1];

|

||||

|

||||

// Extract display name (optional)

|

||||

const nameMatch = body.match(/### Display Name \(optional\)\s*\n\s*(.+)/);

|

||||

const displayName = nameMatch ? nameMatch[1].trim() : '';

|

||||

|

||||

// Check if user already exists

|

||||

const yml = fs.readFileSync('contributors.yml', 'utf-8');

|

||||

if (yml.includes(`github: ${githubUsername}`)) {

|

||||

await github.rest.issues.createComment({

|

||||

...context.repo,

|

||||

issue_number: issue.number,

|

||||

body: `@${githubUsername} is already in \`contributors.yml\`. If you need to update your Discord ID, please edit the file directly via PR.`

|

||||

});

|

||||

await github.rest.issues.update({

|

||||

...context.repo,

|

||||

issue_number: issue.number,

|

||||

state: 'closed',

|

||||

state_reason: 'completed'

|

||||

});

|

||||

return;

|

||||

}

|

||||

|

||||

// Append entry to contributors.yml

|

||||

let entry = ` - github: ${githubUsername}\n discord: "${discordId}"`;

|

||||

if (displayName && displayName !== '_No response_') {

|

||||

entry += `\n name: ${displayName}`;

|

||||

}

|

||||

entry += '\n';

|

||||

|

||||

const updated = yml.trimEnd() + '\n' + entry;

|

||||

fs.writeFileSync('contributors.yml', updated);

|

||||

|

||||

// Export environment variables for the later steps (Create PR, Notify on issue)

|

||||

core.exportVariable('GITHUB_USERNAME', githubUsername);

|

||||

core.exportVariable('DISCORD_ID', discordId);

|

||||

core.exportVariable('ISSUE_NUMBER', issue.number.toString());

|

||||

|

||||

- name: Create PR

|

||||

run: |

|

||||

# Check if there are changes

|

||||

if git diff --quiet contributors.yml; then

|

||||

echo "No changes to contributors.yml"

|

||||

exit 0

|

||||

fi

|

||||

|

||||

BRANCH="docs/link-discord-${GITHUB_USERNAME}"

|

||||

git config user.name "github-actions[bot]"

|

||||

git config user.email "41898282+github-actions[bot]@users.noreply.github.com"

|

||||

git checkout -b "$BRANCH"

|

||||

git add contributors.yml

|

||||

git commit -m "docs: link @${GITHUB_USERNAME} to Discord"

|

||||

git push origin "$BRANCH"

|

||||

|

||||

gh pr create \

|

||||

--title "docs: link @${GITHUB_USERNAME} to Discord" \

|

||||

--body "Adds @${GITHUB_USERNAME} (Discord \`${DISCORD_ID}\`) to \`contributors.yml\` for bounty XP tracking.

|

||||

|

||||

Closes #${ISSUE_NUMBER}" \

|

||||

--base main \

|

||||

--head "$BRANCH" \

|

||||

--label "link-discord"

|

||||

env:

|

||||

GH_TOKEN: ${{ secrets.GITHUB_TOKEN }}

|

||||

|

||||

- name: Notify on issue

|

||||

uses: actions/github-script@v7

|

||||

with:

|

||||

script: |

|

||||

const username = process.env.GITHUB_USERNAME;

|

||||

const issueNumber = parseInt(process.env.ISSUE_NUMBER);

|

||||

|

||||

await github.rest.issues.createComment({

|

||||

...context.repo,

|

||||

issue_number: issueNumber,

|

||||

body: `A PR has been created to link your account. A maintainer will merge it shortly — once merged, you'll receive XP and Discord pings when your bounty PRs are merged.`

|

||||

});

|

||||
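The issue-parsing step of the removed workflow can be sketched in Python for readers who want to try the patterns outside of GitHub Actions. This is a minimal sketch, not the project's code: the function name `parse_link_request` and its return shape are assumptions made for illustration, and the patterns are the workflow's JavaScript regexes with `\d` written as `[0-9]` so that only ASCII digits match.

```python
import re

# Patterns mirror the workflow's JavaScript regexes (hypothetical Python port).
DISCORD_ID_RE = re.compile(r"### Discord User ID\s*\n\s*([0-9]{17,20})")
DISPLAY_NAME_RE = re.compile(r"### Display Name \(optional\)\s*\n\s*(.+)")


def parse_link_request(body):
    """Return (discord_id, display_name) from an issue-form body.

    discord_id is None when no 17-20 digit ID follows the heading;
    display_name is None when the field is missing or left empty.
    """
    id_match = DISCORD_ID_RE.search(body or "")
    if id_match is None:
        return None, None

    name_match = DISPLAY_NAME_RE.search(body)
    name = name_match.group(1).strip() if name_match else ""
    # GitHub issue forms render an empty optional field as "_No response_".
    if not name or name == "_No response_":
        name = None
    return id_match.group(1), name
```

Like the original regex, the pattern accepts the first 17 to 20 digits it finds after the heading, so it does not by itself reject a longer run of digits.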

@@ -35,6 +35,8 @@ jobs:

|

||||

GITHUB_REPOSITORY_OWNER: ${{ github.repository_owner }}

|

||||

GITHUB_REPOSITORY_NAME: ${{ github.event.repository.name }}

|

||||

DISCORD_WEBHOOK_URL: ${{ secrets.DISCORD_BOUNTY_WEBHOOK_URL }}

|

||||

BOT_API_URL: ${{ secrets.BOT_API_URL }}

|

||||

BOT_API_KEY: ${{ secrets.BOT_API_KEY }}

|

||||

LURKR_API_KEY: ${{ secrets.LURKR_API_KEY }}

|

||||

LURKR_GUILD_ID: ${{ secrets.LURKR_GUILD_ID }}

|

||||

SINCE_DATE: ${{ github.event.inputs.since_date || '' }}

|

||||

|

||||

@@ -68,7 +68,6 @@ temp/

|

||||

exports/*

|

||||

|

||||

.claude/settings.local.json

|

||||

.claude/skills/ship-it/

|

||||

|

||||

.venv

|

||||

|

||||

|

||||

@@ -1,4 +1,11 @@
-.PHONY: lint format check test install-hooks help frontend-install frontend-dev frontend-build
+.PHONY: lint format check test test-tools test-live test-all install-hooks help frontend-install frontend-dev frontend-build
 
+# ── Ensure uv is findable in Git Bash on Windows ──────────────────────────────
+# uv installs to ~/.local/bin on Windows/Linux/macOS. Git Bash may not include
+# this in PATH by default, so we prepend it here.
+export PATH := $(HOME)/.local/bin:$(PATH)
+
+# ── Targets ───────────────────────────────────────────────────────────────────
+
 help: ## Show this help
 	@grep -E '^[a-zA-Z_-]+:.*?## .*$$' $(MAKEFILE_LIST) | \

@@ -46,4 +53,4 @@ frontend-dev: ## Start frontend dev server

|

||||

cd core/frontend && npm run dev

|

||||

|

||||

frontend-build: ## Build frontend for production

|

||||

cd core/frontend && npm run build

|

||||

cd core/frontend && npm run build

|

||||

@@ -41,7 +41,9 @@ Generate a swarm of worker agents with a coding agent(queen) that control them.

|

||||

|

||||

Visit [adenhq.com](https://adenhq.com) for complete documentation, examples, and guides.

|

||||

|

||||

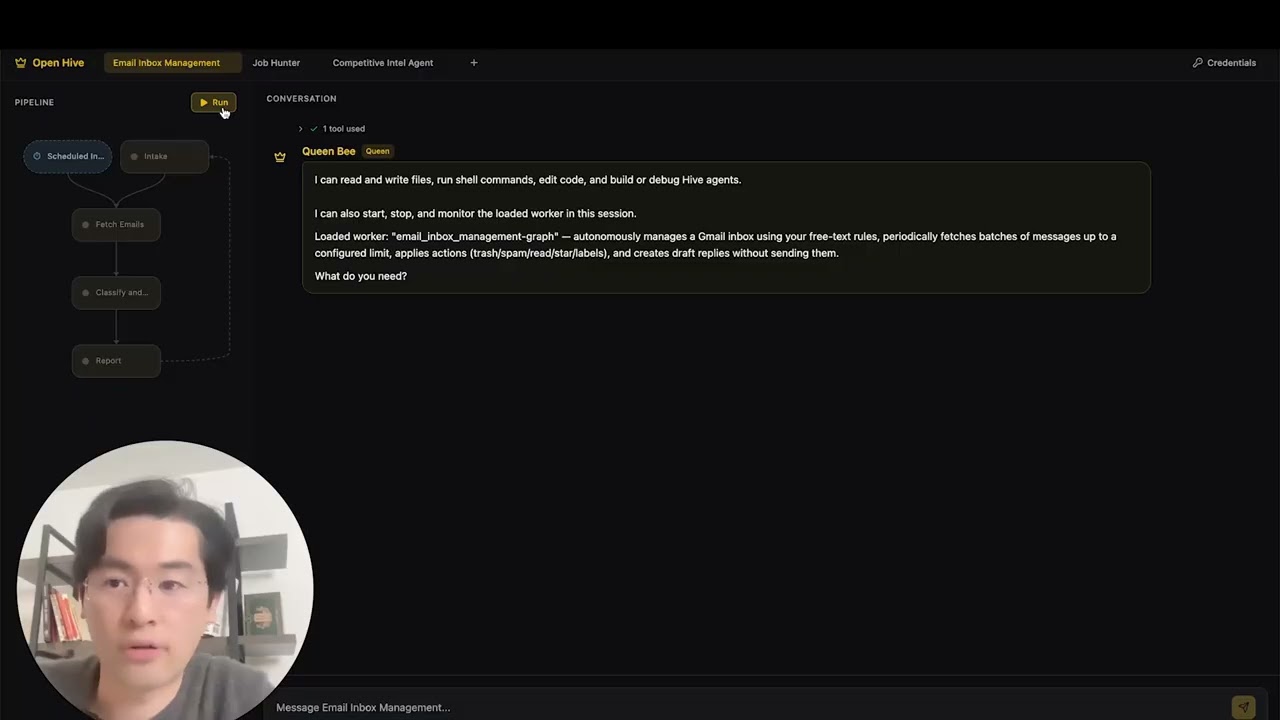

[](https://www.youtube.com/watch?v=XDOG9fOaLjU)

|

||||

|

||||

https://github.com/user-attachments/assets/bf10edc3-06ba-48b6-98ba-d069b15fb69d

|

||||

|

||||

|

||||

## Who Is Hive For?

|

||||

|

||||

|

||||

@@ -1,31 +0,0 @@

|

||||

perf: reduce subprocess spawning in quickstart scripts (#4427)

## Problem
Windows process creation (CreateProcess) is 10-100x slower than Linux fork/exec.
The quickstart scripts were spawning 4+ separate `uv run python -c "import X"`
processes to verify imports, adding ~600ms overhead on Windows.

## Solution
Consolidated all import checks into a single batch script that checks multiple
modules in one subprocess call, reducing spawn overhead by ~75%.

## Changes
- **New**: `scripts/check_requirements.py` - Batched import checker
- **New**: `scripts/test_check_requirements.py` - Test suite
- **New**: `scripts/benchmark_quickstart.ps1` - Performance benchmark tool
- **Modified**: `quickstart.ps1` - Updated import verification (2 sections)
- **Modified**: `quickstart.sh` - Updated import verification

## Performance Impact
**Benchmark results on Windows:**
- Before: ~19.8 seconds for import checks
- After: ~4.9 seconds for import checks
- **Improvement: 14.9 seconds saved (75.2% faster)**

## Testing
- ✅ All functional tests pass (`scripts/test_check_requirements.py`)
- ✅ Quickstart scripts work correctly on Windows
- ✅ Error handling verified (invalid imports reported correctly)
- ✅ Performance benchmark confirms 75%+ improvement

Fixes #4427
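The batching idea in the commit message above can be illustrated with a short Python sketch. It assumes the checker simply imports every requested module inside one interpreter process; the function name `check_imports` and its return shape are hypothetical, and the real `scripts/check_requirements.py` is not shown in this diff and may work differently.

```python
import importlib


def check_imports(modules):
    """Import each module in the current process.

    Returns a dict mapping module name to None on success, or to a short
    error string when the import fails.
    """
    results = {}
    for name in modules:
        try:
            importlib.import_module(name)
            results[name] = None
        except Exception as exc:  # ImportError or any failure at import time
            results[name] = f"{type(exc).__name__}: {exc}"
    return results


def failed(results):
    """Return the names of modules that could not be imported."""
    return sorted(name for name, err in results.items() if err is not None)
```

Running this once per quickstart, instead of launching one interpreter per module, means the process-creation cost is paid a single time regardless of how many modules are checked.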

@@ -1,27 +0,0 @@

|

||||

# Identity mapping: GitHub username -> Discord ID
#
# This file links GitHub accounts to Discord accounts for the
# Integration Bounty Program. When a bounty PR is merged, the
# GitHub Action uses this file to ping the contributor on Discord.
#
# HOW TO ADD YOURSELF:
# Open a "Link Discord Account" issue:
# https://github.com/aden-hive/hive/issues/new?template=link-discord.yml
# A GitHub Action will automatically add your entry here.
#
# To find your Discord ID:
# 1. Open Discord Settings > Advanced > Enable Developer Mode
# 2. Right-click your name > Copy User ID
#
# Format:
#   - github: your-github-username
#     discord: "your-discord-id" # quotes required (it's a number)
#     name: Your Display Name # optional

contributors:
  # - github: example-user
  #   discord: "123456789012345678"
  #   name: Example User
  - github: TimothyZhang7
    discord: "408460790061072384"
    name: Timothy@Aden

@@ -0,0 +1,583 @@

|

||||

#!/usr/bin/env python3

|

||||

"""Antigravity authentication CLI.

|

||||

|

||||

Implements OAuth2 flow for Google's Antigravity Code Assist gateway.

|

||||

Credentials are stored in ~/.hive/antigravity-accounts.json.

|

||||

|

||||

Usage:

|

||||

python -m antigravity_auth auth account add

|

||||

python -m antigravity_auth auth account list

|

||||

python -m antigravity_auth auth account remove <email>

|

||||

"""

|

||||

|

||||

from __future__ import annotations

|

||||

|

||||

import argparse

|

||||

import json

|

||||

import logging

|

||||

import os

|

||||

import secrets

|

||||

import socket

|

||||

import sys

|

||||

import time

|

||||

import urllib.parse

|

||||

import urllib.request

|

||||

import webbrowser

|

||||

from http.server import BaseHTTPRequestHandler, HTTPServer

|

||||

from pathlib import Path

|

||||

from typing import Any

|

||||

|

||||

logging.basicConfig(level=logging.INFO, format="%(message)s")

|

||||

logger = logging.getLogger(__name__)

|

||||

|

||||

# OAuth endpoints

|

||||

_OAUTH_AUTH_URL = "https://accounts.google.com/o/oauth2/v2/auth"

|

||||

_OAUTH_TOKEN_URL = "https://oauth2.googleapis.com/token"

|

||||

|

||||

# Scopes for Antigravity/Cloud Code Assist

|

||||

_OAUTH_SCOPES = [

|

||||

"https://www.googleapis.com/auth/cloud-platform",

|

||||

"https://www.googleapis.com/auth/userinfo.email",

|

||||

"https://www.googleapis.com/auth/userinfo.profile",

|

||||

]

|

||||

|

||||

# Credentials file path in ~/.hive/

|

||||

_ACCOUNTS_FILE = Path.home() / ".hive" / "antigravity-accounts.json"

|

||||

|

||||

# Default project ID

|

||||

_DEFAULT_PROJECT_ID = "rising-fact-p41fc"

|

||||

_DEFAULT_REDIRECT_PORT = 51121

|

||||

|

||||

# OAuth credentials fetched from the opencode-antigravity-auth project.

|

||||

# This project reverse-engineered and published the public OAuth credentials

|

||||

# for Google's Antigravity/Cloud Code Assist API.

|

||||

# Source: https://github.com/NoeFabris/opencode-antigravity-auth

|

||||

_CREDENTIALS_URL = (

|

||||

"https://raw.githubusercontent.com/NoeFabris/opencode-antigravity-auth/dev/src/constants.ts"

|

||||

)

|

||||

|

||||

# Cached credentials fetched from public source

|

||||

_cached_client_id: str | None = None

|

||||

_cached_client_secret: str | None = None

|

||||

|

||||

|

||||

def _fetch_credentials_from_public_source() -> tuple[str | None, str | None]:

|

||||

"""Fetch OAuth client ID and secret from the public npm package source on GitHub."""

|

||||

global _cached_client_id, _cached_client_secret

|

||||

if _cached_client_id and _cached_client_secret:

|

||||

return _cached_client_id, _cached_client_secret

|

||||

|

||||

try:

|

||||

req = urllib.request.Request(

|

||||

_CREDENTIALS_URL, headers={"User-Agent": "Hive-Antigravity-Auth/1.0"}

|

||||

)

|

||||

with urllib.request.urlopen(req, timeout=10) as resp:

|

||||

content = resp.read().decode("utf-8")

|

||||

import re

|

||||

|

||||

id_match = re.search(r'ANTIGRAVITY_CLIENT_ID\s*=\s*"([^"]+)"', content)

|

||||

secret_match = re.search(r'ANTIGRAVITY_CLIENT_SECRET\s*=\s*"([^"]+)"', content)

|

||||

if id_match:

|

||||

_cached_client_id = id_match.group(1)

|

||||

if secret_match:

|

||||

_cached_client_secret = secret_match.group(1)

|

||||

return _cached_client_id, _cached_client_secret

|

||||

except Exception as e:

|

||||

logger.debug(f"Failed to fetch credentials from public source: {e}")

|

||||

return None, None

|

||||

|

||||

|

||||

def get_client_id() -> str:

|

||||

"""Get OAuth client ID from env, config, or public source."""

|

||||

env_id = os.environ.get("ANTIGRAVITY_CLIENT_ID")

|

||||

if env_id:

|

||||

return env_id

|

||||

|

||||

# Try hive config

|

||||

hive_cfg = Path.home() / ".hive" / "configuration.json"

|

||||

if hive_cfg.exists():

|

||||

try:

|

||||

with open(hive_cfg) as f:

|

||||

cfg = json.load(f)

|

||||

cfg_id = cfg.get("llm", {}).get("antigravity_client_id")

|

||||

if cfg_id:

|

||||

return cfg_id

|

||||

except Exception:

|

||||

pass

|

||||

|

||||

# Fetch from public source

|

||||

client_id, _ = _fetch_credentials_from_public_source()

|

||||

if client_id:

|

||||

return client_id

|

||||

|

||||

raise RuntimeError("Could not obtain Antigravity OAuth client ID")

|

||||

|

||||

|

||||

def get_client_secret() -> str | None:

|

||||

"""Get OAuth client secret from env, config, or public source."""

|

||||

secret = os.environ.get("ANTIGRAVITY_CLIENT_SECRET")

|

||||

if secret:

|

||||

return secret

|

||||

|

||||

# Try to read from hive config

|

||||

hive_cfg = Path.home() / ".hive" / "configuration.json"

|

||||

if hive_cfg.exists():

|

||||

try:

|

||||

with open(hive_cfg) as f:

|

||||

cfg = json.load(f)

|

||||

secret = cfg.get("llm", {}).get("antigravity_client_secret")

|

||||

if secret:

|

||||

return secret

|

||||

except Exception:

|

||||

pass

|

||||

|

||||

# Fetch from public source (npm package on GitHub)

|

||||

_, secret = _fetch_credentials_from_public_source()

|

||||

return secret

|

||||

|

||||

|

||||

def find_free_port() -> int:

|

||||

"""Find an available local port."""

|

||||

with socket.socket(socket.AF_INET, socket.SOCK_STREAM) as s:

|

||||

s.bind(("", 0))

|

||||

s.listen(1)

|

||||

return s.getsockname()[1]

|

||||

|

||||

|

||||

class OAuthCallbackHandler(BaseHTTPRequestHandler):

|

||||

"""Handle OAuth callback from browser."""

|

||||

|

||||

auth_code: str | None = None

|

||||

state: str | None = None

|

||||

error: str | None = None

|

||||

|

||||

def log_message(self, format: str, *args: Any) -> None:

|

||||

pass # Suppress default logging

|

||||

|

||||

def do_GET(self) -> None:

|

||||

parsed = urllib.parse.urlparse(self.path)

|

||||

|

||||

if parsed.path == "/oauth-callback":

|

||||

query = urllib.parse.parse_qs(parsed.query)

|

||||

|

||||

if "error" in query:

|

||||

# Store on the class, like auth_code and state, so wait_for_callback can read it
OAuthCallbackHandler.error = query["error"][0]

|

||||

self._send_response("Authentication failed. You can close this window.")

|

||||

return

|

||||

|

||||

if "code" in query and "state" in query:

|

||||

OAuthCallbackHandler.auth_code = query["code"][0]

|

||||

OAuthCallbackHandler.state = query["state"][0]

|

||||

self._send_response(

|

||||

"Authentication successful! You can close this window "

|

||||

"and return to the terminal."

|

||||

)

|

||||

return

|

||||

|

||||

self._send_response("Waiting for authentication...")

|

||||

|

||||

def _send_response(self, message: str) -> None:

|

||||

self.send_response(200)

|

||||

self.send_header("Content-Type", "text/html")

|

||||

self.end_headers()

|

||||

html = f"""<!DOCTYPE html>

|

||||

<html>

|

||||

<head><title>Antigravity Auth</title></head>

|

||||

<body style="font-family: system-ui; display: flex; align-items: center;

|

||||

justify-content: center; height: 100vh; margin: 0; background: #1a1a2e;

|

||||

color: #eee;">

|

||||

<div style="text-align: center;">

|

||||

<h2>{message}</h2>

|

||||

</div>

|

||||

</body>

|

||||

</html>"""

|

||||

self.wfile.write(html.encode())

|

||||

|

||||

|

||||

def wait_for_callback(port: int, timeout: int = 300) -> tuple[str | None, str | None, str | None]:

|

||||

"""Start local server and wait for OAuth callback."""

|

||||

server = HTTPServer(("localhost", port), OAuthCallbackHandler)

|

||||

server.timeout = 1

|

||||

|

||||

start = time.time()

|

||||

while time.time() - start < timeout:

|

||||

if OAuthCallbackHandler.auth_code or OAuthCallbackHandler.error:

|

||||

return (

|

||||

OAuthCallbackHandler.auth_code,

|

||||

OAuthCallbackHandler.state,

|

||||

OAuthCallbackHandler.error,

|

||||

)

|

||||

server.handle_request()

|

||||

|

||||

return None, None, "timeout"

|

||||

|

||||

|

||||

def exchange_code_for_tokens(

|

||||

code: str, redirect_uri: str, client_id: str, client_secret: str | None

|

||||

) -> dict[str, Any] | None:

|

||||

"""Exchange authorization code for tokens."""

|

||||

data = {

|

||||

"code": code,

|

||||

"client_id": client_id,

|

||||

"redirect_uri": redirect_uri,

|

||||

"grant_type": "authorization_code",

|

||||

}

|

||||

if client_secret:

|

||||

data["client_secret"] = client_secret

|

||||

|

||||

body = urllib.parse.urlencode(data).encode()

|

||||

|

||||

req = urllib.request.Request(

|

||||

_OAUTH_TOKEN_URL,

|

||||

data=body,

|

||||

headers={"Content-Type": "application/x-www-form-urlencoded"},

|

||||

method="POST",

|

||||

)

|

||||

|

||||

try:

|

||||

with urllib.request.urlopen(req, timeout=30) as resp:

|

||||

return json.loads(resp.read())

|

||||

except Exception as e:

|

||||

logger.error(f"Token exchange failed: {e}")

|

||||

return None

|

||||

|

||||

|

||||

def get_user_email(access_token: str) -> str | None:

|

||||

"""Get user email from Google API."""

|

||||

req = urllib.request.Request(

|

||||

"https://www.googleapis.com/oauth2/v2/userinfo",

|

||||

headers={"Authorization": f"Bearer {access_token}"},

|

||||

)

|

||||

try:

|

||||

with urllib.request.urlopen(req, timeout=10) as resp:

|

||||

data = json.loads(resp.read())

|

||||

return data.get("email")

|

||||

except Exception:

|

||||

return None

|

||||

|

||||

|

||||

def load_accounts() -> dict[str, Any]:

|

||||

"""Load existing accounts from file."""

|

||||

if not _ACCOUNTS_FILE.exists():

|

||||

return {"schemaVersion": 4, "accounts": []}

|

||||

try:

|

||||

with open(_ACCOUNTS_FILE) as f:

|

||||

return json.load(f)

|

||||

except Exception:

|

||||

return {"schemaVersion": 4, "accounts": []}

|

||||

|

||||

|

||||

def save_accounts(data: dict[str, Any]) -> None:

|

||||

"""Save accounts to file."""

|

||||

_ACCOUNTS_FILE.parent.mkdir(parents=True, exist_ok=True)

|

||||

with open(_ACCOUNTS_FILE, "w") as f:

|

||||

json.dump(data, f, indent=2)

|

||||

logger.info(f"Saved credentials to {_ACCOUNTS_FILE}")

|

||||

|

||||

|

||||

def validate_credentials(access_token: str, project_id: str = _DEFAULT_PROJECT_ID) -> bool:

|

||||

"""Test if credentials work by making a simple API call to Antigravity.

|

||||

|

||||

Returns True if credentials are valid, False otherwise.

|

||||

"""

|

||||

endpoint = "https://daily-cloudcode-pa.sandbox.googleapis.com"

|

||||

body = {

|

||||

"project": project_id,

|

||||

"model": "gemini-3-flash",

|

||||

"request": {

|

||||

"contents": [{"role": "user", "parts": [{"text": "hi"}]}],

|

||||

"generationConfig": {"maxOutputTokens": 10},

|

||||

},

|

||||

"requestType": "agent",

|

||||

"userAgent": "antigravity",

|

||||

"requestId": "validation-test",

|

||||

}

|

||||

headers = {

|

||||

"Authorization": f"Bearer {access_token}",

|

||||

"Content-Type": "application/json",

|

||||

"User-Agent": (

|

||||

"Mozilla/5.0 (Macintosh; Intel Mac OS X 10_15_7) "

|

||||

"AppleWebKit/537.36 (KHTML, like Gecko) Antigravity/1.18.3"

|

||||

),

|

||||

"X-Goog-Api-Client": "google-cloud-sdk vscode_cloudshelleditor/0.1",

|

||||

}

|

||||

|

||||

try:

|

||||

req = urllib.request.Request(

|

||||

f"{endpoint}/v1internal:generateContent",

|

||||

data=json.dumps(body).encode("utf-8"),

|

||||

headers=headers,

|

||||

method="POST",

|

||||

)

|

||||

with urllib.request.urlopen(req, timeout=30) as resp:

|

||||

json.loads(resp.read())

|

||||

return True

|

||||

except Exception:

|

||||

return False

|

||||

|

||||

|

||||

def refresh_access_token(

|

||||

refresh_token: str, client_id: str, client_secret: str | None

|

||||

) -> dict | None:

|

||||

"""Refresh the access token using the refresh token."""

|

||||

data = {

|

||||

"grant_type": "refresh_token",

|

||||

"refresh_token": refresh_token,

|

||||

"client_id": client_id,

|

||||

}

|

||||

if client_secret:

|

||||

data["client_secret"] = client_secret

|

||||

|

||||

body = urllib.parse.urlencode(data).encode()

|

||||

req = urllib.request.Request(

|

||||

_OAUTH_TOKEN_URL,

|

||||

data=body,

|

||||

headers={"Content-Type": "application/x-www-form-urlencoded"},

|

||||

method="POST",

|

||||

)

|

||||

try:

|

||||

with urllib.request.urlopen(req, timeout=30) as resp:

|

||||

return json.loads(resp.read())

|

||||

except Exception as e:

|

||||

logger.debug(f"Token refresh failed: {e}")

|

||||

return None

|

||||

|

||||

|

||||

def cmd_account_add(args: argparse.Namespace) -> int:

|

||||

"""Add a new Antigravity account via OAuth2.

|

||||

|

||||

First checks if valid credentials already exist. If so, validates them

|

||||

and skips OAuth if they work. Otherwise, proceeds with OAuth flow.

|

||||

"""

|

||||

client_id = get_client_id()

|

||||

client_secret = get_client_secret()

|

||||

|

||||

# Check if credentials already exist

|

||||

accounts_data = load_accounts()

|

||||

accounts = accounts_data.get("accounts", [])

|

||||

|

||||

if accounts:

|

||||

account = next((a for a in accounts if a.get("enabled", True) is not False), accounts[0])

|

||||

access_token = account.get("access")

|

||||

refresh_token_str = account.get("refresh", "")

|

||||

refresh_token = refresh_token_str.split("|")[0] if refresh_token_str else None

|

||||

project_id = (

|

||||

refresh_token_str.split("|")[1] if "|" in refresh_token_str else _DEFAULT_PROJECT_ID

|

||||

)

|

||||

email = account.get("email", "unknown")

|

||||

expires_ms = account.get("expires", 0)

|

||||

expires_at = expires_ms / 1000.0 if expires_ms else 0.0

|

||||

|

||||

# Reuse the stored token only if it is more than 60 seconds away from expiry

|

||||

if access_token and expires_at and time.time() < expires_at - 60:

|

||||

# Token still valid, test it

|

||||

logger.info(f"Found existing credentials for: {email}")

|

||||

logger.info("Validating existing credentials...")

|

||||

if validate_credentials(access_token, project_id):

|

||||

logger.info("✓ Credentials valid! Skipping OAuth.")

|

||||

return 0

|

||||

else:

|

||||

logger.info("Credentials failed validation, refreshing...")

|

||||

elif refresh_token:

|

||||

logger.info(f"Found expired credentials for: {email}")

|

||||

logger.info("Attempting token refresh...")

|

||||

|

||||

tokens = refresh_access_token(refresh_token, client_id, client_secret)

|

||||

if tokens:

|

||||

new_access = tokens.get("access_token")

|

||||

expires_in = tokens.get("expires_in", 3600)

|

||||

if new_access:

|

||||

# Update the account

|

||||

account["access"] = new_access

|

||||

account["expires"] = int((time.time() + expires_in) * 1000)

|

||||

accounts_data["last_refresh"] = time.strftime(

|

||||

"%Y-%m-%dT%H:%M:%SZ", time.gmtime()

|

||||

)

|

||||

save_accounts(accounts_data)

|

||||

|

||||

# Validate the refreshed token

|

||||

logger.info("Validating refreshed credentials...")

|

||||

if validate_credentials(new_access, project_id):

|

||||

logger.info("✓ Credentials refreshed and validated!")

|

||||

return 0

|

||||

else:

|

||||

logger.info("Refreshed token failed validation, proceeding with OAuth...")

|

||||

else:

|

||||

logger.info("Token refresh failed, proceeding with OAuth...")

|

||||

|

||||

    # No valid credentials, proceed with OAuth
    if not client_secret:
        logger.warning(
            "No client secret configured. Token refresh may fail.\n"
            "Set ANTIGRAVITY_CLIENT_SECRET env var or add "
            "'antigravity_client_secret' to ~/.hive/configuration.json"
        )

    # Use fixed port and path matching Google's expected OAuth redirect URI
    port = _DEFAULT_REDIRECT_PORT
    redirect_uri = f"http://localhost:{port}/oauth-callback"

    # Generate state for CSRF protection
    state = secrets.token_urlsafe(16)

    # Build authorization URL
    params = {
        "client_id": client_id,
        "redirect_uri": redirect_uri,
        "response_type": "code",
        "scope": " ".join(_OAUTH_SCOPES),
        "state": state,
        "access_type": "offline",
        "prompt": "consent",
    }
    auth_url = f"{_OAUTH_AUTH_URL}?{urllib.parse.urlencode(params)}"

    logger.info("Opening browser for authentication...")
    logger.info(f"If the browser doesn't open, visit: {auth_url}\n")

    # Open browser
    webbrowser.open(auth_url)

    # Wait for callback
    logger.info(f"Listening for callback on port {port}...")
    code, received_state, error = wait_for_callback(port)

    if error:
        logger.error(f"Authentication failed: {error}")
        return 1

    if not code:
        logger.error("No authorization code received")
        return 1

    if received_state != state:
        logger.error("State mismatch - possible CSRF attack")
        return 1

    # Exchange code for tokens
    logger.info("Exchanging authorization code for tokens...")
    tokens = exchange_code_for_tokens(code, redirect_uri, client_id, client_secret)

    if not tokens:
        return 1

    access_token = tokens.get("access_token")
    refresh_token = tokens.get("refresh_token")
    expires_in = tokens.get("expires_in", 3600)

    if not access_token:
        logger.error("No access token in response")
        return 1

    # Get user email
    email = get_user_email(access_token)
    if email:
        logger.info(f"Authenticated as: {email}")

    # Load existing accounts and add/update
    accounts_data = load_accounts()
    accounts = accounts_data.get("accounts", [])

    # Build new account entry (V4 schema)
    expires_ms = int((time.time() + expires_in) * 1000)
    refresh_entry = f"{refresh_token}|{_DEFAULT_PROJECT_ID}"

    new_account = {
        "access": access_token,
        "refresh": refresh_entry,
        "expires": expires_ms,
        "email": email,
        "enabled": True,
    }

    # Update existing account or add new one
    existing_idx = next((i for i, a in enumerate(accounts) if a.get("email") == email), None)
    if existing_idx is not None:
        accounts[existing_idx] = new_account
        logger.info(f"Updated existing account: {email}")
    else:
        accounts.append(new_account)
        logger.info(f"Added new account: {email}")

    accounts_data["accounts"] = accounts
    accounts_data["schemaVersion"] = 4
    accounts_data["last_refresh"] = time.strftime("%Y-%m-%dT%H:%M:%SZ", time.gmtime())

    save_accounts(accounts_data)
    logger.info("\n✓ Authentication complete!")
    return 0

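The OAuth portion of `cmd_account_add` generates a random `state`, embeds it in the authorization URL, and rejects the callback when the returned value differs. The following is a minimal, self-contained sketch of that pattern, not the code from this change. `AUTH_URL`, `build_auth_url`, and `state_matches` are hypothetical names introduced for illustration, and the constant-time comparison is a swapped-in hardening choice rather than what the diff does (it uses `!=`).

```python
from __future__ import annotations

import hmac
import secrets
import urllib.parse

# Hypothetical endpoint, for illustration only.
AUTH_URL = "https://auth.example.com/authorize"


def build_auth_url(client_id: str, redirect_uri: str, scopes: list[str]) -> tuple[str, str]:
    """Return (authorization URL, state) for an authorization-code flow."""
    state = secrets.token_urlsafe(16)  # unguessable value tied to this login attempt
    params = {
        "client_id": client_id,
        "redirect_uri": redirect_uri,
        "response_type": "code",
        "scope": " ".join(scopes),
        "state": state,
    }
    return f"{AUTH_URL}?{urllib.parse.urlencode(params)}", state


def state_matches(expected: str, received: str | None) -> bool:
    """Compare in constant time; a missing state never matches."""
    if received is None:
        return False
    return hmac.compare_digest(expected.encode(), received.encode())
```

The caller keeps `state` in memory until the redirect arrives and aborts the login when `state_matches` returns `False`.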

def cmd_account_list(args: argparse.Namespace) -> int:
    """List all stored accounts."""
    data = load_accounts()
    accounts = data.get("accounts", [])

    if not accounts:
        logger.info("No accounts configured.")
        logger.info("Run 'antigravity auth account add' to add one.")
        return 0

    logger.info("Configured accounts:\n")
    for i, account in enumerate(accounts, 1):
        email = account.get("email", "unknown")
        enabled = "enabled" if account.get("enabled", True) else "disabled"
        logger.info(f" {i}. {email} ({enabled})")

    return 0


def cmd_account_remove(args: argparse.Namespace) -> int:
    """Remove an account by email."""
    email = args.email
    data = load_accounts()
    accounts = data.get("accounts", [])

    original_len = len(accounts)
    accounts = [a for a in accounts if a.get("email") != email]

    if len(accounts) == original_len:
        logger.error(f"No account found with email: {email}")
        return 1

    data["accounts"] = accounts
    save_accounts(data)
    logger.info(f"Removed account: {email}")
    return 0


def main() -> int:
    parser = argparse.ArgumentParser(
        description="Antigravity authentication CLI",
        formatter_class=argparse.RawDescriptionHelpFormatter,
    )
    subparsers = parser.add_subparsers(dest="command", help="Commands")

    # auth account add
    auth_parser = subparsers.add_parser("auth", help="Authentication commands")
    auth_subparsers = auth_parser.add_subparsers(dest="auth_command")

    account_parser = auth_subparsers.add_parser("account", help="Account management")
    account_subparsers = account_parser.add_subparsers(dest="account_command")

    add_parser = account_subparsers.add_parser("add", help="Add a new account via OAuth2")
    add_parser.set_defaults(func=cmd_account_add)

    list_parser = account_subparsers.add_parser("list", help="List configured accounts")
    list_parser.set_defaults(func=cmd_account_list)

    remove_parser = account_subparsers.add_parser("remove", help="Remove an account")
    remove_parser.add_argument("email", help="Email of account to remove")
    remove_parser.set_defaults(func=cmd_account_remove)

    args = parser.parse_args()

    if hasattr(args, "func"):
        return args.func(args)

    parser.print_help()
    return 0


if __name__ == "__main__":
    sys.exit(main())
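`main()` nests three levels of subparsers (`auth` > `account` > `add|list|remove`) and dispatches through `set_defaults(func=...)`. A reduced sketch of that dispatch pattern follows. The `demo` program name, `cmd_remove`, `build_parser`, and `dispatch` are hypothetical and exist only to make the example runnable on its own.

```python
import argparse


def cmd_remove(args: argparse.Namespace) -> int:
    # Hypothetical handler; a real one would edit the account store.
    print(f"would remove {args.email}")
    return 0


def build_parser() -> argparse.ArgumentParser:
    parser = argparse.ArgumentParser(prog="demo")
    top = parser.add_subparsers(dest="command")
    auth = top.add_parser("auth").add_subparsers(dest="auth_command")
    account = auth.add_parser("account").add_subparsers(dest="account_command")
    remove = account.add_parser("remove")
    remove.add_argument("email")
    remove.set_defaults(func=cmd_remove)  # leaf parser carries its handler
    return parser


def dispatch(argv: list[str]) -> int:
    parser = build_parser()
    args = parser.parse_args(argv)
    func = getattr(args, "func", None)
    if func is None:
        # Incomplete command such as "auth account": show help instead of failing.
        parser.print_help()
        return 0
    return func(args)
```

Because only leaf parsers set `func`, an incomplete command line falls through to the help text, which matches the `hasattr(args, "func")` check in the original.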

@@ -23,25 +23,56 @@ class AgentEntry:
     last_active: str | None = None


-def _get_last_active(agent_name: str) -> str | None:
-    """Return the most recent updated_at timestamp across all sessions."""
-    sessions_dir = Path.home() / ".hive" / "agents" / agent_name / "sessions"
-    if not sessions_dir.exists():
-        return None
+def _get_last_active(agent_path: Path) -> str | None:
+    """Return the most recent updated_at timestamp across all sessions.
+
+    Checks both worker sessions (``~/.hive/agents/{name}/sessions/``) and
+    queen sessions (``~/.hive/queen/session/``) whose ``meta.json`` references
+    the same *agent_path*.
+    """
+    from datetime import datetime
+
+    agent_name = agent_path.name
     latest: str | None = None
-    for session_dir in sessions_dir.iterdir():
-        if not session_dir.is_dir() or not session_dir.name.startswith("session_"):
-            continue
-        state_file = session_dir / "state.json"
-        if not state_file.exists():
-            continue
-        try:
-            data = json.loads(state_file.read_text(encoding="utf-8"))
-            ts = data.get("timestamps", {}).get("updated_at")
-            if ts and (latest is None or ts > latest):
-                latest = ts
-        except Exception:
-            continue
+
+    # 1. Worker sessions
+    sessions_dir = Path.home() / ".hive" / "agents" / agent_name / "sessions"
+    if sessions_dir.exists():
+        for session_dir in sessions_dir.iterdir():
+            if not session_dir.is_dir() or not session_dir.name.startswith("session_"):
+                continue
+            state_file = session_dir / "state.json"
+            if not state_file.exists():
+                continue
+            try:
+                data = json.loads(state_file.read_text(encoding="utf-8"))
+                ts = data.get("timestamps", {}).get("updated_at")
+                if ts and (latest is None or ts > latest):
+                    latest = ts
+            except Exception:
+                continue
+
+    # 2. Queen sessions
+    queen_sessions_dir = Path.home() / ".hive" / "queen" / "session"
+    if queen_sessions_dir.exists():
+        resolved = agent_path.resolve()
+        for d in queen_sessions_dir.iterdir():
+            if not d.is_dir():
+                continue
+            meta_file = d / "meta.json"
+            if not meta_file.exists():
+                continue
+            try:
+                meta = json.loads(meta_file.read_text(encoding="utf-8"))
+                stored = meta.get("agent_path")
+                if not stored or Path(stored).resolve() != resolved:
+                    continue
+                ts = datetime.fromtimestamp(d.stat().st_mtime).isoformat()
+                if latest is None or ts > latest:
+                    latest = ts
+            except Exception:
+                continue
+
     return latest


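`_get_last_active` picks the latest timestamp by comparing strings. That ordering is only reliable when every value uses the same ISO 8601 layout and the same UTC offset. The queen branch produces a naive local-time string, and the format of `updated_at` in `state.json` is not shown in this diff. The sketch below is one possible way to compare such values as timezone-aware datetimes instead. `to_utc` and `latest_timestamp` are hypothetical helpers, and it assumes the stored strings are parseable by `datetime.fromisoformat`.

```python
from __future__ import annotations

from datetime import datetime, timezone


def to_utc(ts: str) -> datetime:
    """Parse an ISO 8601 string; naive values are assumed to be local time."""
    dt = datetime.fromisoformat(ts)
    if dt.tzinfo is None:
        dt = dt.astimezone()  # attach the system's local zone
    return dt.astimezone(timezone.utc)


def latest_timestamp(candidates: list[str]) -> str | None:
    """Return the original string of the most recent parseable timestamp."""
    best: tuple[datetime, str] | None = None
    for ts in candidates:
        try:
            parsed = to_utc(ts)
        except ValueError:
            continue  # skip malformed values, as the original skips bad files
        if best is None or parsed > best[0]:
            best = (parsed, ts)
    return best[1] if best else None
```

With plain string comparison, `"2024-01-01T11:30:00+02:00"` sorts after `"2024-01-01T10:00:00+00:00"` even though it is the earlier instant. The parsed comparison picks the 10:00 UTC value.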

@@ -169,7 +200,7 @@ def discover_agents() -> dict[str, list[AgentEntry]]:
                     node_count=node_count,
                     tool_count=tool_count,
                     tags=tags,
-                    last_active=_get_last_active(path.name),
+                    last_active=_get_last_active(path),
                 )
             )
         if entries:


@@ -702,6 +702,15 @@ stop_worker() to return to STAGING phase.
 _queen_behavior_always = """
 # Behavior

+## Images attached by the user
+
+Users can attach images directly to their chat messages. When you see an \
+image in the conversation, analyze it using your native vision capability — \
+do NOT say you cannot see images or that you lack access to files. The image \
+is embedded in the message; no tool call is needed to view it. Describe what \
+you see, answer questions about it, and use the visual content to inform your \
+response just as you would text.
+
 ## CRITICAL RULE — ask_user / ask_user_multiple

 Every response that ends with a question, a prompt, or expects user \

@@ -1144,6 +1153,8 @@ Batch your response — do not call run_agent_with_input() once per trigger.
 config since last run), skip it and inform the user.
 - Never disable a trigger without telling the user. Use remove_trigger() only \
 when explicitly asked or when the trigger is clearly obsolete.
+- When the user asks to remove or disable a trigger, you MUST call remove_trigger(trigger_id). \
+Never just say "it's removed" without actually calling the tool.
 """

 # -- Backward-compatible composed versions (used by queen_node.system_prompt default) --


@@ -150,7 +150,7 @@ Call all three subagents in a single response to run them in parallel:

 ## GCU Anti-Patterns

-- Using `browser_screenshot` to read text (use `browser_snapshot`)
+- Using `browser_screenshot` to read text (use `browser_snapshot` instead; screenshots are for visual context only)
 - Re-navigating after scrolling (resets scroll position)
 - Attempting login on auth walls
 - Forgetting `target_id` in multi-tab scenarios


@@ -0,0 +1,286 @@
"""Worker per-run digest (run diary).

Storage layout:
  ~/.hive/agents/{agent_name}/runs/{run_id}/digest.md

Each completed or failed worker run gets one digest file. The queen reads
these via get_worker_status(focus='diary') before digging into live runtime
logs — the diary is a cheap, persistent record that survives across sessions.
"""

from __future__ import annotations

import logging
import traceback
from collections import Counter
from datetime import datetime
from pathlib import Path
from typing import TYPE_CHECKING, Any

if TYPE_CHECKING:
    from framework.runtime.event_bus import AgentEvent, EventBus

logger = logging.getLogger(__name__)


_DIGEST_SYSTEM = """\
You maintain run digests for a worker agent.
A run digest is a concise, factual record of a single task execution.

Write 3-6 sentences covering:
- What the worker was asked to do (the task/goal)
- What approach it took and what tools it used
- What the outcome was (success, partial, or failure — and why if relevant)
- Any notable issues, retries, or escalations to the queen

Write in third person past tense. Be direct and specific.
Omit routine tool invocations unless the result matters.
Output only the digest prose — no headings, no code fences.
"""


def _worker_runs_dir(agent_name: str) -> Path:
    return Path.home() / ".hive" / "agents" / agent_name / "runs"


def digest_path(agent_name: str, run_id: str) -> Path:
    return _worker_runs_dir(agent_name) / run_id / "digest.md"



def _collect_run_events(bus: EventBus, run_id: str, limit: int = 2000) -> list[AgentEvent]:
    """Collect all events belonging to *run_id* from the bus history.

    Strategy: find the EXECUTION_STARTED event that carries ``run_id``,
    extract its ``execution_id``, then query the bus by that execution_id.
    This works because TOOL_CALL_*, EDGE_TRAVERSED, NODE_STALLED etc. carry
    execution_id but not run_id.

    Falls back to a full-scan run_id filter when EXECUTION_STARTED is not
    found (e.g. bus was rotated).
    """
    from framework.runtime.event_bus import EventType

    # Pass 1: find execution_id via EXECUTION_STARTED with matching run_id
    started = bus.get_history(event_type=EventType.EXECUTION_STARTED, limit=limit)
    exec_id: str | None = None
    for e in started:
        if getattr(e, "run_id", None) == run_id and e.execution_id:
            exec_id = e.execution_id
            break

    if exec_id:
        return bus.get_history(execution_id=exec_id, limit=limit)

    # Fallback: scan all events and match by run_id attribute
    return [e for e in bus.get_history(limit=limit) if getattr(e, "run_id", None) == run_id]


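The two-pass lookup in `_collect_run_events` can be exercised without the framework. The sketch below uses stand-ins: `Event`, `FakeBus`, the string event types, and `collect` are hypothetical and only mimic the `get_history(event_type=..., execution_id=..., limit=...)` call shape visible in the diff. The real `EventBus` API may differ.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class Event:  # hypothetical stand-in for AgentEvent
    type: str
    execution_id: str
    run_id: str | None = None


class FakeBus:  # hypothetical stand-in for EventBus
    def __init__(self, events: list[Event]) -> None:
        self._events = events

    def get_history(self, event_type=None, execution_id=None, limit=2000):
        out = [
            e
            for e in self._events
            if (event_type is None or e.type == event_type)
            and (execution_id is None or e.execution_id == execution_id)
        ]
        return out[:limit]


def collect(bus: FakeBus, run_id: str) -> list[Event]:
    # Pass 1: resolve run_id to execution_id through the start event.
    for e in bus.get_history(event_type="execution_started"):
        if e.run_id == run_id and e.execution_id:
            return bus.get_history(execution_id=e.execution_id)
    # Fallback: only events that carry run_id themselves can be matched.
    return [e for e in bus.get_history() if e.run_id == run_id]
```

When the start event is missing, the fallback returns only events that carry `run_id` directly, so tool-call events without it are lost. That is the trade-off the docstring describes for a rotated bus.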

def _build_run_context(
    events: list[AgentEvent],
    outcome_event: AgentEvent | None,
) -> str:
    """Assemble a plain-text run context string for the digest LLM call."""
    from framework.runtime.event_bus import EventType

    # Reverse so events are in chronological order
    events_chron = list(reversed(events))

    lines: list[str] = []

    # Task input from EXECUTION_STARTED
    started = [e for e in events_chron if e.type == EventType.EXECUTION_STARTED]
    if started:
        inp = started[0].data.get("input", {})
        if inp:
            lines.append(f"Task input: {str(inp)[:400]}")

    # Duration (elapsed so far if no outcome yet)
    ref_ts = outcome_event.timestamp if outcome_event else datetime.utcnow()
    if started:
        elapsed = (ref_ts - started[0].timestamp).total_seconds()
        m, s = divmod(int(elapsed), 60)
        lines.append(f"Duration so far: {m}m {s}s" if m else f"Duration so far: {s}s")

    # Outcome
    if outcome_event is None:
        lines.append("Status: still running (mid-run snapshot)")
    elif outcome_event.type == EventType.EXECUTION_COMPLETED:
        out = outcome_event.data.get("output", {})
        out_str = f"Outcome: completed. Output: {str(out)[:300]}"
        lines.append(out_str if out else "Outcome: completed.")
    else:
        err = outcome_event.data.get("error", "")
        lines.append(f"Outcome: failed. Error: {str(err)[:300]}" if err else "Outcome: failed.")

    # Node path (edge traversals)
    edges = [e for e in events_chron if e.type == EventType.EDGE_TRAVERSED]
    if edges:
        parts = [
            f"{e.data.get('source_node', '?')}->{e.data.get('target_node', '?')}"
            for e in edges[-20:]
        ]
        lines.append(f"Node path: {', '.join(parts)}")

    # Tools used
    tool_events = [e for e in events_chron if e.type == EventType.TOOL_CALL_COMPLETED]
    if tool_events:
        names = [e.data.get("tool_name", "?") for e in tool_events]
        counts = Counter(names)
        summary = ", ".join(f"{name}×{n}" if n > 1 else name for name, n in counts.most_common())
        lines.append(f"Tools used: {summary}")
        # Note any tool errors
        errors = [e for e in tool_events if e.data.get("is_error")]
        if errors:
            err_names = Counter(e.data.get("tool_name", "?") for e in errors)
            lines.append(f"Tool errors: {dict(err_names)}")

    # Issues
    issue_map = {
        EventType.NODE_STALLED: "stall",
        EventType.NODE_TOOL_DOOM_LOOP: "doom loop",
        EventType.CONSTRAINT_VIOLATION: "constraint violation",
        EventType.NODE_RETRY: "retry",
    }
    issue_parts: list[str] = []
    for evt_type, label in issue_map.items():
        n = sum(1 for e in events_chron if e.type == evt_type)
        if n:
            issue_parts.append(f"{n} {label}(s)")
    if issue_parts:
        lines.append(f"Issues: {', '.join(issue_parts)}")

    # Escalations to queen
    escalations = [e for e in events_chron if e.type == EventType.ESCALATION_REQUESTED]
    if escalations:
        lines.append(f"Escalations to queen: {len(escalations)}")

    # Final LLM output snippet (last LLM_TEXT_DELTA snapshot)
    text_events = [e for e in reversed(events_chron) if e.type == EventType.LLM_TEXT_DELTA]
    if text_events:
        snapshot = text_events[0].data.get("snapshot", "") or ""
        if snapshot:
            lines.append(f"Final LLM output: {snapshot[-400:].strip()}")

    return "\n".join(lines)


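Two small formatting steps in `_build_run_context` are easy to check in isolation: the per-tool call summary and the elapsed-time string. The sketch below lifts both into standalone functions. `summarize_tools` and `format_duration` are hypothetical names, and the bodies mirror the expressions in the diff.

```python
from collections import Counter


def summarize_tools(names: list[str]) -> str:
    """Collapse repeated tool names into 'name×n', most frequent first."""
    counts = Counter(names)
    return ", ".join(f"{name}×{n}" if n > 1 else name for name, n in counts.most_common())


def format_duration(seconds: float) -> str:
    """Render whole seconds as 'Mm Ss', dropping the minutes part when zero."""
    m, s = divmod(int(seconds), 60)
    return f"{m}m {s}s" if m else f"{s}s"
```

For example, `summarize_tools(["read", "write", "read", "read"])` yields `read×3, write`, and `format_duration(125.9)` yields `2m 5s`. Minutes are not folded into hours, so a 75-minute run prints as `75m 0s`.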

async def consolidate_worker_run(
    agent_name: str,
    run_id: str,
    outcome_event: AgentEvent | None,
    bus: EventBus,
    llm: Any,
) -> None:
    """Write (or overwrite) the digest for a worker run.

    Called fire-and-forget either:
    - After EXECUTION_COMPLETED / EXECUTION_FAILED (outcome_event set, final write)
    - Periodically during a run on a cooldown timer (outcome_event=None, mid-run snapshot)

    The digest file is always overwritten so each call produces the freshest view.
    The final completion/failure call supersedes any mid-run snapshot.

    Args:
        agent_name: Worker agent directory name (determines storage path).
        run_id: The run ID.
        outcome_event: EXECUTION_COMPLETED or EXECUTION_FAILED event, or None for
            a mid-run snapshot.
        bus: The session EventBus (shared queen + worker).
        llm: LLMProvider with an acomplete() method.
    """
    try:
        events = _collect_run_events(bus, run_id)
        run_context = _build_run_context(events, outcome_event)
        if not run_context:
            logger.debug("worker_memory: no events for run %s, skipping digest", run_id)
            return

        is_final = outcome_event is not None
        logger.info(
            "worker_memory: generating %s digest for run %s ...",
            "final" if is_final else "mid-run",
            run_id,
        )

        from framework.agents.queen.config import default_config

        resp = await llm.acomplete(
            messages=[{"role": "user", "content": run_context}],
            system=_DIGEST_SYSTEM,
            max_tokens=min(default_config.max_tokens, 512),
        )
        digest_text = (resp.content or "").strip()
        if not digest_text:
            logger.warning("worker_memory: LLM returned empty digest for run %s", run_id)
            return

        path = digest_path(agent_name, run_id)
        path.parent.mkdir(parents=True, exist_ok=True)

        from framework.runtime.event_bus import EventType

        ts = (outcome_event.timestamp if outcome_event else datetime.utcnow()).strftime(
            "%Y-%m-%d %H:%M"
        )
        if outcome_event is None:
            status = "running"
        elif outcome_event.type == EventType.EXECUTION_COMPLETED:
            status = "completed"
        else:
            status = "failed"

        path.write_text(
            f"# {run_id}\n\n**{ts}** | {status}\n\n{digest_text}\n",
            encoding="utf-8",
        )
        logger.info(
            "worker_memory: %s digest written for run %s (%d chars)",
            status,
            run_id,
            len(digest_text),
        )

    except Exception:
        tb = traceback.format_exc()
        logger.exception("worker_memory: digest failed for run %s", run_id)
        # Persist the error so it's findable without log access
        error_path = _worker_runs_dir(agent_name) / run_id / "digest_error.txt"
        try:
            error_path.parent.mkdir(parents=True, exist_ok=True)
            error_path.write_text(
                f"run_id: {run_id}\ntime: {datetime.now().isoformat()}\n\n{tb}",
                encoding="utf-8",
            )
        except Exception:
            pass


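The docstring says `consolidate_worker_run` is called fire-and-forget, but the call site is not part of this diff. One common way to schedule such a coroutine with `asyncio` is sketched below. It keeps a strong reference to each task, since the event loop itself only holds weak references. `write_digest`, `fire_and_forget`, and `demo` are hypothetical, and `write_digest` stands in for the real digest coroutine.

```python
import asyncio

_background: set = set()  # strong references to in-flight tasks
written: list = []


async def write_digest(run_id: str) -> None:
    # Hypothetical stand-in for the digest coroutine; it swallows nothing here,
    # whereas the real function catches and logs its own exceptions.
    await asyncio.sleep(0)
    written.append(run_id)


def fire_and_forget(coro) -> asyncio.Task:
    """Schedule a coroutine without awaiting it; must run inside an event loop."""
    task = asyncio.create_task(coro)
    _background.add(task)
    task.add_done_callback(_background.discard)  # drop the reference when done
    return task


async def demo() -> None:
    fire_and_forget(write_digest("run-1"))
    fire_and_forget(write_digest("run-2"))
    # Only for this demo: wait so the loop does not close before the tasks finish.
    await asyncio.gather(*list(_background))
```

Because the digest coroutine handles its own errors and writes `digest_error.txt`, the caller does not need to inspect the task result.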

def read_recent_digests(agent_name: str, max_runs: int = 5) -> list[tuple[str, str]]:
    """Return recent run digests as [(run_id, content), ...], newest first.

    Args:
        agent_name: Worker agent directory name.
        max_runs: Maximum number of digests to return.

    Returns:
        List of (run_id, digest_content) tuples, ordered newest first.
    """
    runs_dir = _worker_runs_dir(agent_name)
    if not runs_dir.exists():
        return []

    digest_files = sorted(
        runs_dir.glob("*/digest.md"),
        key=lambda p: p.stat().st_mtime,
        reverse=True,
    )[:max_runs]

    result: list[tuple[str, str]] = []
    for f in digest_files:
        try:
            content = f.read_text(encoding="utf-8").strip()
            if content:
                result.append((f.parent.name, content))
        except OSError:
            continue
    return result
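`read_recent_digests` orders runs by the modification time of each `digest.md`, so "newest" means most recently written rather than most recently started. The sketch below reproduces that ordering against a temporary directory. `newest_digests` and `demo` are hypothetical helpers that take the runs directory as a parameter instead of deriving it from `~/.hive`.

```python
import os
import tempfile
from pathlib import Path


def newest_digests(runs_dir: Path, max_runs: int = 5) -> list:
    """Return [(run_id, content), ...] sorted by digest mtime, newest first."""
    files = sorted(
        runs_dir.glob("*/digest.md"),
        key=lambda p: p.stat().st_mtime,
        reverse=True,
    )
    out = []
    for f in files[:max_runs]:
        text = f.read_text(encoding="utf-8").strip()
        if text:
            out.append((f.parent.name, text))
    return out


def demo() -> list:
    with tempfile.TemporaryDirectory() as tmp:
        root = Path(tmp)
        for i, run_id in enumerate(["run_a", "run_b", "run_c"]):
            path = root / run_id / "digest.md"
            path.parent.mkdir(parents=True)
            path.write_text(f"# {run_id}\n", encoding="utf-8")
            # Force distinct, increasing mtimes so the order is deterministic.
            os.utime(path, (1_700_000_000 + i, 1_700_000_000 + i))
        return [run_id for run_id, _ in newest_digests(root, max_runs=2)]
```

A mid-run snapshot rewrite bumps the file's mtime, so a long-running worker can move ahead of runs that finished earlier.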

@@ -89,6 +89,16 @@ def main():

     register_testing_commands(subparsers)

+    # Register skill commands (skill list, skill trust, ...)
+    from framework.skills.cli import register_skill_commands
+
+    register_skill_commands(subparsers)
+
+    # Register debugger commands (debugger)
+    from framework.debugger.cli import register_debugger_commands
+
+    register_debugger_commands(subparsers)
+
     args = parser.parse_args()

     if hasattr(args, "func"):


+251

-2

@@ -51,16 +51,167 @@ def get_preferred_model() -> str:
     """Return the user's preferred LLM model string (e.g. 'anthropic/claude-sonnet-4-20250514')."""
     llm = get_hive_config().get("llm", {})
     if llm.get("provider") and llm.get("model"):
-        return f"{llm['provider']}/{llm['model']}"
+        provider = str(llm["provider"])
+        model = str(llm["model"]).strip()
+        # OpenRouter quickstart stores raw model IDs; tolerate pasted "openrouter/<id>" too.
+        if provider.lower() == "openrouter" and model.lower().startswith("openrouter/"):
+            model = model[len("openrouter/") :]
+        if model:
+            return f"{provider}/{model}"
     return "anthropic/claude-sonnet-4-20250514"



+def get_preferred_worker_model() -> str | None:
+    """Return the user's preferred worker LLM model, or None if not configured.
+
+    Reads from the ``worker_llm`` section of ~/.hive/configuration.json.
+    Returns None when no worker-specific model is set, so callers can
+    fall back to the default (queen) model via ``get_preferred_model()``.
+    """
+    worker_llm = get_hive_config().get("worker_llm", {})
+    if worker_llm.get("provider") and worker_llm.get("model"):
+        provider = str(worker_llm["provider"])
+        model = str(worker_llm["model"]).strip()
+        if provider.lower() == "openrouter" and model.lower().startswith("openrouter/"):
+            model = model[len("openrouter/") :]
+        if model:
+            return f"{provider}/{model}"
+    return None
+
+
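Both `get_preferred_model` and `get_preferred_worker_model` apply the same normalization: trim the model ID, strip a pasted `openrouter/` prefix when the provider is OpenRouter, and join provider and model. The sketch below isolates that step as a pure function so its edge cases can be checked. `normalize_model` is a hypothetical helper, not part of the change, and the model IDs in the examples are made up.

```python
from __future__ import annotations


def normalize_model(provider: str, model: str) -> str | None:
    """Return 'provider/model', or None when the model ID is empty after cleanup."""
    provider = str(provider)
    model = str(model).strip()
    prefix = "openrouter/"
    # Only OpenRouter IDs get the prefix stripped; other providers are left as-is.
    if provider.lower() == "openrouter" and model.lower().startswith(prefix):
        model = model[len(prefix):]
    return f"{provider}/{model}" if model else None
```

A caller can then fall back to its own default when `None` comes back, as the two functions in the diff do with the hard-coded Anthropic model and `None` respectively.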

+def get_worker_api_key() -> str | None:
+    """Return the API key for the worker LLM, falling back to the default key."""
+    worker_llm = get_hive_config().get("worker_llm", {})
+    if not worker_llm:
+        return get_api_key()
+
+    # Worker-specific subscription / env var
+    if worker_llm.get("use_claude_code_subscription"):
+        try:
+            from framework.runner.runner import get_claude_code_token
+
+            token = get_claude_code_token()
+            if token:
+                return token
+        except ImportError:
+            pass
+
+    if worker_llm.get("use_codex_subscription"):
+        try:
+            from framework.runner.runner import get_codex_token
+
+            token = get_codex_token()
+            if token:
+                return token
+        except ImportError:
+            pass
+
+    if worker_llm.get("use_kimi_code_subscription"):
+        try:
+            from framework.runner.runner import get_kimi_code_token
+
+            token = get_kimi_code_token()
+            if token:
+                return token
+        except ImportError:
+            pass
+
+    if worker_llm.get("use_antigravity_subscription"):
+        try:
+            from framework.runner.runner import get_antigravity_token