Compare commits

175 Commits

| SHA1 |
|---|
| adf1a10318 |
| a3916a6932 |
| cbd2c86bbf |
| f921846879 |
| a370403b16 |
| ad6d504ea4 |
| 65962ddf58 |
| bba44430c4 |
| 69c71d77fb |
| 7b98a6613a |
| 26481e27a6 |
| bb227b3d73 |
| 8a0cf5e0ae |
| 69218d5699 |
| 7d1433af21 |
| 0bfbf1e9c5 |
| 1ca4f5b22b |
| 0984e4c1e8 |
| 4cbf5a7434 |
| b33178c5be |
| dc6a336c60 |
| b855336448 |
| de021977fd |
| cd2b3fcd16 |
| b64024ede5 |
| a280d23113 |
| 41785abdba |
| de494c7e55 |
| 5fa0903ea8 |
| 7bd99fe074 |
| c838e1ca6d |
| f475923353 |
| 43f43c92e3 |
| 5463134322 |
| 3fbb392103 |
| a162da17e1 |
| b565134d57 |
| 3aafc89912 |
| 93449f92fe |
| d766e68d42 |
| 1d8b1f9774 |
| 5ea9abae83 |
| 15957499c5 |
| 0b50d9e874 |
| a1e54922bd |
| 63c0ca34ea |
| 135477e516 |
| 8cac49cd91 |
| 28dce63682 |
| 313ac952e0 |
| 0633d5130b |
| 995e487b49 |
| 64b58b57e0 |
| c6465908df |
| ca96bcc09f |
| 65ee628fae |
| 02043614e5 |
| 212b9bf9d4 |
| 6070c30a88 |
| 8a653e51bc |
| d562670425 |
| 677bee6fe5 |
| de27bfe76f |
| 1c1dcb9c33 |
| 4ba950f155 |
| 9c3a11d7bb |
| b7d357aea2 |
| b2fed68346 |
| 0e996928be |
| 6ff4ec3643 |
| a0eda3e492 |
| 099f9514ef |
| b2096e4a55 |
| 1bf2164745 |
| 48205bbde7 |
| 296aab6ecb |
| 14182c45fc |
| 2fa8f4283c |
| ad3cec2361 |
| eddb628298 |
| f63b226d8d |
| cc5bd61d86 |
| 8bd14fb16f |
| 30b5472e33 |
| bc836db0f9 |
| bd3b0fb8eb |
| 7f28474967 |
| 09460b28bc |
| 5d8ba1e49c |
| ccb394675b |
| 931487a7d4 |
| 3654c57f66 |
| fb28280ced |
| 6215441b58 |
| 52f16d5bb6 |
| e5b6c8581a |
| 5dcca99913 |
| 890b906f15 |
| 6a8286d4cf |
| 680024f790 |
| 6f7bfb92a8 |
| 335a9603e8 |
| 5e8a6202e7 |
| 55a4cdefd7 |
| 2b63135afb |
| 49d8c3572d |
| 4b40962186 |
| 779b376c6e |
| 4e2a9a247a |
| b1f3d6b155 |
| ea28a9d3c3 |
| 69a03e463f |
| e7da62e61c |
| 7176745e1c |
| cce0e26f5c |
| 641af16dfc |
| a335c427ef |
| 9ea6c959ae |
| 20efd523c9 |
| 8fc7fff496 |
| edf51e6996 |
| 6b867883ce |
| 35a05f4120 |
| e0e78a97ce |
| e4e476f463 |
| c4c8917ecb |
| 1524d2ef00 |
| 5032834034 |
| 0b83f6ea99 |
| 415201f467 |
| 73005a8498 |
| 4edb960fbd |
| 42d11ead01 |
| 5e18f85b10 |
| 85b25bf006 |
| c1ba108489 |
| 214098aaae |
| 241a0b7adc |
| 9a7b41a4be |
| 746f026654 |
| 8294cd3dd9 |
| 337fb6d922 |
| bda6b18e8a |
| d256ff929f |
| b00203702e |
| 754e33a1ae |
| 51154a3070 |
| b11b43bbe1 |
| 86f4645d1c |
| 2d05e96cd5 |
| 9c44d3b793 |
| 9b89ac694e |
| 630d8208cf |
| 9b342dc593 |
| ad879de6ff |
| 795266aab4 |
| 4e4ef121f9 |
| ddb9126955 |
| bac6d6dd68 |
| 3451570541 |
| e5e939f344 |
| 0d51d25482 |
| a0a5b10df0 |
| 04bac93c14 |
| 047f4a1a0c |
| 7994b90dfa |
| 04b6a80370 |
| fc0c3e169f |
| 4760f95bda |
| a04a8a866d |
| 8c9baa62b0 |
| 262eaa6d84 |
| fc1a48f3bc |
| 060f320cd1 |
| bff32bcaa3 |

@@ -195,7 +195,7 @@ class DeepResearchAgent:
max_tokens=self.config.max_tokens,
loop_config={
"max_iterations": 100,
"max_tool_calls_per_turn": 20,
"max_tool_calls_per_turn": 30,
"max_history_tokens": 32000,
},
conversation_mode="continuous",

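Every loop-config hunk in this comparison raises `max_tool_calls_per_turn` to 30. The enforcement code is not part of this diff; the sketch below shows one plausible way a per-turn cap could behave, assuming (hypothetically) that calls beyond the cap are deferred to a later turn rather than dropped. `ToolCall`, `split_by_cap`, and the `web_search` tool name are illustrative, not Hive APIs.

```python
from dataclasses import dataclass


@dataclass
class ToolCall:
    # Hypothetical stand-in for one tool call requested by the model in a turn.
    name: str
    arguments: dict


def split_by_cap(
    calls: list[ToolCall], max_tool_calls_per_turn: int
) -> tuple[list[ToolCall], list[ToolCall]]:
    """Return (calls to execute this turn, calls deferred past the cap)."""
    if max_tool_calls_per_turn < 1:
        raise ValueError("max_tool_calls_per_turn must be at least 1")
    return calls[:max_tool_calls_per_turn], calls[max_tool_calls_per_turn:]


# A turn that requests 25 searches: the old cap of 20 defers 5, the new cap of 30 defers none.
requested = [ToolCall("web_search", {"query": f"q{i}"}) for i in range(25)]
now, later = split_by_cap(requested, 20)
print(len(now), len(later))  # 20 5
now, later = split_by_cap(requested, 30)
print(len(now), len(later))  # 25 0
```

Under this assumption, raising the cap mainly matters for fan-out turns such as parallel searches, where a lower cap would push work into extra iterations.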
@@ -71,6 +71,12 @@ Important:
- Track which URL each finding comes from (you'll need citations later)
- Call set_output for each key in a SEPARATE turn (not in the same turn as other tool calls)

Context management:
- Your tool results are automatically saved to files. After compaction, the file \
references remain in the conversation — use load_data() to recover any content you need.
- Use append_data('research_notes.md', ...) to maintain a running log of key findings \
as you go. This survives compaction and helps the report node produce a detailed report.

When done, use set_output (one key at a time, separate turns):
- set_output("findings", "Structured summary: key findings with source URLs for each claim. \
Include themes, contradictions, and confidence levels.")

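The new "Context management" instructions rely on notes that live outside the conversation, so compaction cannot summarize them away. The real `append_data` tool is not shown in this diff; the following is a hypothetical file-backed stand-in (`append_note` and `load_notes` are invented names) that appends one Markdown bullet per finding together with its source URL, so each finding keeps the citation the prompt asks for.

```python
from pathlib import Path


def append_note(notes_path: Path, finding: str, source_url: str) -> None:
    """Append one finding and its source to a Markdown notes file (hypothetical helper)."""
    notes_path.parent.mkdir(parents=True, exist_ok=True)
    # Append mode never rewrites earlier entries, so the log only grows.
    with notes_path.open("a", encoding="utf-8") as f:
        f.write(f"- {finding} (source: {source_url})\n")


def load_notes(notes_path: Path) -> list[str]:
    """Read the log back, for example after the conversation has been compacted."""
    if not notes_path.exists():
        return []
    return notes_path.read_text(encoding="utf-8").splitlines()
```

Because each entry is self-contained, a later node can rebuild a reference list from the file alone, without needing the original tool results.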
@@ -161,6 +167,9 @@ Requirements:
- Every factual claim must cite its source with [n] notation
- Be objective — present multiple viewpoints where sources disagree
- Answer the original research questions from the brief
- If findings appear incomplete or summarized, call list_data_files() and load_data() \
to access the detailed source material from the research phase. The research node's \
tool results and research_notes.md contain the full data.

Save the HTML:
save_data(filename="report.html", data="<html>...</html>")

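The report requirements ask for `[n]` citations. One simple way to keep the numbers stable across a long report is to assign each URL a number the first time it is cited and reuse that number afterwards. The `CitationIndex` class below is a hypothetical sketch of that bookkeeping, not part of Hive.

```python
class CitationIndex:
    """Assign [n] numbers to source URLs in order of first citation."""

    def __init__(self) -> None:
        self._numbers: dict[str, int] = {}

    def cite(self, url: str) -> str:
        # Reuse the existing number if this URL has been cited before.
        if url not in self._numbers:
            self._numbers[url] = len(self._numbers) + 1
        return f"[{self._numbers[url]}]"

    def references(self) -> list[str]:
        # Dicts preserve insertion order, so this is already sorted by number.
        return [f"[{n}] {url}" for url, n in self._numbers.items()]


refs = CitationIndex()
claim_1 = "Adoption is growing " + refs.cite("https://example.com/survey")
claim_2 = "but costs remain high " + refs.cite("https://example.com/costs")
claim_3 = "according to the same survey " + refs.cite("https://example.com/survey")
print(claim_3)            # according to the same survey [1]
print(refs.references())  # ['[1] https://example.com/survey', '[2] https://example.com/costs']
```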
@@ -70,6 +70,7 @@ exports/*
.agent-builder-sessions/*

.claude/settings.local.json
.claude/skills/ship-it/

.venv

@@ -14,7 +14,7 @@
</p>

<p align="center">
<a href="https://github.com/adenhq/hive/blob/main/LICENSE"><img src="https://img.shields.io/badge/License-Apache%202.0-blue.svg" alt="Apache 2.0 License" /></a>
<a href="https://github.com/aden-hive/hive/blob/main/LICENSE"><img src="https://img.shields.io/badge/License-Apache%202.0-blue.svg" alt="Apache 2.0 License" /></a>
<a href="https://www.ycombinator.com/companies/aden"><img src="https://img.shields.io/badge/Y%20Combinator-Aden-orange" alt="Y Combinator" /></a>
<a href="https://discord.com/invite/MXE49hrKDk"><img src="https://img.shields.io/discord/1172610340073242735?logo=discord&labelColor=%235462eb&logoColor=%23f5f5f5&color=%235462eb" alt="Discord" /></a>
<a href="https://x.com/aden_hq"><img src="https://img.shields.io/twitter/follow/teamaden?logo=X&color=%23f5f5f5" alt="Twitter Follow" /></a>

@@ -37,11 +37,11 @@

## Overview

Build autonomous, reliable, self-improving AI agents without hardcoding workflows. Define your goal through conversation with a coding agent, and the framework generates a node graph with dynamically created connection code. When things break, the framework captures failure data, evolves the agent through the coding agent, and redeploys. Built-in human-in-the-loop nodes, credential management, and real-time monitoring give you control without sacrificing adaptability.
Build autonomous, reliable, self-improving AI agents without hardcoding workflows. Define your goal through conversation with the Hive coding agent (the queen), and the framework generates a node graph with dynamically created connection code. When things break, the framework captures failure data, evolves the agent through the coding agent, and redeploys. Built-in human-in-the-loop nodes, credential management, and real-time monitoring give you control without sacrificing adaptability.

Visit [adenhq.com](https://adenhq.com) for complete documentation, examples, and guides.

https://github.com/user-attachments/assets/846c0cc7-ffd6-47fa-b4b7-495494857a55
[](https://www.youtube.com/watch?v=XDOG9fOaLjU)

## Who Is Hive For?

@@ -50,7 +50,7 @@ Hive is designed for developers and teams who want to build **production-grade A
Hive is a good fit if you:

- Want AI agents that **execute real business processes**, not demos
- Prefer **goal-driven development** over hardcoded workflows
- Need **fast or high-volume agent execution** over open workflows
- Need **self-healing and adaptive agents** that improve over time
- Require **human-in-the-loop control**, observability, and cost limits
- Plan to run agents in **production environments**

@@ -71,7 +71,7 @@ Use Hive when you need:

- **[Documentation](https://docs.adenhq.com/)** - Complete guides and API reference
- **[Self-Hosting Guide](https://docs.adenhq.com/getting-started/quickstart)** - Deploy Hive on your infrastructure
- **[Changelog](https://github.com/adenhq/hive/releases)** - Latest updates and releases
- **[Changelog](https://github.com/aden-hive/hive/releases)** - Latest updates and releases
- **[Roadmap](docs/roadmap.md)** - Upcoming features and plans
- **[Report Issues](https://github.com/adenhq/hive/issues)** - Bug reports and feature requests
- **[Contributing](CONTRIBUTING.md)** - How to contribute and submit PRs

@@ -81,7 +81,7 @@ Use Hive when you need:
### Prerequisites

- Python 3.11+ for agent development
- Claude Code, Codex CLI, or Cursor for utilizing agent skills
- An LLM provider that powers the agents

> **Note for Windows Users:** It is strongly recommended to use **WSL (Windows Subsystem for Linux)** or **Git Bash** to run this framework. Some core automation scripts may not execute correctly in standard Command Prompt or PowerShell.

@@ -94,9 +94,10 @@ Use Hive when you need:

```bash
# Clone the repository
git clone https://github.com/adenhq/hive.git
git clone https://github.com/aden-hive/hive.git
cd hive


# Run quickstart setup
./quickstart.sh
```

@@ -109,77 +110,41 @@ This sets up:
- **LLM provider** - Interactive default model configuration
- All required Python dependencies with `uv`

- Finally, it opens the Hive interface in your browser

<img width="2500" height="1214" alt="home-screen" src="https://github.com/user-attachments/assets/134d897f-5e75-4874-b00b-e0505f6b45c4" />

### Build Your First Agent

```bash
# Build an agent using Claude Code
claude> /hive
Type the agent you want to build in the home input box

# Test your agent
claude> /hive-debugger
<img width="2500" height="1214" alt="Image" src="https://github.com/user-attachments/assets/1ce19141-a78b-46f5-8d64-dbf987e048f4" />

# (at separate terminal) Launch the interactive dashboard
hive tui
### Use Template Agents

# Or run directly
hive run exports/your_agent_name --input '{"key": "value"}'
```
Click "Try a sample agent" and browse the templates. You can run a template directly or build your own version on top of an existing template.

## Coding Agent Support

|

||||

### Run Agents

|

||||

|

||||

### Codex CLI

|

||||

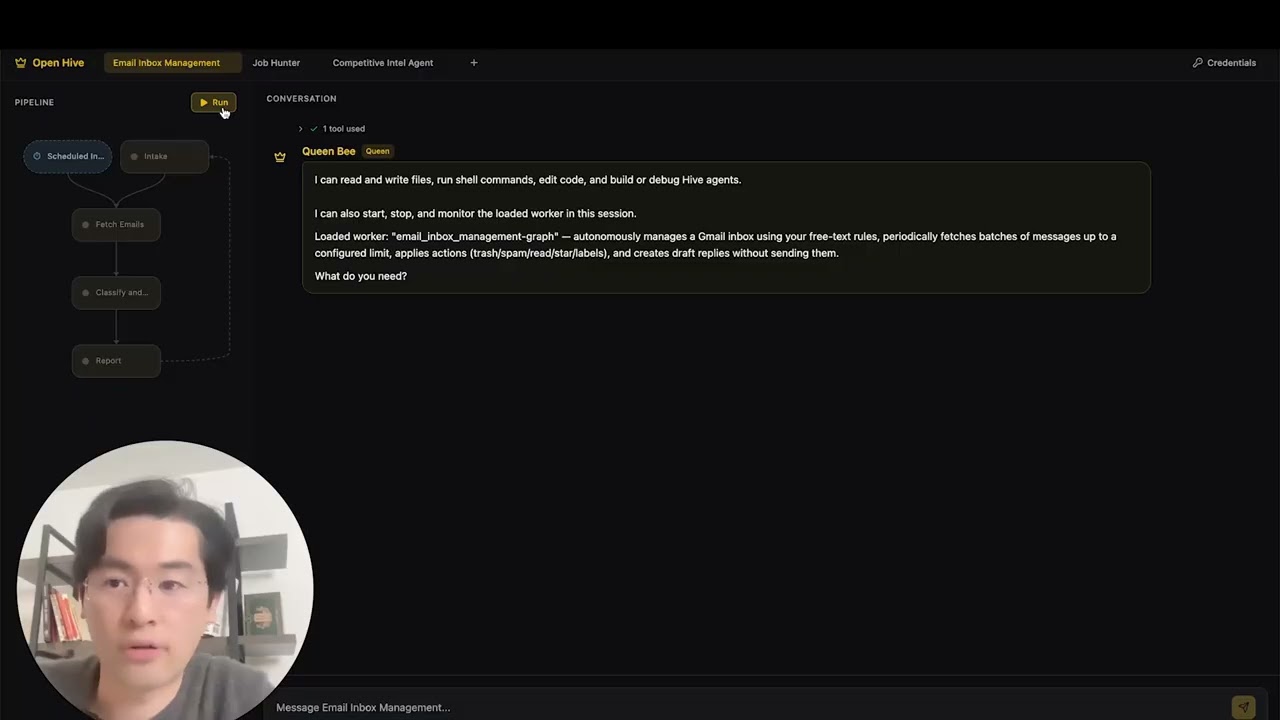

Now you can run an agent by selectiing the agent (either an existing agent or example agent). You can click the Run button on the top left, or talk to the queen agent and it can run the agent for you.

|

||||

|

||||

Hive includes native support for [OpenAI Codex CLI](https://github.com/openai/codex) (v0.101.0+).

|

||||

|

||||

1. **Config:** `.codex/config.toml` with `agent-builder` MCP server (tracked in git)

|

||||

2. **Skills:** `.agents/skills/` symlinks to Hive skills (tracked in git)

|

||||

3. **Launch:** Run `codex` in the repo root, then type `use hive`

|

||||

|

||||

Example:

|

||||

|

||||

```

|

||||

codex> use hive

|

||||

```

|

||||

|

||||

### Opencode

|

||||

|

||||

Hive includes native support for [Opencode](https://github.com/opencode-ai/opencode).

|

||||

|

||||

1. **Setup:** Run the quickstart script

|

||||

2. **Launch:** Open Opencode in the project root.

|

||||

3. **Activate:** Type `/hive` in the chat to switch to the Hive Agent.

|

||||

4. **Verify:** Ask the agent _"List your tools"_ to confirm the connection.

|

||||

|

||||

The agent has access to all Hive skills and can scaffold agents, add tools, and debug workflows directly from the chat.

|

||||

|

||||

**[📖 Complete Setup Guide](docs/environment-setup.md)** - Detailed instructions for agent development

|

||||

|

||||

### Antigravity IDE Support

|

||||

|

||||

Skills and MCP servers are also available in [Antigravity IDE](https://antigravity.google/) (Google's AI-powered IDE). **Easiest:** open a terminal in the hive repo folder and run (use `./` — the script is inside the repo):

|

||||

|

||||

```bash

|

||||

./scripts/setup-antigravity-mcp.sh

|

||||

```

|

||||

|

||||

**Important:** Always restart/refresh Antigravity IDE after running the setup script—MCP servers only load on startup. After restart, **agent-builder** and **tools** MCP servers should connect. Skills are under `.agent/skills/` (symlinks to `.claude/skills/`). See [docs/antigravity-setup.md](docs/antigravity-setup.md) for manual setup and troubleshooting.

|

||||

<img width="2500" height="1214" alt="Image" src="https://github.com/user-attachments/assets/71c38206-2ad5-49aa-bde8-6698d0bc55f5" />

|

||||

|

||||

## Features

- **[Goal-Driven Development](docs/key_concepts/goals_outcome.md)** - Define objectives in natural language; the coding agent generates the agent graph and connection code to achieve them
- **Browser-Use** - Control the browser on your computer to complete difficult tasks
- **Parallel Execution** - Execute the generated graph in parallel, so multiple agents can complete jobs for you at the same time
- **[Goal-Driven Generation](docs/key_concepts/goals_outcome.md)** - Define objectives in natural language; the coding agent generates the agent graph and connection code to achieve them
- **[Adaptiveness](docs/key_concepts/evolution.md)** - Framework captures failures, calibrates according to the objectives, and evolves the agent graph
- **[Dynamic Node Connections](docs/key_concepts/graph.md)** - No predefined edges; connection code is generated by any capable LLM based on your goals
- **SDK-Wrapped Nodes** - Every node gets shared memory, local RLM memory, monitoring, tools, and LLM access out of the box
- **[Human-in-the-Loop](docs/key_concepts/graph.md#human-in-the-loop)** - Intervention nodes that pause execution for human input with configurable timeouts and escalation
- **Real-time Observability** - WebSocket streaming for live monitoring of agent execution, decisions, and node-to-node communication
- **Interactive TUI Dashboard** - Terminal-based dashboard with live graph view, event log, and chat interface for agent interaction
- **Cost & Budget Control** - Set spending limits, throttles, and automatic model degradation policies
- **Production-Ready** - Self-hostable, built for scale and reliability

## Integration

<a href="https://github.com/adenhq/hive/tree/main/tools/src/aden_tools/tools"><img width="100%" alt="Integration" src="https://github.com/user-attachments/assets/a1573f93-cf02-4bb8-b3d5-b305b05b1e51" /></a>

<a href="https://github.com/aden-hive/hive/tree/main/tools/src/aden_tools/tools"><img width="100%" alt="Integration" src="https://github.com/user-attachments/assets/a1573f93-cf02-4bb8-b3d5-b305b05b1e51" /></a>
Hive is built to be model-agnostic and system-agnostic.

- **LLM flexibility** - Hive Framework is designed to support various types of LLMs, including hosted and local models through LiteLLM-compatible providers.

@@ -240,35 +205,10 @@ flowchart LR
4. **Control Plane Monitors** → Real-time metrics, budget enforcement, policy management
5. **[Adaptiveness](docs/key_concepts/evolution.md)** → On failure, the system evolves the graph and redeploys automatically

## Run Agents

The `hive` CLI is the primary interface for running agents.

```bash
# Browse and run agents interactively (Recommended)
hive tui

# Run a specific agent directly
hive run exports/my_agent --input '{"task": "Your input here"}'

# Run a specific agent with the TUI dashboard
hive run exports/my_agent --tui

# Interactive REPL
hive shell
```

The TUI scans both `exports/` and `examples/templates/` for available agents.

> **Using Python directly (alternative):** You can also run agents with `PYTHONPATH=exports uv run python -m agent_name run --input '{...}'`

See [environment-setup.md](docs/environment-setup.md) for complete setup instructions.

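The `--input` flag takes a JSON string, and hand-written shell quoting such as `'{"task": "..."}'` breaks as soon as the input contains an apostrophe. When calling the CLI from a script, one option is to serialize the payload with `json.dumps` and pass an argument list, so no shell is involved. This sketch only assembles the argument list; the `hive run <path> --input <json>` shape is taken from the examples above, and `build_run_command` / `run_agent` are hypothetical helpers.

```python
import json
import subprocess


def build_run_command(agent_path: str, payload: dict) -> list[str]:
    """Build argv for `hive run` with the payload serialized as JSON."""
    return ["hive", "run", agent_path, "--input", json.dumps(payload)]


def run_agent(agent_path: str, payload: dict) -> subprocess.CompletedProcess:
    # An argument list (no shell=True) means quotes inside the payload need no escaping.
    return subprocess.run(build_run_command(agent_path, payload), check=True)


cmd = build_run_command("exports/my_agent", {"task": "Summarize Q3's results"})
print(cmd[-1])  # {"task": "Summarize Q3's results"}
```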
## Documentation

- **[Developer Guide](docs/developer-guide.md)** - Comprehensive guide for developers
- [Getting Started](docs/getting-started.md) - Quick setup instructions
- [TUI Guide](docs/tui-selection-guide.md) - Interactive dashboard usage
- [Configuration Guide](docs/configuration.md) - All configuration options
- [Architecture Overview](docs/architecture/README.md) - System design and structure

@@ -398,8 +338,7 @@ flowchart TB
```

## Contributing

We welcome contributions from the community! We’re especially looking for help building tools, integrations, and example agents for the framework ([check #2805](https://github.com/adenhq/hive/issues/2805)). If you’re interested in extending its functionality, this is the perfect place to start. Please see [CONTRIBUTING.md](CONTRIBUTING.md) for guidelines.
We welcome contributions from the community! We’re especially looking for help building tools, integrations, and example agents for the framework ([check #2805](https://github.com/aden-hive/hive/issues/2805)). If you’re interested in extending its functionality, this is the perfect place to start. Please see [CONTRIBUTING.md](CONTRIBUTING.md) for guidelines.

**Important:** Please get assigned to an issue before submitting a PR. Comment on an issue to claim it, and a maintainer will assign you. Issues with reproducible steps and proposals are prioritized. This helps prevent duplicate work.

@@ -436,7 +375,7 @@ This project is licensed under the Apache License 2.0 - see the [LICENSE](LICENS

**Q: What LLM providers does Hive support?**

Hive supports 100+ LLM providers through LiteLLM integration, including OpenAI (GPT-4, GPT-4o), Anthropic (Claude models), Google Gemini, DeepSeek, Mistral, Groq, and many more. Simply set the appropriate API key environment variable and specify the model name.
Hive supports 100+ LLM providers through LiteLLM integration, including OpenAI (GPT-4, GPT-4o), Anthropic (Claude models), Google Gemini, DeepSeek, Mistral, Groq, and many more. Simply set the appropriate API key environment variable and specify the model name. We recommend using Claude, GLM and Gemini as they have the best performance.

**Q: Can I use Hive with local AI models like Ollama?**

@@ -478,14 +417,6 @@ Visit [docs.adenhq.com](https://docs.adenhq.com/) for complete guides, API refer

Contributions are welcome! Fork the repository, create your feature branch, implement your changes, and submit a pull request. See [CONTRIBUTING.md](CONTRIBUTING.md) for detailed guidelines.

**Q: When will my team start seeing results from Aden's adaptive agents?**

Aden's adaptation loop begins working from the first execution. When an agent fails, the framework captures the failure data, helping developers evolve the agent graph through the coding agent. How quickly this translates to measurable results depends on the complexity of your use case, the quality of your goal definitions, and the volume of executions generating feedback.

**Q: How does Hive compare to other agent frameworks?**

Hive focuses on generating agents that run real business processes, rather than generic agents. This vision emphasizes outcome-driven design, adaptability, and an easy-to-use set of tools and integrations.

---

<p align="center">

+9

-9

@@ -64,7 +64,7 @@ To use the agent builder with Claude Desktop or other MCP clients, add this to y
"agent-builder": {
"command": "python",
"args": ["-m", "framework.mcp.agent_builder_server"],
"cwd": "/path/to/goal-agent"
"cwd": "/path/to/hive/core"
}
}
}

@@ -85,14 +85,14 @@ The MCP server provides tools for:
Run an LLM-powered calculator:

```bash
# Single calculation
uv run python -m framework calculate "2 + 3 * 4"
# Run an exported agent
uv run python -m framework run exports/calculator --input '{"expression": "2 + 3 * 4"}'

# Interactive mode
uv run python -m framework interactive
# Interactive shell session
uv run python -m framework shell exports/calculator

# Analyze runs with Builder
uv run python -m framework analyze calculator
# Show agent info
uv run python -m framework info exports/calculator
```

### Using the Runtime

@@ -141,8 +141,8 @@ uv run python -m framework test-run <agent_path> --goal <goal_id> --parallel 4
# Debug failed tests
uv run python -m framework test-debug <agent_path> <test_name>

# List tests for a goal
uv run python -m framework test-list <goal_id>
# List tests for an agent
uv run python -m framework test-list <agent_path>
```

For detailed testing workflows, see the [hive-test skill](../.claude/skills/hive-test/SKILL.md).

+4

-2

@@ -15,6 +15,7 @@ import base64
import hashlib
import http.server
import json
import os
import platform
import secrets
import subprocess

@@ -150,8 +151,9 @@ def save_credentials(token_data: dict, account_id: str) -> None:
if "id_token" in token_data:
auth_data["tokens"]["id_token"] = token_data["id_token"]

CODEX_AUTH_FILE.parent.mkdir(parents=True, exist_ok=True)
with open(CODEX_AUTH_FILE, "w") as f:
CODEX_AUTH_FILE.parent.mkdir(parents=True, exist_ok=True, mode=0o700)
fd = os.open(CODEX_AUTH_FILE, os.O_WRONLY | os.O_CREAT | os.O_TRUNC, 0o600)
with os.fdopen(fd, "w") as f:
json.dump(auth_data, f, indent=2)

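The new `save_credentials` code creates the auth file through `os.open(..., 0o600)` instead of `open()`, so the tokens are not readable by other users. One POSIX detail is worth knowing: the mode given to `mkdir` and `os.open` applies only when the directory or file is newly created (and the umask can clear further bits), so a file that already exists with looser permissions keeps them. The sketch below is a hypothetical variant, `write_private_json`, that additionally calls `os.fchmod` to tighten a pre-existing file; `os.fchmod` is a Unix call, so treat this as a Unix-only sketch.

```python
import json
import os
from pathlib import Path


def write_private_json(path: Path, data: dict) -> None:
    """Write `data` as JSON that only the file owner can read or write (Unix)."""
    # mode=0o700 only takes effect if the directory is created here, and the
    # process umask may clear further bits; existing directories are unchanged.
    path.parent.mkdir(parents=True, exist_ok=True, mode=0o700)
    # The 0o600 passed to os.open is applied only when O_CREAT creates the file.
    fd = os.open(path, os.O_WRONLY | os.O_CREAT | os.O_TRUNC, 0o600)
    with os.fdopen(fd, "w") as f:
        # Tighten a file that already existed with looser permissions.
        os.fchmod(f.fileno(), 0o600)
        json.dump(data, f, indent=2)
```

Doing the `fchmod` inside the `with` block means the descriptor is closed even if the permission change or the JSON write raises.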
@@ -1768,7 +1768,7 @@ async def _run_pipeline(websocket, initial_message: str):
judge=judge,
config=LoopConfig(
max_iterations=30,
max_tool_calls_per_turn=15,
max_tool_calls_per_turn=30,
max_history_tokens=64000,
max_tool_result_chars=8_000,
spillover_dir=str(_DATA_DIR),

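This `LoopConfig` pairs `max_tool_result_chars=8_000` with a `spillover_dir`. Together with the research prompt's statement that tool results "are automatically saved to files", that suggests oversized results are written to disk and replaced in the conversation by a shorter reference. The actual behavior is not visible in this diff; the following is a hypothetical sketch of that pattern, with `spill_if_large` and its file-naming scheme invented for illustration.

```python
import hashlib
from pathlib import Path


def spill_if_large(result: str, max_chars: int, spillover_dir: Path) -> str:
    """Return `result` unchanged if short; otherwise save it and return a preview."""
    if len(result) <= max_chars:
        return result
    spillover_dir.mkdir(parents=True, exist_ok=True)
    # Content-addressed name (hypothetical scheme): identical results share one file.
    digest = hashlib.sha256(result.encode("utf-8")).hexdigest()[:12]
    name = f"tool_result_{digest}.txt"
    (spillover_dir / name).write_text(result, encoding="utf-8")
    preview = result[:max_chars]
    return f"{preview}\n[truncated: {len(result)} chars; full text saved to {name}]"
```

Keeping a preview in context lets the model decide whether it needs the full text before spending tokens on loading it back.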
@@ -751,7 +751,7 @@ async def _run_pipeline(websocket, topic: str):
judge=None, # implicit judge: accept when output_keys filled
config=LoopConfig(
max_iterations=20,
max_tool_calls_per_turn=10,
max_tool_calls_per_turn=30,
max_history_tokens=32_000,
),
conversation_store=store_a,

@@ -849,7 +849,7 @@ async def _run_pipeline(websocket, topic: str):
judge=None, # implicit judge
config=LoopConfig(
max_iterations=10,
max_tool_calls_per_turn=5,
max_tool_calls_per_turn=30,
max_history_tokens=32_000,
),
conversation_store=store_b,

@@ -1257,7 +1257,7 @@ async def _run_org_pipeline(websocket, topic: str):
judge=judge,
config=LoopConfig(
max_iterations=30,
max_tool_calls_per_turn=25,
max_tool_calls_per_turn=30,
max_history_tokens=32_000,
),
conversation_store=store,

@@ -453,7 +453,7 @@ identity_prompt = (
)
loop_config = {
"max_iterations": 50,
"max_tool_calls_per_turn": 10,
"max_tool_calls_per_turn": 30,
"max_history_tokens": 32000,
}

@@ -539,7 +539,7 @@ class CredentialTesterAgent:
max_tokens=self.config.max_tokens,
loop_config={
"max_iterations": 50,
"max_tool_calls_per_turn": 10,
"max_tool_calls_per_turn": 30,
"max_history_tokens": 32000,
},
conversation_mode="continuous",

@@ -127,7 +127,7 @@ identity_prompt = (
)
loop_config = {
"max_iterations": 100,
"max_tool_calls_per_turn": 20,
"max_tool_calls_per_turn": 30,
"max_history_tokens": 32000,
}

@@ -160,8 +160,8 @@ queen_graph = GraphSpec(
edges=[],
conversation_mode="continuous",
loop_config={
"max_iterations": 200,
"max_tool_calls_per_turn": 10,
"max_iterations": 999_999,
"max_tool_calls_per_turn": 30,
"max_history_tokens": 32000,
},
)

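These hunks loosen the iteration and tool-call limits but leave `max_history_tokens` at 32,000 (64,000 in one pipeline), and the queen's `max_iterations` of 999_999 makes it effectively unbounded. That puts more weight on the history budget. The framework compacts history rather than simply discarding it, so the following is only an illustration of the simplest possible budget policy: drop the oldest messages, using a rough 4-characters-per-token estimate. Both the heuristic and `trim_history` are assumptions, not Hive's algorithm.

```python
def estimate_tokens(text: str) -> int:
    # Rough heuristic (assumption): about 4 characters per token for English text.
    return max(1, len(text) // 4)


def trim_history(messages: list[str], max_history_tokens: int) -> list[str]:
    """Keep the newest messages whose estimated total fits within the budget."""
    kept: list[str] = []
    total = 0
    for message in reversed(messages):  # walk from newest to oldest
        cost = estimate_tokens(message)
        if total + cost > max_history_tokens:
            break
        kept.append(message)
        total += cost
    kept.reverse()  # restore chronological order
    return kept


history = ["a" * 400, "b" * 400, "c" * 400]  # roughly 100 tokens each
print([m[0] for m in trim_history(history, 250)])  # ['b', 'c']
```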
@@ -10,13 +10,35 @@ _ref_dir = Path(__file__).parent.parent / "reference"
_framework_guide = (_ref_dir / "framework_guide.md").read_text()
_file_templates = (_ref_dir / "file_templates.md").read_text()
_anti_patterns = (_ref_dir / "anti_patterns.md").read_text()
_gcu_guide_path = _ref_dir / "gcu_guide.md"
_gcu_guide = _gcu_guide_path.read_text() if _gcu_guide_path.exists() else ""


def _is_gcu_enabled() -> bool:
try:
from framework.config import get_gcu_enabled

return get_gcu_enabled()
except Exception:
return False


def _build_appendices() -> str:
parts = (
"\n\n# Appendix: Framework Reference\n\n"
+ _framework_guide
+ "\n\n# Appendix: File Templates\n\n"
+ _file_templates
+ "\n\n# Appendix: Anti-Patterns\n\n"
+ _anti_patterns
)
if _is_gcu_enabled() and _gcu_guide:
parts += "\n\n# Appendix: GCU Browser Automation Guide\n\n" + _gcu_guide
return parts


# Shared appendices — appended to every coding node's system prompt.
_appendices = (
"\n\n# Appendix: Framework Reference\n\n" + _framework_guide
+ "\n\n# Appendix: File Templates\n\n" + _file_templates
+ "\n\n# Appendix: Anti-Patterns\n\n" + _anti_patterns
)
_appendices = _build_appendices()

# Tools available to both coder (worker) and queen.
_SHARED_TOOLS = [

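`_build_appendices()` adds the GCU guide only when `_is_gcu_enabled()` returns true and the guide file exists. Because `_appendices` is assigned at module level, the GCU setting is read once, when the module is imported. A pure variant that takes the flag and the texts as arguments is easier to unit-test. The sketch below is a hypothetical refactor, `build_appendices`, that produces the same `# Appendix:` layout; it is not the code in this commit.

```python
def build_appendices(
    sections: list[tuple[str, str]], gcu_enabled: bool, gcu_guide: str
) -> str:
    """Concatenate '# Appendix: <title>' blocks, adding the GCU guide only when enabled."""
    parts = "".join(f"\n\n# Appendix: {title}\n\n{body}" for title, body in sections)
    # Mirror the original guard: both the flag and a non-empty guide are required.
    if gcu_enabled and gcu_guide:
        parts += "\n\n# Appendix: GCU Browser Automation Guide\n\n" + gcu_guide
    return parts


sections = [
    ("Framework Reference", "framework guide text"),
    ("File Templates", "templates text"),
    ("Anti-Patterns", "anti-patterns text"),
]
without_gcu = build_appendices(sections, gcu_enabled=False, gcu_guide="gcu text")
with_gcu = build_appendices(sections, gcu_enabled=True, gcu_guide="gcu text")
```

The module-level code could then call this once with `_is_gcu_enabled()` and the loaded files, while tests exercise both branches without touching `framework.config`.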
@@ -348,7 +370,7 @@ value. These DO NOT EXIST.
```python
loop_config = {
"max_iterations": 100,
"max_tool_calls_per_turn": 20,
"max_tool_calls_per_turn": 30,
"max_history_tokens": 32000,
}
```

@@ -388,7 +410,10 @@ If list_agent_tools() shows these don't exist, use alternatives \
**Node rules**:
- **2-4 nodes MAX.** Never exceed 4. Merge thin nodes aggressively.
- A node with 0 tools is NOT a real node — merge it.
- node_type always "event_loop"
- node_type "event_loop" for all regular graph nodes. Use "gcu" ONLY for
browser automation subagents (see GCU appendix). GCU nodes MUST be in a
parent node's sub_agents list, NEVER connected via edges, and NEVER used
as entry/terminal nodes.
- max_node_visits default is 0 (unbounded) — correct for forever-alive. \
Only set >0 in one-shot agents with bounded feedback loops.
- Feedback inputs: nullable_output_keys

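The updated node rules are concrete enough to check mechanically. The validator below is a hypothetical sketch: `Node`, the `(source, target)` edge tuples, and `validate_graph` are illustrative stand-ins rather than Hive's real graph classes, and it assumes the 2-4 node limit counts only regular `event_loop` nodes, not GCU subagents.

```python
from dataclasses import dataclass, field


@dataclass
class Node:
    id: str
    node_type: str = "event_loop"
    sub_agents: list[str] = field(default_factory=list)


def validate_graph(
    nodes: list[Node],
    edges: list[tuple[str, str]],
    entry_node: str,
    terminal_nodes: list[str],
) -> list[str]:
    """Return rule violations; an empty list means the graph passes."""
    errors: list[str] = []
    for node in nodes:
        if node.node_type not in ("event_loop", "gcu"):
            errors.append(f"{node.id}: node_type must be 'event_loop' or 'gcu'")

    regular = [n for n in nodes if n.node_type == "event_loop"]
    if not 2 <= len(regular) <= 4:
        errors.append(f"expected 2-4 event_loop nodes, found {len(regular)}")

    sub_agent_ids = {s for n in nodes for s in n.sub_agents}
    edge_endpoints = {end for edge in edges for end in edge}
    for node in nodes:
        if node.node_type != "gcu":
            continue
        if node.id not in sub_agent_ids:
            errors.append(f"{node.id}: GCU node must be listed in a parent's sub_agents")
        if node.id in edge_endpoints:
            errors.append(f"{node.id}: GCU node must not be connected via edges")
        if node.id == entry_node or node.id in terminal_nodes:
            errors.append(f"{node.id}: GCU node cannot be an entry or terminal node")
    return errors


good = [Node("research", sub_agents=["browser"]), Node("report"), Node("browser", node_type="gcu")]
print(validate_graph(good, [("research", "report")], "research", []))  # []
```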
@@ -466,7 +491,7 @@ Most agents use `terminal_nodes=[]` (forever-alive). This means \
terminal node that doesn't exist. Agent tests MUST be structural:
- Validate graph, node specs, edges, tools, prompts
- Check goal/constraints/success criteria definitions
- Test `AgentRunner.load()` + `_setup()` (skip if no API key)
- Test `AgentRunner.load()` succeeds (structural, no API key needed)
- NEVER call `runner.run()` or `trigger_and_wait()` in tests for \
forever-alive agents — they will hang and time out.
When you restructure an agent (change nodes/edges), always update \

@@ -533,14 +558,35 @@ critical issue. Use sparingly.

## Agent Loading
- load_built_agent(agent_path) — Load a newly built agent as the worker in \
this session. Call after building and validating an agent to make it \
available immediately. The user sees the graph update and can interact \
with it without leaving the session.
this session. If a worker is already loaded, it is automatically unloaded \
first. Call after building and validating an agent to make it available \
immediately.

## Credentials
- list_credentials(credential_id?) — List all authorized credentials in the \
local store. Returns IDs, aliases, status, and identity metadata (never \
secrets). Optionally filter by credential_id.
"""

_queen_behavior = """
# Behavior

## Greeting and identity

When the user greets you ("hi", "hello") or asks what you can do / \
what you are, respond concisely. DO NOT list internal processes \
(validation steps, AgentRunner.load, tool discovery). Focus on \
user-facing capabilities:

1. Direct capabilities: file operations, shell commands, coding, \
agent building & debugging.
2. Delegation: describe what the loaded worker does in one sentence \
(read the Worker Profile at the end of this prompt). If no worker \
is loaded, say so.
3. End with a short prompt: "What do you need?"

Keep it under 10 lines. No bullet-point dumps of every tool you have.

## Direct coding
You can do any coding task directly — reading files, writing code, running \
commands, building agents, debugging. For quick tasks, do them yourself.

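The revised `load_built_agent` description says that a worker already loaded is unloaded first, so a session never holds two workers at once. The sketch below models only that replace-not-stack behavior; `Session`, `unload_worker`, and the event strings are hypothetical, and the real tool's graph-view update and other side effects are omitted.

```python
class Session:
    """Hypothetical model of a session that holds at most one worker."""

    def __init__(self) -> None:
        self.worker: str | None = None
        self.events: list[str] = []

    def unload_worker(self) -> None:
        self.events.append(f"unloaded {self.worker}")
        self.worker = None

    def load_built_agent(self, agent_path: str) -> None:
        # Replace, never stack: an existing worker is unloaded before the new one loads.
        if self.worker is not None:
            self.unload_worker()
        self.worker = agent_path
        self.events.append(f"loaded {agent_path}")


session = Session()
session.load_built_agent("exports/first_agent")
session.load_built_agent("exports/second_agent")
print(session.events)
# ['loaded exports/first_agent', 'unloaded exports/first_agent', 'loaded exports/second_agent']
```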
@@ -556,23 +602,73 @@ subtasks to justify delegation.
- Building, modifying, or configuring agents is ALWAYS your job. Never \
delegate agent construction to the worker, even as a "research" subtask.

## When the user says "run", "execute", or "start" (without specifics)

The loaded worker is described in the Worker Profile below. Ask what \
task or topic they want — do NOT call list_agents() or list directories. \
The worker is already loaded. Just ask for the input the worker needs \
(e.g., a research topic, a target domain, a job description).

If NO worker is loaded, say so and offer to build one.

## When idle (worker not running):
- Greet the user. Mention what the worker can do.
- Greet the user. Mention what the worker can do in one sentence.
- For tasks matching the worker's goal, call start_worker(task).
- For everything else, do it directly.

## When the user clicks Run (external event notification)
When you receive an event that the user clicked Run:
- If the worker started successfully, briefly acknowledge it — do NOT \
repeat the full status. The user can see the graph is running.
- If the worker failed to start (credential or structural error), \
explain the problem clearly and help fix it. For credential errors, \
guide the user to set up the missing credentials. For structural \
issues, offer to fix the agent graph directly.

## When worker is running:
- If the user asks about progress, call get_worker_status().
- If the user asks about progress, call get_worker_status() ONCE and \
report the result. Do NOT poll in a loop.
- NEVER call get_worker_status() repeatedly without user input in between. \
The worker will surface results through client-facing nodes. You do not \
need to monitor it. One check per user request is enough.
- If the user has a concern or instruction for the worker, call \
inject_worker_message(content) to relay it.
- You can still do coding tasks directly while the worker runs.
- If an escalation ticket arrives from the judge, assess severity:
  - Low/transient: acknowledge silently, do not disturb the user.
  - High/critical: notify the user with a brief analysis and suggested action.
- After starting the worker or checking its status, WAIT for the user's \
next message. Do not take autonomous actions unless the user asks.

## When worker asks user a question:
- The system will route the user's response directly to the worker. \
You do not need to relay it. The user will come back to you after responding.

## Showing or describing the loaded worker

When the user asks to "show the graph", "describe the agent", or \
"re-generate the graph", read the Worker Profile and present the \
worker's current architecture as an ASCII diagram. Use the processing \
stages, tools, and edges from the loaded worker. Do NOT enter the \
agent building workflow — you are describing what already exists, not \
building something new.

## Modifying the loaded worker

When the user asks to change, modify, or update the loaded worker \
(e.g., "change the report node", "add a node", "delete node X"):

1. Use the **Path** from the Worker Profile to locate the agent files.
2. Read the relevant files (nodes/__init__.py, agent.py, etc.).
3. Make the requested changes using edit_file / write_file.
4. Run validation (default_agent.validate(), AgentRunner.load(), \
validate_agent_tools()).
5. **Reload the modified worker**: call load_built_agent("{path}") \
so the changes take effect immediately. If a worker is already loaded, \
stop it first, then reload.

Do NOT skip step 5 — without reloading, the user will still be \
interacting with the old version.
"""

_queen_phase_7 = """
@@ -622,7 +718,8 @@ coder_node = NodeSpec(
"A complete, validated Hive agent package exists at "
"exports/{agent_name}/ and passes structural validation."
),
tools=_SHARED_TOOLS + [
tools=_SHARED_TOOLS
+ [
# Graph lifecycle tools (multi-graph sessions)
"load_agent",
"unload_agent",
@@ -711,7 +808,8 @@ queen_node = NodeSpec(
"User's intent is understood, coding tasks are completed correctly, "
"and the worker is managed effectively when delegated to."
),
tools=_SHARED_TOOLS + [
tools=_SHARED_TOOLS
+ [
# Worker lifecycle
"start_worker",
"stop_worker",
@@ -722,6 +820,8 @@ queen_node = NodeSpec(
"notify_operator",
# Agent loading
"load_built_agent",
# Credentials
"list_credentials",
],
system_prompt=(
"You are the Queen — the user's primary interface. You are a coding agent "
@@ -747,6 +847,8 @@ ALL_QUEEN_TOOLS = _SHARED_TOOLS + [
"notify_operator",
# Agent loading
"load_built_agent",
# Credentials
"list_credentials",
]

__all__ = [
@@ -80,7 +80,7 @@ One client-facing node handles ALL user interaction (setup, logging, reports). O
- Validate graph structure (nodes, edges, entry points)
- Verify node specs (tools, prompts, client-facing flag)
- Check goal/constraints/success criteria definitions
- Test that `AgentRunner.load()` + `_setup()` succeeds (skip if no API key)
- Test that `AgentRunner.load()` succeeds (structural, no API key needed)

**What NOT to do:**
```python
@@ -105,3 +105,7 @@ def test_research_routes_back_to_interact(self):
23. **Forgetting sys.path setup in conftest.py** — Tests need `exports/` and `core/` on sys.path.

24. **Not using auto_responder for client-facing nodes** — Tests with client-facing nodes hang without an auto-responder that injects input. But note: even WITH auto_responder, forever-alive agents still hang because the graph never terminates. Auto-responder only helps for agents with terminal nodes.

25. **Manually wiring browser tools on event_loop nodes** — If the agent needs browser automation, use `node_type="gcu"` which auto-includes all browser tools and prepends best-practices guidance. Do NOT manually list browser tool names on event_loop nodes — they may not exist in the MCP server or may be incomplete. See the GCU Guide appendix.

26. **Using GCU nodes as regular graph nodes** — GCU nodes (`node_type="gcu"`) are exclusively subagents. They must ONLY appear in a parent node's `sub_agents=["gcu-node-id"]` list and be invoked via `delegate_to_sub_agent()`. They must NEVER be connected via edges, used as entry nodes, or used as terminal nodes. If a GCU node appears as an edge source or target, the graph will fail pre-load validation.
@@ -235,16 +235,14 @@ class MyAgent:
identity_prompt=identity_prompt,
)

def _setup(self, mock_mode=False):
def _setup(self):
self._storage_path = Path.home() / ".hive" / "agents" / "my_agent"
self._storage_path.mkdir(parents=True, exist_ok=True)
self._tool_registry = ToolRegistry()
mcp_config = Path(__file__).parent / "mcp_servers.json"
if mcp_config.exists():
self._tool_registry.load_mcp_config(mcp_config)
llm = None
if not mock_mode:
llm = LiteLLMProvider(model=self.config.model, api_key=self.config.api_key, api_base=self.config.api_base)
llm = LiteLLMProvider(model=self.config.model, api_key=self.config.api_key, api_base=self.config.api_base)
tools = list(self._tool_registry.get_tools().values())
tool_executor = self._tool_registry.get_executor()
self._graph = self._build_graph()
@@ -257,9 +255,9 @@ class MyAgent:
checkpoint_max_age_days=7, async_checkpoint=True),
)

async def start(self, mock_mode=False):
async def start(self):
if self._agent_runtime is None:
self._setup(mock_mode=mock_mode)
self._setup()
if not self._agent_runtime.is_running:
await self._agent_runtime.start()

@@ -274,8 +272,8 @@ class MyAgent:
return await self._agent_runtime.trigger_and_wait(
entry_point_id=entry_point, input_data=input_data or {}, session_state=session_state)

async def run(self, context, mock_mode=False, session_state=None):
await self.start(mock_mode=mock_mode)
async def run(self, context, session_state=None):
await self.start()
try:
result = await self.trigger_and_wait("default", context, session_state=session_state)
return result or ExecutionResult(success=False, error="Execution timeout")
@@ -471,19 +469,17 @@ def cli():

@cli.command()
@click.option("--topic", "-t", required=True)
@click.option("--mock", is_flag=True)
@click.option("--verbose", "-v", is_flag=True)
def run(topic, mock, verbose):
def run(topic, verbose):
"""Execute the agent."""
setup_logging(verbose=verbose)
result = asyncio.run(default_agent.run({"topic": topic}, mock_mode=mock))
result = asyncio.run(default_agent.run({"topic": topic}))
click.echo(json.dumps({"success": result.success, "output": result.output}, indent=2, default=str))
sys.exit(0 if result.success else 1)


@cli.command()
@click.option("--mock", is_flag=True)
def tui(mock):
def tui():
"""Launch TUI dashboard."""
from pathlib import Path
from framework.tui.app import AdenTUI
@@ -499,7 +495,7 @@ def tui(mock):
storage.mkdir(parents=True, exist_ok=True)
mcp_cfg = Path(__file__).parent / "mcp_servers.json"
if mcp_cfg.exists(): agent._tool_registry.load_mcp_config(mcp_cfg)
llm = None if mock else LiteLLMProvider(model=agent.config.model, api_key=agent.config.api_key, api_base=agent.config.api_base)
llm = LiteLLMProvider(model=agent.config.model, api_key=agent.config.api_key, api_base=agent.config.api_base)
runtime = create_agent_runtime(
graph=agent._build_graph(), goal=agent.goal, storage_path=storage,
entry_points=[EntryPointSpec(id="start", name="Start", entry_node="intake", trigger_type="manual", isolation_level="isolated")],
@@ -564,7 +560,6 @@ import sys
from pathlib import Path

import pytest
import pytest_asyncio

_repo_root = Path(__file__).resolve().parents[3]
for _p in ["exports", "core"]:
@@ -576,18 +571,17 @@ AGENT_PATH = str(Path(__file__).resolve().parents[1])


@pytest.fixture(scope="session")
def mock_mode():
return True
def agent_module():
"""Import the agent package for structural validation."""
import importlib
return importlib.import_module(Path(AGENT_PATH).name)


@pytest_asyncio.fixture(scope="session")
async def runner(tmp_path_factory, mock_mode):
@pytest.fixture(scope="session")
def runner_loaded():
"""Load the agent through AgentRunner (structural only, no LLM needed)."""
from framework.runner.runner import AgentRunner
storage = tmp_path_factory.mktemp("agent_storage")
r = AgentRunner.load(AGENT_PATH, mock_mode=mock_mode, storage_path=storage)
r._setup()
yield r
await r.cleanup_async()
return AgentRunner.load(AGENT_PATH)
```

## entry_points Format
@@ -72,7 +72,7 @@ goal = Goal(
| id | str | required | kebab-case identifier |
| name | str | required | Display name |
| description | str | required | What the node does |
| node_type | str | required | Always `"event_loop"` |
| node_type | str | required | `"event_loop"` or `"gcu"` (browser automation — see GCU Guide appendix) |
| input_keys | list[str] | required | Memory keys this node reads |
| output_keys | list[str] | required | Memory keys this node writes via set_output |
| system_prompt | str | "" | LLM instructions |
@@ -0,0 +1,119 @@
# GCU Browser Automation Guide

## When to Use GCU Nodes

Use `node_type="gcu"` when:
- The user's workflow requires **navigating real websites** (scraping, form-filling, social media interaction, testing web UIs)
- The task involves **dynamic/JS-rendered pages** that `web_scrape` cannot handle (SPAs, infinite scroll, login-gated content)
- The agent needs to **interact with a website** — clicking, typing, scrolling, selecting, uploading files

Do NOT use GCU for:
- Static content that `web_scrape` handles fine
- API-accessible data (use the API directly)
- PDF/file processing
- Anything that doesn't require a browser UI

## What GCU Nodes Are

- `node_type="gcu"` — a declarative enhancement over `event_loop`
- Framework auto-prepends browser best-practices system prompt
- Framework auto-includes all 31 browser tools from `gcu-tools` MCP server
- Same underlying `EventLoopNode` class — no new imports needed
- `tools=[]` is correct — tools are auto-populated at runtime
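The auto-population described in the list above can be pictured as a small resolver applied to each node at runtime. This is an illustrative sketch, not the framework's implementation: `Node`, `BROWSER_TOOLS`, `GCU_PROMPT_PREAMBLE` and `resolve_node` are hypothetical names, and the four tool names stand in for the full `gcu-tools` inventory.

```python
from dataclasses import dataclass, field, replace

# Hypothetical placeholders; the real tool list comes from the gcu-tools MCP server.
BROWSER_TOOLS = ["browser_start", "browser_open", "browser_snapshot", "browser_wait"]
GCU_PROMPT_PREAMBLE = (
    "Browser best practices: prefer browser_snapshot, call browser_wait after navigation."
)


@dataclass(frozen=True)
class Node:
    id: str
    node_type: str = "event_loop"
    tools: list[str] = field(default_factory=list)
    system_prompt: str = ""


def resolve_node(node: Node) -> Node:
    """Return a copy of a node with GCU defaults applied (hypothetical sketch)."""
    if node.node_type != "gcu":
        return node  # event_loop nodes keep exactly what they declare
    # Browser tools first, then any declared extras; dict.fromkeys drops duplicates in order.
    merged = list(dict.fromkeys([*BROWSER_TOOLS, *node.tools]))
    # Prepend the shared guidance so the node's own prompt reads as the task-specific part.
    prompt = f"{GCU_PROMPT_PREAMBLE}\n\n{node.system_prompt}"
    return replace(node, tools=merged, system_prompt=prompt)
```

Because the resolver supplies the browser tools, `tools=[]` on a GCU node loses nothing, and any extra tools a node does declare are kept after the browser set.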

## GCU Architecture Pattern

GCU nodes are **subagents** — invoked via `delegate_to_sub_agent()`, not connected via edges.

- Primary nodes (`event_loop`, client-facing) orchestrate; GCU nodes do browser work
- Parent node declares `sub_agents=["gcu-node-id"]` and calls `delegate_to_sub_agent(agent_id="gcu-node-id", task="...")`
- GCU nodes set `max_node_visits=1` (single execution per delegation), `client_facing=False`
- GCU nodes use `output_keys=["result"]` and return structured JSON via `set_output("result", ...)`
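The placement rules above (and item 26 of the common-mistakes list: a GCU node is never an edge endpoint, entry node or terminal node) lend themselves to a pre-load check. A minimal sketch over plain data: `validate_gcu_placement` and its input shapes (`nodes` maps id to `node_type`, `edges` are `(source, target)` pairs, `sub_agents` maps a parent id to its child ids) are assumptions, not the framework's actual validator. The last check, that every GCU node is listed in some parent's `sub_agents`, is an extra rule this sketch adds.

```python
def validate_gcu_placement(
    nodes: dict[str, str],
    edges: list[tuple[str, str]],
    entry_node: str,
    terminal_nodes: list[str],
    sub_agents: dict[str, list[str]],
) -> list[str]:
    """Return human-readable errors; an empty list means the graph passes (hypothetical)."""
    gcu_ids = {node_id for node_id, node_type in nodes.items() if node_type == "gcu"}
    errors: list[str] = []

    # GCU nodes must never be wired into the graph through edges.
    for source, target in edges:
        for endpoint in (source, target):
            if endpoint in gcu_ids:
                errors.append(f"GCU node '{endpoint}' used in edge {source} -> {target}")

    # Nor may they start or end the graph.
    if entry_node in gcu_ids:
        errors.append(f"GCU node '{entry_node}' used as entry node")
    for node_id in terminal_nodes:
        if node_id in gcu_ids:
            errors.append(f"GCU node '{node_id}' used as terminal node")

    # Extra rule in this sketch: each GCU node should be delegated to by some parent.
    delegated = {child for children in sub_agents.values() for child in children}
    for node_id in sorted(gcu_ids - delegated):
        errors.append(f"GCU node '{node_id}' is not listed in any parent's sub_agents")

    return errors
```

Collecting every violation, rather than stopping at the first, lets an agent builder fix a mis-wired graph in one pass.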

## GCU Node Definition Template

```python
gcu_browser_node = NodeSpec(
    id="gcu-browser-worker",
    name="Browser Worker",
    description="Browser subagent that does X.",
    node_type="gcu",
    client_facing=False,
    max_node_visits=1,
    input_keys=[],
    output_keys=["result"],
    tools=[],  # Auto-populated with all browser tools
    system_prompt="""\
You are a browser agent. Your job: [specific task].

## Workflow
1. browser_start (only if no browser is running yet)
2. browser_open(url=TARGET_URL) — note the returned targetId
3. browser_snapshot to read the page
4. [task-specific steps]
5. set_output("result", JSON)

## Output format
set_output("result", JSON) with:
- [field]: [type and description]
""",
)
```

## Parent Node Template (orchestrating GCU subagents)

```python
orchestrator_node = NodeSpec(
    id="orchestrator",
    ...
    node_type="event_loop",
    sub_agents=["gcu-browser-worker"],
    system_prompt="""\
...
delegate_to_sub_agent(
    agent_id="gcu-browser-worker",
    task="Navigate to [URL]. Do [specific task]. Return JSON with [fields]."
)
...
""",
    tools=[],  # Orchestrator doesn't need browser tools
)
```

## mcp_servers.json with GCU

```json
{
  "hive-tools": { ... },
  "gcu-tools": {
    "transport": "stdio",
    "command": "uv",
    "args": ["run", "python", "-m", "gcu.server", "--stdio"],
    "cwd": "../../tools",
    "description": "GCU tools for browser automation"
  }
}
```

Note: `gcu-tools` is auto-added if any node uses `node_type="gcu"`, but including it explicitly is fine.
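The note above says `gcu-tools` is added automatically when any node is a GCU node. The sketch below shows one way that merge could behave on the parsed `mcp_servers.json` dict; `ensure_gcu_server` and `DEFAULT_GCU_SERVER` are hypothetical names, and the default entry simply copies the JSON example in this guide rather than the framework's real default.

```python
import copy

# Copied from the example mcp_servers.json in this guide (assumed default).
DEFAULT_GCU_SERVER = {
    "transport": "stdio",
    "command": "uv",
    "args": ["run", "python", "-m", "gcu.server", "--stdio"],
    "cwd": "../../tools",
    "description": "GCU tools for browser automation",
}


def ensure_gcu_server(mcp_config: dict, node_types: list[str]) -> dict:
    """Return a config that includes gcu-tools whenever a GCU node is present.

    An entry the user already wrote is left untouched, which is why listing it
    explicitly is harmless. The input dict is never mutated.
    """
    config = copy.deepcopy(mcp_config)
    if "gcu" in node_types and "gcu-tools" not in config:
        config["gcu-tools"] = copy.deepcopy(DEFAULT_GCU_SERVER)
    return config
```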

## GCU System Prompt Best Practices

Key rules to bake into GCU node prompts:

- Prefer `browser_snapshot` over `browser_get_text("body")` — compact accessibility tree vs 100KB+ raw HTML
- Always `browser_wait` after navigation
- Use large scroll amounts (~2000-5000) for lazy-loaded content
- For spillover files, use `run_command` with grep, not `read_file`
- If an auth wall is detected, report immediately — don't attempt login
- Keep tool calls per turn ≤10
- Tab isolation: when a browser is already running, use `browser_open(background=true)` and pass `target_id` to every call

## GCU Anti-Patterns

- Using `browser_screenshot` to read text (use `browser_snapshot`)
- Re-navigating after scrolling (resets scroll position)
- Attempting login on auth walls
- Forgetting `target_id` in multi-tab scenarios
- Putting browser tools directly on `event_loop` nodes instead of using the GCU subagent pattern
- Making GCU nodes `client_facing=True` (they should be autonomous subagents)
@@ -761,7 +761,7 @@ class GraphBuilder:
path = self.storage_path / f"{session_id}.json"
if not path.exists():
raise FileNotFoundError(f"Session not found: {session_id}")
return BuildSession.model_validate_json(path.read_text())
return BuildSession.model_validate_json(path.read_text(encoding="utf-8"))

@classmethod
def list_sessions(cls, storage_path: Path | str | None = None) -> list[str]:
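The switch from `path.read_text()` to `path.read_text(encoding="utf-8")` matters because, without an explicit encoding, `Path.read_text()` decodes with the locale's preferred encoding, which on many Windows setups is a legacy code page such as cp1252 rather than UTF-8. A small round-trip sketch, using `json` in place of the pydantic `BuildSession` model; `save_session` and `load_session` are illustrative helpers, not part of `GraphBuilder`.

```python
import json
import tempfile
from pathlib import Path


def save_session(path: Path, data: dict) -> None:
    # Write raw UTF-8 (ensure_ascii=False) with an explicit encoding on every platform.
    path.write_text(json.dumps(data, ensure_ascii=False), encoding="utf-8")


def load_session(path: Path) -> dict:
    # Mirror the writer's encoding instead of relying on the locale default.
    return json.loads(path.read_text(encoding="utf-8"))


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        session_file = Path(tmp) / "session-1.json"
        save_session(session_file, {"name": "Café agent ✓"})
        print(load_session(session_file)["name"])
```

Pinning the encoding on both the write and the read side is what makes a session file created on one machine loadable on another.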

@@ -90,6 +90,11 @@ def get_api_key() -> str | None:
return None


def get_gcu_enabled() -> bool:
"""Return whether GCU (browser automation) is enabled in user config."""
return get_hive_config().get("gcu_enabled", False)


def get_api_base() -> str | None:
"""Return the api_base URL for OpenAI-compatible endpoints, if configured."""
llm = get_hive_config().get("llm", {})
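`get_gcu_enabled()` treats a missing `gcu_enabled` key as "off", so browser automation is opt-in. The self-contained sketch below reproduces that defaulting over a JSON file. `load_hive_config` is a hypothetical stand-in for `get_hive_config()`, whose file location this diff does not show, so the path is a parameter; the `bool()` coercion is an extra the sketch adds.

```python
import json
import tempfile
from pathlib import Path


def load_hive_config(path: Path) -> dict:
    """Hypothetical stand-in for get_hive_config(): missing or invalid file -> {}."""
    try:
        data = json.loads(path.read_text(encoding="utf-8"))
    except (FileNotFoundError, json.JSONDecodeError):
        return {}
    return data if isinstance(data, dict) else {}


def gcu_enabled(path: Path) -> bool:
    # Opt-in flag: an absent key keeps browser automation disabled.
    return bool(load_hive_config(path).get("gcu_enabled", False))


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        cfg = Path(tmp) / "config.json"
        cfg.write_text(json.dumps({"gcu_enabled": True}), encoding="utf-8")
        print(gcu_enabled(cfg))
```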

@@ -42,6 +42,14 @@ For Vault integration:
from core.framework.credentials.vault import HashiCorpVaultStorage
"""

from .key_storage import (
delete_aden_api_key,
generate_and_save_credential_key,
load_aden_api_key,
load_credential_key,
save_aden_api_key,
save_credential_key,
)
from .models import (
CredentialDecryptionError,
CredentialError,
@@ -132,6 +140,13 @@ __all__ = [
"CredentialRefreshError",
"CredentialValidationError",
"CredentialDecryptionError",
# Key storage (bootstrap credentials)
"load_credential_key",
"save_credential_key",
"generate_and_save_credential_key",
"load_aden_api_key",
"save_aden_api_key",
"delete_aden_api_key",
# Validation
"ensure_credential_key_env",
"validate_agent_credentials",
@@ -26,7 +26,7 @@ Usage:
storage = AdenCachedStorage(
local_storage=EncryptedFileStorage(),
aden_provider=provider,
cache_ttl_seconds=300, # Re-check Aden every 5 minutes
cache_ttl_seconds=300, # Re-check Aden every 5 minutes
)

# Create store
@@ -77,7 +77,7 @@ class AdenCachedStorage(CredentialStorage):
storage = AdenCachedStorage(
local_storage=EncryptedFileStorage(),
aden_provider=provider,
cache_ttl_seconds=300, # 5 minutes
cache_ttl_seconds=300, # 5 minutes
)

store = CredentialStore(
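`cache_ttl_seconds` sets how long `AdenCachedStorage` serves its local copy before re-checking Aden (300 seconds is the 5 minutes the comments mention). The sketch below shows the time-to-live idea in isolation; `TTLCache`, its `fetch` callable and the injectable `clock` are hypothetical and make no claim about the class's internals. With `ttl_seconds=0`, every `get` goes back to the remote source.

```python
import time
from typing import Callable


class TTLCache:
    """Serve a cached value while it is younger than ttl_seconds; otherwise re-fetch."""

    def __init__(
        self,
        fetch: Callable[[str], str],
        ttl_seconds: float,
        clock: Callable[[], float] = time.monotonic,
    ) -> None:
        self._fetch = fetch
        self._ttl = ttl_seconds
        self._clock = clock  # injectable so tests can control time
        self._entries: dict[str, tuple[str, float]] = {}  # key -> (value, fetched_at)

    def get(self, key: str) -> str:
        now = self._clock()
        cached = self._entries.get(key)
        if cached is not None and now - cached[1] < self._ttl:
            return cached[0]  # still fresh: no remote call
        value = self._fetch(key)  # missing or expired: ask the remote source
        self._entries[key] = (value, now)
        return value
```

A monotonic clock is used by default so that wall-clock adjustments cannot make an entry look fresher or older than it is.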

@@ -0,0 +1,201 @@
"""
Dedicated file-based storage for bootstrap credentials.

HIVE_CREDENTIAL_KEY -> ~/.hive/secrets/credential_key (plain text, chmod 600)
ADEN_API_KEY -> ~/.hive/credentials/ (encrypted via EncryptedFileStorage)

Boot order:
1. load_credential_key() -- reads/generates the Fernet key, sets os.environ
2. load_aden_api_key() -- uses the encrypted store (which needs the key from step 1)
"""

from __future__ import annotations

import logging
import os
import stat
from pathlib import Path

logger = logging.getLogger(__name__)

CREDENTIAL_KEY_PATH = Path.home() / ".hive" / "secrets" / "credential_key"
CREDENTIAL_KEY_ENV_VAR = "HIVE_CREDENTIAL_KEY"
ADEN_CREDENTIAL_ID = "aden_api_key"
ADEN_ENV_VAR = "ADEN_API_KEY"


# ---------------------------------------------------------------------------
# HIVE_CREDENTIAL_KEY
# ---------------------------------------------------------------------------


def load_credential_key() -> str | None:
    """Load HIVE_CREDENTIAL_KEY with priority: env > file > shell config.

    Sets ``os.environ["HIVE_CREDENTIAL_KEY"]`` as a side-effect when found.
    Returns the key string, or ``None`` if unavailable everywhere.
    """
    # 1. Already in environment (set by parent process, CI, Windows Registry, etc.)
    key = os.environ.get(CREDENTIAL_KEY_ENV_VAR)
    if key:
        return key

    # 2. Dedicated secrets file
    key = _read_credential_key_file()
    if key:
        os.environ[CREDENTIAL_KEY_ENV_VAR] = key
        return key

    # 3. Shell config fallback (backward compat for old installs)
    key = _read_from_shell_config(CREDENTIAL_KEY_ENV_VAR)
    if key:
        os.environ[CREDENTIAL_KEY_ENV_VAR] = key
        return key

    return None


def save_credential_key(key: str) -> Path:
    """Save HIVE_CREDENTIAL_KEY to ``~/.hive/secrets/credential_key``.

    Creates parent dirs with mode 700, writes the file with mode 600.
    Also sets ``os.environ["HIVE_CREDENTIAL_KEY"]``.

    Returns:
        The path that was written.
    """
    path = CREDENTIAL_KEY_PATH
    path.parent.mkdir(parents=True, exist_ok=True)
    # Restrict the secrets directory itself
    path.parent.chmod(stat.S_IRWXU)  # 0o700

    path.write_text(key)
    path.chmod(stat.S_IRUSR | stat.S_IWUSR)  # 0o600

    os.environ[CREDENTIAL_KEY_ENV_VAR] = key
    return path


def generate_and_save_credential_key() -> str:
    """Generate a new Fernet key and persist it to ``~/.hive/secrets/credential_key``.

    Returns:
        The generated key string.
    """
    from cryptography.fernet import Fernet

    key = Fernet.generate_key().decode()
    save_credential_key(key)
    return key
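`save_credential_key()` creates the file with `write_text()` and only then narrows it to `0o600`, so for a brief moment the file exists with the process's default permissions. One common alternative, shown here as a sketch rather than a change this commit makes, is to create the file with `os.open(..., 0o600)` so it is never readable by group or others. `write_secret` is a hypothetical helper, and the permission bits only carry this meaning on POSIX systems.

```python
import os
import stat
import tempfile
from pathlib import Path


def write_secret(path: Path, secret: str) -> Path:
    """Write a secret so it is never group/other readable (POSIX sketch)."""
    # Directory: owner-only access (0o700), as save_credential_key() does.
    path.parent.mkdir(parents=True, exist_ok=True)
    path.parent.chmod(stat.S_IRWXU)

    # A newly created file starts at 0o600 or stricter: umask can only clear bits.
    fd = os.open(path, os.O_WRONLY | os.O_CREAT | os.O_TRUNC, 0o600)
    with os.fdopen(fd, "w", encoding="utf-8") as handle:
        # The mode argument only applies on creation, so tighten a pre-existing
        # file explicitly before writing the new secret into it.
        os.fchmod(handle.fileno(), stat.S_IRUSR | stat.S_IWUSR)
        handle.write(secret)
    return path


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        target = write_secret(Path(tmp) / "secrets" / "credential_key", "example-key")
        print(oct(stat.S_IMODE(target.stat().st_mode)))
```

Unlike `chmod`, `fchmod` is not affected by the umask, so the final mode is exactly `0o600` even when the file already existed with looser permissions.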


# ---------------------------------------------------------------------------
# ADEN_API_KEY
# ---------------------------------------------------------------------------


def load_aden_api_key() -> str | None:
    """Load ADEN_API_KEY with priority: env > encrypted store > shell config.

    **Must** be called after ``load_credential_key()`` because the encrypted
    store depends on HIVE_CREDENTIAL_KEY.

    Sets ``os.environ["ADEN_API_KEY"]`` as a side-effect when found.
    Returns the key string, or ``None`` if unavailable everywhere.
    """
    # 1. Already in environment
    key = os.environ.get(ADEN_ENV_VAR)
    if key:
        return key

    # 2. Encrypted credential store
    key = _read_aden_from_encrypted_store()
    if key:
        os.environ[ADEN_ENV_VAR] = key
        return key

    # 3. Shell config fallback (backward compat)
    key = _read_from_shell_config(ADEN_ENV_VAR)
    if key:
        os.environ[ADEN_ENV_VAR] = key
        return key

    return None


def save_aden_api_key(key: str) -> None:
    """Save ADEN_API_KEY to the encrypted credential store.

    Also sets ``os.environ["ADEN_API_KEY"]``.
    """
    from pydantic import SecretStr

    from .models import CredentialKey, CredentialObject
    from .storage import EncryptedFileStorage

    storage = EncryptedFileStorage()
    cred = CredentialObject(
        id=ADEN_CREDENTIAL_ID,
        keys={"api_key": CredentialKey(name="api_key", value=SecretStr(key))},
    )
    storage.save(cred)
    os.environ[ADEN_ENV_VAR] = key


def delete_aden_api_key() -> None:
    """Remove ADEN_API_KEY from the encrypted store and ``os.environ``."""
    try:
        from .storage import EncryptedFileStorage

        storage = EncryptedFileStorage()
        storage.delete(ADEN_CREDENTIAL_ID)
    except Exception:
        logger.debug("Could not delete %s from encrypted store", ADEN_CREDENTIAL_ID)

    os.environ.pop(ADEN_ENV_VAR, None)


# ---------------------------------------------------------------------------
# Internal helpers
# ---------------------------------------------------------------------------


def _read_credential_key_file() -> str | None:
    """Read the credential key from ``~/.hive/secrets/credential_key``."""
    try:
        if CREDENTIAL_KEY_PATH.is_file():
            value = CREDENTIAL_KEY_PATH.read_text(encoding="utf-8").strip()
            if value:
                return value
    except Exception:
        logger.debug("Could not read %s", CREDENTIAL_KEY_PATH)
    return None


def _read_from_shell_config(env_var: str) -> str | None:
    """Fallback: read an env var from ~/.zshrc or ~/.bashrc."""
    try:
        from aden_tools.credentials.shell_config import check_env_var_in_shell_config

        found, value = check_env_var_in_shell_config(env_var)
        if found and value:
            return value
    except ImportError:
        pass
    return None


def _read_aden_from_encrypted_store() -> str | None:
    """Try to load ADEN_API_KEY from the encrypted credential store."""
    if not os.environ.get(CREDENTIAL_KEY_ENV_VAR):
        return None
    try:
        from .storage import EncryptedFileStorage

        storage = EncryptedFileStorage()
        cred = storage.load(ADEN_CREDENTIAL_ID)
        if cred:
            return cred.get_key("api_key")
    except Exception:
        logger.debug("Could not load %s from encrypted store", ADEN_CREDENTIAL_ID)
    return None
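Both public loaders in `key_storage.py` follow the same lookup chain: the environment first, then a stored source, then the shell-config fallback, writing any hit back into the environment. The generic sketch below captures that pattern; `load_with_fallbacks` and its `sources` list are hypothetical, and in use the callables would play the role of `_read_credential_key_file()` or `_read_from_shell_config(...)`.

```python
import os
from typing import Callable, MutableMapping, Optional, Sequence

Source = Callable[[], Optional[str]]


def load_with_fallbacks(
    env_var: str,
    sources: Sequence[Source],
    environ: MutableMapping[str, str] = os.environ,
) -> Optional[str]:
    """Return the first non-empty value: the env var, then each source in order.

    A value found in a source is written back to ``environ`` so later lookups
    short-circuit, mirroring the side effect documented in load_credential_key().
    """
    value = environ.get(env_var)
    if value:
        return value
    for source in sources:
        value = source()
        if value:  # empty strings count as "not found", like the original checks
            environ[env_var] = value
            return value
    return None
```

Calling it for `HIVE_CREDENTIAL_KEY` before `ADEN_API_KEY` preserves the documented boot order, since an encrypted-store source for the API key can only decrypt once the first key is in the environment.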

@@ -256,57 +256,23 @@ class CredentialSetupSession:

def _ensure_credential_key(self) -> bool:
"""Ensure HIVE_CREDENTIAL_KEY is available for encrypted storage."""
if os.environ.get("HIVE_CREDENTIAL_KEY"):