Compare commits

107 Commits

| Author | SHA1 | Date | |

|---|---|---|---|

| adf1a10318 | |||

| a3916a6932 | |||

| cbd2c86bbf | |||

| f921846879 | |||

| a370403b16 | |||

| ad6d504ea4 | |||

| 65962ddf58 | |||

| bba44430c4 | |||

| 69c71d77fb | |||

| 7b98a6613a | |||

| 26481e27a6 | |||

| bb227b3d73 | |||

| 8a0cf5e0ae | |||

| 69218d5699 | |||

| 7d1433af21 | |||

| 0bfbf1e9c5 | |||

| 1ca4f5b22b | |||

| 0984e4c1e8 | |||

| 4cbf5a7434 | |||

| b33178c5be | |||

| dc6a336c60 | |||

| b855336448 | |||

| de021977fd | |||

| cd2b3fcd16 | |||

| b64024ede5 | |||

| a280d23113 | |||

| 41785abdba | |||

| de494c7e55 | |||

| 5fa0903ea8 | |||

| 7bd99fe074 | |||

| c838e1ca6d | |||

| f475923353 | |||

| 43f43c92e3 | |||

| 5463134322 | |||

| 3fbb392103 | |||

| a162da17e1 | |||

| b565134d57 | |||

| 3aafc89912 | |||

| 93449f92fe | |||

| d766e68d42 | |||

| 1d8b1f9774 | |||

| 5ea9abae83 | |||

| 15957499c5 | |||

| 0b50d9e874 | |||

| a1e54922bd | |||

| 63c0ca34ea | |||

| 135477e516 | |||

| 8cac49cd91 | |||

| 28dce63682 | |||

| 313ac952e0 | |||

| 0633d5130b | |||

| 995e487b49 | |||

| 64b58b57e0 | |||

| c6465908df | |||

| ca96bcc09f | |||

| 65ee628fae | |||

| 02043614e5 | |||

| 212b9bf9d4 | |||

| 6070c30a88 | |||

| 8a653e51bc | |||

| 1c1dcb9c33 | |||

| b7d357aea2 | |||

| 14182c45fc | |||

| 2fa8f4283c | |||

| ccb394675b | |||

| 931487a7d4 | |||

| fb28280ced | |||

| 52f16d5bb6 | |||

| e5b6c8581a | |||

| 2b63135afb | |||

| 779b376c6e | |||

| b1f3d6b155 | |||

| e7da62e61c | |||

| 7176745e1c | |||

| 20efd523c9 | |||

| edf51e6996 | |||

| 6b867883ce | |||

| 35a05f4120 | |||

| e0e78a97ce | |||

| 214098aaae | |||

| 754e33a1ae | |||

| b11b43bbe1 | |||

| 86f4645d1c | |||

| 2d05e96cd5 | |||

| 9c44d3b793 | |||

| 9b89ac694e | |||

| 630d8208cf | |||

| 9b342dc593 | |||

| ad879de6ff | |||

| 795266aab4 | |||

| 4e4ef121f9 | |||

| ddb9126955 | |||

| bac6d6dd68 | |||

| 3451570541 | |||

| e5e939f344 | |||

| 0d51d25482 | |||

| a0a5b10df0 | |||

| 04bac93c14 | |||

| 047f4a1a0c | |||

| 7994b90dfa | |||

| 04b6a80370 | |||

| a04a8a866d | |||

| 8c9baa62b0 | |||

| 262eaa6d84 | |||

| fc1a48f3bc | |||

| 060f320cd1 | |||

| bff32bcaa3 |

@@ -70,6 +70,7 @@ exports/*

|

||||

.agent-builder-sessions/*

|

||||

|

||||

.claude/settings.local.json

|

||||

.claude/skills/ship-it/

|

||||

|

||||

.venv

|

||||

|

||||

|

||||

@@ -37,11 +37,11 @@

|

||||

|

||||

## Overview

|

||||

|

||||

Build autonomous, reliable, self-improving AI agents without hardcoding workflows. Define your goal through conversation with a coding agent, and the framework generates a node graph with dynamically created connection code. When things break, the framework captures failure data, evolves the agent through the coding agent, and redeploys. Built-in human-in-the-loop nodes, credential management, and real-time monitoring give you control without sacrificing adaptability.

|

||||

Build autonomous, reliable, self-improving AI agents without hardcoding workflows. Define your goal through conversation with the Hive coding agent (the queen), and the framework generates a node graph with dynamically created connection code. When things break, the framework captures failure data, evolves the agent through the coding agent, and redeploys. Built-in human-in-the-loop nodes, credential management, and real-time monitoring give you control without sacrificing adaptability.

|

||||

|

||||

Visit [adenhq.com](https://adenhq.com) for complete documentation, examples, and guides.

|

||||

|

||||

https://github.com/user-attachments/assets/846c0cc7-ffd6-47fa-b4b7-495494857a55

|

||||

[](https://www.youtube.com/watch?v=XDOG9fOaLjU)

|

||||

|

||||

## Who Is Hive For?

|

||||

|

||||

@@ -50,7 +50,7 @@ Hive is designed for developers and teams who want to build **production-grade A

|

||||

Hive is a good fit if you:

|

||||

|

||||

- Want AI agents that **execute real business processes**, not demos

|

||||

- Prefer **goal-driven development** over hardcoded workflows

|

||||

- Need **fast or high-volume agent execution** rather than open-ended workflows

|

||||

- Need **self-healing and adaptive agents** that improve over time

|

||||

- Require **human-in-the-loop control**, observability, and cost limits

|

||||

- Plan to run agents in **production environments**

|

||||

@@ -81,7 +81,7 @@ Use Hive when you need:

|

||||

### Prerequisites

|

||||

|

||||

- Python 3.11+ for agent development

|

||||

- Claude Code, Codex CLI, or Cursor for utilizing agent skills

|

||||

- An LLM provider that powers the agents

|

||||

|

||||

> **Note for Windows Users:** It is strongly recommended to use **WSL (Windows Subsystem for Linux)** or **Git Bash** to run this framework. Some core automation scripts may not execute correctly in standard Command Prompt or PowerShell.

|

||||

|

||||

@@ -110,71 +110,36 @@ This sets up:

|

||||

- **LLM provider** - Interactive default model configuration

|

||||

- All required Python dependencies with `uv`

|

||||

|

||||

- Finally, it launches the open hive interface in your browser

|

||||

|

||||

<img width="2500" height="1214" alt="home-screen" src="https://github.com/user-attachments/assets/134d897f-5e75-4874-b00b-e0505f6b45c4" />

|

||||

|

||||

### Build Your First Agent

|

||||

|

||||

```bash

|

||||

# Build an agent using Claude Code

|

||||

claude> /hive

|

||||

Type the agent you want to build in the home input box

|

||||

|

||||

# Test your agent

|

||||

claude> /hive-debugger

|

||||

<img width="2500" height="1214" alt="Image" src="https://github.com/user-attachments/assets/1ce19141-a78b-46f5-8d64-dbf987e048f4" />

|

||||

|

||||

# (at separate terminal) Launch the interactive dashboard

|

||||

hive tui

|

||||

### Use Template Agents

|

||||

|

||||

# Or run directly

|

||||

hive run exports/your_agent_name --input '{"key": "value"}'

|

||||

```

|

||||

Click "Try a sample agent" and browse the templates. You can run a template directly or build your own version on top of it.

|

||||

|

||||

## Coding Agent Support

|

||||

### Run Agents

|

||||

|

||||

### Codex CLI

|

||||

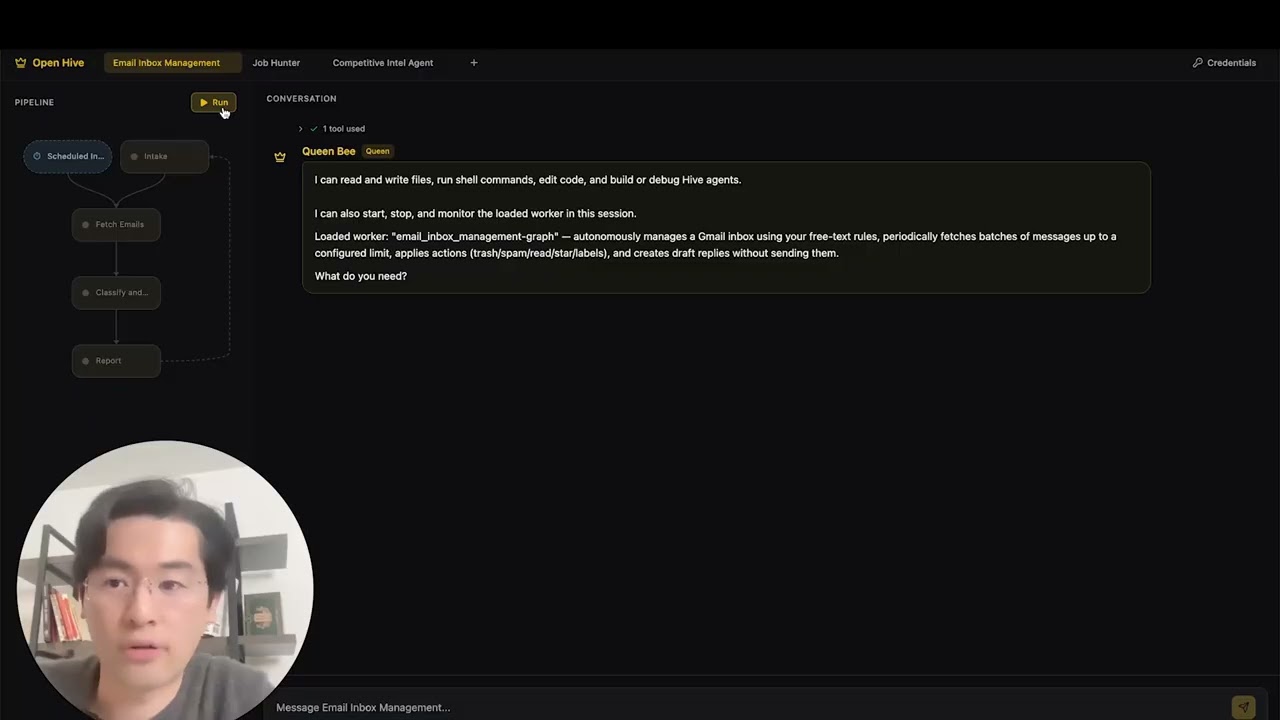

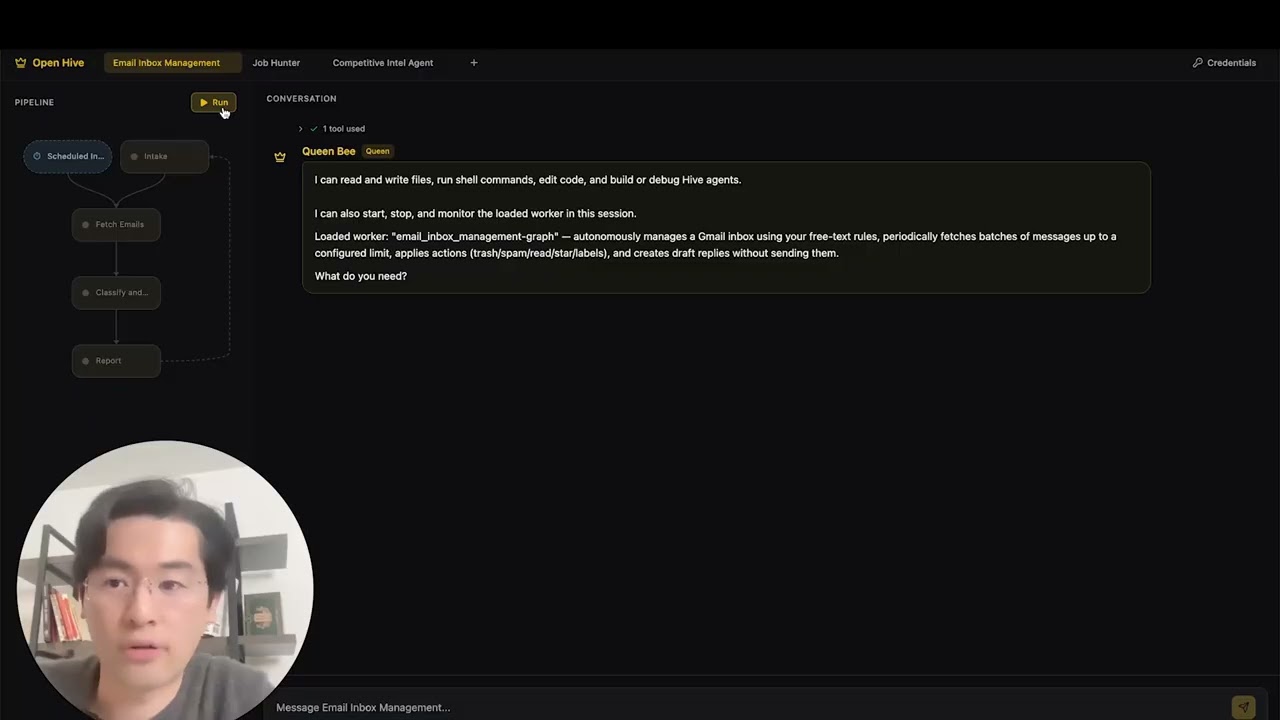

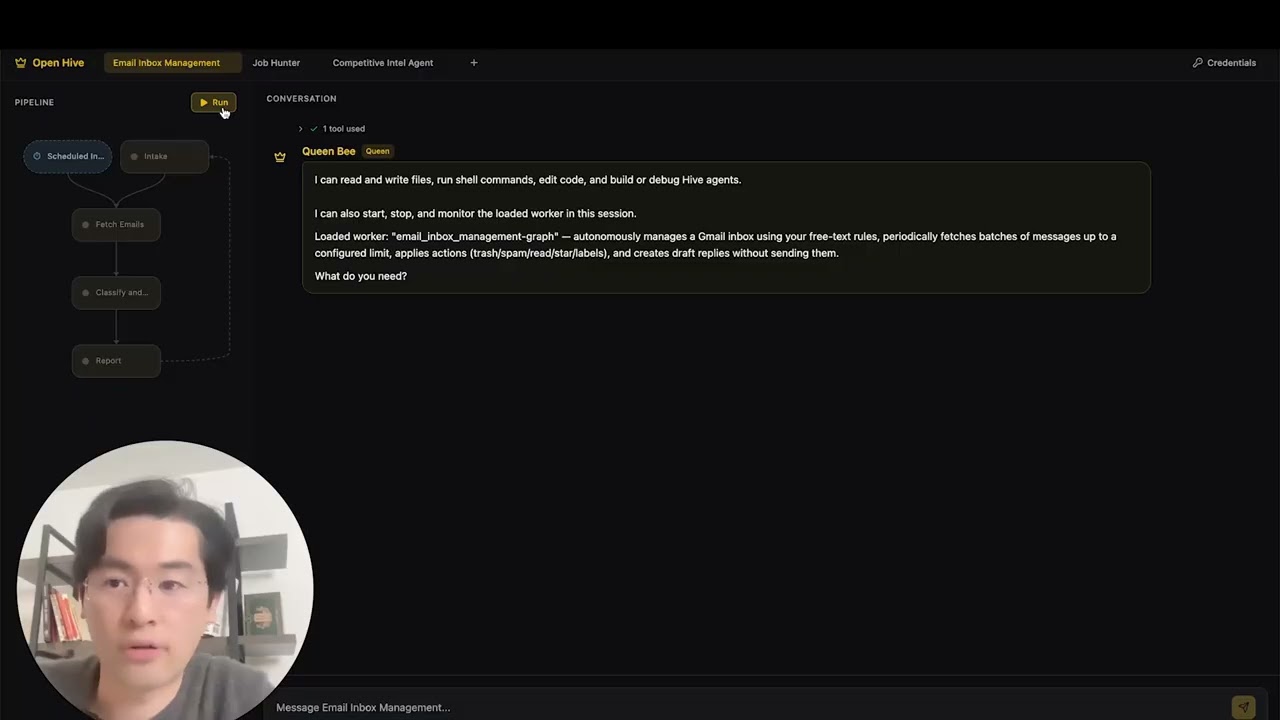

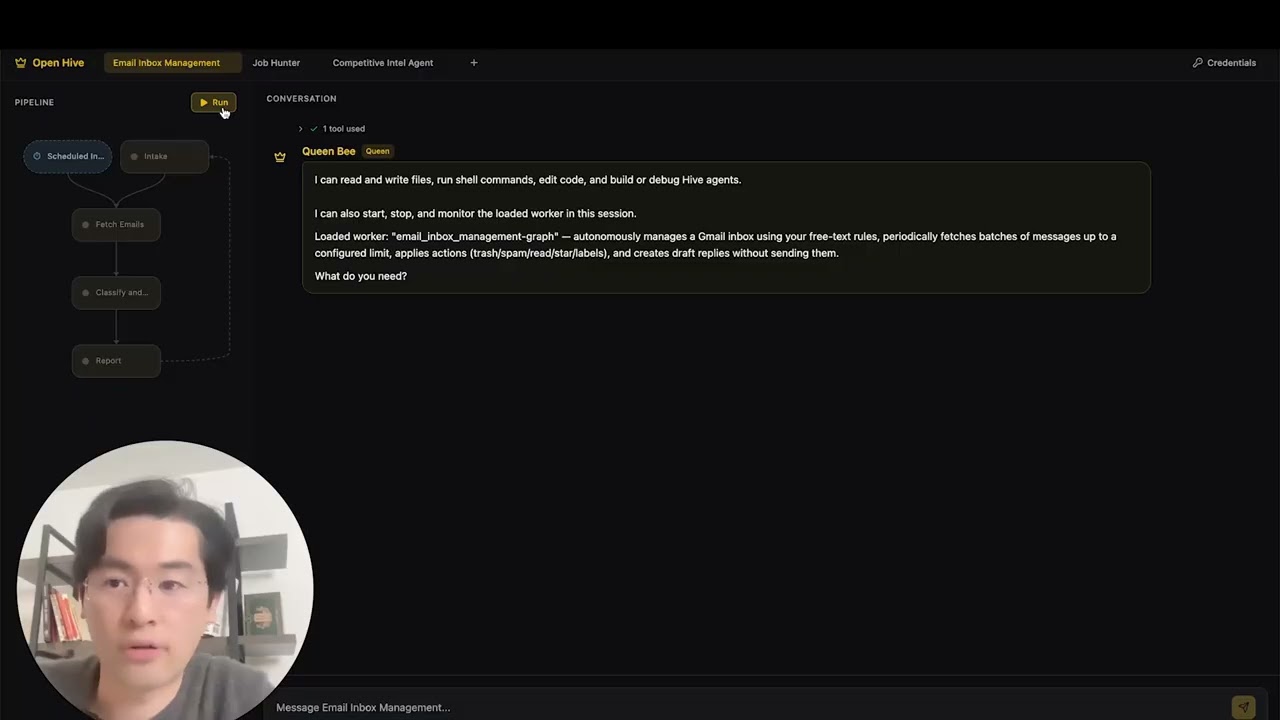

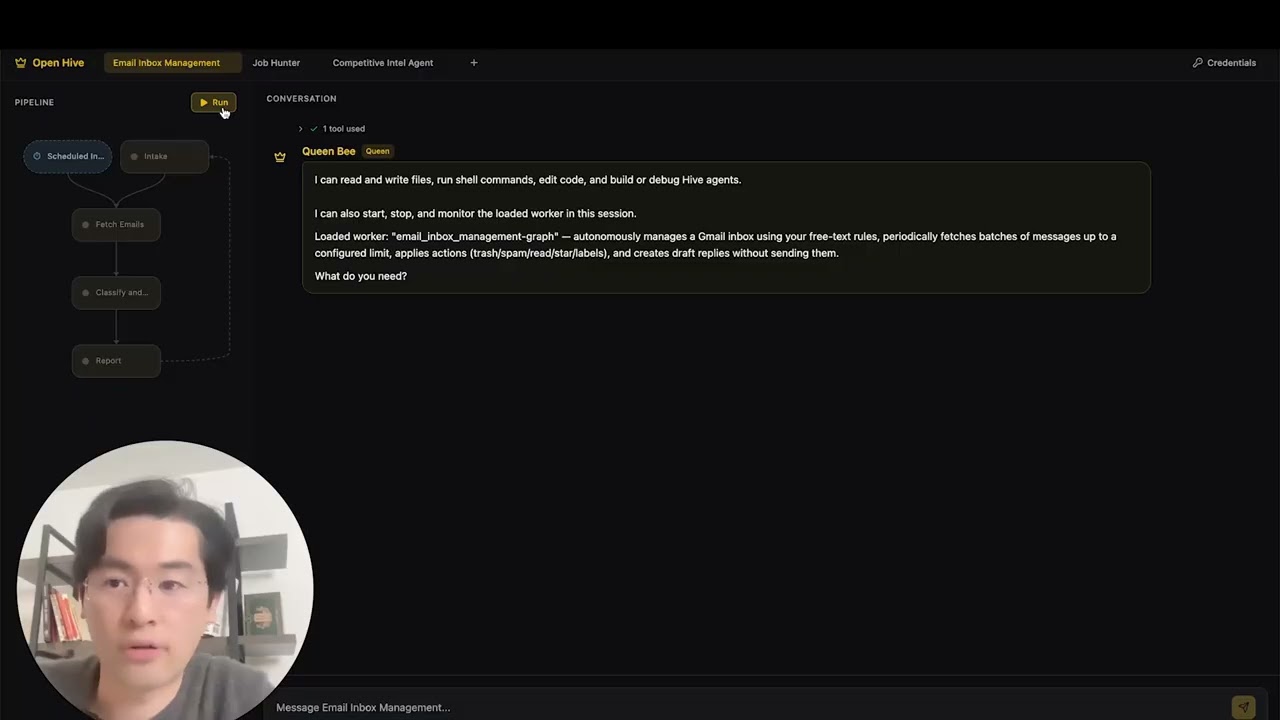

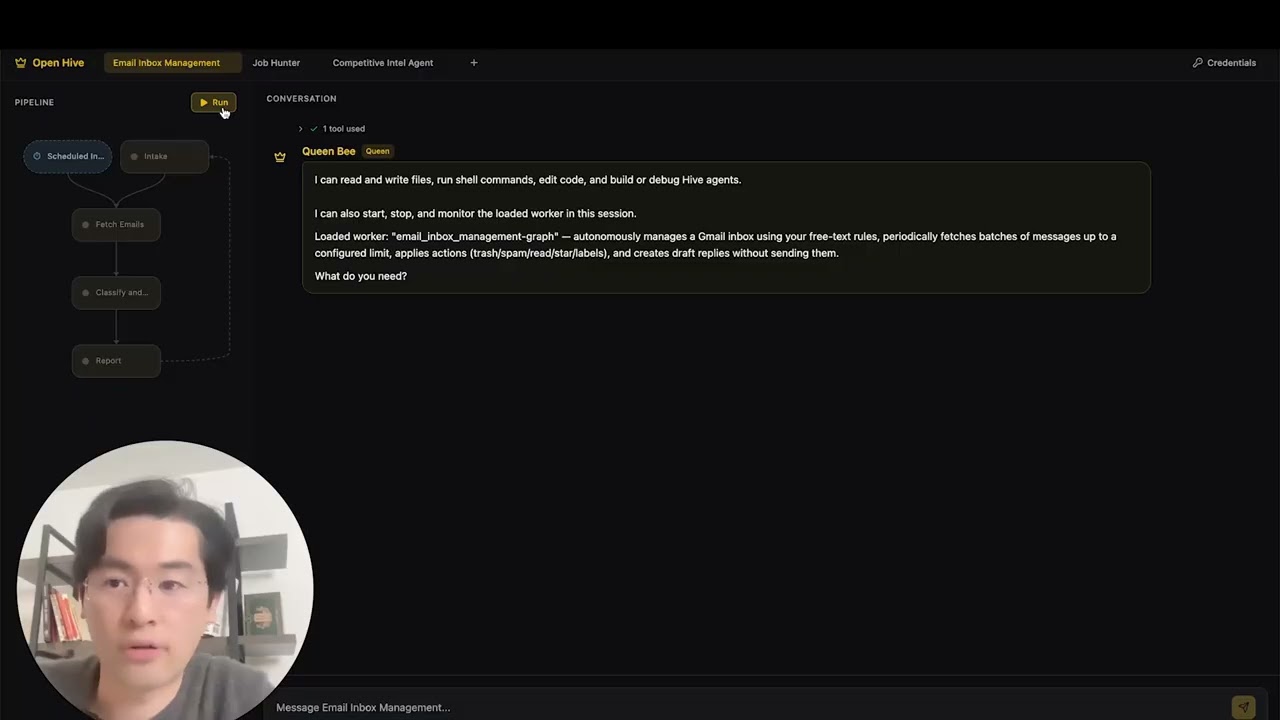

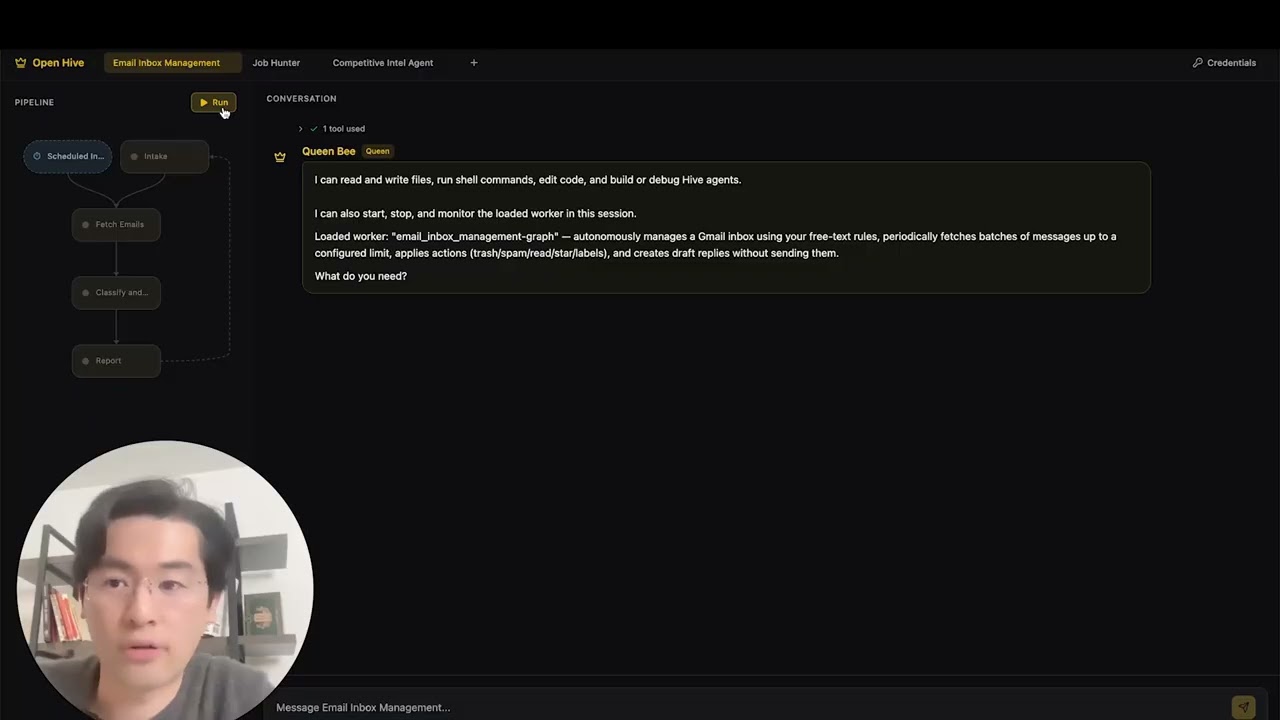

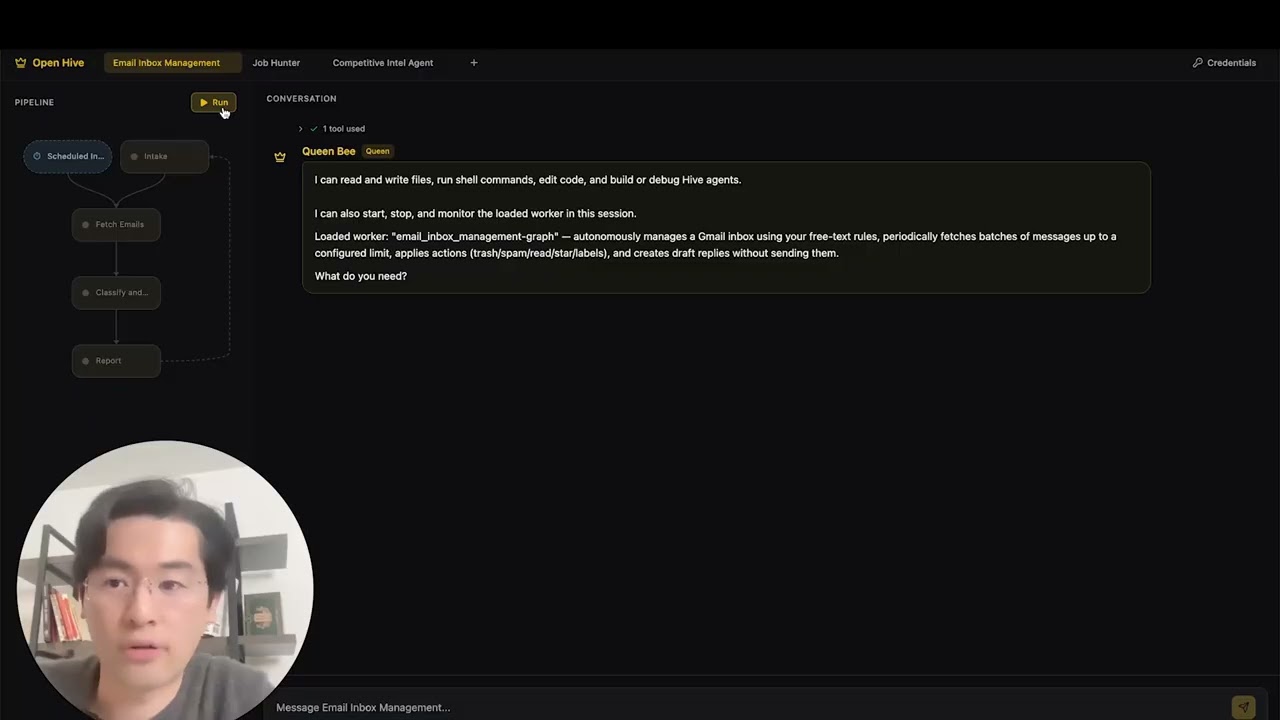

Now you can run an agent by selecting it (either an existing agent or an example agent). Click the Run button at the top left, or ask the queen agent to run it for you.

|

||||

|

||||

Hive includes native support for [OpenAI Codex CLI](https://github.com/openai/codex) (v0.101.0+).

|

||||

|

||||

1. **Config:** `.codex/config.toml` with `agent-builder` MCP server (tracked in git)

|

||||

2. **Skills:** `.agents/skills/` symlinks to Hive skills (tracked in git)

|

||||

3. **Launch:** Run `codex` in the repo root, then type `use hive`

|

||||

|

||||

Example:

|

||||

|

||||

```

|

||||

codex> use hive

|

||||

```

|

||||

|

||||

### Opencode

|

||||

|

||||

Hive includes native support for [Opencode](https://github.com/opencode-ai/opencode).

|

||||

|

||||

1. **Setup:** Run the quickstart script

|

||||

2. **Launch:** Open Opencode in the project root.

|

||||

3. **Activate:** Type `/hive` in the chat to switch to the Hive Agent.

|

||||

4. **Verify:** Ask the agent _"List your tools"_ to confirm the connection.

|

||||

|

||||

The agent has access to all Hive skills and can scaffold agents, add tools, and debug workflows directly from the chat.

|

||||

|

||||

**[📖 Complete Setup Guide](docs/environment-setup.md)** - Detailed instructions for agent development

|

||||

|

||||

### Antigravity IDE Support

|

||||

|

||||

Skills and MCP servers are also available in [Antigravity IDE](https://antigravity.google/) (Google's AI-powered IDE). **Easiest:** open a terminal in the hive repo folder and run (use `./` — the script is inside the repo):

|

||||

|

||||

```bash

|

||||

./scripts/setup-antigravity-mcp.sh

|

||||

```

|

||||

|

||||

**Important:** Always restart/refresh Antigravity IDE after running the setup script—MCP servers only load on startup. After restart, **agent-builder** and **tools** MCP servers should connect. Skills are under `.agent/skills/` (symlinks to `.claude/skills/`). See [docs/antigravity-setup.md](docs/antigravity-setup.md) for manual setup and troubleshooting.

|

||||

<img width="2500" height="1214" alt="Image" src="https://github.com/user-attachments/assets/71c38206-2ad5-49aa-bde8-6698d0bc55f5" />

|

||||

|

||||

## Features

|

||||

|

||||

- **[Goal-Driven Development](docs/key_concepts/goals_outcome.md)** - Define objectives in natural language; the coding agent generates the agent graph and connection code to achieve them

|

||||

- **Browser-Use** - Control the browser on your computer to achieve hard tasks

|

||||

- **Parallel Execution** - Execute the generated graph in parallel, so multiple agents can complete jobs for you at the same time

|

||||

- **[Goal-Driven Generation](docs/key_concepts/goals_outcome.md)** - Define objectives in natural language; the coding agent generates the agent graph and connection code to achieve them

|

||||

- **[Adaptiveness](docs/key_concepts/evolution.md)** - Framework captures failures, calibrates according to the objectives, and evolves the agent graph

|

||||

- **[Dynamic Node Connections](docs/key_concepts/graph.md)** - No predefined edges; connection code is generated by any capable LLM based on your goals

|

||||

- **SDK-Wrapped Nodes** - Every node gets shared memory, local RLM memory, monitoring, tools, and LLM access out of the box

|

||||

- **[Human-in-the-Loop](docs/key_concepts/graph.md#human-in-the-loop)** - Intervention nodes that pause execution for human input with configurable timeouts and escalation

|

||||

- **Real-time Observability** - WebSocket streaming for live monitoring of agent execution, decisions, and node-to-node communication

|

||||

- **Interactive TUI Dashboard** - Terminal-based dashboard with live graph view, event log, and chat interface for agent interaction

|

||||

- **Cost & Budget Control** - Set spending limits, throttles, and automatic model degradation policies

|

||||

- **Production-Ready** - Self-hostable, built for scale and reliability

|

||||

|

||||

## Integration

|

||||

@@ -240,35 +205,10 @@ flowchart LR

|

||||

4. **Control Plane Monitors** → Real-time metrics, budget enforcement, policy management

|

||||

5. **[Adaptiveness](docs/key_concepts/evolution.md)** → On failure, the system evolves the graph and redeploys automatically

|

||||

|

||||

## Run Agents

|

||||

|

||||

The `hive` CLI is the primary interface for running agents.

|

||||

|

||||

```bash

|

||||

# Browse and run agents interactively (Recommended)

|

||||

hive tui

|

||||

|

||||

# Run a specific agent directly

|

||||

hive run exports/my_agent --input '{"task": "Your input here"}'

|

||||

|

||||

# Run a specific agent with the TUI dashboard

|

||||

hive run exports/my_agent --tui

|

||||

|

||||

# Interactive REPL

|

||||

hive shell

|

||||

```

|

||||

|

||||

The TUI scans both `exports/` and `examples/templates/` for available agents.

|

||||

|

||||

> **Using Python directly (alternative):** You can also run agents with `PYTHONPATH=exports uv run python -m agent_name run --input '{...}'`

|

||||

|

||||

See [environment-setup.md](docs/environment-setup.md) for complete setup instructions.

|

||||

|

||||

## Documentation

|

||||

|

||||

- **[Developer Guide](docs/developer-guide.md)** - Comprehensive guide for developers

|

||||

- [Getting Started](docs/getting-started.md) - Quick setup instructions

|

||||

- [TUI Guide](docs/tui-selection-guide.md) - Interactive dashboard usage

|

||||

- [Configuration Guide](docs/configuration.md) - All configuration options

|

||||

- [Architecture Overview](docs/architecture/README.md) - System design and structure

|

||||

|

||||

@@ -435,7 +375,7 @@ This project is licensed under the Apache License 2.0 - see the [LICENSE](LICENS

|

||||

|

||||

**Q: What LLM providers does Hive support?**

|

||||

|

||||

Hive supports 100+ LLM providers through LiteLLM integration, including OpenAI (GPT-4, GPT-4o), Anthropic (Claude models), Google Gemini, DeepSeek, Mistral, Groq, and many more. Simply set the appropriate API key environment variable and specify the model name.

|

||||

Hive supports 100+ LLM providers through LiteLLM integration, including OpenAI (GPT-4, GPT-4o), Anthropic (Claude models), Google Gemini, DeepSeek, Mistral, Groq, and many more. Simply set the appropriate API key environment variable and specify the model name. We recommend using Claude, GLM and Gemini as they have the best performance.

|

||||

|

||||

**Q: Can I use Hive with local AI models like Ollama?**

|

||||

|

||||

@@ -477,14 +417,6 @@ Visit [docs.adenhq.com](https://docs.adenhq.com/) for complete guides, API refer

|

||||

|

||||

Contributions are welcome! Fork the repository, create your feature branch, implement your changes, and submit a pull request. See [CONTRIBUTING.md](CONTRIBUTING.md) for detailed guidelines.

|

||||

|

||||

**Q: When will my team start seeing results from Aden's adaptive agents?**

|

||||

|

||||

Aden's adaptation loop begins working from the first execution. When an agent fails, the framework captures the failure data, helping developers evolve the agent graph through the coding agent. How quickly this translates to measurable results depends on the complexity of your use case, the quality of your goal definitions, and the volume of executions generating feedback.

|

||||

|

||||

**Q: How does Hive compare to other agent frameworks?**

|

||||

|

||||

Hive focuses on generating agents that run real business processes, rather than generic agents. This vision emphasizes outcome-driven design, adaptability, and an easy-to-use set of tools and integrations.

|

||||

|

||||

---

|

||||

|

||||

<p align="center">

|

||||

|

||||

+1

-1

@@ -64,7 +64,7 @@ To use the agent builder with Claude Desktop or other MCP clients, add this to y

|

||||

"agent-builder": {

|

||||

"command": "python",

|

||||

"args": ["-m", "framework.mcp.agent_builder_server"],

|

||||

"cwd": "/path/to/goal-agent"

|

||||

"cwd": "/path/to/hive/core"

|

||||

}

|

||||

}

|

||||

}

|

||||

|

||||

+4

-2

@@ -15,6 +15,7 @@ import base64

|

||||

import hashlib

|

||||

import http.server

|

||||

import json

|

||||

import os

|

||||

import platform

|

||||

import secrets

|

||||

import subprocess

|

||||

@@ -150,8 +151,9 @@ def save_credentials(token_data: dict, account_id: str) -> None:

|

||||

if "id_token" in token_data:

|

||||

auth_data["tokens"]["id_token"] = token_data["id_token"]

|

||||

|

||||

CODEX_AUTH_FILE.parent.mkdir(parents=True, exist_ok=True)

|

||||

with open(CODEX_AUTH_FILE, "w") as f:

|

||||

CODEX_AUTH_FILE.parent.mkdir(parents=True, exist_ok=True, mode=0o700)

|

||||

fd = os.open(CODEX_AUTH_FILE, os.O_WRONLY | os.O_CREAT | os.O_TRUNC, 0o600)

|

||||

with os.fdopen(fd, "w") as f:

|

||||

json.dump(auth_data, f, indent=2)
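The `save_credentials` change above replaces a plain `open()` with `os.open()` and an explicit mode, so the token file is created readable and writable by its owner only. A minimal standalone sketch of that pattern follows. `write_private_json` is a hypothetical helper name used for illustration; it is not a function in the repository.

```python
import json
import os
from pathlib import Path


def write_private_json(path: Path, data: dict) -> None:
    """Write JSON to `path` with owner-only permissions (hypothetical helper)."""
    # mode=0o700 applies to the final directory only; missing parents
    # are created with default permissions.
    path.parent.mkdir(parents=True, exist_ok=True, mode=0o700)
    # The 0o600 mode is applied only if os.open() creates the file;
    # the process umask can clear bits but never add any.
    fd = os.open(path, os.O_WRONLY | os.O_CREAT | os.O_TRUNC, 0o600)
    with os.fdopen(fd, "w") as f:
        json.dump(data, f, indent=2)
```

Two caveats apply to this sketch: a file that already exists keeps its previous permissions, because the mode argument is used only at creation time, and `Path.mkdir` ignores `mode` for any intermediate directories it creates.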

|

||||

|

||||

|

||||

|

||||

@@ -7,19 +7,38 @@ from framework.graph import NodeSpec

|

||||

# Load reference docs at import time so they're always in the system prompt.

|

||||

# No voluntary read_file() calls needed — the LLM gets everything upfront.

|

||||

_ref_dir = Path(__file__).parent.parent / "reference"

|

||||

_framework_guide = (_ref_dir / "framework_guide.md").read_text(encoding="utf-8")

|

||||

_file_templates = (_ref_dir / "file_templates.md").read_text(encoding="utf-8")

|

||||

_anti_patterns = (_ref_dir / "anti_patterns.md").read_text(encoding="utf-8")

|

||||

_framework_guide = (_ref_dir / "framework_guide.md").read_text()

|

||||

_file_templates = (_ref_dir / "file_templates.md").read_text()

|

||||

_anti_patterns = (_ref_dir / "anti_patterns.md").read_text()

|

||||

_gcu_guide_path = _ref_dir / "gcu_guide.md"

|

||||

_gcu_guide = _gcu_guide_path.read_text() if _gcu_guide_path.exists() else ""

|

||||

|

||||

|

||||

def _is_gcu_enabled() -> bool:

|

||||

try:

|

||||

from framework.config import get_gcu_enabled

|

||||

|

||||

return get_gcu_enabled()

|

||||

except Exception:

|

||||

return False

|

||||

|

||||

|

||||

def _build_appendices() -> str:

|

||||

parts = (

|

||||

"\n\n# Appendix: Framework Reference\n\n"

|

||||

+ _framework_guide

|

||||

+ "\n\n# Appendix: File Templates\n\n"

|

||||

+ _file_templates

|

||||

+ "\n\n# Appendix: Anti-Patterns\n\n"

|

||||

+ _anti_patterns

|

||||

)

|

||||

if _is_gcu_enabled() and _gcu_guide:

|

||||

parts += "\n\n# Appendix: GCU Browser Automation Guide\n\n" + _gcu_guide

|

||||

return parts

|

||||

|

||||

|

||||

# Shared appendices — appended to every coding node's system prompt.

|

||||

_appendices = (

|

||||

"\n\n# Appendix: Framework Reference\n\n"

|

||||

+ _framework_guide

|

||||

+ "\n\n# Appendix: File Templates\n\n"

|

||||

+ _file_templates

|

||||

+ "\n\n# Appendix: Anti-Patterns\n\n"

|

||||

+ _anti_patterns

|

||||

)

|

||||

_appendices = _build_appendices()

|

||||

|

||||

# Tools available to both coder (worker) and queen.

|

||||

_SHARED_TOOLS = [

|

||||

@@ -391,7 +410,10 @@ If list_agent_tools() shows these don't exist, use alternatives \

|

||||

**Node rules**:

|

||||

- **2-4 nodes MAX.** Never exceed 4. Merge thin nodes aggressively.

|

||||

- A node with 0 tools is NOT a real node — merge it.

|

||||

- node_type always "event_loop"

|

||||

- node_type "event_loop" for all regular graph nodes. Use "gcu" ONLY for

|

||||

browser automation subagents (see GCU appendix). GCU nodes MUST be in a

|

||||

parent node's sub_agents list, NEVER connected via edges, and NEVER used

|

||||

as entry/terminal nodes.

|

||||

- max_node_visits default is 0 (unbounded) — correct for forever-alive. \

|

||||

Only set >0 in one-shot agents with bounded feedback loops.

|

||||

- Feedback inputs: nullable_output_keys

|

||||

@@ -539,6 +561,11 @@ critical issue. Use sparingly.

|

||||

this session. If a worker is already loaded, it is automatically unloaded \

|

||||

first. Call after building and validating an agent to make it available \

|

||||

immediately.

|

||||

|

||||

## Credentials

|

||||

- list_credentials(credential_id?) — List all authorized credentials in the \

|

||||

local store. Returns IDs, aliases, status, and identity metadata (never \

|

||||

secrets). Optionally filter by credential_id.

|

||||

"""

|

||||

|

||||

_queen_behavior = """

|

||||

@@ -589,14 +616,29 @@ If NO worker is loaded, say so and offer to build one.

|

||||

- For tasks matching the worker's goal, call start_worker(task).

|

||||

- For everything else, do it directly.

|

||||

|

||||

## When the user clicks Run (external event notification)

|

||||

When you receive an event that the user clicked Run:

|

||||

- If the worker started successfully, briefly acknowledge it — do NOT \

|

||||

repeat the full status. The user can see the graph is running.

|

||||

- If the worker failed to start (credential or structural error), \

|

||||

explain the problem clearly and help fix it. For credential errors, \

|

||||

guide the user to set up the missing credentials. For structural \

|

||||

issues, offer to fix the agent graph directly.

|

||||

|

||||

## When worker is running:

|

||||

- If the user asks about progress, call get_worker_status().

|

||||

- If the user asks about progress, call get_worker_status() ONCE and \

|

||||

report the result. Do NOT poll in a loop.

|

||||

- NEVER call get_worker_status() repeatedly without user input in between. \

|

||||

The worker will surface results through client-facing nodes. You do not \

|

||||

need to monitor it. One check per user request is enough.

|

||||

- If the user has a concern or instruction for the worker, call \

|

||||

inject_worker_message(content) to relay it.

|

||||

- You can still do coding tasks directly while the worker runs.

|

||||

- If an escalation ticket arrives from the judge, assess severity:

|

||||

- Low/transient: acknowledge silently, do not disturb the user.

|

||||

- High/critical: notify the user with a brief analysis and suggested action.

|

||||

- After starting the worker or checking its status, WAIT for the user's \

|

||||

next message. Do not take autonomous actions unless the user asks.

|

||||

|

||||

## When worker asks user a question:

|

||||

- The system will route the user's response directly to the worker. \

|

||||

@@ -778,6 +820,8 @@ queen_node = NodeSpec(

|

||||

"notify_operator",

|

||||

# Agent loading

|

||||

"load_built_agent",

|

||||

# Credentials

|

||||

"list_credentials",

|

||||

],

|

||||

system_prompt=(

|

||||

"You are the Queen — the user's primary interface. You are a coding agent "

|

||||

@@ -803,6 +847,8 @@ ALL_QUEEN_TOOLS = _SHARED_TOOLS + [

|

||||

"notify_operator",

|

||||

# Agent loading

|

||||

"load_built_agent",

|

||||

# Credentials

|

||||

"list_credentials",

|

||||

]

|

||||

|

||||

__all__ = [

|

||||

|

||||

@@ -105,3 +105,7 @@ def test_research_routes_back_to_interact(self):

|

||||

23. **Forgetting sys.path setup in conftest.py** — Tests need `exports/` and `core/` on sys.path.

|

||||

|

||||

24. **Not using auto_responder for client-facing nodes** — Tests with client-facing nodes hang without an auto-responder that injects input. But note: even WITH auto_responder, forever-alive agents still hang because the graph never terminates. Auto-responder only helps for agents with terminal nodes.

|

||||

|

||||

25. **Manually wiring browser tools on event_loop nodes** — If the agent needs browser automation, use `node_type="gcu"` which auto-includes all browser tools and prepends best-practices guidance. Do NOT manually list browser tool names on event_loop nodes — they may not exist in the MCP server or may be incomplete. See the GCU Guide appendix.

|

||||

|

||||

26. **Using GCU nodes as regular graph nodes** — GCU nodes (`node_type="gcu"`) are exclusively subagents. They must ONLY appear in a parent node's `sub_agents=["gcu-node-id"]` list and be invoked via `delegate_to_sub_agent()`. They must NEVER be connected via edges, used as entry nodes, or used as terminal nodes. If a GCU node appears as an edge source or target, the graph will fail pre-load validation.
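The rule in item 26 can be checked mechanically. The sketch below is an illustration under stated assumptions, not the framework's pre-load validator: `Node` is a hypothetical stand-in for `NodeSpec` that carries only the fields needed here, edges are plain `(source, target)` tuples, and `find_gcu_violations` is an invented name.

```python
from dataclasses import dataclass, field


@dataclass
class Node:
    # Hypothetical stand-in for NodeSpec; only the fields this check needs.
    id: str
    node_type: str = "event_loop"
    sub_agents: list[str] = field(default_factory=list)


def find_gcu_violations(nodes, edges, entry_node, terminal_nodes=()):
    """Return human-readable problems with how GCU nodes are wired."""
    gcu_ids = {n.id for n in nodes if n.node_type == "gcu"}
    declared = {sid for n in nodes for sid in n.sub_agents}
    problems = []
    # GCU nodes must never be an edge source or target.
    for source, target in edges:
        for end in (source, target):
            if end in gcu_ids:
                problems.append(f"GCU node '{end}' is used on edge {source} -> {target}")
    # GCU nodes must never be entry or terminal nodes.
    if entry_node in gcu_ids:
        problems.append(f"GCU node '{entry_node}' is the entry node")
    for terminal in terminal_nodes:
        if terminal in gcu_ids:
            problems.append(f"GCU node '{terminal}' is a terminal node")
    # Every GCU node must be listed in some parent's sub_agents.
    for gid in sorted(gcu_ids - declared):
        problems.append(f"GCU node '{gid}' is not listed in any parent's sub_agents")
    return problems
```

An empty list means the graph follows the subagent pattern; each string otherwise names one violation.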

|

||||

|

||||

@@ -72,7 +72,7 @@ goal = Goal(

|

||||

| id | str | required | kebab-case identifier |

|

||||

| name | str | required | Display name |

|

||||

| description | str | required | What the node does |

|

||||

| node_type | str | required | Always `"event_loop"` |

|

||||

| node_type | str | required | `"event_loop"` or `"gcu"` (browser automation — see GCU Guide appendix) |

|

||||

| input_keys | list[str] | required | Memory keys this node reads |

|

||||

| output_keys | list[str] | required | Memory keys this node writes via set_output |

|

||||

| system_prompt | str | "" | LLM instructions |

|

||||

|

||||

@@ -0,0 +1,119 @@

|

||||

# GCU Browser Automation Guide

|

||||

|

||||

## When to Use GCU Nodes

|

||||

|

||||

Use `node_type="gcu"` when:

|

||||

- The user's workflow requires **navigating real websites** (scraping, form-filling, social media interaction, testing web UIs)

|

||||

- The task involves **dynamic/JS-rendered pages** that `web_scrape` cannot handle (SPAs, infinite scroll, login-gated content)

|

||||

- The agent needs to **interact with a website** — clicking, typing, scrolling, selecting, uploading files

|

||||

|

||||

Do NOT use GCU for:

|

||||

- Static content that `web_scrape` handles fine

|

||||

- API-accessible data (use the API directly)

|

||||

- PDF/file processing

|

||||

- Anything that doesn't require a browser UI

|

||||

|

||||

## What GCU Nodes Are

|

||||

|

||||

- `node_type="gcu"` — a declarative enhancement over `event_loop`

|

||||

- Framework auto-prepends browser best-practices system prompt

|

||||

- Framework auto-includes all 31 browser tools from `gcu-tools` MCP server

|

||||

- Same underlying `EventLoopNode` class — no new imports needed

|

||||

- `tools=[]` is correct — tools are auto-populated at runtime

|

||||

|

||||

## GCU Architecture Pattern

|

||||

|

||||

GCU nodes are **subagents** — invoked via `delegate_to_sub_agent()`, not connected via edges.

|

||||

|

||||

- Primary nodes (`event_loop`, client-facing) orchestrate; GCU nodes do browser work

|

||||

- Parent node declares `sub_agents=["gcu-node-id"]` and calls `delegate_to_sub_agent(agent_id="gcu-node-id", task="...")`

|

||||

- GCU nodes set `max_node_visits=1` (single execution per delegation), `client_facing=False`

|

||||

- GCU nodes use `output_keys=["result"]` and return structured JSON via `set_output("result", ...)`

|

||||

|

||||

## GCU Node Definition Template

|

||||

|

||||

```python

|

||||

gcu_browser_node = NodeSpec(

|

||||

id="gcu-browser-worker",

|

||||

name="Browser Worker",

|

||||

description="Browser subagent that does X.",

|

||||

node_type="gcu",

|

||||

client_facing=False,

|

||||

max_node_visits=1,

|

||||

input_keys=[],

|

||||

output_keys=["result"],

|

||||

tools=[], # Auto-populated with all browser tools

|

||||

system_prompt="""\

|

||||

You are a browser agent. Your job: [specific task].

|

||||

|

||||

## Workflow

|

||||

1. browser_start (only if no browser is running yet)

|

||||

2. browser_open(url=TARGET_URL) — note the returned targetId

|

||||

3. browser_snapshot to read the page

|

||||

4. [task-specific steps]

|

||||

5. set_output("result", JSON)

|

||||

|

||||

## Output format

|

||||

set_output("result", JSON) with:

|

||||

- [field]: [type and description]

|

||||

""",

|

||||

)

|

||||

```

|

||||

|

||||

## Parent Node Template (orchestrating GCU subagents)

|

||||

|

||||

```python

|

||||

orchestrator_node = NodeSpec(

|

||||

id="orchestrator",

|

||||

...

|

||||

node_type="event_loop",

|

||||

sub_agents=["gcu-browser-worker"],

|

||||

system_prompt="""\

|

||||

...

|

||||

delegate_to_sub_agent(

|

||||

agent_id="gcu-browser-worker",

|

||||

task="Navigate to [URL]. Do [specific task]. Return JSON with [fields]."

|

||||

)

|

||||

...

|

||||

""",

|

||||

tools=[], # Orchestrator doesn't need browser tools

|

||||

)

|

||||

```

|

||||

|

||||

## mcp_servers.json with GCU

|

||||

|

||||

```json

|

||||

{

|

||||

"hive-tools": { ... },

|

||||

"gcu-tools": {

|

||||

"transport": "stdio",

|

||||

"command": "uv",

|

||||

"args": ["run", "python", "-m", "gcu.server", "--stdio"],

|

||||

"cwd": "../../tools",

|

||||

"description": "GCU tools for browser automation"

|

||||

}

|

||||

}

|

||||

```

|

||||

|

||||

Note: `gcu-tools` is auto-added if any node uses `node_type="gcu"`, but including it explicitly is fine.

|

||||

|

||||

## GCU System Prompt Best Practices

|

||||

|

||||

Key rules to bake into GCU node prompts:

|

||||

|

||||

- Prefer `browser_snapshot` over `browser_get_text("body")` — compact accessibility tree vs 100KB+ raw HTML

|

||||

- Always `browser_wait` after navigation

|

||||

- Use large scroll amounts (~2000-5000) for lazy-loaded content

|

||||

- For spillover files, use `run_command` with grep, not `read_file`

|

||||

- If auth wall detected, report immediately — don't attempt login

|

||||

- Keep tool calls per turn ≤10

|

||||

- Tab isolation: when browser is already running, use `browser_open(background=true)` and pass `target_id` to every call

|

||||

|

||||

## GCU Anti-Patterns

|

||||

|

||||

- Using `browser_screenshot` to read text (use `browser_snapshot`)

|

||||

- Re-navigating after scrolling (resets scroll position)

|

||||

- Attempting login on auth walls

|

||||

- Forgetting `target_id` in multi-tab scenarios

|

||||

- Putting browser tools directly on `event_loop` nodes instead of using GCU subagent pattern

|

||||

- Making GCU nodes `client_facing=True` (they should be autonomous subagents)

|

||||

@@ -90,6 +90,11 @@ def get_api_key() -> str | None:

|

||||

return None

|

||||

|

||||

|

||||

def get_gcu_enabled() -> bool:

|

||||

"""Return whether GCU (browser automation) is enabled in user config."""

|

||||

return get_hive_config().get("gcu_enabled", False)

|

||||

|

||||

|

||||

def get_api_base() -> str | None:

|

||||

"""Return the api_base URL for OpenAI-compatible endpoints, if configured."""

|

||||

llm = get_hive_config().get("llm", {})

|

||||

|

||||

@@ -159,11 +159,7 @@ class CredentialValidationResult:

|

||||

f" {c.env_var} for {_label(c)}"

|

||||

f"\n Connect this integration at hive.adenhq.com first."

|

||||

)

|

||||

lines.append(

|

||||

"\nTo fix: run /hive-credentials in Claude Code."

|

||||

"\nIf you've already set up credentials, "

|

||||

"restart your terminal to load them."

|

||||

)

|

||||

lines.append("\nIf you've already set up credentials, restart your terminal to load them.")

|

||||

return "\n".join(lines)

|

||||

|

||||

|

||||

|

||||

@@ -107,17 +107,38 @@ _TC_ARG_LIMIT = 200 # max chars per tool_call argument after compaction

|

||||

def _compact_tool_calls(tool_calls: list[dict[str, Any]]) -> list[dict[str, Any]]:

|

||||

"""Truncate tool_call arguments to save context tokens during compaction.

|

||||

|

||||

Preserves ``id``, ``type``, and ``function.name`` exactly. Truncates

|

||||

``function.arguments`` (a JSON string) to at most ``_TC_ARG_LIMIT`` chars

|

||||

so that large payloads (e.g. set_output with full findings) don't survive

|

||||

compaction and defeat the purpose of context reduction.

|

||||

Preserves ``id``, ``type``, and ``function.name`` exactly. When arguments

|

||||

exceed ``_TC_ARG_LIMIT``, replaces the full JSON string with a compact

|

||||

**valid** JSON summary. The Anthropic API parses tool_call arguments and

|

||||

rejects requests with malformed JSON (e.g. unterminated strings), so we

|

||||

must never produce broken JSON here.

|

||||

"""

|

||||

compact = []

|

||||

for tc in tool_calls:

|

||||

func = tc.get("function", {})

|

||||

args = func.get("arguments", "")

|

||||

if len(args) > _TC_ARG_LIMIT:

|

||||

args = args[:_TC_ARG_LIMIT] + "…[truncated]"

|

||||

# Build a valid JSON summary instead of slicing mid-string.

|

||||

# Try to extract top-level keys for a meaningful preview.

|

||||

try:

|

||||

parsed = json.loads(args)

|

||||

if isinstance(parsed, dict):

|

||||

# Preserve key names, truncate values

|

||||

summary_parts = []

|

||||

for k, v in parsed.items():

|

||||

v_str = str(v)

|

||||

if len(v_str) > 60:

|

||||

v_str = v_str[:60] + "..."

|

||||

summary_parts.append(f"{k}={v_str}")

|

||||

summary = ", ".join(summary_parts)

|

||||

if len(summary) > _TC_ARG_LIMIT:

|

||||

summary = summary[:_TC_ARG_LIMIT] + "..."

|

||||

args = json.dumps({"_compacted": summary})

|

||||

else:

|

||||

args = json.dumps({"_compacted": str(parsed)[:_TC_ARG_LIMIT]})

|

||||

except (json.JSONDecodeError, TypeError):

|

||||

# Args were already invalid JSON — wrap the preview safely

|

||||

args = json.dumps({"_compacted": args[:_TC_ARG_LIMIT]})

|

||||

compact.append(

|

||||

{

|

||||

"id": tc.get("id", ""),

|

||||

|

||||
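The core of the `_compact_tool_calls` change is that oversized arguments are replaced with a small, valid JSON document instead of being sliced mid-string. The following standalone sketch isolates that step so it can be tried on its own. `compact_arguments` and `ARG_LIMIT` are illustrative names that mirror the logic in the hunk above; they are not part of the framework.

```python
import json

ARG_LIMIT = 200  # mirrors _TC_ARG_LIMIT in the hunk above


def compact_arguments(args: str, limit: int = ARG_LIMIT) -> str:
    """Shrink a tool_call arguments string while keeping it valid JSON."""
    if len(args) <= limit:
        return args  # small payloads pass through untouched
    try:
        parsed = json.loads(args)
    except (json.JSONDecodeError, TypeError):
        # Already invalid JSON: wrap a preview so the result still parses.
        return json.dumps({"_compacted": args[:limit]})
    if isinstance(parsed, dict):
        pieces = []
        for key, value in parsed.items():
            text = str(value)
            if len(text) > 60:
                text = text[:60] + "..."
            pieces.append(f"{key}={text}")
        summary = ", ".join(pieces)
        if len(summary) > limit:
            summary = summary[:limit] + "..."
        return json.dumps({"_compacted": summary})
    # Lists, strings, numbers: keep a truncated string preview.
    return json.dumps({"_compacted": str(parsed)[:limit]})
```

Because every long-input branch ends in `json.dumps`, the result always parses, which is the property the docstring says the Anthropic API requires. The old approach, `args[:limit] + "…[truncated]"`, generally left an unterminated string or object.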

@@ -103,7 +103,12 @@ FEEDBACK: (reason if RETRY, empty if ACCEPT)"""

|

||||

|

||||

|

||||

def _extract_recent_context(conversation: NodeConversation, max_messages: int = 10) -> str:

|

||||

"""Extract recent conversation messages for evaluation."""

|

||||

"""Extract recent conversation messages for evaluation.

|

||||

|

||||

Includes tool-call summaries from assistant messages so the judge

|

||||

can see what tools were invoked (especially set_output values) even

|

||||

when the assistant message body is empty.

|

||||

"""

|

||||

messages = conversation.messages

|

||||

recent = messages[-max_messages:] if len(messages) > max_messages else messages

|

||||

|

||||

@@ -112,8 +117,24 @@ def _extract_recent_context(conversation: NodeConversation, max_messages: int =

|

||||

role = msg.role.upper()

|

||||

content = msg.content or ""

|

||||

# Truncate long tool results

|

||||

if msg.role == "tool" and len(content) > 200:

|

||||

content = content[:200] + "..."

|

||||

if msg.role == "tool" and len(content) > 500:

|

||||

content = content[:500] + "..."

|

||||

# For assistant messages with empty content but tool_calls,

|

||||

# summarise the tool calls so the judge knows what happened.

|

||||

if msg.role == "assistant" and not content.strip():

|

||||

tool_calls = getattr(msg, "tool_calls", None)

|

||||

if tool_calls:

|

||||

tc_parts = []

|

||||

for tc in tool_calls:

|

||||

fn = tc.get("function", {}) if isinstance(tc, dict) else {}

|

||||

name = fn.get("name", "")

|

||||

args = fn.get("arguments", "")

|

||||

if name == "set_output":

|

||||

# Show the value so the judge can evaluate content quality

|

||||

tc_parts.append(f" called {name}({args[:1000]})")

|

||||

else:

|

||||

tc_parts.append(f" called {name}(...)")

|

||||

content = "Tool calls:\n" + "\n".join(tc_parts)

|

||||

if content.strip():

|

||||

parts.append(f"[{role}]: {content.strip()}")

|

||||

|

||||
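The tool-call summarisation added to `_extract_recent_context` can be sketched on its own as below. The function name `summarize_tool_calls` and the `max_arg_chars` parameter are hypothetical names introduced for illustration; the 1000-character cap and the special handling of `set_output` mirror the hunk above.

```python
def summarize_tool_calls(tool_calls, max_arg_chars=1000):
    """Render tool calls as text; only set_output arguments are shown."""
    lines = []
    for tc in tool_calls:
        # Non-dict entries are tolerated and rendered with an empty name.
        fn = tc.get("function", {}) if isinstance(tc, dict) else {}
        name = fn.get("name", "")
        args = fn.get("arguments", "")
        if name == "set_output":
            # Expose the value so an evaluator can assess its content.
            lines.append(f"  called {name}({args[:max_arg_chars]})")
        else:
            lines.append(f"  called {name}(...)")
    return "Tool calls:\n" + "\n".join(lines)
```

For an assistant message with an empty body, a summary like this gives the judge something to evaluate instead of silently dropping the turn.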

@@ -125,6 +146,10 @@ def _format_outputs(accumulator_state: dict[str, Any]) -> str:

|

||||

|

||||

Lists and dicts get structural formatting so the judge can assess

|

||||

quantity and structure, not just a truncated stringification.

|

||||

|

||||

String values are given a generous limit (2000 chars) so the judge

|

||||

can verify substantive content (e.g. a research brief with key

|

||||

questions, scope boundaries, and deliverables).

|

||||

"""

|

||||

if not accumulator_state:

|

||||

return "(none)"

|

||||

@@ -144,12 +169,12 @@ def _format_outputs(accumulator_state: dict[str, Any]) -> str:

|

||||

val_str += f"\n ... and {len(value) - 8} more"

|

||||

elif isinstance(value, dict):

|

||||

val_str = str(value)

|

||||

if len(val_str) > 400:

|

||||

val_str = val_str[:400] + "..."

|

||||

if len(val_str) > 2000:

|

||||

val_str = val_str[:2000] + "..."

|

||||

else:

|

||||

val_str = str(value)

|

||||

if len(val_str) > 300:

|

||||

val_str = val_str[:300] + "..."

|

||||

if len(val_str) > 2000:

|

||||

val_str = val_str[:2000] + "..."

|

||||

parts.append(f" {key}: {val_str}")

|

||||

return "\n".join(parts)

|

||||

|

||||

|

||||
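The limit change in `_format_outputs` (400 and 300 characters raised to 2000) amounts to the following truncation rule for dict and scalar values. This is a simplified sketch; `truncate_for_judge` is a hypothetical helper name, and the list branch of the real function, which formats the first items individually, is not reproduced here.

```python
def truncate_for_judge(value, limit=2000):
    """Stringify a value and cap it at `limit` characters plus an ellipsis."""
    text = str(value)
    if len(text) > limit:
        text = text[:limit] + "..."
    return text
```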

@@ -338,6 +338,10 @@ class AsyncEntryPointSpec(BaseModel):

|

||||

max_concurrent: int = Field(

|

||||

default=10, description="Maximum concurrent executions for this entry point"

|

||||

)

|

||||

max_resurrections: int = Field(

|

||||

default=3,

|

||||

description="Auto-restart on non-fatal failure (0 to disable)",

|

||||

)

|

||||

|

||||

model_config = {"extra": "allow"}

|

||||

|

||||

@@ -503,45 +507,6 @@ class GraphSpec(BaseModel):

|

||||

"""Get all edges entering a node."""

|

||||

return [e for e in self.edges if e.target == node_id]

|

||||

|

||||

def build_capability_summary(self, from_node_id: str) -> str:

|

||||

"""Build a summary of the agent's downstream workflow phases and tools.

|

||||

|

||||

Walks the graph from *from_node_id* and collects all reachable nodes

|

||||

(excluding the starting node itself) so that client-facing entry nodes

|

||||

can inform the user about what the overall agent is capable of.

|

||||

|

||||

Returns:

|

||||

A formatted string listing each downstream node's name,

|

||||

description, and tools — or an empty string when there are

|

||||

no downstream nodes.

|

||||

"""

|

||||

reachable: list[Any] = []

|

||||

visited: set[str] = set()

|

||||

queue = [from_node_id]

|

||||

while queue:

|

||||

nid = queue.pop()

|

||||

if nid in visited:

|

||||

continue

|

||||

visited.add(nid)

|

||||

node = self.get_node(nid)

|

||||

if node and nid != from_node_id:

|

||||

reachable.append(node)

|

||||

for edge in self.get_outgoing_edges(nid):

|

||||

queue.append(edge.target)

|

||||

|

||||

if not reachable:

|

||||

return ""

|

||||

|

||||

lines = [

|

||||

"## Agent Capabilities",

|

||||

"This agent has the following workflow phases and tools:",

|

||||

]

|

||||

for node in reachable:

|

||||

tool_str = f" (tools: {', '.join(node.tools)})" if node.tools else ""

|

||||

lines.append(f"- {node.name}: {node.description}{tool_str}")

|

||||

|

||||

return "\n".join(lines)

|

||||

|

||||

def detect_fan_out_nodes(self) -> dict[str, list[str]]:

|

||||

"""

|

||||

Detect nodes that fan-out to multiple targets.

|

||||

@@ -683,6 +648,13 @@ class GraphSpec(BaseModel):

|

||||

for edge in self.get_outgoing_edges(current):

|

||||

to_visit.append(edge.target)

|

||||

|

||||

# Also mark sub-agents as reachable (they're invoked via delegate_to_sub_agent, not edges)

|

||||

for node in self.nodes:

|

||||

if node.id in reachable:

|

||||

sub_agents = getattr(node, "sub_agents", []) or []

|

||||

for sub_agent_id in sub_agents:

|

||||

reachable.add(sub_agent_id)

|

||||

|

||||

# Build set of async entry point nodes for quick lookup

|

||||

async_entry_nodes = {ep.entry_node for ep in self.async_entry_points}

|

||||

|

||||

@@ -734,4 +706,48 @@ class GraphSpec(BaseModel):

|

||||

else:

|

||||

seen_keys[key] = node_id

|

||||

|

||||

# GCU nodes must only be used as subagents

|

||||

gcu_node_ids = {n.id for n in self.nodes if n.node_type == "gcu"}

|

||||

if gcu_node_ids:

|

||||

# GCU nodes must not be entry nodes

|

||||

if self.entry_node in gcu_node_ids:

|

||||

errors.append(

|

||||

f"GCU node '{self.entry_node}' is used as entry node. "

|

||||

"GCU nodes must only be used as subagents via delegate_to_sub_agent()."

|

||||

)

|

||||

|

||||

# GCU nodes must not be terminal nodes

|

||||

for term in self.terminal_nodes:

|

||||

if term in gcu_node_ids:

|

||||

errors.append(

|

||||

f"GCU node '{term}' is used as terminal node. "

|

||||

"GCU nodes must only be used as subagents."

|

||||

)

|

||||

|

||||

# GCU nodes must not be connected via edges

|

||||

for edge in self.edges:

|

||||

if edge.source in gcu_node_ids:

|

||||

errors.append(

|

||||

f"GCU node '{edge.source}' is used as edge source (edge '{edge.id}'). "

|

||||

"GCU nodes must only be used as subagents, not connected via edges."

|

||||

)

|

||||

if edge.target in gcu_node_ids:

|

||||

errors.append(

|

||||

f"GCU node '{edge.target}' is used as edge target (edge '{edge.id}'). "

|

||||

"GCU nodes must only be used as subagents, not connected via edges."

|

||||

)

|

||||

|

||||

# GCU nodes must be referenced in at least one parent's sub_agents

|

||||

referenced_subagents = set()

|

||||

for node in self.nodes:

|

||||

for sa_id in node.sub_agents or []:

|

||||

referenced_subagents.add(sa_id)

|

||||

|

||||

orphaned = gcu_node_ids - referenced_subagents

|

||||

for nid in orphaned:

|

||||

errors.append(

|

||||

f"GCU node '{nid}' is not referenced in any node's sub_agents list. "

|

||||

"GCU nodes must be declared as subagents of a parent node."

|

||||

)

|

||||

|

||||

return errors

|

||||

|

||||
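The four GCU rules added to the validator (not an entry node, not a terminal node, not attached to edges, and referenced by at least one parent's `sub_agents`) can be exercised with a small standalone sketch. The `Node` and `Edge` dataclasses and the `validate_gcu_nodes` function are hypothetical stand-ins for `NodeSpec`, the edge spec, and the `GraphSpec` method; only the fields used by the checks are modelled, and the error messages are shortened.

```python
from dataclasses import dataclass, field


@dataclass
class Node:  # hypothetical stand-in for NodeSpec
    id: str
    node_type: str = "event_loop"
    sub_agents: list = field(default_factory=list)


@dataclass
class Edge:  # hypothetical stand-in for the edge spec
    id: str
    source: str
    target: str


def validate_gcu_nodes(nodes, edges, entry_node, terminal_nodes):
    """Return a list of error strings for misuse of 'gcu' nodes."""
    errors = []
    gcu_ids = {n.id for n in nodes if n.node_type == "gcu"}
    if not gcu_ids:
        return errors
    if entry_node in gcu_ids:
        errors.append(f"GCU node '{entry_node}' is used as entry node.")
    for term in terminal_nodes:
        if term in gcu_ids:
            errors.append(f"GCU node '{term}' is used as terminal node.")
    for edge in edges:
        for end, role in ((edge.source, "source"), (edge.target, "target")):
            if end in gcu_ids:
                errors.append(
                    f"GCU node '{end}' is used as edge {role} (edge '{edge.id}')."
                )
    # Every GCU node must be declared in some parent's sub_agents list.
    referenced = {sa for n in nodes for sa in n.sub_agents}
    for nid in sorted(gcu_ids - referenced):
        errors.append(f"GCU node '{nid}' is not referenced in any sub_agents list.")
    return errors
```

A graph where `planner` lists `browser` in `sub_agents` and no edge touches `browser` passes; wiring `browser` in through an edge or leaving it unreferenced produces one error per violated rule.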

+1144

-126

File diff suppressed because it is too large


@@ -193,6 +193,9 @@ class GraphExecutor:

|

||||

# Pause/resume control

|

||||

self._pause_requested = asyncio.Event()

|

||||

|

||||

# Track the currently executing node for external injection routing

|

||||

self.current_node_id: str | None = None

|

||||

|

||||

def _write_progress(

|

||||

self,

|

||||

current_node: str,

|

||||

@@ -338,6 +341,9 @@ class GraphExecutor:

|

||||

cumulative_tool_names: set[str] = set()

|

||||

cumulative_output_keys: list[str] = [] # Output keys from all visited nodes

|

||||

|

||||

# Build node registry for subagent lookup

|

||||

node_registry: dict[str, NodeSpec] = {node.id: node for node in graph.nodes}

|

||||

|

||||

# Initialize checkpoint store if checkpointing is enabled

|

||||

checkpoint_store: CheckpointStore | None = None

|

||||

if checkpoint_config and checkpoint_config.enabled and self._storage_path:

|

||||

@@ -694,6 +700,9 @@ class GraphExecutor:

|

||||

# Execute this node, then pause

|

||||

# (We'll check again after execution and save state)

|

||||

|

||||

# Expose current node for external injection routing

|

||||

self.current_node_id = current_node_id

|

||||

|

||||

self.logger.info(f"\n▶ Step {steps}: {node_spec.name} ({node_spec.node_type})")

|

||||

self.logger.info(f" Inputs: {node_spec.input_keys}")

|

||||

self.logger.info(f" Outputs: {node_spec.output_keys}")

|

||||

@@ -729,6 +738,7 @@ class GraphExecutor:

|

||||

override_tools=cumulative_tools if is_continuous else None,

|

||||

cumulative_output_keys=cumulative_output_keys if is_continuous else None,

|

||||

event_triggered=_event_triggered,

|

||||

node_registry=node_registry,

|

||||

identity_prompt=getattr(graph, "identity_prompt", ""),

|

||||

narrative=_resume_narrative,

|

||||

graph=graph,

|

||||

@@ -1131,6 +1141,7 @@ class GraphExecutor:

|

||||

source_result=result,

|

||||

source_node_spec=node_spec,

|

||||

path=path,

|

||||

node_registry=node_registry,

|

||||

)

|

||||

|

||||

total_tokens += branch_tokens

|

||||

@@ -1583,6 +1594,7 @@ class GraphExecutor:

|

||||

event_triggered: bool = False,

|

||||

identity_prompt: str = "",

|

||||

narrative: str = "",

|

||||

node_registry: dict[str, NodeSpec] | None = None,

|

||||

graph: "GraphSpec | None" = None,

|

||||

) -> NodeContext:

|

||||

"""Build execution context for a node."""

|

||||

@@ -1612,17 +1624,7 @@ class GraphExecutor:

|

||||

node_tool_names=node_spec.tools,

|

||||

)

|

||||

|

||||

# Build goal context, enriched with capability summary for

|

||||

# client-facing nodes so the LLM knows what the full agent can do.

|

||||

goal_context = goal.to_prompt_context()

|

||||

if graph and node_spec.client_facing:

|

||||

capability_summary = graph.build_capability_summary(graph.entry_node)

|

||||

if capability_summary:

|

||||

goal_context = (

|

||||

f"{goal_context}\n\n{capability_summary}"

|

||||

if goal_context

|

||||

else capability_summary

|

||||

)

|

||||

|

||||

return NodeContext(

|

||||

runtime=self.runtime,

|

||||

@@ -1646,10 +1648,14 @@ class GraphExecutor:

|

||||

narrative=narrative,

|

||||

execution_id=self._execution_id,

|

||||

stream_id=self._stream_id,

|

||||

node_registry=node_registry or {},

|

||||

all_tools=list(self.tools), # Full catalog for subagent tool resolution

|

||||

shared_node_registry=self.node_registry, # For subagent escalation routing

|

||||

)

|

||||

|

||||

VALID_NODE_TYPES = {

|

||||

"event_loop",

|

||||

"gcu",

|

||||

}

|

||||

# Node types removed in v0.5 — provide migration guidance

|

||||

REMOVED_NODE_TYPES = {

|

||||

@@ -1684,8 +1690,8 @@ class GraphExecutor:

|

||||

f"Must be one of: {sorted(self.VALID_NODE_TYPES)}."

|

||||

)

|

||||

|

||||

# Create based on type (only event_loop is valid)

|

||||

if node_spec.node_type == "event_loop":

|

||||

# Create based on type

|

||||

if node_spec.node_type in ("event_loop", "gcu"):

|

||||

# Auto-create EventLoopNode with sensible defaults.

|

||||

# Custom configs can still be pre-registered via node_registry.

|

||||

from framework.graph.event_loop_node import EventLoopNode, LoopConfig

|

||||

@@ -1902,6 +1908,7 @@ class GraphExecutor:

|

||||

source_result: NodeResult,

|

||||

source_node_spec: Any,

|

||||

path: list[str],

|

||||

node_registry: dict[str, NodeSpec] | None = None,

|

||||

) -> tuple[dict[str, NodeResult], int, int]:

|

||||

"""

|

||||

Execute multiple branches in parallel using asyncio.gather.

|

||||

@@ -2000,7 +2007,13 @@ class GraphExecutor:

|

||||

|

||||

# Build context for this branch

|

||||

ctx = self._build_context(

|

||||

node_spec, memory, goal, mapped, graph.max_tokens, graph=graph

|

||||

node_spec,

|

||||

memory,

|

||||

goal,

|

||||

mapped,

|

||||

graph.max_tokens,

|

||||

node_registry=node_registry,

|

||||

graph=graph,

|

||||

)

|

||||

node_impl = self._get_node_implementation(node_spec, graph.cleanup_llm_model)

|

||||

|

||||

|

||||

@@ -0,0 +1,23 @@

|

||||

"""File tools MCP server constants.

|

||||

|

||||

Analogous to ``gcu.py`` — defines the server name and default stdio config

|

||||

so the runner can auto-register the files MCP server for any agent that has

|

||||

``event_loop`` or ``gcu`` nodes.

|

||||

"""

|

||||

|

||||

# ---------------------------------------------------------------------------

|

||||

# MCP server identity

|

||||

# ---------------------------------------------------------------------------

|

||||

|

||||

FILES_MCP_SERVER_NAME = "files-tools"

|

||||

"""Name used to identify the file tools MCP server in ``mcp_servers.json``."""

|

||||

|

||||

FILES_MCP_SERVER_CONFIG: dict = {

|

||||

"name": FILES_MCP_SERVER_NAME,

|

||||

"transport": "stdio",

|

||||

"command": "uv",

|

||||

"args": ["run", "python", "files_server.py", "--stdio"],

|

||||

"cwd": "../../tools",

|

||||

"description": "File tools for reading, writing, editing, and searching files",

|

||||

}

|

||||

"""Default stdio config for the file tools MCP server (relative to exports/<agent>/)."""

|

||||

@@ -0,0 +1,86 @@

|

||||

"""GCU (browser automation) node type constants.

|

||||

|

||||

A ``gcu`` node is an ``event_loop`` node with two automatic enhancements:

|

||||

1. A canonical browser best-practices system prompt is prepended.

|

||||

2. All tools from the GCU MCP server are auto-included.

|

||||

|

||||

No new ``NodeProtocol`` subclass — the ``gcu`` type is purely a declarative

|

||||

signal processed by the runner and executor at setup time.

|

||||

"""

|

||||

|

||||

# ---------------------------------------------------------------------------

|

||||

# MCP server identity

|

||||

# ---------------------------------------------------------------------------

|

||||

|

||||

GCU_SERVER_NAME = "gcu-tools"

|

||||

"""Name used to identify the GCU MCP server in ``mcp_servers.json``."""

|

||||

|

||||

GCU_MCP_SERVER_CONFIG: dict = {

|

||||

"name": GCU_SERVER_NAME,

|

||||

"transport": "stdio",

|

||||

"command": "uv",

|

||||

"args": ["run", "python", "-m", "gcu.server", "--stdio"],

|

||||

"cwd": "../../tools",

|

||||

"description": "GCU tools for browser automation",

|

||||

}

|

||||

"""Default stdio config for the GCU MCP server (relative to exports/<agent>/)."""

|

||||

|

||||

# ---------------------------------------------------------------------------

|

||||

# Browser best-practices system prompt

|

||||

# ---------------------------------------------------------------------------

|

||||

|

||||

GCU_BROWSER_SYSTEM_PROMPT = """\

|

||||

# Browser Automation Best Practices

|

||||

|

||||

Follow these rules for reliable, efficient browser interaction.

|

||||

|

||||

## Reading Pages

|

||||

- ALWAYS prefer `browser_snapshot` over `browser_get_text("body")`

|

||||

— it returns a compact ~1-5 KB accessibility tree vs 100+ KB of raw HTML.

|

||||

- Use `browser_snapshot_aria` when you need full ARIA properties

|

||||

for detailed element inspection.

|

||||

- Do NOT use `browser_screenshot` for reading text content

|

||||

— it produces huge base64 images with no searchable text.

|

||||

- Only fall back to `browser_get_text` for extracting specific

|

||||

small elements by CSS selector.

|

||||

|

||||

## Navigation & Waiting

|

||||

- Always call `browser_wait` after navigation actions

|

||||

(`browser_open`, `browser_navigate`, `browser_click` on links)

|

||||

to let the page load.

|

||||

- NEVER re-navigate to the same URL after scrolling

|

||||

— this resets your scroll position and loses loaded content.

|

||||

|

||||

## Scrolling

|

||||

- Use large scroll amounts ~2000 when loading more content

|

||||

— sites like twitter and linkedin have lazy loading for paging.

|

||||

- After scrolling, take a new `browser_snapshot` to see updated content.

|

||||

|

||||

## Error Recovery

|

||||

- If a tool fails, retry once with the same approach.

|

||||

- If it fails a second time, STOP retrying and switch approach.

|

||||

- If `browser_snapshot` fails → try `browser_get_text` with a

|

||||

specific small selector as fallback.

|

||||

- If `browser_open` fails or page seems stale → `browser_stop`,

|

||||

then `browser_start`, then retry.

|

||||

|

||||

## Tab Management

|

||||

- Use `browser_tabs` to list open tabs when managing multiple pages.

|

||||

- Pass `target_id` to tools when operating on a specific tab.

|

||||

- Open background tabs with `browser_open(url=..., background=true)`

|

||||

to avoid losing your current context.

|

||||

- Close tabs you no longer need with `browser_close` to free resources.

|

||||

|

||||

## Login & Auth Walls

|

||||

- If you see a "Log in" or "Sign up" prompt instead of expected

|

||||

content, report the auth wall immediately — do NOT attempt to log in.

|

||||

- Check for cookie consent banners and dismiss them if they block content.

|

||||

|

||||

## Efficiency

|

||||

- Minimize tool calls — combine actions where possible.

|

||||

- When a snapshot result is saved to a spillover file, use

|

||||

`run_command` with grep to extract specific data rather than

|

||||

re-reading the full file.

|

||||

- Call `set_output` in the same turn as your last browser action

|

||||

when possible — don't waste a turn.

|

||||

"""

|

||||

@@ -166,7 +166,7 @@ class NodeSpec(BaseModel):

|

||||

# Node behavior type

|

||||

node_type: str = Field(

|

||||

default="event_loop",

|

||||

description="Type: 'event_loop' (recommended), 'router', 'human_input'.",

|

||||

description="Type: 'event_loop' (recommended), 'gcu' (browser automation).",

|

||||

)

|

||||

|

||||

# Data flow

|

||||

@@ -204,6 +204,16 @@ class NodeSpec(BaseModel):

|

||||

default=None, description="Specific model to use (defaults to graph default)"

|

||||

)

|

||||

|

||||

# For subagent delegation

|

||||

sub_agents: list[str] = Field(

|

||||

default_factory=list,

|

||||

description="Node IDs that can be invoked as subagents from this node",

|

||||

)

|

||||

# For function nodes

|

||||

function: str | None = Field(

|

||||

default=None, description="Function name or path for function nodes"

|

||||

)

|

||||

|

||||

# For router nodes

|

||||

routes: dict[str, str] = Field(

|

||||

default_factory=dict, description="Condition -> target_node_id mapping for routers"

|

||||

@@ -520,6 +530,20 @@ class NodeContext:

|

||||

# Falls back to node_id when not set (legacy / standalone executor).

|

||||

stream_id: str = ""

|

||||

|

||||

# Subagent mode

|

||||

is_subagent_mode: bool = False # True when running as a subagent (prevents nested delegation)

|

||||

report_callback: Any = None # async (message: str, data: dict | None) -> None

|

||||

node_registry: dict[str, "NodeSpec"] = field(default_factory=dict) # For subagent lookup

|

||||

|

||||

# Full tool catalog (unfiltered) — used by _execute_subagent to resolve

|

||||

# subagent tools that aren't in the parent node's filtered available_tools.

|

||||

all_tools: list[Tool] = field(default_factory=list)

|

||||

|

||||

# Shared reference to the executor's node_registry — used by subagent

|

||||

# escalation (_EscalationReceiver) to register temporary receivers that

|

||||

# the inject_input() routing chain can find.

|

||||

shared_node_registry: dict[str, Any] = field(default_factory=dict)

|

||||

|

||||

|

||||

@dataclass

|

||||

class NodeResult:

|

||||

|

||||

@@ -280,7 +280,7 @@ def build_transition_marker(

|

||||

]

|

||||

if file_lines:

|

||||

sections.append(

|

||||

"\nData files (use load_data to access):\n" + "\n".join(file_lines)

|

||||

"\nData files (use read_file to access):\n" + "\n".join(file_lines)

|

||||

)

|

||||

|

||||

# Agent working memory

|

||||

|

||||

@@ -237,6 +237,11 @@ def _is_stream_transient_error(exc: BaseException) -> bool:

|

||||

|

||||

Transient errors (recoverable=True): network issues, server errors, timeouts.

|

||||

Permanent errors (recoverable=False): auth, bad request, context window, etc.

|

||||

|

||||

NOTE: "Failed to parse tool call arguments" (malformed LLM output) is NOT

|

||||

transient at the stream level — retrying with the same messages produces the

|

||||

same malformed output. This error is handled at the EventLoopNode level

|

||||

where the conversation can be modified before retrying.

|

||||

"""

|

||||

try:

|

||||

from litellm.exceptions import (

|

||||

@@ -917,30 +922,6 @@ class LiteLLMProvider(LLMProvider):

|

||||

# and we skip the retry path — nothing was yielded in vain.)

|

||||

has_content = accumulated_text or tool_calls_acc

|

||||

if not has_content:

|

||||

# If the conversation ends with an assistant or tool

|

||||

# message, an empty stream is expected — the LLM has

|

||||

# nothing new to say. Don't burn retries on this;

|

||||

# let the caller (EventLoopNode) decide what to do.

|

||||

# Typical case: client_facing node where the LLM set

|

||||

# all outputs via set_output tool calls, and the tool

|

||||

# results are the last messages.

|

||||

last_role = next(

|

||||

(m["role"] for m in reversed(full_messages) if m.get("role") != "system"),

|

||||

None,

|

||||

)

|

||||

if last_role in ("assistant", "tool"):

|

||||

logger.warning(

|

||||

"[stream] %s returned empty stream after %s message "

|

||||

"(no text, no tool calls). Treating as a no-op turn. "

|

||||

"If this repeats, the agent may be stuck — check for "

|

||||

"ghost empty assistant messages in conversation history.",

|

||||

self.model,

|

||||

last_role,

|

||||

)

|

||||

for event in tail_events:

|

||||

yield event

|

||||

return

|

||||

|

||||

# finish_reason=length means the model exhausted

|

||||

# max_tokens before producing content. Retrying with

|

||||

# the same max_tokens will never help.

|

||||

@@ -958,10 +939,16 @@ class LiteLLMProvider(LLMProvider):

|

||||

yield event

|

||||

return

|

||||

|

||||

# Empty stream after a user message — use short fixed

|

||||

# retries, not the rate-limit backoff. This is likely

|

||||

# a deterministic conversation-structure issue, so long

|

||||

# exponential waits don't help.

|

||||

# Empty stream — always retry regardless of last message

|

||||

# role. Ghost empty streams after tool results are NOT

|

||||

# expected no-ops; they create infinite loops when the

|

||||

# conversation doesn't change between iterations.

|

||||

# After retries, return the empty result and let the

|

||||

# caller (EventLoopNode) decide how to handle it.

|

||||

last_role = next(

|

||||

(m["role"] for m in reversed(full_messages) if m.get("role") != "system"),

|

||||

None,

|

||||

)

|

||||

if attempt < EMPTY_STREAM_MAX_RETRIES:

|

||||

token_count, token_method = _estimate_tokens(

|

||||

self.model,

|

||||

@@ -974,7 +961,8 @@ class LiteLLMProvider(LLMProvider):

|

||||

attempt=attempt,

|

||||

)

|

||||

logger.warning(

|

||||

f"[stream-retry] {self.model} returned empty stream — "

|

||||

f"[stream-retry] {self.model} returned empty stream "

|

||||

f"after {last_role} message — "

|

||||

f"~{token_count} tokens ({token_method}). "

|

||||

f"Request dumped to: {dump_path}. "

|

||||

f"Retrying in {EMPTY_STREAM_RETRY_DELAY}s "

|

||||

@@ -983,7 +971,17 @@ class LiteLLMProvider(LLMProvider):

|

||||

await asyncio.sleep(EMPTY_STREAM_RETRY_DELAY)

|

||||

continue

|

||||

|

||||

# Success (or final attempt) — flush remaining events.

|

||||

# All retries exhausted — log and return the empty

|

||||

# result. EventLoopNode's empty response guard will

|

||||

# accept if all outputs are set, or handle the ghost

|

||||

# stream case if outputs are still missing.

|

||||

logger.error(

|

||||

f"[stream] {self.model} returned empty stream after "

|

||||

f"{EMPTY_STREAM_MAX_RETRIES} retries "

|

||||

f"(last_role={last_role}). Returning empty result."

|

||||

)

|

||||

|

||||

# Success (or empty after exhausted retries) — flush events.

|

||||

for event in tail_events:

|

||||

yield event

|

||||

return

|

||||

|

||||
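The revised empty-stream handling (retry with a short fixed delay regardless of the last message role, then hand the empty result back to the caller) follows the pattern sketched below. This is an illustrative sketch, not the provider's code: `call_with_empty_retry` and `make_fake_stream` are hypothetical names, `fetch` stands in for one streaming attempt, and the values given to `EMPTY_STREAM_MAX_RETRIES` and `EMPTY_STREAM_RETRY_DELAY` are assumptions because the diff does not show them.

```python
import asyncio

EMPTY_STREAM_MAX_RETRIES = 3      # assumed value
EMPTY_STREAM_RETRY_DELAY = 0.01   # assumed value, kept short for the demo


async def call_with_empty_retry(fetch):
    """Call fetch() until it yields content or the retry budget is spent.

    Returns (result, attempt_index). An empty result after the final
    attempt is returned as-is so the caller can decide how to handle it.
    """
    result = ""
    for attempt in range(EMPTY_STREAM_MAX_RETRIES + 1):
        result = await fetch()
        if result:
            return result, attempt
        if attempt < EMPTY_STREAM_MAX_RETRIES:
            # Fixed short delay, not exponential rate-limit backoff.
            await asyncio.sleep(EMPTY_STREAM_RETRY_DELAY)
    return result, EMPTY_STREAM_MAX_RETRIES


def make_fake_stream(empty_calls):
    """Build a fetch() that returns '' for the first `empty_calls` calls."""
    calls = {"n": 0}

    async def fetch():
        calls["n"] += 1
        return "" if calls["n"] <= empty_calls else "hello"

    return fetch
```

Returning the empty result instead of raising keeps the decision with the caller, which matches the comments in the hunk: the event loop accepts the turn if all outputs are set and otherwise treats it as a ghost stream.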

@@ -10,6 +10,7 @@ Usage:

|

||||

import json

|

||||

import logging

|

||||

import os

|

||||

import shutil

|

||||

import sys

|

||||

from datetime import datetime

|

||||

from pathlib import Path

|

||||

@@ -562,16 +563,29 @@ def _validate_agent_path(agent_path: str) -> tuple[Path | None, str | None]:

|

||||

path = Path(agent_path)

|

||||

|

||||

# Resolve relative paths against project root (not MCP server's cwd)

|

||||

if not path.is_absolute() and not path.exists():

|

||||

resolved = _PROJECT_ROOT / path

|

||||

if resolved.exists():

|

||||

path = resolved

|

||||

if not path.is_absolute():

|

||||

path = _PROJECT_ROOT / path

|

||||

|

||||

# Restrict to allowed directories BEFORE checking existence to prevent

|

||||

# leaking whether arbitrary filesystem paths exist on disk.

|

||||

from framework.server.app import validate_agent_path

|

||||

|

||||

try:

|

||||

path = validate_agent_path(path)

|

||||

except ValueError:

|

||||

return None, json.dumps(

|

||||

{

|

||||

"success": False,

|

||||

"error": "agent_path must be inside an allowed directory "

|

||||

"(exports/, examples/, or ~/.hive/agents/)",

|

||||

}

|

||||

)

|

||||

|

||||

if not path.exists():

|

||||

return None, json.dumps(

|

||||

{

|

||||

"success": False,

|

||||

"error": f"Agent path not found: {path}",

|

||||

"error": f"Agent path not found: {agent_path}",

|

||||

"hint": "Run export_graph to create an agent in exports/ first",

|

||||

}

|

||||

)

|

||||
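The ordering introduced in `_validate_agent_path` (resolve against the project root, check the allow-list, and only then test for existence) can be sketched as follows. The function `resolve_agent_path` and its parameters are hypothetical; the real code delegates the allow-list check to `validate_agent_path` from `framework.server.app`, whose implementation is not shown in this diff, so the containment test here is an assumption. `Path.is_relative_to` requires Python 3.9 or later.

```python
from pathlib import Path


def resolve_agent_path(agent_path, project_root, allowed_roots):
    """Return (path, None) on success or (None, error_message) on failure."""
    path = Path(agent_path)
    if not path.is_absolute():
        path = Path(project_root) / path
    path = path.resolve()
    # Allow-list check comes first, so the response for a disallowed path
    # does not reveal whether that path exists on disk.
    if not any(path.is_relative_to(Path(root).resolve()) for root in allowed_roots):
        return None, "agent_path must be inside an allowed directory"
    if not path.exists():
        # Echo the caller's input rather than the resolved absolute path.
        return None, f"Agent path not found: {agent_path}"
    return path, None
```

Reporting the original `agent_path` in the not-found message, as the hunk does, also avoids leaking the server's absolute directory layout.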

@@ -586,7 +600,7 @@ def add_node(

|

||||

description: Annotated[str, "What this node does"],

|

||||

node_type: Annotated[

|

||||

str,

|

||||

"Type: event_loop (recommended), router.",

|

||||

"Type: event_loop (recommended), gcu (browser automation), router.",

|

||||

],

|

||||

input_keys: Annotated[str, "JSON array of keys this node reads from shared memory"],

|

||||

output_keys: Annotated[str, "JSON array of keys this node writes to shared memory"],

|

||||

@@ -675,8 +689,23 @@ def add_node(

|

||||

if node_type == "event_loop" and not system_prompt:

|

||||

warnings.append(f"Event loop node '{node_id}' should have a system_prompt")

|

||||

|

||||

# GCU node validation

|

||||

if node_type == "gcu":

|

||||

if tools_list:

|

||||

warnings.append(

|

||||

f"GCU node '{node_id}' auto-includes all browser tools from the "

|

||||

f"gcu-tools MCP server. Manually listed tools {tools_list} will be "

|

||||

f"merged with the auto-included set."

|

||||

)

|

||||

if not system_prompt:

|

||||

warnings.append(

|

||||

f"GCU node '{node_id}' has a default browser best-practices prompt. "

|

||||